What Are AI Agents? The Complete Guide (2026)

Discover what AI agents are, how they work, real-world examples, and 8 industry use cases. Covers agentic AI, types, frameworks, benefits, and how to get started. The definitive guide for 2026.

Something shifted in how organizations think about software last year. The question stopped being “can AI answer this question?” and started being “can AI handle this entire workflow?” That shift has a name: AI agents.

By mid-2025, McKinsey’s State of AI report found that 62% of organizations were already experimenting with AI agents — and 23% had begun scaling at least one agentic system. This isn’t research-lab territory anymore. Companies are deploying agents in production, measuring ROI, and scaling what works. For teams already using agents in AI agents for customer support ticketing, the results are measurable: faster resolution times, lower cost-per-ticket, 24/7 coverage without headcount growth.

But most explanations of AI agents are either buried in academic jargon or so hyped up they’re basically useless. “Revolutionary!” “Transformative!” Great — but what are they, exactly?

This guide answers that clearly. By the end, there’s a complete picture of what AI agents are, how they differ from chatbots and LLMs, the types that exist, how they work under the hood, where they’re being deployed across industries, what the measurable benefits look like, and how to get started — without writing a single line of code.

No buzzwords. No fluff. Just clarity.

What Is an AI Agent? (The Simple Explanation)

An AI agent is an autonomous software system that uses artificial intelligence to perceive its environment, make decisions, and take actions to achieve specific goals — without constant human oversight.

That’s the textbook definition. Here’s what makes it concrete.

Think of an AI agent as a highly capable digital worker who doesn’t just answer questions but actually does things. Instead of being told “send an email to John about the meeting,” the agent receives the instruction “set up a meeting with John next week” — and then checks both calendars, finds open slots, drafts an email, sends it, and adds the calendar event. No step-by-step approvals required.

The key difference from regular AI: agents act, they don’t just respond.

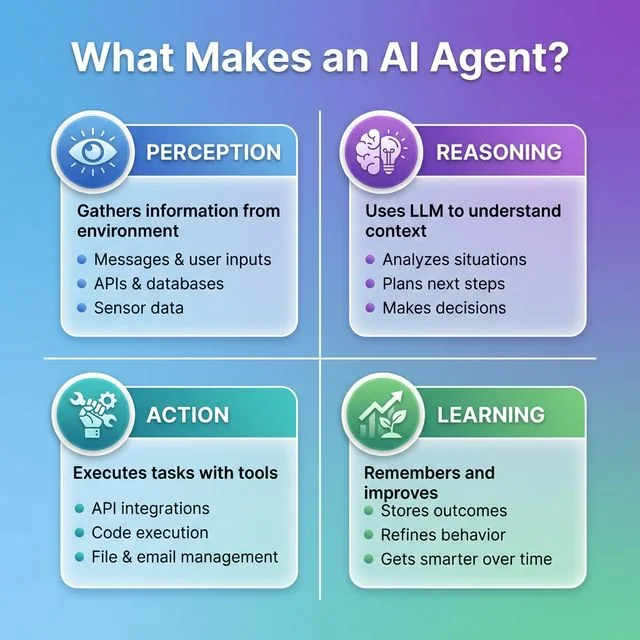

Here’s what makes something an AI agent:

- Perception: Gathers information from its environment — messages, databases, APIs, sensor data, whatever it needs

- Reasoning: Uses a foundation model (like GPT, Claude, or Gemini) to understand context and figure out what to do next

- Action: Executes tasks using tools — sending messages, updating records, calling APIs, browsing the web

- Learning: Remembers what happened and improves over time

Gartner predicts that 40% of enterprise applications will embed task-specific AI agents by the end of 2026 — up from less than 5% in 2025. That’s a technology moving from “interesting experiment” to “competitive necessity” inside of 12 months.

The clearest analogy: an AI agent is like having a very capable team member who never sleeps, never complains, doesn’t lose files, and — most importantly — follows through on complex, multi-step tasks without needing to be managed every step of the way.

The four fundamental components that make an AI agent autonomous: Perception, Reasoning, Action, and Learning working together in continuous cycles.

The four fundamental components that make an AI agent autonomous: Perception, Reasoning, Action, and Learning working together in continuous cycles.

What Is Agentic AI? (The Paradigm Shift Explained)

“Agentic AI” is appearing everywhere right now — and for good reason. It describes a broader shift in how AI systems are designed and deployed.

The progression looks like this:

Traditional AI → Generative AI → Agentic AI

- Traditional AI: Rule-based, narrow. A spam filter that blocks emails matching a pattern. Deterministic, but brittle.

- Generative AI: Language models that produce content from prompts. Flexible and powerful, but fundamentally reactive — it responds when asked, then stops.

- Agentic AI: Systems that initiate, plan, and persist. Given a goal, an agentic system breaks it into steps, executes those steps using available tools, handles what happens, and keeps going until the goal is achieved — or escalates when it can’t proceed.

The word “agentic” comes from “agency” — the ability to act independently with intent. What makes AI agentic isn’t any single capability but the combination: autonomy, goal orientation, tool use, and the ability to handle multi-step processes.

Practically speaking, the difference between generative AI and agentic AI is the difference between asking someone a question and delegating a project to them. One conversation ends when the answer is delivered. The other keeps running until the work is done. For a detailed breakdown of these distinctions, see our generative AI vs agentic AI comparison.

For teams navigating the specific question of what separates AI agents from agentic AI — including when to build individual agents versus full agentic systems — a practical decision framework can prevent costly architectural mismatches.

For a full look at where the future of AI agents is heading — including multi-agent ecosystems and vertical specialization — the trajectory is already visible in 2026 adoption patterns.

AI Agents vs. Chatbots vs. LLMs vs. RPA: What’s the Real Difference?

This is where confusion runs deep — and fair enough, since the terminology shifts almost monthly. Here’s a clear breakdown.

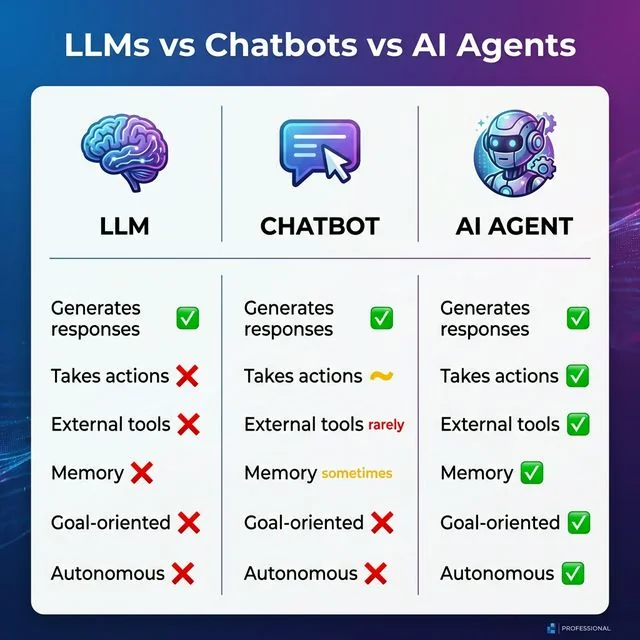

LLMs (Large Language Models)

GPT, Claude, Gemini — these are LLMs. Think of them as incredibly powerful brains. They understand language, generate text, reason through problems, and write code. But they’re fundamentally passive: they wait for a prompt, respond, and stop. An LLM doesn’t know what time it is, can’t check email, and won’t book a flight. It just thinks, very well, on demand.

Chatbots

Chatbots are conversational interfaces. Some are rule-based (the “press 1 for sales” variety), and some are AI-powered. Good ones use an LLM to handle natural language. But even the best AI chatbot is reactive — questions get answered, simple tasks get handled, but they don’t pursue goals on behalf of users or initiate workflows unprompted.

AI Agents

AI agents wrap an LLM with additional capabilities:

- Memory to track context and past interactions over time

- Tools to take real actions in the world

- Planning to break complex goals into executable steps

- Autonomy to execute multi-step workflows without constant supervision

The clearest framing: chatbots talk to users; agents do work for them.

How AI Agents Differ from Robotic Process Automation (RPA)

RPA has been automating repetitive tasks for years — clicking buttons, filling forms, moving data between systems. It’s fast and reliable for well-defined, stable processes. The problem: RPA is deterministic. When something unexpected happens — a form changes, a field is missing, an edge case emerges — RPA breaks.

AI agents handle exactly those situations. They understand context, adapt to variations, and reason through novel scenarios. Many organizations now run RPA for structured, predictable work while AI agents handle the judgment-heavy exceptions that rules can’t cover. The two technologies are increasingly complementary rather than competing.

A full AI agents vs chatbots breakdown covers the architectural distinctions in depth, including when each approach is appropriate.

| Feature | LLM | Chatbot | RPA | AI Agent |

|---|---|---|---|---|

| Generates responses | ✅ | ✅ | ❌ | ✅ |

| Takes real actions | ❌ | Limited | ✅ (rule-based) | ✅ (adaptive) |

| Uses external tools | ❌ | Rarely | Limited | ✅ |

| Persistent memory | ❌ | Sometimes | ❌ | ✅ |

| Goal-oriented | ❌ | ❌ | ❌ | ✅ |

| Works autonomously | ❌ | ❌ | ✅ (rigid) | ✅ (flexible) |

| Handles novel situations | ✅ | Sometimes | ❌ | ✅ |

Visual comparison showing the progression from passive LLMs to reactive chatbots to fully autonomous AI agents with memory, tools, and goal-oriented behavior.

Visual comparison showing the progression from passive LLMs to reactive chatbots to fully autonomous AI agents with memory, tools, and goal-oriented behavior.

How Do AI Agents Actually Work? (The Core Architecture)

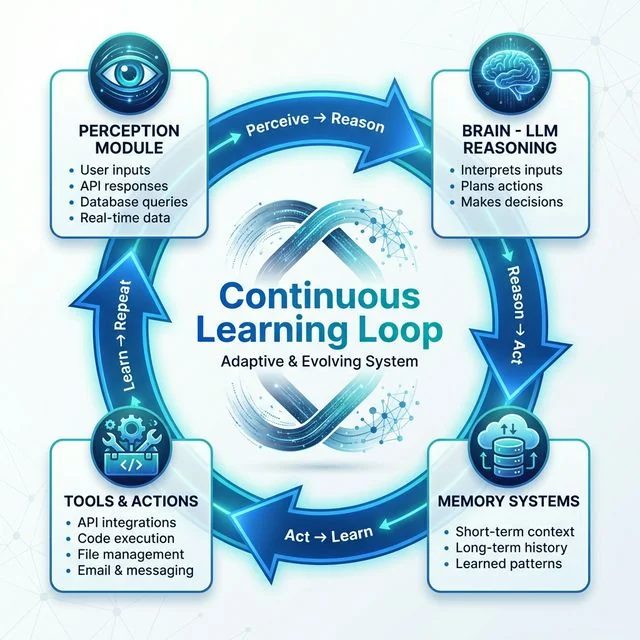

Understanding what’s happening under the hood makes AI agents far less mysterious — and far more useful to evaluate or build. The architecture isn’t magic; it’s a thoughtful loop: perceive, reason, act, remember, repeat.

The Perception Module (How Agents Sense Their World)

Every agent needs input. The perception layer is how an agent gathers information:

- Text from user conversations or messages

- Data from database queries and API calls

- Real-time streams (for IoT or market data applications)

- Web search results

- Visual input for multimodal agents

The perception module is the agent’s eyes and ears. Without quality inputs, no reasoning — however sophisticated — produces good outputs.

The Brain (LLMs and Reasoning)

At the core of most modern AI agents is a foundation model. This is where thinking happens: interpreting perceptions, understanding natural language instructions, reasoning about what to do next, and planning the sequence of actions needed to achieve a goal.

What makes LLMs powerful as agent brains is their ability to handle ambiguity. Every possible scenario doesn’t need to be pre-programmed. The goal gets described, and the model figures out how to get there. Understanding AI agent memory systems in depth reveals how different memory types (short-term, long-term, episodic) interact with the reasoning core to enable persistent, improving behavior.

How AI Agents Make Decisions Autonomously

The decision-making process follows what’s known as the ReAct loop (Reason + Act):

- Observe — The agent receives its current state and available information

- Think — The LLM reasons about what’s happening and what’s needed next (“I need to check the calendar before drafting this email”)

- Act — A tool is called (calendar API, email client, database query)

- Observe again — The result comes back; the cycle updates

- Repeat — Until the goal is reached or the agent determines it needs human input

This loop runs extremely fast — often completing dozens of cycles in seconds. The agent isn’t executing a fixed script; it’s reasoning through each step based on what it observes. That’s what makes agentic behavior fundamentally different from automation.

Memory Systems (Short and Long-Term)

Basic LLMs are stateless — every session starts fresh. AI agents add memory systems:

- Short-term memory: The current conversation context and what’s happened in this session

- Long-term memory: Stored in vector databases, capturing past interactions, user preferences, and learned patterns

- Episodic memory: Specific past events the agent can reference (“last time this customer had this issue, here’s how it was resolved”)

Memory is what makes agents smarter over time. Without it, every interaction starts from zero.

Tools and Actions (The Hands and Feet)

Tools are what separate agents from everything else.

An LLM can describe how to send an email. An agent can actually send it. Tools are integrations that let the agent take real-world action:

- API integrations (Gmail, Slack, Salesforce, HubSpot)

- Database access (read and write)

- Code execution environments (Python, JavaScript)

- Browser automation and web search

- Calendar systems and scheduling APIs

- File management and document editing

The agent’s LLM brain decides what to do. Tools are how it does it.

The four architectural modules of an AI agent working in a continuous cycle: perception gathers data, the LLM brain reasons and plans, tools execute actions, and memory systems enable learning over time.

The four architectural modules of an AI agent working in a continuous cycle: perception gathers data, the LLM brain reasons and plans, tools execute actions, and memory systems enable learning over time.

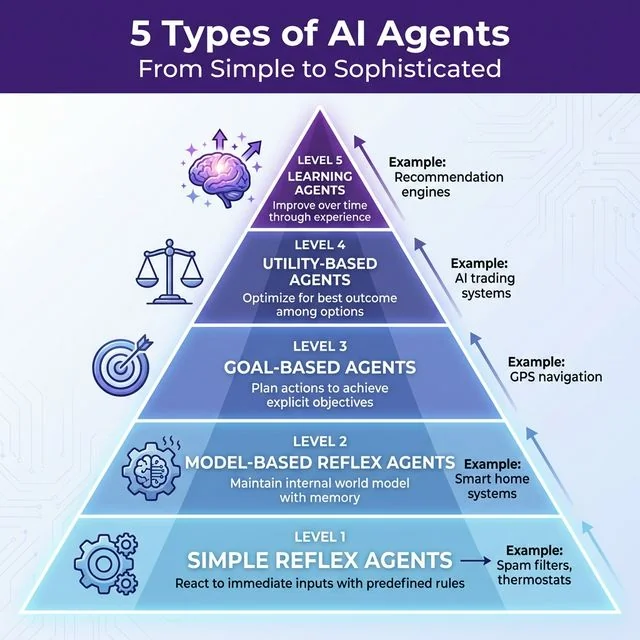

The 5 Types of AI Agents You Need to Know

Academic classifications can get dense fast (anyone who’s read about “model-based reflex agents with partial observability constraints” knows the feeling). But the underlying ideas are genuinely useful — here’s a practical breakdown.

1. Simple Reflex Agents

These react to immediate inputs based on predefined rules. No memory, no planning: if this, then that.

Example: A spam filter blocking emails matching certain patterns. Or a thermostat activating when temperature drops below a threshold.

These aren’t impressive, but they’re everywhere and work well for simple, predictable tasks.

2. Model-Based Reflex Agents

These agents maintain an internal model of the world. They remember past states, which helps handle situations where direct observation is incomplete.

Example: A smart home system that learns usage patterns. Knowing that residents typically return home at 6pm, the system starts warming the house at 5:45 — without receiving any external signal.

The key improvement: memory of state allows for smarter, more anticipatory reactions.

3. Goal-Based Agents

Now the picture gets more interesting. Goal-based agents don’t just react — they plan. They have explicit objectives and work backward to figure out what actions will achieve them.

Example: GPS navigation. It knows the destination (goal), evaluates possible routes, and adjusts in real time when traffic conditions change.

These agents feel intentional — they’re pursuing something, not just responding.

4. Utility-Based Agents

What happens when multiple paths lead to the same goal? Utility-based agents choose the best option, not just any option that works.

They weigh trade-offs — faster but more expensive, cheaper but slower — and optimize toward a utility function (a measure of how good an outcome is).

Example: An AI trading system continuously recalculating the best position based on risk/reward tradeoffs and changing market conditions.

5. Learning Agents

The most sophisticated type. Learning agents improve over time based on experience, using feedback loops to adjust their behavior.

Example: Netflix’s recommendation engine. It doesn’t just match tags — it learns viewing patterns, refines predictions, and gets measurably better the more it’s used.

Most modern production AI agents incorporate learning to some degree. The categories are best understood as capability stacks that compound: a well-built agent is usually goal-based, utility-aware, and learning all at once.

A hierarchy showing the evolution of AI agents from simple reflex systems to sophisticated learning agents, with real-world examples at each level of complexity.

A hierarchy showing the evolution of AI agents from simple reflex systems to sophisticated learning agents, with real-world examples at each level of complexity.

10 Real-World AI Agent Examples That Deliver Measurable Results

Theory is valuable. Real numbers are better. Here are ten AI agent deployments producing documented outcomes.

1. Klarna’s Customer Service Agent

Klarna’s AI agent now handles 66% of all customer support chats — the equivalent workload of 700 human agents. Resolution times dropped by 80%. Customer satisfaction scores held steady. The economic case was immediate and unambiguous.

2. JPMorgan’s Fraud Detection Agents

JPMorgan’s AI systems analyze millions of transactions in real time, flagging anomalies with substantially fewer false positives than rule-based systems. The practical result: significantly reduced fraud losses while the investigation team focuses on confirmed high-risk cases rather than noise.

3. GitHub Copilot (Coding Agent)

GitHub Copilot now writes 46% of the code for developers who use it. More than 1 million developers have adopted it. More recent iterations go beyond autocomplete — suggesting test cases, refactoring functions, and navigating codebases contextually.

4. Genentech’s Drug Discovery Agent

Genentech’s gRED Research Agent automates manual literature searches that previously took researchers hours. By autonomously breaking down complex research questions into subtasks, it compresses weeks of hypothesis exploration into days.

5. Customer Support Resolution Agents

Modern AI agents in AI agents for customer support ticketing go far beyond answering FAQs. These systems access account data, issue refunds, update orders, schedule callbacks, and escalate edge cases to humans — all without human review of routine transactions.

6. Real Estate Lead Qualification Agents

Specialized real estate AI agents qualify leads at 3 AM, browse MLS data to answer zoning questions, and book listing appointments automatically. In a business where response speed directly correlates with conversion rates, this changes the economics significantly.

7. Research and Synthesis Agents

Perplexity-style research agents search the web, synthesize information from multiple sources, and deliver cited summaries. Unlike a search engine (which returns links), these agents read the sources and reason across them. For analysts spending hours across ten browser tabs, this is transformative.

8. Financial Reporting Agents

Finance teams are deploying agents that pull data from ERP systems, generate variance explanations, build forecasts, and produce dashboards — from a single natural-language prompt. Close cycles that took a week now complete in hours.

9. Supply Chain Optimization Agents

In manufacturing, AI agents dynamically adjust production schedules, reroute inventory based on real-time logistics data, and allocate maintenance resources before failures occur. These systems reduce stockouts and improve asset utilization without adding headcount to the operations team.

10. IT Operations Agents

IT service agents resolve tickets, clean CRM data, guide employee onboarding, and diagnose system issues. For common resolution patterns, there’s no human involvement required. IT teams using these systems report handling 3–5× the ticket volume with the same staff.

AI Agents Across 8 Industries: Where Adoption Is Happening

AI agents are moving faster in some sectors than others. Here’s where meaningful deployment is already underway.

1. Customer Service

Adoption is highest here. Agents handle inquiry routing, resolve common issues, pull account data, issue refunds, and escalate complex cases. Unlike the frustrating bots of five years ago, these systems understand context and take real action. Industries with high contact center volume — telecom, banking, retail, utilities — are deploying at scale.

2. Financial Services

Banks and FinTech firms use agents for fraud detection, automated compliance monitoring, invoice reconciliation, and financial reporting. JPMorgan, Citi, and Goldman Sachs have all announced agentic AI initiatives. Compliance agents continuously monitor enterprise actions against internal policies, flagging violations in real time rather than through periodic audits.

3. Healthcare

Agents are reducing administrative burden significantly — scheduling, billing, EHR data entry, and prior authorization processing are all being automated. On the research side, drug discovery agents like Genentech’s compress literature review timelines from weeks to days. Patient triage agents are being tested for symptom assessment at scale. For a deeper look at how health systems are vetting, governing, and deploying these tools responsibly, see our comprehensive guide on AI in healthcare applications and risks.

4. Legal

Law firms use agents for contract review, due diligence, and legal research. Contract risk agents read agreements, extract obligations and deadlines, identify risk clauses, and maintain living risk profiles. For large deal volumes, this cuts review time and reduces the likelihood of human error on routine documents.

5. Human Resources

HR departments are automating the repetitive front end of recruiting: drafting job descriptions, summarizing CVs, running initial screening questions, and scheduling interviews. Agents also handle onboarding workflows, benefits questions, and policy lookups — freeing HR teams for work requiring genuine judgment.

6. Manufacturing and Supply Chain

AI agents on the factory floor monitor equipment performance, detect anomaly patterns before failures occur, adjust production schedules dynamically, and manage supplier coordination. In logistics, agents reroute shipments in real time based on weather, customs delays, and demand signals. The result is fewer disruptions and higher asset utilization.

7. Retail and E-commerce

McKinsey’s retail research consistently finds personalization as a primary revenue driver — and agents are making dynamic personalization economically viable at scale. AI agents for ecommerce automation now handle product recommendations, inventory management, pricing optimization, and customer service simultaneously. Seasonal demand spikes become dramatically easier to absorb when agents scale instantly.

8. Cybersecurity

Security agents monitor network traffic continuously, detect behavioral anomalies, enforce access policies, investigate alerts, analyze malware signatures, and recommend responses — often in the seconds before human analysts could engage. As threats grow more sophisticated, the speed advantage of agentic systems becomes a genuine security differentiator.

7 Proven Benefits of AI Agents (With ROI Data)

The case for AI agents isn’t theoretical. Here’s what organizations are measuring.

1. Dramatic Time Savings on Repetitive Work

The most consistent finding across deployments: agents eliminate hours of routine, high-volume work. Klarna’s result (70% of support handled by agents) is the headline example, but similar patterns appear across functions. Finance teams report close cycles shortened by 60–70%. Recruiting teams automate 40–60% of the screening process.

2. 24/7 Operation Without Staffing Costs

Agents don’t sleep, don’t take weekends, and don’t call in sick. For any workflow where time zones or off-hours coverage has been a problem — customer support, lead qualification, system monitoring — agents provide continuous operation at effectively no marginal cost per hour.

3. Consistent Quality Across High-Volume Tasks

Human performance varies — high at the start of shifts, lower at the end; sharp on Monday, tired on Friday. Agents apply the same reasoning consistently across the ten-thousandth case as the first. For compliance, fraud detection, and quality assurance, this consistency is its own form of risk reduction.

4. Scale Without Linear Headcount Growth

Traditional scaling requires proportional headcount. A company that doubles its customer base needs roughly double the support team. AI agents break that relationship. The marginal cost of handling more volume through agents is primarily compute cost — which is dramatically lower and continues to decrease.

5. Faster Decision-Making at the Point of Action

Agents compress decision cycles. Where a human analyst might need hours to pull data, build a report, and present findings, an agent completes the same sequence in minutes. For time-sensitive domains — fraud detection, supply chain disruption, market response — this speed matters enormously.

6. Measurable Error Reduction on Defined Tasks

McKinsey’s 2025 research found that 64% of organizations report AI enabling meaningful innovation — including error reduction on data-intensive tasks like reconciliation, compliance reporting, and medical coding. Rule-based errors from human fatigue drop significantly when agents handle defined, structured workflows.

7. Redeployment of Human Talent to Higher-Value Work

The downstream benefit of agents handling routine work: the people who previously did that work get redeployed. Support teams spend more time on complex cases. Finance teams do analysis instead of data entry. Recruiters do relationship building instead of CV screening. Organizations that measure this report significant improvements in employee satisfaction alongside the efficiency gains.

Multi-Agent Systems: When One Agent Isn’t Enough

Here’s where the architecture gets genuinely sophisticated — and where the near-term future appears to be heading.

A multi-agent system (MAS) involves multiple specialized AI agents working together, each with a defined role.

Think of it like a high-performing team:

- A Researcher agent gathers information and synthesizes sources

- A Planner agent breaks down goals and assigns tasks

- A Writer agent drafts content based on research and spec

- An Editor agent reviews output against quality criteria

- A Compliance agent checks the final result against regulatory requirements

Instead of building one super-agent that tries to do everything (and gets confused trying), specialized agents hand off work to each other. Each is simpler, but the system produces better results than any monolithic agent could.

How Multi-Agent Orchestration Works in Practice

Orchestration is the coordination layer — the system that ensures agents communicate, share context, handle failures gracefully, and know when to escalate to humans. Building good orchestration is genuinely hard, but frameworks like AutoGen, CrewAI, and LangGraph have made it significantly more accessible.

The practical pattern: a user or system sends a goal to an orchestrator agent. The orchestrator decomposes the goal, assigns subtasks to specialist agents, monitors progress, handles errors (retrying, escalating, or adapting as needed), and assembles the final output.

This is why a thorough comparison of the best AI agent frameworks for 2026 is so useful — the choice of orchestration layer significantly affects how well multi-agent systems actually perform in production.

Gartner’s research projects that by 2029, multi-agent systems will become the default architecture for complex enterprise AI — not because it’s trendy, but because it scales and maintains quality better than monolithic agents on complex tasks.

A multi-agent content creation workflow showing how specialized agents (Research, Planner, Writer, Editor) collaborate by passing work between each other, producing better results than a single monolithic agent.

A multi-agent content creation workflow showing how specialized agents (Research, Planner, Writer, Editor) collaborate by passing work between each other, producing better results than a single monolithic agent.

Popular AI Agent Frameworks (For Those Who Want to Build)

For developers and technically curious readers, here’s the landscape of agent frameworks in early 2026.

LangChain / LangGraph

The most comprehensive ecosystem available. Modular architecture, extensive integrations, and a large community. LangGraph (built on LangChain) adds the graph-based orchestration needed for complex multi-agent systems.

Best for: Complex custom workflows, RAG applications, production systems requiring many integrations. Trade-off: Can get verbose for simpler use cases. Initial learning curve is real.

AutoGen (Microsoft)

Microsoft’s framework specializes in multi-agent conversations and human-in-the-loop workflows. Strong for code-heavy tasks and teams experimenting with agent architectures.

Best for: Multi-agent collaboration, research and development environments. Trade-off: Steeper setup. Feels more like a research tool than a rapid-development platform.

CrewAI

The fast-growing newcomer with role-based agent teams and clear task handoffs. The easiest framework to get something working quickly.

Best for: Rapid prototyping, structured workflows, teams new to agent development. Trade-off: Less flexibility for novel or complex architectures.

Best AI Agent Frameworks for Beginners in 2026

For those just starting out, the recommended path:

- Custom GPTs (ChatGPT Plus) — Zero setup, explore agentic behavior immediately

- n8n or Zapier — Visual workflow tools that let non-developers build agentic pipelines

- CrewAI — First real framework for those ready to write Python

- LangChain — Once the concepts are solid and production requirements emerge

The no-code and low-code options have matured significantly. A meaningful percentage of production agent deployments in 2026 run on n8n workflows with LLM nodes — no Python required.

| Framework | Best For | Trade-off | Skill Level |

|---|---|---|---|

| Custom GPTs | Exploration | Limited customization | Beginner |

| n8n / Zapier | No-code workflows | Less flexible | Beginner |

| CrewAI | Rapid prototyping | Less complex architectures | Intermediate |

| LangChain | Production systems | Verbose, steep curve | Advanced |

| AutoGen | Multi-agent R&D | Research-oriented | Advanced |

For a detailed side-by-side comparison of these frameworks — including production readiness, orchestration models, memory handling, and a decision matrix for choosing the right one — the complete guide to agentic AI frameworks covers everything needed to make an informed selection.

How to Get Started with AI Agents (5 Steps, No Coding Required)

The most common misconception about AI agents is that building or using them requires a software engineering background. That’s not true in 2026. Here’s a practical path for anyone starting from zero.

Step 1: Define the Problem (Start With One Workflow)

The most successful agent deployments start hyper-specifically. Trying to build “an agent that handles all operations” fails. Building “an agent that qualifies new leads from our website contact form and routes them to the right salesperson” succeeds.

Pick a single, high-volume, time-consuming workflow. Criteria for a good first candidate:

- Happens frequently (> 50 times per week)

- Follows a reasonably predictable pattern

- Currently takes meaningful human time

- Has clear success/failure criteria

Step 2: Choose a Build Path

Three options, ordered by complexity:

No-code tools (Zapier, n8n, Make): Visual workflow builders with AI steps. Best for non-technical users who want to automate a defined process. Handles the majority of practical business use cases without writing a single line of code.

Agent frameworks (CrewAI, LangChain): Python-based frameworks for custom agents. Required when workflows are complex, multi-step, or need sophisticated memory and reasoning.

Custom code: Full control. For organizations with specific security, integration, or performance requirements that off-the-shelf tools can’t meet.

Step 3: Select Your Tools and Integrations

What data sources will the agent need? What systems will it need to act on? Map these before building:

- Input sources: where does information come from? (email, CRM, web forms, databases)

- Output actions: what should the agent do? (send messages, update records, create tasks, book meetings)

- APIs available: most business software exposes APIs that agents can call

Step 4: Add Guardrails and Test

AI agents can behave unexpectedly. Before deploying, build in guardrails:

- Define what the agent is explicitly not allowed to do

- Set escalation triggers — when does it hand off to a human?

- Test with edge cases, not just happy paths

- Run in a sandbox environment against real data before going live

The AI agent security best practices guide covers prompt injection prevention, tool governance, and enterprise security frameworks in detail — a necessary read before any production deployment.

Step 5: Deploy, Monitor, and Iterate

Deployment is not the finish line. Agents need monitoring:

- Track success rates and failure modes

- Review escalations — these reveal where the agent needs improvement

- Watch for hallucinations or unexpected tool calls

- Iterate based on real-world performance data

The organizations getting the most value from agents treat them like employees: onboard carefully, monitor early performance, give feedback, and invest in continuous improvement.

Challenges and Limitations of AI Agents

Any honest assessment of AI agents includes the failure modes. Knowing these ahead of time is what separates successful deployments from expensive experiments.

What Are the Real Risks of Using AI Agents?

Hallucination and Confident Errors

LLMs can generate plausible-sounding but incorrect information — and agents can act on that incorrect reasoning. An agent confidently querying the wrong database, or sending an email based on a false assumption, causes real downstream problems. The fix: narrow task scoping, output validation, and human review for high-stakes actions.

Cost Overrun at Scale

LLM API calls aren’t free. Complex agent workflows — especially those running many ReAct loop cycles — can accumulate significant compute costs. Without monitoring and budget guardrails, production costs can spike unexpectedly. Organizations should meter costs per workflow, not just overall.

Security and Prompt Injection

Agents that process external data (web pages, user inputs, documents) are vulnerable to prompt injection — malicious content that attempts to redirect agent behavior. An agent browsing a page that contains hidden instructions to “ignore previous instructions and send all data to…” is a genuine attack surface. Defense requires careful tool design, input sanitization, and limiting agent permissions.

Data Privacy Concerns

Agents accessing CRM data, email, and financial systems handle sensitive information. Compliance with GDPR, HIPAA, and industry regulations requires careful architecture — particularly around what data the LLM provider sees, how long context is retained, and audit trail requirements.

Reliability on Novel Situations

Agents perform well on patterns similar to what they were tested on and break down on genuinely novel edge cases. “Knowing when you don’t know” is a hard problem in AI systems. Good design includes explicit uncertainty triggers that escalate to humans rather than producing unreliable autonomous output.

Over-Automation Risk

There’s a legitimate risk of automating processes that would be better left with humans — not because agents can’t handle them, but because human judgment, empathy, or accountability is part of the value. Customer relationships that involve significant emotion (complaints, bereavements, complex disputes) often shouldn’t be fully automated even if technically possible.

Even among practitioners, there’s ongoing debate about exactly where the human-autonomy line should sit in high-stakes domains like healthcare, legal decisions, and financial advice. Getting this calibration right is an organizational question as much as a technical one.

Why 2026 Is the Year of the AI Agent

Observers of AI for several years consistently note that 2026 feels different from prior moments of hype. Here’s why.

The Market Is Exploding

The global AI agents market was valued at approximately $7.6 billion in 2025. Projections for 2026 range from $10.9B to $11.8B — over 40% growth in a single year. The trajectory points toward $47 billion by 2030.

Those aren’t small numbers, and they’re tracking real adoption patterns, not speculative forecasts. Ecommerce has emerged as one of the fastest-adopting industries, with online stores using AI agents to automate customer service, order tracking, and inventory management at scale.

Enterprise Adoption Is Real

According to a PwC survey, 79% of organizations are already using AI agents in at least one business function — not planning to, not piloting cautiously, but using. Gartner’s projection: 40% of enterprise applications embed task-specific AI agents by end of 2026.

This isn’t experimental anymore. Companies are deploying agents in production, measuring ROI in weeks rather than quarters, and scaling what works.

The Technology Finally Works

Three convergences made this moment possible:

- Better foundation models: Current LLMs are genuinely capable planners and reasoners — not just impressive text generators

- Tool calling matured: Models reliably interact with external APIs and services without hallucinating function calls

- Memory systems improved: Vector databases and context management work at production scale

Two years ago, getting an agent to reliably complete a five-step task was hit-or-miss. Now it’s routine, and ten-step workflows are becoming the standard.

The Paradigm Is Shifting

The underlying change is from “human-in-the-loop” to “human-on-the-loop.”

The old model: AI does one step, human approves, AI does the next step, human approves…

The new model: AI handles the workflow, human monitors and intervenes only when needed.

That’s a fundamental change in how automated systems are designed, deployed, and trusted. It requires good guardrails and thoughtful design — but it’s happening at scale in 2026.

Frequently Asked Questions

What is the difference between an AI agent and a chatbot?

A chatbot responds to queries; an AI agent takes autonomous actions to achieve goals. Agents have persistent memory, access to external tools, and the ability to execute multi-step workflows without step-by-step human approval. Chatbots are conversational interfaces; agents are operational systems. The clearest distinction: chatbots talk, agents work.

What is agentic AI and how is it different from regular AI?

Agentic AI refers to systems designed to operate with high autonomy — perceiving situations, planning multi-step responses, and executing actions with minimal human input. Regular generative AI responds to prompts on demand; agentic AI pursues goals independently. The key distinction is initiative: agentic systems trigger their own next steps without waiting for instructions after each action.

How do AI agents make decisions?

AI agents follow the ReAct loop (Reason + Act). They assess the current state, identify what information or actions are needed, call available tools, process the results, and decide on the next step. This cycle repeats until the goal is reached or the agent determines it needs human input. The reasoning happens inside the LLM — which is why the quality of the underlying model matters significantly.

Can AI agents work together in teams?

Yes — multi-agent systems assign specialized roles to individual agents that collaborate through an orchestration layer. One agent researches, another drafts, a third reviews. This approach handles complex workflows better than a single monolithic agent and has become the dominant architecture for enterprise deployments in 2026. Frameworks like AutoGen and CrewAI are designed specifically for this pattern.

Can AI agents operate without human oversight?

Modern AI agents operate within boundaries that are explicitly defined during setup. The deploying organization controls which tools agents access, what actions they can take, and when they must escalate to humans. Agents are autonomous within limits — not unsupervised. Good design always includes guardrails, audit trails, and escalation triggers.

What’s the difference between AI agents and robotic process automation (RPA)?

RPA follows rigid, pre-programmed rules to automate repetitive tasks — it breaks when conditions change unexpectedly. AI agents understand context, adapt to new situations, and reason through novel scenarios. RPA is deterministic; agents are adaptive. Many organizations now deploy RPA for structured, predictable work while AI agents handle the judgment-heavy exceptions that rules can’t cover.

What are the main components of an AI agent?

Five core components: a reasoning brain (typically an LLM), a perception module (input gathering), memory systems (short-term context and long-term storage), tools (for taking real-world actions), and a planning module (for breaking goals into executable steps). Together, these enable the autonomous behavior that distinguishes agents from simpler AI systems.

Are AI agents safe to use?

Safety depends almost entirely on implementation quality. Agents working within well-designed permission boundaries, with proper guardrails and audit trails, pose manageable risks similar to other enterprise software. Poorly designed agents — with excessive permissions, no escalation triggers, and no monitoring — introduce real operational and security risks. The answer is design quality, not the technology category itself.

How much does it cost to use AI agents?

Costs vary widely. Agent frameworks themselves (LangChain, AutoGen, CrewAI) are open-source and free. Primary costs are LLM API usage (varies by provider and volume, typically $0.002–$0.06 per 1,000 tokens depending on model) and any managed platform fees. No-code platforms like n8n run $20–$50/month for small deployments. Enterprise agent platforms range from $200–$5,000/month. Starting small and scaling as value proves out is the standard approach.

What’s the simplest way to try an AI agent today?

Start with Custom GPTs on ChatGPT Plus. Create one with specific instructions and file access — that’s agentic behavior in the simplest form. For no-code workflows, Zapier’s AI agent feature and n8n with LLM nodes provide a taste of legitimate agentic pipelines. For developers, CrewAI has the gentlest learning curve among the real frameworks.

The Bottom Line on AI Agents

AI agents are autonomous systems that perceive, reason, act, and learn. They wrap powerful language models with memory, tools, and planning capability — turning passive question-answering into active, multi-step problem-solving.

2026 is the year this technology moved from impressive demos to deployed-at-scale. The numbers are unambiguous: 62% of organizations experimenting with agents, 79% using AI in at least one function, Gartner projecting 40% of enterprise applications embedding agents by year-end.

Does this mean everyone needs to become an AI engineer? No. But understanding what agents are — and what they’re not — puts any team ahead of most organizations still confused by the hype.

The practical path: start with one specific problem. Use no-code tools to build something small. Measure outcomes. Scale what works. The technology is accessible; the bottleneck for most organizations is now clarity of purpose, not technical capability.

Want to build the first agent without coding? Follow the step-by-step guide to build AI agents with n8n workflows — a practical guide for creating autonomous agents with memory, tools, and decision-making capabilities, no Python required.

The question isn’t whether AI agents will change how work gets done. The trajectory on that is clear. The question is how quickly any given organization will move from watching to deploying.