Generative AI vs Agentic AI: Key Differences Explained

Discover the crucial differences between Generative AI and Agentic AI. Learn which technology fits your needs, explore real-world use cases, and see what the future holds.

ChatGPT can write a blog post—but it can’t research the topic, find sources, upload it to WordPress, and promote it on social media. That gap between generating content and completing tasks marks exactly where generative AI ends and agentic AI begins.

As AI capabilities expand in 2026, two terms dominate technology conversations: generative AI and agentic AI. While often used interchangeably, they represent fundamentally different approaches to artificial intelligence with distinct capabilities, limitations, and use cases. The distinction matters more than it might seem — organisations that conflate the two often deploy the wrong tool for the job and miss the efficiency gains that come from combining both.

For a foundational understanding of autonomous systems, see the complete guide on AI agents explained.

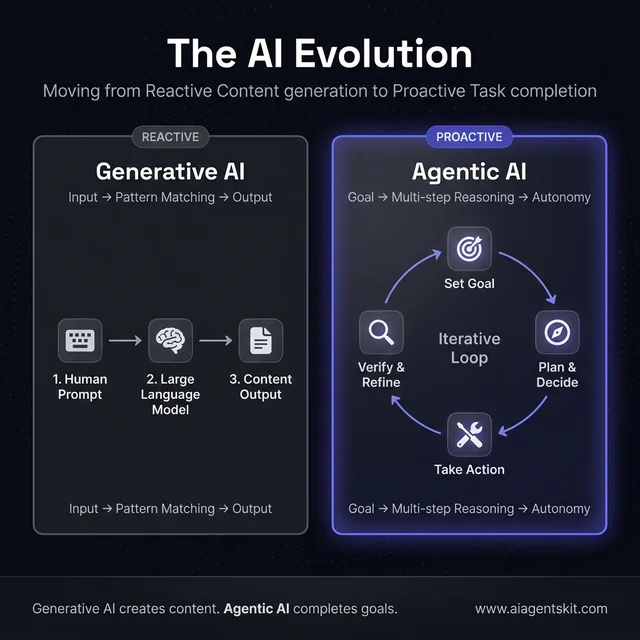

The fundamental shift from input-output reactivity to goal-oriented autonomous loops.

What Is Generative AI and How Does It Work?

Generative AI refers to artificial intelligence systems that create new content — text, images, audio, video, or code — based on patterns learned from training data. These systems operate reactively: they respond to prompts with generated outputs but don’t take independent actions or make decisions beyond the immediate generation task.

The numbers reflect the technology’s momentum. Grand View Research projects the generative AI market to grow from $22.2 billion in 2025 to $29.6 billion in 2026. Some forecasts that define the market more broadly place it above $91 billion in 2026, with a 34% compound annual growth rate through 2030. McKinsey’s 2025 State of AI report found that 72% of organisations already use generative AI in at least one business function, up from the roughly 50% level reported between 2020 and 2023. That adoption curve is steep, and it is still accelerating.
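As a quick sanity check on the market figures quoted above, the growth implied by the two Grand View Research numbers can be computed directly. This is illustrative arithmetic only; the `cagr` helper is a name introduced here and does not come from the cited reports.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end / start) ** (1 / years) - 1

# Market sizes quoted in the text, in billions of US dollars.
size_2025 = 22.2
size_2026 = 29.6

# One-year growth implied by the two quoted figures.
implied = cagr(size_2025, size_2026, years=1)
print(f"Implied 2025 to 2026 growth: {implied:.1%}")
```

The one-year growth works out to roughly a third, which is in the same range as the 34% compound rate quoted for the longer-horizon forecast.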

Core Characteristics of Generative AI Systems

Generative AI systems share several defining traits. They require explicit human prompts for each output and operate on a single-turn or multi-turn conversational basis. Most implementations remain stateless across sessions unless specifically designed with memory features. These systems can’t take actions in external systems — their output serves as the end product rather than a trigger for further operations.

The technical foundation rests on large language models: transformer-based neural networks pretrained at scale with self-supervised learning, which is what lets them process and generate human-like text. During inference, these models perform probabilistic token prediction, choosing each next token from a distribution conditioned on the preceding context, to generate coherent, contextually appropriate responses. The architecture excels at producing creative outputs but stops at the boundary of action-taking.
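To make "probabilistic token prediction" concrete, here is a minimal sketch of the final sampling step: turning raw scores into probabilities with a softmax and drawing one token. The four-word vocabulary and the scores are made up for illustration; a real model produces scores over tens of thousands of tokens from its neural network.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities that sum to 1."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(vocab, logits, temperature=1.0, rng=None):
    """Draw one token at random, weighted by its softmax probability."""
    rng = rng or random.Random()
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical vocabulary and scores, for illustration only.
vocab = ["blog", "post", "agent", "report"]
logits = [2.0, 1.0, 0.5, 0.1]

probs = softmax(logits)
token = sample_next_token(vocab, logits, rng=random.Random(0))
```

A lower `temperature` concentrates probability on the highest-scoring token, while a higher one flattens the distribution and makes output more varied.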

Popular Generative AI Tools and Examples in 2026

The generative AI ecosystem features mature tools across multiple content types:

Text Generation:

- GPT-5 / GPT-5.2 (OpenAI): The current flagship family as of 2026. GPT-5 launched in August 2025, with GPT-5.2 Instant released February 10, 2026, and GPT-5.3-Codex — OpenAI’s most capable agentic coding model — released February 5, 2026. These models feature adaptive reasoning and support context windows exceeding 1 million tokens.

- Claude Opus 4.6 / Claude Sonnet 4.6 (Anthropic): The latest Claude 4 iterations, both released in February 2026. Opus 4.6 (launched February 5) excels at complex agentic tasks, while Sonnet 4.6 (launched February 17) offers superior search performance at a lower cost. Both support a 1M token context window in beta.

- Gemini (Google): Multimodal capabilities with deep ecosystem integration

- Llama (Meta): Open-source alternative enabling custom deployments

Image Generation:

- DALL-E 3 (OpenAI): Integrated with ChatGPT for conversational image creation

- Midjourney: Industry-leading image quality with artistic styling

- Stable Diffusion (Stability AI): Open-source image generation with local deployment options

- Adobe Firefly: Commercial-safe training data for enterprise design workflows

Code Generation:

- GitHub Copilot: AI pair programmer with IDE integration, now powered by GPT-5.2

- GPT-5.3-Codex: OpenAI’s dedicated agentic coding model combining code generation, reasoning, and general-purpose intelligence

Common Use Cases for Generative AI

Organisations deploy generative AI across numerous applications. Content creation remains the dominant use case, with 72% of generative AI users leveraging these tools for blog posts, marketing copy, and social media content. Technical teams use code completion and generation features to accelerate development. Translation and summarisation help process large document volumes quickly. Creative professionals employ image generation for design mockups and marketing materials. Customer support teams implement reactive chatbots that handle routine inquiries without human intervention.

That said, teams consistently discover that generative AI’s value plateaus when workflows require more than content production — research, multi-system coordination, and autonomous decision-making all point toward agentic systems.

What Is Agentic AI and What Makes It Different?

Agentic AI refers to AI systems that act autonomously to achieve goals. Unlike generative AI, which waits for prompts and produces outputs, agentic AI can plan, make decisions, use tools, and take actions with minimal or no human intervention. These systems break down complex goals into subtasks, execute multi-step workflows, and adapt based on intermediate results.

The market trajectory signals where enterprise investment is heading. The agentic AI market reached $7.3 billion in 2025 and is projected to hit $9.1–$10.9 billion in 2026. Gartner’s Technology Trends 2026 report predicts that 40% of enterprise applications will embed AI agents by end of 2026, a dramatic increase from less than 5% in 2025. McKinsey’s State of AI 2025 report found that 62% of organisations are already experimenting with AI agents, though only 23% have successfully scaled at least one agentic system within a business function — highlighting the gap between experimentation and production deployment.

Core Characteristics of Agentic AI Systems

Agentic AI systems operate through goal-oriented rather than prompt-reactive mechanisms. They maintain persistent state and memory across interactions, enabling context accumulation over time. These systems integrate extensively with external tools and APIs, allowing them to perform actions beyond content generation. Multi-step planning and execution capabilities enable them to pursue complex objectives independently. They often operate in loops, evaluating progress and adjusting tactics until achieving the stated goal.
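The loop described above can be sketched in a few lines. This is a toy under stated assumptions: `run_agent`, `toy_plan`, `toy_tools`, and `report_written` are hypothetical names, the "planner" returns a fixed list instead of calling a language model, and re-planning after a failed step is not modelled.

```python
def run_agent(goal, plan, tools, is_done, max_steps=10):
    """Plan, act, record the observation, and stop once the goal check passes."""
    memory = []          # accumulated (tool, observation) pairs
    queue = plan(goal)   # ordered subtasks produced by the planner
    while queue and len(memory) < max_steps:
        tool_name, arg = queue.pop(0)
        observation = tools[tool_name](arg)   # take an action via a tool
        memory.append((tool_name, observation))
        if is_done(memory):                   # evaluate progress after each step
            break
    return memory

# Hypothetical stand-ins for a model-driven planner and real tools.
def toy_plan(goal):
    return [("search", goal), ("write", goal), ("search", "extra detail")]

toy_tools = {
    "search": lambda query: f"3 sources on {query}",
    "write": lambda topic: f"REPORT on {topic}",
}

def report_written(memory):
    return memory[-1][1].startswith("REPORT")

history = run_agent("EV charging", toy_plan, toy_tools, report_written)
```

The `max_steps` cap is the important design choice: it gives the loop a defined exit even when the goal check never passes.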

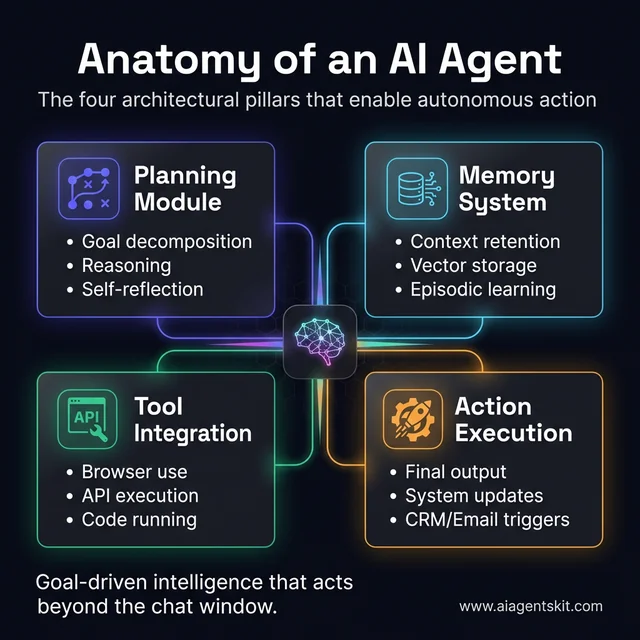

The technical architecture combines large language models with an orchestration layer containing several specialised modules. The planning module breaks goals into subtasks. The memory system maintains short-term and long-term context. Tool use capabilities enable API and code execution. Reflection and evaluation components support self-correction. Action execution modules translate decisions into real steps within external systems.

One distinction that frequently confuses practitioners: agentic AI and individual AI agents aren’t the same thing. For a precise breakdown of the key differences between AI agents and agentic AI — including a decision framework for choosing which approach fits a given use case — that guide covers each distinguishing factor in depth.

The four architectural pillars that transform a language model into an autonomous agent.

A notable development from February 2026: OpenAI co-founder Andrej Karpathy coined the term “agentic engineering” to describe the professional practice of designing, supervising, and shaping agentic AI systems — recognising that working with agents requires a distinct discipline beyond traditional prompt engineering.

For hands-on implementation, refer to the detailed AutoGPT tutorial covering step-by-step agent construction.

Popular Agentic AI Tools and Frameworks in 2026

The agentic AI ecosystem includes frameworks and specialised platforms:

- AutoGPT: Open-source autonomous agent framework enabling goal-directed behaviour

- CrewAI: Multi-agent orchestration framework for collaborative AI teams — one of the fastest-growing frameworks in 2025-2026

- LangChain/LangGraph: Developer tools and ecosystem for building agentic applications, with LangGraph now the preferred choice for complex stateful workflows

- Devin (Cognition): AI software engineer capable of end-to-end coding projects

- Microsoft Copilot Studio: Enterprise platform for building autonomous agents within Microsoft ecosystems

- Amazon Bedrock Agents: AWS-native agent development and deployment platform

- Harvey: Specialised agentic AI for legal research and document analysis

- Glean: Enterprise search and knowledge management with agentic capabilities

Common Use Cases for Agentic AI

Agentic AI excels in scenarios requiring sustained effort and decision-making. Autonomous research and report generation enables comprehensive analysis without constant human guidance. End-to-end workflow automation handles complex business processes spanning multiple systems. Code review and bug fixing workflows autonomously identify, diagnose, and resolve software issues. Multi-step customer service resolution goes beyond single-question answering to handle complete case management. Supply chain optimisation systems continuously adjust inventory and logistics based on changing conditions. Automated testing and QA agents execute comprehensive test suites and analyse results independently.

What’s the Difference Between Generative AI and Agentic AI?

Understanding the distinction between these AI paradigms requires examining their fundamental operational differences.

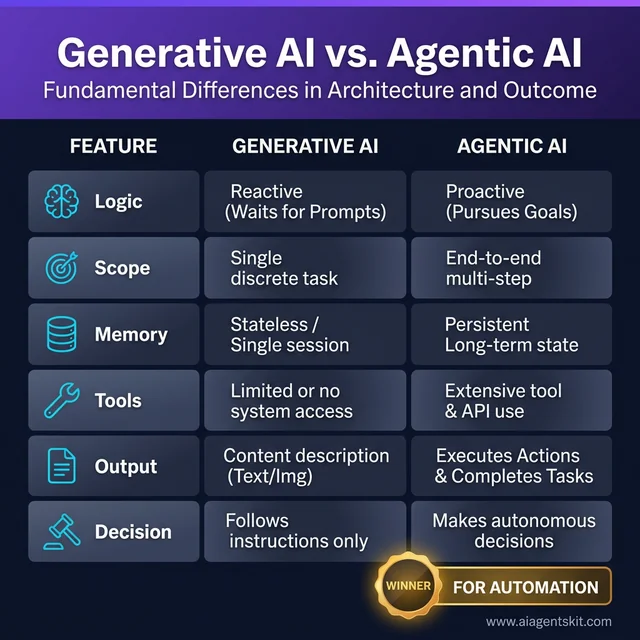

Key architectural and operational differences between generative and agentic systems.

The following comparison table summarises the key distinctions:

| Feature | Generative AI | Agentic AI |
|---|---|---|
| Interaction Model | Reactive (waits for prompts) | Proactive (pursues goals) |
| Scope | Single task per interaction | Multi-step workflows |
| Memory | Stateless (usually) | Persistent state and memory |
| Tool Use | Limited or none | Extensive tool integration |
| Decision Making | No independent decisions | Makes decisions to achieve goals |
| Human Input | Required for each output | Minimal after goal setting |
| Output | Content only | Actions plus content |
| Examples | ChatGPT, Claude, Midjourney | AutoGPT, CrewAI, Devin |

Real-World Scenario: Research and Content Production

Consider the task of creating a comprehensive market research report on a new sector. A generative AI system like ChatGPT or Claude produces well-written content based on information the user provides. The AI generates compelling prose, organised arguments, and clear summaries — but the user must supply every data point, determine which sources to trust, and manually handle fact verification and document formatting.

An agentic AI system approaches this entirely differently. Given the goal “produce a 20-page market research report on the electric vehicle charging infrastructure sector in Europe,” the agent independently searches for relevant regulatory filings, extracts key statistics from industry reports, cross-references conflicting figures, generates data visualisations, structures the findings into a coherent document, and queues follow-up research tasks where gaps appear. The agent makes hundreds of micro-decisions — which sources meet quality thresholds, what data merits inclusion, whether emerging trends contradict established assumptions — all without human intervention.

The practical gap can be significant: a human analyst supported by generative AI might complete this task in a day, while an agentic system can produce a first draft in under an hour, with quality that is most comparable on structured, data-heavy sections.

Real-World Scenario: Enterprise Customer Support

In customer support applications, the distinction becomes particularly clear. Traditional generative AI chatbots handle individual questions reactively. When a customer asks about order status, the chatbot responds with information drawn from knowledge bases. However, if the issue requires checking multiple systems, escalating to human agents, or processing a return, the chatbot can’t autonomously complete those actions — it can only provide instructions for a human to follow.

Agentic AI systems transform customer support by handling complete case resolution. The system checks order status, verifies payment information, processes refunds, schedules replacements, and updates CRM records — all without human intervention for routine cases. The difference between agents and chatbots fundamentally changes what automated support can achieve, shifting the technology’s role from information provider to task completer.

According to Gartner, 40% of enterprise applications will include agentic AI capabilities by end of 2026, up from under 5% in 2025 — signalling a fundamental shift from reactive to proactive AI systems across industries.

7 Limitations of Generative AI That Agentic AI Solves

Generative AI is genuinely powerful — but practitioners consistently hit the same walls. Understanding where those boundaries are is more useful than any feature comparison, because the limitations of generative AI are precisely the problems agentic AI was built to address. Knowing how AI hallucinations form and propagate is a useful baseline before deploying either system in production.

1. Hallucination — Confident Errors Without Self-Verification

Generative AI models produce plausible-sounding content based on statistical patterns, not verified facts. When a model doesn’t know something, it often fabricates confidently rather than admitting uncertainty — a phenomenon known as hallucination. There’s no built-in mechanism to cross-check outputs against real-world sources.

Agentic AI solves this through reflection loops and tool use. An agent can generate a draft, then call a web search tool to verify claims, flag inconsistencies, and revise before presenting a final output. The self-correction layer doesn’t eliminate errors, but it reduces their rate substantially in factual tasks.
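A verification pass of this kind might look like the following sketch. Everything here is illustrative: `verify_draft` and `toy_lookup` are hypothetical names, and the dictionary stands in for a real web search or retrieval tool that would return evidence for or against each claim.

```python
from typing import Callable, Optional

def verify_draft(claims: list[str],
                 lookup: Callable[[str], Optional[bool]]) -> tuple[list[str], list[str]]:
    """Split draft claims into those kept and those flagged for review.

    `lookup` returns True (supported), False (contradicted),
    or None (no evidence found).
    """
    kept, flagged = [], []
    for claim in claims:
        verdict = lookup(claim)
        if verdict is True:
            kept.append(claim)
        elif verdict is None:
            flagged.append(claim)   # unverified: surface it rather than assert it
        # Contradicted claims (False) are dropped from the revised draft.
    return kept, flagged

# Hypothetical evidence source standing in for a search tool.
EVIDENCE = {
    "Paris is the capital of France": True,
    "The Moon is made of cheese": False,
}

def toy_lookup(claim: str) -> Optional[bool]:
    return EVIDENCE.get(claim)

kept, flagged = verify_draft(
    ["Paris is the capital of France",
     "The Moon is made of cheese",
     "Revenue grew 12% last quarter"],
    toy_lookup,
)
```

Keeping a separate `flagged` list matters because "no evidence found" is not the same as "false", and those claims are the natural hand-off point to a human reviewer.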

2. Statelessness — No Memory Between Sessions

Most generative AI implementations are stateless by default. Each new conversation starts from scratch — the model has no recollection of previous interactions, accumulated context, or learned preferences. Users re-explain their situation every session.

Agentic systems maintain persistent memory through vector databases and episodic memory stores. A well-designed agent remembers a client’s preferences, project history, and past decisions — behaving more like an experienced colleague than a search engine.
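The retrieval idea behind a vector-backed memory can be shown with a deliberately tiny sketch. It assumes word counts as "embeddings" and keeps everything in a Python list; a production agent would use a learned embedding model and a real vector database, and `MemoryStore` is a hypothetical class name.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

class MemoryStore:
    """Stores past facts and recalls the ones most similar to a query."""

    def __init__(self):
        self._items = []  # list of (text, vector) pairs

    def remember(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def recall(self, query: str, k: int = 1) -> list[str]:
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]

store = MemoryStore()
store.remember("Client prefers weekly email summaries")
store.remember("Project deadline is in March")
best = store.recall("client email preferences")
```

At the start of a new session, the agent would call `recall` with the incoming request and prepend the results to its working context, which is what removes the need for users to re-explain themselves.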

3. Single-Turn Bottleneck — Requires Human Re-Prompting at Every Step

Generative AI requires a human to drive each micro-step of a complex workflow. Writing a research report means one prompt for research, another for outlining, another for drafting, another for editing — with the human acting as the workflow engine.

Agentic AI receives a high-level goal and handles the step decomposition autonomously. Chain-of-thought reasoning allows the agent to break objectives into subtasks, sequence them correctly, execute each step, and move to the next without pausing for human input at every decision point.

4. No Tool Use — Can’t Act Beyond the Chat Window

On its own, a generative model can describe how to do something but can’t do it. Without tool integration, it can’t browse the web, query a database, write a file, call an API, or interact with external systems. Its output is always content (text, code, or an image), never an action.

Agentic AI uses function calling and tool integration to translate decisions into real-world actions. An agent can query a CRM, write and execute code, send an email, update a spreadsheet, or trigger a downstream workflow — all within a single goal-completion loop.
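In practice, function calling usually means the model emits a structured request and the surrounding code decides whether and how to execute it. The sketch below assumes a simple JSON shape with `name` and `arguments` keys; real provider APIs define their own formats, and `get_order_status` is a hypothetical tool.

```python
import json

def get_order_status(order_id: str) -> str:
    """Hypothetical tool; a real one would query an order system."""
    return f"Order {order_id}: shipped"

# Registry of tools the agent is allowed to call.
TOOLS = {"get_order_status": get_order_status}

def dispatch(tool_call_json: str) -> str:
    """Parse a model-emitted tool call and run the matching function."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"Unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))

# Example of what a model might emit instead of plain prose.
model_output = '{"name": "get_order_status", "arguments": {"order_id": "A123"}}'
result = dispatch(model_output)
```

The explicit registry acts as an allow-list: the model can only trigger actions that have been deliberately exposed to it.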

5. Context Window Exhaustion on Long Tasks

Even with context windows now reaching 1 million tokens (Claude Opus 4.6, GPT-5.2), long multi-session workflows eventually exceed capacity. Conversation history, retrieved documents, intermediate outputs, and tool responses accumulate rapidly in complex tasks.

Agentic systems manage this through hierarchical memory architecture: hot context (current session), episodic memory (recent interactions), and long-term storage (persistent knowledge base). The agent decides what to keep in active context and what to offload — making long-horizon tasks tractable.
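A minimal sketch of the offloading step, under simplifying assumptions: word counts stand in for tokens, eviction is strictly oldest-first, and the long-term tier is left out. `TieredMemory` is a hypothetical name, not a class from any framework.

```python
from collections import deque

class TieredMemory:
    """Keeps recent messages in a bounded hot context and offloads older ones."""

    def __init__(self, hot_budget: int):
        self.hot_budget = hot_budget  # max words allowed in the hot context
        self.hot = deque()            # current working context
        self.episodic = []            # offloaded messages, oldest first

    def _hot_size(self) -> int:
        return sum(len(message.split()) for message in self.hot)

    def add(self, message: str) -> None:
        self.hot.append(message)
        # Evict oldest messages until the budget is met,
        # but always keep the newest message in context.
        while self._hot_size() > self.hot_budget and len(self.hot) > 1:
            self.episodic.append(self.hot.popleft())

memory = TieredMemory(hot_budget=5)
memory.add("one two three")
memory.add("four five six")
```

A fuller design would summarise evicted messages before storing them and retrieve them by relevance rather than recency.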

6. No Adaptation — Output Is Final

Generative AI produces an output and stops. If that output is partially wrong or could be improved by additional information encountered mid-task, the model has no mechanism to course-correct. The human must re-prompt with corrections.

Agentic AI evaluates its own outputs through reflection modules. An agent can assess whether its draft meets the quality threshold, identify gaps, trigger additional research, revise based on new findings, and re-evaluate — iterating until the objective is satisfied or a defined exit condition is met.

7. Prompt Engineering Dependency — Garbage In, Garbage Out

The quality of generative AI output depends heavily on the quality of the prompt. Teams spend significant time on prompt engineering: crafting, testing, and iterating prompts to reliably produce useful outputs. A slight change in wording can dramatically shift results.

While agentic AI still benefits from good goal specification, the planning layer provides a buffer between the human’s instruction and the model’s execution. The agent interprets intent, fills in gaps, and handles ambiguity that would derail a purely generative system. That said, even among experts, there’s genuine debate about how much agency should be delegated before human oversight becomes insufficient — and the right answer differs by use case.

Can Generative AI and Agentic AI Work Together?

The most powerful AI implementations often combine both paradigms rather than choosing exclusively between them. Generative AI provides the content creation capabilities that serve as building blocks, while agentic AI orchestrates those capabilities into complete workflows.

Hybrid AI Systems: Architecture and Design

Hybrid architectures leverage generative models as components within agentic frameworks. The agent handles planning, memory, and tool integration while delegating content generation tasks to specialised generative models. A marketing automation agent, for example, might use GPT-5 to write email copy, DALL-E to generate accompanying images, and internal analytics tools to optimise send times — all coordinated through the agentic orchestration layer, with the agent deciding which creative variant to deploy based on historical performance data.

This combination addresses limitations inherent to each approach alone. Pure generative AI can’t complete multi-step tasks autonomously. Pure agentic systems without generative components lack the creative and linguistic capabilities needed for content-heavy workflows. Together, they enable comprehensive automation of knowledge work that neither paradigm could achieve independently.

The evidence increasingly shows that hybrid deployments — not pure agentic systems — deliver the fastest ROI for organisations new to AI automation. Practitioners consistently find that starting with generative AI to establish content quality baselines, then layering in agentic orchestration, reduces implementation risk significantly compared to attempting full autonomy from day one.

When to Combine Both Approaches

Organisations benefit from hybrid systems when workflows involve both creative content generation and procedural execution. Marketing campaign management exemplifies this combination: agents handle scheduling, A/B testing, and performance analysis while generative models create ad copy, social posts, and email content.

Software development workflows similarly benefit, with agents managing project coordination, testing, and deployment while generative models handle code writing and documentation. A concrete example from 2026: several enterprise engineering teams report that hybrid setups — where agentic orchestrators coordinate GPT-5.3-Codex for code generation and separate models for documentation — have reduced sprint completion times by 30–45% on well-defined feature work.

Ask ChatGPT to write a ten-page report and it will produce ten pages — but an agent can research, outline, draft, and revise that report while interacting with external data sources and tools throughout the process.

For a detailed comparison of the leading frameworks that power these hybrid systems, the agentic AI frameworks guide covers architecture decisions, performance benchmarks, and enterprise use cases.

How Multi-Agent AI Systems Take Autonomy Further

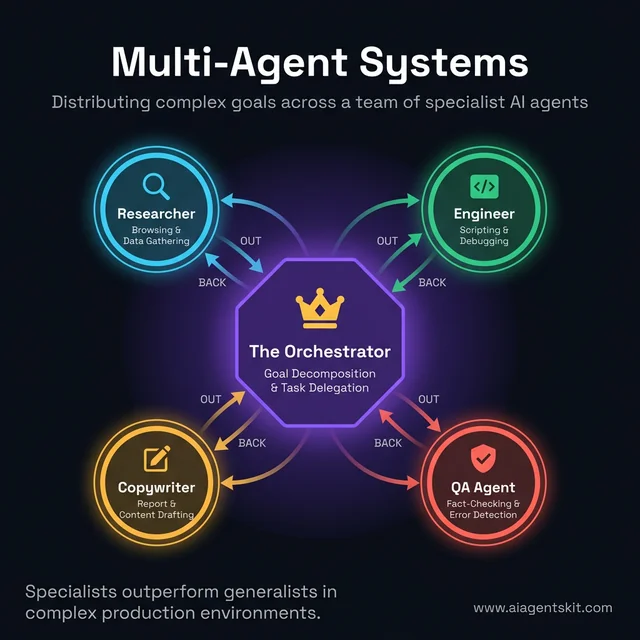

Single agents handle complex tasks well — but some objectives require specialised expertise that a single agent can’t replicate effectively. Multi-agent systems address this by deploying teams of specialist agents that collaborate, each owning a specific domain, coordinated by an orchestrator. Anthropic described this architecture in February 2026 as “a microservices moment for AI” — the same principle that transformed monolithic software into composable, maintainable services.

For teams evaluating which architecture fits their needs, the multi-agent systems explained guide covers design patterns and trade-offs in detail.

Distributing complex goals across a team of specialist AI agents for higher precision.

How Multi-Agent Orchestration Works

The architecture centres on a puppeteer (orchestrator) agent that receives the high-level goal, breaks it into parallel workstreams, and delegates each to a specialist agent. Specialist agents operate independently within their domain — a research agent queries sources, a writing agent drafts content, a verification agent fact-checks, a formatting agent assembles the final output. Results flow back to the orchestrator, which integrates them and manages dependencies.
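The fan-out and fan-in pattern described above can be sketched with Python's standard thread pool. The three specialist functions are hypothetical placeholders that return strings; real specialists would each wrap a model call and their own tools. Dependencies between workstreams (for example, writing that waits on research) are not modelled here.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical specialist agents, each owning one domain.
def research_agent(goal: str) -> str:
    return f"sources for {goal}"

def writing_agent(goal: str) -> str:
    return f"draft about {goal}"

def verification_agent(goal: str) -> str:
    return f"fact-check notes for {goal}"

SPECIALISTS = {
    "research": research_agent,
    "writing": writing_agent,
    "verification": verification_agent,
}

def orchestrate(goal: str) -> dict[str, str]:
    """Delegate the goal to every specialist in parallel and collect the results."""
    with ThreadPoolExecutor(max_workers=len(SPECIALISTS)) as pool:
        futures = {name: pool.submit(agent, goal) for name, agent in SPECIALISTS.items()}
        return {name: future.result() for name, future in futures.items()}

results = orchestrate("EV charging market")
```

Threads suit this sketch because real agent calls spend most of their time waiting on network responses rather than computing.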

This mirrors how high-performing human teams work. A consulting engagement doesn’t have one person doing all the research, analysis, writing, and client communication — it distributes those functions to specialists working in parallel. Multi-agent AI brings the same operating model to automated workflows.

Single Agent vs Multi-Agent: When Each Architecture Wins

| Scenario | Best Fit | Why |
|---|---|---|
| Linear, sequential workflow | Single agent | Simpler to debug, lower latency, less orchestration overhead |
| Parallel independent workstreams | Multi-agent | Faster completion, specialists outperform generalists |
| Tasks requiring quality gates | Multi-agent | Verification agents catch errors before output delivery |
| Exploratory or experimental tasks | Single agent | Lower setup cost for uncertain requirements |
| High-stakes outputs (legal, financial) | Multi-agent | Redundant checking reduces risk |

3 Real-World Multi-Agent Deployments in 2026

Software engineering teams deploy planner + coder + tester + PR-reviewer agents in sequence. The planner agent breaks a feature request into implementation tasks, the coder agent writes the code, the tester agent runs the test suite and flags failures, and the reviewer agent evaluates code quality before raising the pull request. Engineering teams using this pattern report 30–45% shorter sprint completion times on well-scoped features.

Financial research operations use a web-researcher agent to gather market data, a data-analyst agent to run quantitative models, a report-writer agent to synthesise findings, and a compliance-checker agent to flag regulatory concerns before the report is distributed. A single analyst supported by this system can produce research that previously required a team of four.

Customer resolution workflows decompose into an intake agent (categorises and prioritises the request), a data-retrieval agent (gathers account history, order details, policy information), a resolution agent (applies resolution logic and executes the fix), and a CRM-updater agent (records the outcome and closes the ticket). A logistics company using this architecture handled 82% of support requests autonomously within two weeks of deployment.
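The customer resolution flow above is a sequential hand-off, which can be sketched as a list of stages that each enrich a shared ticket. All stage functions, field names, and the keyword-based categorisation are hypothetical simplifications; a real deployment would back each stage with a model and live systems.

```python
def intake(ticket: dict) -> dict:
    """Categorise the request (toy rule: keyword match)."""
    category = "refund" if "refund" in ticket["text"].lower() else "general"
    return {**ticket, "category": category}

def retrieve(ticket: dict) -> dict:
    """Attach account data (hypothetical lookup, hard-coded here)."""
    return {**ticket, "order": {"id": ticket["order_id"], "paid": True}}

def resolve(ticket: dict) -> dict:
    """Pick an action, escalating anything outside the routine case."""
    routine = ticket["category"] == "refund" and ticket["order"]["paid"]
    return {**ticket, "action": "issue_refund" if routine else "escalate_to_human"}

def update_crm(ticket: dict) -> dict:
    """Record the outcome and close the ticket only if it was resolved."""
    status = "closed" if ticket["action"] != "escalate_to_human" else "open"
    return {**ticket, "status": status}

PIPELINE = [intake, retrieve, resolve, update_crm]

def run_pipeline(ticket: dict) -> dict:
    for stage in PIPELINE:
        ticket = stage(ticket)
    return ticket

resolved = run_pipeline({"text": "I want a refund", "order_id": "A1"})
escalated = run_pipeline({"text": "My parcel looks odd", "order_id": "B2"})
```

Each stage returns a new dictionary rather than mutating the input, which keeps every intermediate state available for logging and audit.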

Frameworks Best Suited for Multi-Agent Work

- CrewAI: Purpose-built for multi-agent collaboration with role-based agent definitions and built-in task delegation — the fastest path to a working multi-agent prototype

- LangGraph: Best for complex stateful multi-actor applications where control flow, conditional routing between agents, and human-in-the-loop checkpoints are required

- Microsoft Copilot Studio: Enterprise-grade orchestration for teams operating within Microsoft’s ecosystem

5 Steps to Choose the Right AI Approach for Your Needs

Selecting between generative and agentic AI requires systematic evaluation of specific requirements. Follow this five-step framework to identify the optimal approach:

A systematic framework for matching technology to your specific business objectives.

Step 1: Define Your Goal With Precision

Start by articulating exactly what outcome the workflow needs to produce. Content creation tasks such as writing, image generation, or code completion align with generative AI capabilities. Task completion goals involving research, data processing, multi-system coordination, or autonomous decision-making indicate agentic AI requirements. Document the complete workflow from start to finish, including every decision point where a human currently makes a judgement call — those decision points are precisely where agentic AI adds its greatest value.

Step 2: Assess Workflow Complexity

Evaluate whether the goal involves single-step or multi-step execution. Simple, discrete tasks that complete in one interaction suit generative AI well. Complex objectives requiring sequential steps, conditional logic, or adaptation based on intermediate results demand agentic capabilities. Consider whether the workflow branches based on findings or requires iteration to refine outputs — any branching logic that currently requires human attention is a candidate for agentic automation.

Step 3: Evaluate Integration Requirements

Determine whether the AI system must interact with external tools, databases, or APIs. Standalone content generation without system integration points works well with generative AI. Workflows requiring database queries, file system operations, third-party API calls, or multi-system coordination require agentic AI’s tool-use capabilities. List every external system the AI must access to complete its objective — if that list exceeds two systems, agentic AI is almost certainly the right choice.

Step 4: Consider Reliability and Oversight Requirements

Assess the organisation’s tolerance for variability and the potential impact of errors. Generative AI produces consistent quality for creative tasks but requires human oversight for high-stakes decisions. Agentic AI introduces additional reliability considerations: agents can make incorrect decisions, enter processing loops, or take unintended actions when encountering unexpected states.

Stack Overflow’s 2024 Developer Survey found that 67% of developers cite reliability concerns as the primary barrier to agentic AI deployment, with only 12% having deployed agents to production environments. Even among experts, there’s ongoing debate about where the appropriate reliability threshold lies — most practitioners recommend human-in-the-loop supervision until an agent has demonstrated consistent behaviour across hundreds of real-world task variants.

Step 5: Match Technology to Requirements

Based on the assessment, select the appropriate approach. Choose generative AI when content creation, brainstorming assistance, or reactive Q&A capabilities are the primary need. Select agentic AI when autonomous task completion, multi-step workflows, or system integration is required. Consider hybrid approaches when the use case spans both categories. Start with simpler implementations before advancing to complex agentic systems — many successful agentic deployments began as generative AI projects that gradually acquired tool-use capabilities.
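The five steps can be condensed into a rough rule of thumb. The sketch below is one possible encoding, not a definitive rubric: the `Workflow` fields and `recommend` function are hypothetical names, and the only threshold taken from the text is "more than two external systems".

```python
from dataclasses import dataclass

@dataclass
class Workflow:
    creates_content: bool   # Step 1: does the outcome include generated content?
    multi_step: bool        # Step 2: sequential steps, branching, or iteration?
    external_systems: int   # Step 3: how many tools, databases, or APIs?
    high_stakes: bool       # Step 4: would errors be costly?

def recommend(w: Workflow) -> tuple[str, bool]:
    """Return (approach, human_in_the_loop) for a described workflow."""
    needs_agent = w.multi_step or w.external_systems > 2
    if needs_agent and w.creates_content:
        approach = "hybrid"
    elif needs_agent:
        approach = "agentic"
    else:
        approach = "generative"
    # Keep a human in the loop for high-stakes work and for any agentic component.
    human_in_the_loop = w.high_stakes or needs_agent
    return approach, human_in_the_loop

blog_drafting = recommend(Workflow(True, False, 0, False))
campaign_ops = recommend(Workflow(True, True, 3, False))
ticket_routing = recommend(Workflow(False, True, 1, False))
```

Treat the output as a starting point for discussion; real selection also depends on budget, team skills, and how well the workflow is documented.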

Agentic AI vs Generative AI: Industry Use Cases Compared

The choice between generative and agentic AI looks different depending on the sector. While generic feature comparisons help frame the distinction, the clearest way to understand which approach fits a given context is to see how leading industries are actually deploying each. The pattern that emerges: generative AI wins on content creation within a workflow; agentic AI wins when the workflow itself needs to run without constant human steering. For a deeper dive into specific deployment scenarios, the AI agent use cases guide covers over 50 real-world implementations.

Healthcare: From Clinical Notes to Autonomous Care Coordination

Generative AI role: Summarising patient records, drafting discharge letters, generating patient-facing FAQs, creating training materials for clinical staff.

Agentic AI role: Autonomous patient journey orchestration — managing fragmented care pathways across multiple systems without clinician prompting. Agentic systems act as care coordinators, updating EHR records from clinical conversations, scheduling follow-ups, flagging deteriorating lab values, and triggering escalation protocols when thresholds are breached. In drug discovery, BCG research finds that agentic AI pipelines can compress the early-stage drug discovery timeline by up to 40% by running molecular simulation and literature review agents in parallel.

The divide: Generative AI produces clinical documentation; agentic AI manages clinical processes.

Finance: From Report Drafting to Autonomous Risk Management

Generative AI role: Regulatory report drafting, client communication scripts, earnings call summaries, investment memo generation.

Agentic AI role: Real-time fraud detection with autonomous case escalation; AML/KYC process automation that queries multiple compliance databases, scores risk, and flags suspicious patterns without analyst intervention; autonomous underwriting that retrieves applicant data, runs risk models, and produces a decision recommendation within minutes. Lloyds Banking Group has described deploying agentic AI to offer “always-on, hyper-personalised” financial services — a capability that requires proactive action, not just responsive content generation.

The divide: Generative AI enhances analyst productivity; agentic AI automates analyst decision workflows.

Manufacturing: From Documentation to Digital Factory Floors

Generative AI role: Maintenance manual generation, product documentation, training material creation, RFQ response drafting.

Agentic AI role: Predictive maintenance agents that inspect sensor data, identify anomalies, open service tickets, and notify maintenance teams before failures occur. Supply chain orchestration agents that monitor inventory, model demand scenarios, and trigger reorders autonomously. Production scheduling agents that detect deviations, adjust work orders, and coordinate supplier communications without plant manager involvement. Manufacturing Dive reports that 2026 is the year agentic AI moves from experimentation to “running factories” — a qualitative shift from advisory to executive function.

The divide: Generative AI supports the humans running operations; agentic AI runs operations directly.
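The predictive maintenance loop described above (inspect sensor data, identify anomalies, open a ticket, notify the team) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the `Ticket` type, the `maintenance_step` function, the z-score threshold of 3.0, and the `notify` callback are all hypothetical stand-ins for a real sensor feed, anomaly model, and ticketing system.

```python
from dataclasses import dataclass
from statistics import mean, stdev
from typing import Callable, List, Optional


@dataclass
class Ticket:
    """Hypothetical service ticket; a real system would call a ticketing API."""
    machine_id: str
    reason: str


def is_anomalous(baseline: List[float], value: float, z_threshold: float = 3.0) -> bool:
    """Flag a reading that sits more than z_threshold standard deviations
    away from the mean of known-good baseline readings."""
    if len(baseline) < 2:
        return False  # not enough history to judge
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > z_threshold


def maintenance_step(
    machine_id: str,
    baseline: List[float],
    latest: float,
    notify: Callable[[Ticket], None],
) -> Optional[Ticket]:
    """One pass of the loop: inspect, decide, open a ticket, notify."""
    if not is_anomalous(baseline, latest):
        return None
    ticket = Ticket(machine_id, f"reading {latest} outside normal range")
    notify(ticket)  # assumed side effect: page or email the maintenance team
    return ticket
```

The agentic part is not the statistics, which are deliberately simple here, but the fact that the step ends in an action (a ticket and a notification) rather than a report for a human to read.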

Marketing: From Copy Generation to Full Campaign Automation

Generative AI role: Ad copy, email subject lines, social posts, blog drafts, product descriptions, creative briefs.

Agentic AI role: End-to-end campaign management — agents handle A/B test setup and analysis, automated media buying optimisation, performance-based creative iteration, audience segmentation, and cross-channel scheduling. A marketing agent receives the campaign brief and manages execution autonomously, escalating to human creatives only for brand-sensitive decisions.

The divide: Generative AI is the creative engine; agentic AI is the campaign operations manager.

Software Development: From Autocomplete to Autonomous Engineering

Generative AI role: Code suggestions in the IDE (GitHub Copilot), boilerplate generation, code explanation, documentation drafting.

Agentic AI role: Full feature development from ticket to PR. Tools like Devin (Cognition) and GPT-5.3-Codex (OpenAI, released February 2026) can receive a feature specification, write the implementation across multiple files, run tests, debug failures, and open a pull request — without developer involvement in each step. The developer reviews and approves rather than writing line by line.

The divide: Generative AI accelerates writing code; agentic AI completes engineering tasks.

Getting Started: Tools and Frameworks for 2026

Practical implementation requires selecting appropriate tools. The following recommendations cover both generative and agentic AI technologies as they stand in early 2026.

Best Generative AI Tools for Production Use

For Text Generation:

- ChatGPT Plus/Pro (GPT-5.2): The current standard for accessible general-purpose text generation, with web browsing, document analysis, and emerging agentic features via Operator

- Claude Pro (Opus 4.6 / Sonnet 4.6): Extended context windows up to 1M tokens (in beta) for long document processing; Opus 4.6 excels at complex reasoning while Sonnet 4.6 offers better cost efficiency for high-volume applications

- API Access: OpenAI’s GPT-5 family, Anthropic’s Claude 4.6 API, and Google’s Gemini API enable integration into custom applications with per-token pricing that varies significantly by model tier

For Image Generation:

- Midjourney: Superior image quality for creative and marketing projects

- DALL-E 3: Convenient ChatGPT integration for conversational workflows

- Stable Diffusion: Free, open-source option with local deployment capabilities

For Code Generation:

- GitHub Copilot: IDE integration with real-time code suggestions, now powered by GPT-5.2

- GPT-5.3-Codex: Best-in-class for agentic coding tasks requiring multi-file reasoning and autonomous debugging

The choice between generative AI tools often comes down to context window size and pricing. For tasks involving large codebases or lengthy documents, Claude Opus 4.6’s 1M token window (in beta) currently has an edge. For creative writing and general reasoning, GPT-5.2 Instant tends to deliver faster responses at lower cost. For high-stakes use cases, it is worth running the same test prompts against two or three models before committing to a primary provider.

Best Agentic AI Frameworks for Development Teams

For teams new to agentic development:

- CrewAI: Python framework designed for multi-agent collaboration with readable, intuitive syntax; the fastest path from concept to working prototype for most teams

- AutoGPT: Accessible entry point for experimenting with autonomous agents; useful for validating whether a use case genuinely benefits from agentic architecture

For experienced developers building production systems:

- LangChain: Comprehensive ecosystem with extensive tool integrations and the largest community — best for teams that need broad integration support

- LangGraph: The more advanced choice for building stateful, multi-actor applications where control flow complexity matters; increasingly preferred over basic LangChain for production agentic systems

- LlamaIndex: Specialised for connecting LLMs to private data sources — the right choice when retrieval-augmented generation is central to the workflow

The AI agent frameworks comparison provides detailed analysis of options, performance benchmarks, and cost models for different skill levels and use cases. For practical implementation guidance, the “Build Your First AI Agent with Python” tutorial on this site walks through a complete agent from scratch — covering tool integration, memory setup, and safety mechanisms.

The Future of AI: Agentic Safety, Governance, and Convergence

The AI ecosystem continues evolving rapidly, with both generative and agentic capabilities advancing simultaneously. What’s changed in early 2026 is that the conversation has shifted from “what can these systems do?” to “how do we govern what they’re allowed to do?” — a more mature question that signals the technology’s transition from experimental to enterprise-grade.

Enterprise Adoption: Where Agentic AI Stands in 2026

McKinsey’s State of AI 2025 report states that AI-centric organisations are seeing a 20–40% reduction in operating costs and a 12–14 point increase in EBITDA margins, driven by automation and more efficient talent allocation. The technology sector leads in scaled agentic adoption, while healthcare shows strong uptake in knowledge management and insurance in marketing and sales.

That said, the implementation gap is real. Only 23% of organisations experimenting with agents have successfully scaled them within a business function. Meanwhile, 80% of executives cite agentic AI as critical for company survival by 2027, yet many are still navigating the classic adoption curve: high ambition, slower execution. Most organisations that start with agentic AI discover that reliability engineering and safety architecture take 40–60% of total project time, a cost that early planning teams consistently underestimate.

The Safety and Governance Landscape (February 2026)

As agents gain autonomy, governance has moved from a theoretical concern to an active operational one. Several significant frameworks emerged from research and regulatory bodies in early 2026:

- UC Berkeley’s Agentic AI Risk-Management Standards Profile (February 2026): Aligns with the NIST AI Risk Management Framework, providing structured guidance for developers and deployers of agentic systems

- Singapore’s IMDA Agentic AI Governance Framework (January 2026): Organised around four pillars — upfront risk assessment, clear human accountability, robust technical controls, and end-user responsibility

- The International AI Safety Report 2026 (February 3, 2026): Underscored the widening gap between the rapid evolution of AI systems and the slower adaptation of oversight mechanisms, advocating for systemic resilience over control-based governance alone

Security considerations are equally pressing. Cisco’s 2026 AI Readiness Index identifies agentic AI as a “threat multiplier” for cyberattacks, enabling faster, more scalable malicious activity such as tool poisoning, abuse of overprivileged access, and supply chain tampering. Governance and security are becoming key competitive differentiators, not just compliance checkboxes.

Even among experts, there’s active debate about where the right boundary between autonomy and oversight lies. The working consensus in 2026 is that fully autonomous agents suit lower-stakes, reversible actions, while high-stakes decisions — financial transactions above defined thresholds, customer-facing commitments, and regulatory-adjacent activities — should retain human approval gates regardless of agent capability level.
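A human approval gate of the kind described above can be expressed as a small routing function. The sketch below is illustrative only: the `ProposedAction` fields, the `gate` function, and the 500.0 threshold are assumptions made for the example, and a real deployment would take them from its own risk policy.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_EXECUTE = "auto_execute"
    NEEDS_HUMAN = "needs_human_approval"


@dataclass(frozen=True)
class ProposedAction:
    """Hypothetical description of something an agent wants to do."""
    kind: str                      # e.g. "refund", "reorder_stock"
    amount: float = 0.0            # monetary value, if any
    reversible: bool = True        # can the action be undone cheaply?
    customer_facing: bool = False  # does it commit the company to a customer?
    regulatory: bool = False       # does it touch a regulated process?


# Assumed policy value; set per organisation, not a recommended figure.
APPROVAL_THRESHOLD = 500.0


def gate(action: ProposedAction, threshold: float = APPROVAL_THRESHOLD) -> Decision:
    """Route high-stakes or irreversible actions to a human reviewer."""
    if not action.reversible:
        return Decision.NEEDS_HUMAN
    if action.customer_facing or action.regulatory:
        return Decision.NEEDS_HUMAN
    if action.amount > threshold:
        return Decision.NEEDS_HUMAN
    return Decision.AUTO_EXECUTE
```

Note that the gate depends only on properties of the action, not on how capable the agent is, which matches the "regardless of agent capability level" principle.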

The Convergence of Both Paradigms

The boundary between generative and agentic AI will continue to blur as tools incorporate features from each. ChatGPT already demonstrates agentic capabilities through its Operator product for autonomous task completion. Claude’s Computer Use feature enables autonomous desktop application interaction. GPT-5.3-Codex combines generative code production with agentic multi-file reasoning and debugging. These developments suggest that future AI assistants will combine content generation with task completion in unified interfaces that hide the underlying distinction from the user.

Deloitte’s AI Institute identifies this convergence as the defining trend of 2026-2028, predicting that the generative/agentic distinction will become less meaningful to end users as capabilities merge — much as the distinction between “mobile” and “desktop” software blurred as responsive design matured.

Generative AI vs Agentic AI: 10 Top Questions Answered

What is the difference between generative AI and agentic AI?

Generative AI creates content based on prompts but can’t take independent actions. Agentic AI pursues goals autonomously, making decisions, using tools, and executing multi-step workflows. The fundamental distinction lies in reactive versus proactive behaviour: generative AI responds to inputs while agentic AI drives toward objectives.

Is ChatGPT generative AI or agentic AI?

ChatGPT primarily functions as generative AI, responding to individual prompts with generated content. OpenAI has added agentic features including web browsing, code interpreter, and the Operator capability for autonomous task completion. Most users interact with ChatGPT’s generative capabilities, while agentic features via Operator represent a newer, more limited deployment.

What are examples of agentic AI in 2026?

Notable agentic AI examples include AutoGPT for general autonomous tasks, CrewAI for multi-agent collaboration, Devin for software engineering, Harvey for legal research, and Microsoft Copilot Studio for enterprise automation. OpenAI’s Operator and Anthropic’s Computer Use represent cutting-edge production deployments, while Claude Opus 4.6 (launched February 2026) specifically targets complex agentic tasks with its 1M token context window.

Can AI agents replace generative AI?

No — AI agents and generative AI serve complementary purposes rather than substituting for one another. Agents often incorporate generative models as components for content creation tasks. Generative AI excels at creative output, while agents excel at orchestration and task completion. Most effective implementations combine both technologies in hybrid architectures.

How does agentic AI work technically?

Agentic AI combines large language models with specialised architectural components. The planning module decomposes goals into subtasks. Memory systems maintain context across interactions. Tool use capabilities enable API and system integration. Reflection components evaluate progress and enable self-correction. Action execution translates decisions into concrete steps — including calling external APIs, writing and running code, and interacting with web interfaces.
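The components listed above map onto a simple control loop. The sketch below shows the shape of that loop with no LLM involved: `plan` returns a hard-coded list where a real agent would ask a model to decompose the goal, `TOOLS` holds toy functions in place of real APIs, and `reflect` is a trivial check. All names are hypothetical and chosen for the example.

```python
from typing import Callable, Dict, List, Tuple

# Tool use: names mapped to plain callables (stand-ins for real APIs).
TOOLS: Dict[str, Callable[[str], str]] = {
    "search": lambda query: f"results for {query}",
    "summarise": lambda text: f"summary: {text}",
}


def plan(goal: str) -> List[Tuple[str, str]]:
    """Planning: break the goal into (tool, argument) subtasks.
    Hard-coded here; an LLM would normally produce this list."""
    return [("search", goal), ("summarise", "{previous}")]


def reflect(result: str) -> bool:
    """Reflection: accept a step only if it produced non-empty output."""
    return bool(result.strip())


def run_agent(goal: str, max_retries: int = 1) -> List[str]:
    memory: List[str] = []  # Memory: results carried across steps
    for tool_name, arg in plan(goal):
        # Let a step consume the previous step's output.
        arg = arg.replace("{previous}", memory[-1] if memory else "")
        for _ in range(max_retries + 1):
            result = TOOLS[tool_name](arg)  # Action execution
            if reflect(result):
                memory.append(result)
                break
        else:
            raise RuntimeError(f"step {tool_name!r} failed after retries")
    return memory
```

Calling `run_agent("agentic AI")` runs the search step, feeds its result into the summarise step, and returns both results in order. Frameworks such as LangGraph or CrewAI provide richer versions of each piece, but the loop structure is the same idea.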

Is agentic AI the future of AI?

Agentic AI is where enterprise AI investment is heading, but it is not a wholesale replacement for generative AI. Both paradigms will continue developing, with increasing convergence as tools incorporate capabilities from each category. Gartner projects that agentic AI will drive approximately 30% of enterprise application software revenue by 2035, up from 2% in 2025, which indicates substantial but not exclusive adoption.

What can agentic AI do that generative AI cannot?

Agentic AI performs multi-step workflows autonomously, integrates with external tools and systems, maintains persistent memory across sessions, and makes independent decisions to achieve goals. While generative AI creates content based on immediate prompts, agentic AI can research topics, process data across multiple sources, execute complex procedures, and adapt strategies based on intermediate results.

How do I build an agentic AI system?

Start with established frameworks like LangGraph, CrewAI, or AutoGPT rather than building from scratch. Define clear goals and constraints for the agent. Implement memory systems for context retention. Integrate necessary tools and APIs. Build in evaluation and safety mechanisms — including human approval gates for high-stakes actions. Begin with simple use cases before advancing to complex autonomous behaviours.

Are AI agents reliable enough for production?

AI agents currently suit supervised automation and human-in-the-loop workflows rather than fully autonomous high-stakes decisions. Reliability varies significantly by use case and implementation quality. In Stack Overflow’s 2024 survey, 67% of developers cited reliability concerns, which underscores the need for careful testing, monitoring, and fallback mechanisms. Start with low-risk, reversible actions and gradually increase autonomy as reliability is demonstrated.

How much does it cost to run agentic AI vs generative AI?

Agentic AI typically costs more than generative AI due to multiple LLM calls per task, infrastructure requirements for maintaining state, and integration complexity. A single generative AI query incurs one API call, while agents may make dozens of calls to plan, execute, and verify tasks. However, agentic automation can reduce overall costs by eliminating manual work for complex multi-step processes — AI-centric organisations report 20–40% operating cost reductions, per McKinsey’s State of AI 2025.
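The call-count difference is easy to put into rough numbers. The figures below are invented for illustration (2,000 tokens per call, a price of 0.01 per 1,000 tokens, 30 calls per agent task) and do not reflect any provider's actual pricing. The `task_cost` helper is likewise hypothetical.

```python
def task_cost(calls: int, tokens_per_call: int, price_per_1k_tokens: float) -> float:
    """Estimated API spend for one task: calls x tokens x unit price."""
    return calls * tokens_per_call / 1000 * price_per_1k_tokens


# Assumed, illustrative inputs; substitute real usage and pricing.
TOKENS_PER_CALL = 2_000
PRICE_PER_1K = 0.01

generative_cost = task_cost(1, TOKENS_PER_CALL, PRICE_PER_1K)   # one prompt, one call
agentic_cost = task_cost(30, TOKENS_PER_CALL, PRICE_PER_1K)     # plan, execute, verify

print(f"generative: {generative_cost:.2f}  agentic: {agentic_cost:.2f}")
print(f"agent costs {agentic_cost / generative_cost:.0f}x more per task")
```

Under these assumptions the agent costs 30 times more per task in API spend, simply because it makes 30 times as many calls. Whether that is worthwhile depends on the value of the manual work the agent removes, which this calculation does not capture.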

What to Implement First: A Practical Roadmap

Generative AI and agentic AI represent distinct but complementary approaches to artificial intelligence. Generative AI excels at creating content — text, images, code, and more — based on human prompts. Agentic AI extends these capabilities to autonomous task completion, enabling systems that plan, decide, and act with minimal human intervention.

The choice between these technologies depends on specific needs: content creation favours generative AI, while task automation and workflow completion require agentic approaches. Many organisations will benefit from combining both paradigms into hybrid systems that leverage generative capabilities within agentic orchestration frameworks.

The right sequencing matters. Most practitioners recommend establishing generative AI capabilities first — building content quality baselines, getting teams comfortable with AI outputs, and identifying which workflows generate the most value. Agentic layers come next, starting with low-stakes, reversible tasks and expanding as reliability is demonstrated. As both technologies mature and converge, that sequencing becomes the fastest path from experimentation to measurable business impact.

For teams ready to move from strategy to execution, the AI implementation roadmap provides a phased framework for deploying both generative and agentic AI across an organisation without disrupting existing operations.