AI Agents for Automation: The Complete 2026 Guide

How AI agents transform business automation — real ROI data, industry use cases, no-code tools, implementation steps, and top frameworks. Backed by Gartner, McKinsey, and Deloitte.

Something shifted in enterprise automation over the past 18 months that most organizations haven’t fully reckoned with yet. According to Gartner’s 2025 Strategic Predictions, 40% of enterprise applications will incorporate task-specific AI agents by the end of 2026 — a figure that stood at less than 5% just a year earlier. That’s not an incremental improvement; that’s a structural shift.

The challenge for most businesses isn’t whether to adopt AI agents for automation. It’s understanding what makes them fundamentally different from the automation tools that came before, and how to implement them without the governance failures that Gartner warns could lead to 40% of agentic AI projects being abandoned before they reach scale. Understanding what AI agents are is the essential starting point.

This guide covers how AI agents work, what tasks they can automate, how they compare to traditional automation, real ROI data from enterprise deployments, industry-specific use cases, no-code tool comparisons, and a practical implementation roadmap — all in one place, combining Tier 1 research from Gartner, McKinsey, Deloitte, and PwC.

What Are AI Agents for Automation and How Do They Work?

AI agents for automation are software systems that use large language models (LLMs) as a reasoning engine to perceive inputs, plan multi-step actions, execute those actions across integrated tools, and adapt based on outcomes — all without requiring constant human prompting. Unlike a chatbot that answers a question or a script that runs a predefined workflow, an AI agent behaves more like a digital worker: it receives a goal, breaks it down, and figures out how to complete it.

The clearest analogy is the difference between a vending machine and a skilled assistant. A vending machine follows a fixed script: press button, dispense item. An AI agent reasons: “What does this person need? What tools do I have access to? What’s the most efficient path?” That reasoning capability, powered by today’s frontier models like GPT-5, Claude Opus 4, and Gemini 3 Pro, is what separates agentic AI from all prior automation paradigms.

It’s worth distinguishing these systems from simpler AI tools: agents vs chatbots is one of the most misunderstood comparisons in enterprise AI right now, and the distinction matters enormously when scoping deployment costs and expectations.

Core Components of an Autonomous AI Agent

Every production-grade AI automation agent operates on four foundational layers:

1. LLM Reasoning Layer

The “brain” of the agent: a large language model (GPT-5, Claude Sonnet 4, Gemini 3 Pro, or Llama 4 for open-source deployments) that handles natural language understanding, contextual reasoning, and decision-making. The quality of this layer determines the agent’s ability to handle ambiguity and novel situations.

2. Memory Systems

- Short-term (working context): The active conversation and task history the agent holds during a session

- Long-term (persistent storage): External memory stores (vector databases, structured databases) that let agents recall past interactions, user preferences, and institutional knowledge across sessions

3. Tool Integrations

The set of APIs, databases, communication platforms, and external services the agent can call. A customer service agent might have tools for: CRM lookup, email sending, ticket creation, knowledge base search, and order management system queries.

4. Orchestration Framework

The coordination layer that decides which tools to call, in what order, how to handle errors, and when to escalate to a human. Frameworks like LangGraph and CrewAI handle this orchestration for developer-built agents; cloud platforms like Vertex AI Agent Builder do this at enterprise scale.

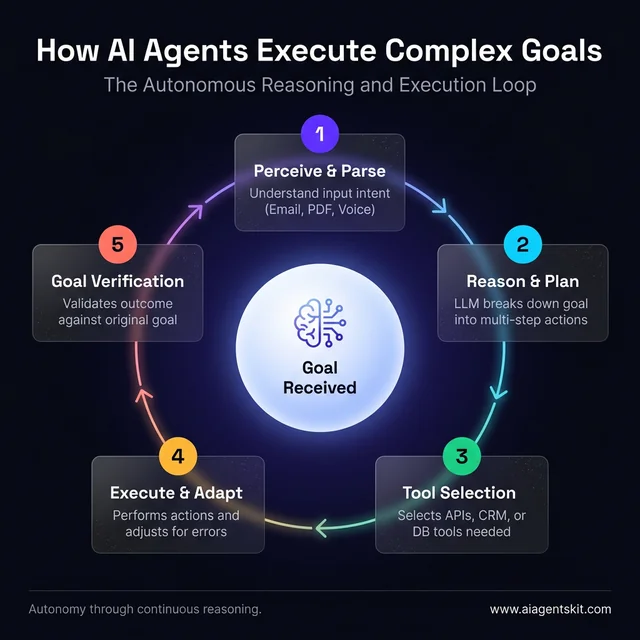

The autonomous reasoning and execution loop: how agentic AI dynamically plans and adapts.
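To make the four layers concrete, here is a minimal sketch of the reason, act, observe loop. It is an illustration rather than any framework's actual API: the `Agent` class, the `decide` callback (a stand-in for an LLM call), and the `lookup_order` tool are all hypothetical names introduced for this example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    # Reasoning layer: stand-in for an LLM call. Given the goal and the history
    # so far, it returns (tool_name, argument) or ("finish", final_answer).
    decide: Callable[[str, list[str]], tuple[str, str]]
    # Tool integrations: tool name -> function the agent may call.
    tools: dict[str, Callable[[str], str]]
    # Short-term memory: observations collected during this run.
    history: list[str] = field(default_factory=list)
    # Orchestration guardrail: stop and escalate after this many steps.
    max_steps: int = 5

    def run(self, goal: str) -> str:
        for _ in range(self.max_steps):
            action, arg = self.decide(goal, self.history)
            if action == "finish":
                return arg
            tool = self.tools.get(action)
            if tool is None:
                self.history.append(f"error: unknown tool {action!r}")
                continue
            try:
                observation = tool(arg)
            except Exception as exc:
                # Record the failure so the next decision can adapt to it.
                observation = f"error: {exc}"
            self.history.append(f"{action}({arg}) -> {observation}")
        return "escalate: step budget exhausted"


def scripted_decide(goal: str, history: list[str]) -> tuple[str, str]:
    # Hypothetical policy: look the order up once, then finish.
    if not history:
        return ("lookup_order", "A-100")
    return ("finish", f"done after {len(history)} tool call(s)")


agent = Agent(
    decide=scripted_decide,
    tools={"lookup_order": lambda order_id: f"order {order_id}: delivered"},
)
result = agent.run("Check the status of order A-100")
print(result)
print(agent.history)
```

In a real deployment the `decide` callback would wrap a model call and the history would be supplemented by a persistent store; the step budget is one simple way to force an escalation instead of letting the loop run indefinitely.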

How AI Agents Orchestrate Multi-Step Workflows

The defining characteristic of agentic AI is the ability to chain actions dynamically, not just execute a fixed sequence. Here’s what a multi-step workflow looks like in practice:

A customer submits a refund request via email. A traditional automation might route it to a human queue. An AI agent, by contrast:

- Reads and parses the email (NLP understanding)

- Queries the order management system (tool use)

- Checks the refund policy knowledge base (retrieval)

- Evaluates eligibility based on order date and policy (reasoning)

- If eligible: initiates the refund, sends confirmation email, updates CRM record (execution)

- If not eligible: drafts a polite exception request for human review (escalation)

The entire workflow completes autonomously in seconds, and if the order system returns an unexpected error during the order lookup, the agent adapts rather than failing silently. Many teams discover that this adaptability is what tips the economic case: the agent handles exceptions that would have required human escalation under rule-based automation.
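The refund flow above can be sketched as a small decision function. This is a simplified illustration under stated assumptions: the 30-day window, the `Order` fields, and the `OrderSystemError` exception are hypothetical, and the LLM-driven steps (parsing the email, reading the policy) are replaced by plain Python so the control flow stays visible.

```python
from dataclasses import dataclass
from datetime import date

REFUND_WINDOW_DAYS = 30  # hypothetical policy value, for illustration only


@dataclass
class Order:
    order_id: str
    purchased_on: date
    amount: float


class OrderSystemError(Exception):
    """Raised by the (hypothetical) order system when a lookup fails."""


def handle_refund_request(order_id, today, lookup_order):
    """Return a (decision, message) pair for one refund request."""
    try:
        order = lookup_order(order_id)  # tool use
    except OrderSystemError as exc:
        # Adapt instead of failing silently: hand off with context attached.
        return ("escalate", f"Order lookup failed ({exc}); routed to human review.")

    age_days = (today - order.purchased_on).days  # reasoning over policy
    if age_days <= REFUND_WINDOW_DAYS:
        return ("refund", f"Refund of ${order.amount:.2f} initiated for {order.order_id}.")
    return (
        "escalate",
        f"Order {order.order_id} is {age_days} days old; exception request drafted for human review.",
    )


# Hypothetical in-memory order system used only for this demo.
ORDERS = {
    "A-100": Order("A-100", date(2026, 1, 10), 59.0),
    "B-200": Order("B-200", date(2025, 10, 1), 120.0),
}


def lookup(order_id):
    if order_id not in ORDERS:
        raise OrderSystemError("order not found")
    return ORDERS[order_id]


today = date(2026, 1, 25)
for oid in ("A-100", "B-200", "Z-999"):
    print(oid, handle_refund_request(oid, today, lookup))
```

The point of the sketch is the shape of the branches: every path ends in either an executed action or an explicit escalation, never in a dropped request.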

What Tasks Can AI Agents Actually Automate?

The question organizations get stuck on isn’t whether AI agents work — it’s which of their specific tasks are worth automating first. The honest answer is that not every workflow is equally suited to agentic automation, and picking the wrong first use case is the fastest route to an abandoned pilot.

AI agents perform best when a task meets at least three of these four criteria: it involves high volume, it requires some reasoning through variable inputs (not a single fixed script), it draws on multiple data sources, and its outcomes are measurable. Every department has workflows that match this profile — the challenge is finding them.

Communication and Customer-Facing Tasks

This is where AI agents deliver the fastest, most visible ROI because the volume is high and the value of each interaction is measurable:

- Email triage and response drafting — Agents read incoming email, classify intent, draft context-aware replies, and route exceptions to the right team member. Organizations with high-volume inbound email report recovering 2–4 hours per person per day.

- Live chat and virtual support — Agents handle tier-1 inquiries end-to-end, resolving the bulk of common questions without escalation. The 60–80% containment rate cited across enterprise deployments reflects how narrow the majority of support requests actually are.

- Appointment and meeting management — Cross-referencing availability, sending scheduling invitations, managing rescheduling requests, and sending reminders — tasks that consume 5–10% of many administrative roles without adding meaningful value.

- Follow-up sequences — Post-meeting summaries, check-ins, renewal reminders, and re-engagement campaigns, personalized to context without human authorship per message.

Data and Back-Office Tasks

The back office runs on moving information between systems — exactly the kind of work AI agents handle without fatigue or error accumulation:

- Report generation — Agents query databases, aggregate data from multiple systems, format outputs per template, and distribute to stakeholders on schedule. What used to take a junior analyst half a day takes an agent minutes.

- Document information extraction — Agents read invoices, contracts, PDFs, and forms, pulling structured data from unstructured sources. This is where the gap vs. traditional RPA is most stark: an RPA bot needs a fixed template; an agent reads and understands the document.

- Data entry and CRM hygiene — Logging call notes, updating contact fields after demos, syncing data across systems, deduplicating records.

- Invoice processing and accounts payable — Read invoices (any format), match to purchase orders, flag exceptions for human review, route approvals, and mark payments.
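As a rough illustration of the "match to purchase orders, flag exceptions" step in invoice processing, the sketch below compares an already-extracted invoice against a purchase order table. The `Invoice` fields, the 1% tolerance, and the PO data are assumptions made for the example; the document-reading step that an agent would perform first is not shown.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    invoice_id: str
    po_number: str
    total: float


def match_invoice(invoice, purchase_orders, tolerance=0.01):
    """Return 'approve' or a 'flag: ...' reason for human review.

    purchase_orders maps PO number -> expected amount.
    tolerance is the allowed relative difference (0.01 = 1%).
    """
    expected = purchase_orders.get(invoice.po_number)
    if expected is None:
        return "flag: no matching purchase order"
    if abs(invoice.total - expected) > tolerance * expected:
        return "flag: amount differs from purchase order"
    return "approve"


# Hypothetical purchase order data for the demo.
purchase_orders = {"PO-1": 1000.0}

print(match_invoice(Invoice("INV-1", "PO-1", 1005.0), purchase_orders))
print(match_invoice(Invoice("INV-2", "PO-1", 1200.0), purchase_orders))
print(match_invoice(Invoice("INV-3", "PO-9", 50.0), purchase_orders))
```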

Decision-Support and Compliance Tasks

These tasks require genuine judgment — the category where AI agents begin replacing human cognitive work rather than just human labor:

- Lead scoring and qualification — Agents evaluate inbound signals (website behavior, open rates, firmographic data) against scoring models and classify leads without human intervention.

- Fraud and anomaly detection — Real-time pattern analysis across transaction streams, identifying deviations from baseline behavior and triggering holds or alerts. The key advantage: agents can identify novel fraud patterns that don’t match predefined rules.

- Compliance monitoring — Agents scan regulatory sources for changes, compare against internal policies, and flag gaps requiring human attention.

- Contract clause review — First-pass review against approved templates, flagging non-standard language, liability clauses, and missing provisions for attorney review.

The Automation Suitability Test

Before committing resources to a use case, run it through this four-question filter:

- Volume: Does this workflow run at least 50–100 times per week?

- Variability: Does it require reasoning through variable inputs — or does a fixed script already handle it reliably?

- Data access: Can the agent be given access to the required data sources without violating data governance policies?

- Measurability: Is there a clear metric that proves the agent is performing better than the status quo?

Workflows that answer “yes” to three or more of these questions are the best first deployments. Most organizations discover that customer inquiry handling, invoice processing, and lead qualification score highest — which explains why these three use cases dominate early-stage adoption across industries.
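The four-question filter is simple enough to encode directly, which can help when ranking a backlog of candidate workflows. The sketch below is one possible encoding; the `Workflow` fields are hypothetical names, and the volume threshold uses the lower bound of the 50–100 runs per week mentioned above.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    runs_per_week: int
    needs_reasoning: bool   # variable inputs that a fixed script handles poorly
    data_access_ok: bool    # access is possible within data governance policy
    has_clear_metric: bool  # a measurable comparison against the status quo


def suitability_score(workflow, min_runs_per_week=50):
    """Count how many of the four questions are answered 'yes' (0 to 4)."""
    checks = [
        workflow.runs_per_week >= min_runs_per_week,
        workflow.needs_reasoning,
        workflow.data_access_ok,
        workflow.has_clear_metric,
    ]
    return sum(checks)


def is_good_first_deployment(workflow):
    # Three or more "yes" answers, matching the rule of thumb in the text.
    return suitability_score(workflow) >= 3


candidates = [
    Workflow("invoice processing", 200, True, True, True),
    Workflow("quarterly board memo", 1, True, True, False),
]
for wf in candidates:
    print(wf.name, suitability_score(wf), is_good_first_deployment(wf))
```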

7 High-Impact Use Cases for AI Agent Automation

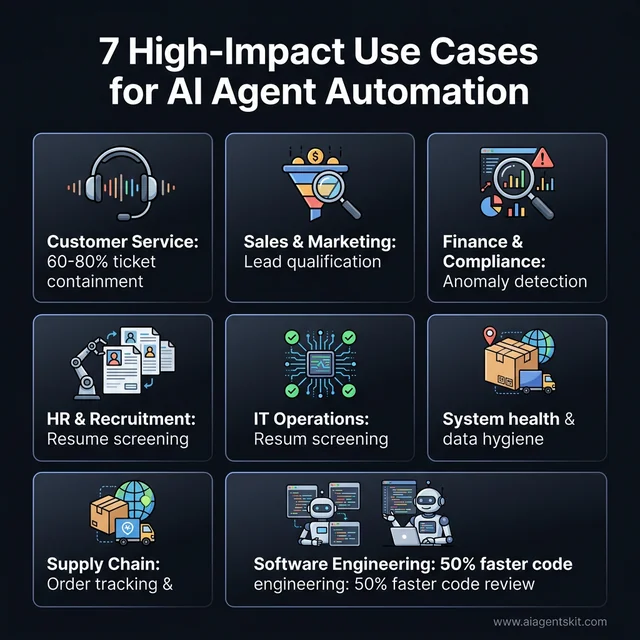

The scale of AI agent use cases across business functions has expanded dramatically. A May 2025 PwC survey of executives found that 79% have adopted AI agents, with 66% reporting measurable productivity gains — and 73% believing AI agents will provide a significant competitive advantage within 12 months. Here are the seven functions where that advantage materializes most clearly.

Where AI agents deliver the highest measurable ROI across business functions in 2026.

1. Customer Service and Support: AI agents handle tier-1 inquiries, process refunds, update account details, track orders, and route complex issues to the right human, all without human intervention for routine cases. Organizations deploying agents in support consistently report 60–80% containment rates on tier-1 volume.

2. Sales and Marketing Automation: Agents research leads, draft personalized outreach, qualify inbound leads against scoring models, schedule discovery calls, and update pipeline stages, compressing what used to take a sales rep hours into minutes.

3. Finance and Compliance: AI agents reconcile transactions across multiple systems, flag anomalies for human review, monitor regulatory changes across jurisdictions, update internal policies, and generate audit-ready summaries.

4. HR and Recruitment: From screening CVs against structured criteria to scheduling interviews, answering candidate questions, and managing onboarding workflows, HR automation agents reduce administrative overhead on talent teams by 30–50% in publicly reported deployments.

5. IT Operations: Ticket triage and resolution agents can resolve 40–60% of IT support tickets autonomously (password resets, software access requests, basic connectivity issues). Beyond ticketing, agents clean CRM data, monitor system health, and escalate infrastructure alerts with context already gathered.

6. Supply Chain Management: Agents track order status across carrier APIs, proactively notify customers of delays, optimize delivery schedules based on real-time data, and manage vendor communications for routine procurement workflows.

7. Software Engineering Support: Code review agents check PRs against style guides and security policies; documentation agents generate API docs from code; test generation agents create unit test suites from function signatures. Software engineering automation is growing at a projected CAGR of 52.4% from 2025 to 2030.

AI Agents for Automation by Industry

The practical question isn’t whether AI agents deliver value — it’s where they deliver value first, most reliably, and with the shortest path from deployment to measurable ROI. The answer varies by industry context and the specific operational constraints each sector faces.

Healthcare: Clinical and Administrative Automation

Healthcare administration represents one of the clearest ROI opportunities in agentic AI: the documentation burden on clinical staff has reached a tipping point — physicians consistently report spending more time on administrative tasks than on direct patient care — and AI agents directly address that gap.

Where healthcare agents deliver measurable results:

- Clinical documentation: Agents generate structured clinical notes from conversation transcripts and pre-populate EHR fields. Health systems including Mayo Clinic and Cedars-Sinai report documentation time reductions of 30–50% per clinical encounter with ambient intelligence tools.

- Prior authorization: Agents access clinical data, match against payer rules, and draft auth requests, reducing turnaround from days to hours. Infinitus AI has documented a 10x reduction in time-to-authorization in production deployments.

- Appointment scheduling and no-show management: Agents monitor cancellations, identify open slots, reach out to waitlisted patients, and manage rescheduling workflows without staff intervention.

- Medical coding and billing: Agents extract diagnostic codes from clinical documents, cross-reference against ICD/CPT codes, and flag documentation gaps before claim submission — reducing denial rates and accelerating reimbursement cycles.

The governance note that matters: healthcare AI agents must operate under HIPAA-compliant architectures, with data residency controls, full audit logging of every data access event, and clear human sign-off requirements for any clinical decision. These requirements are institutional prerequisites, not optional enhancements.

Financial Services: Fraud, Compliance, and Underwriting

Financial services leads enterprise AI adoption in depth of deployment. The shift to agentic architectures is accelerating specifically because AI agents can handle judgment-intensive workflows that earlier ML models could not.

- Real-time fraud detection: Agents analyze transaction streams across behavioral baselines, device signals, and network patterns simultaneously — identifying novel fraud behaviors before they’ve been codified in rules. Organizations report false negative reductions of 30–40% vs. rule-based predecessors.

- Credit underwriting automation: Agents retrieve applicant data from multiple sources, normalize against scoring models, run scenario analysis, and generate structured underwriting recommendations. For cases that reach human review, organizations consistently report analysts reviewing 3–5x more applications per day.

- Regulatory compliance monitoring: Agents scan SEC filings, financial regulatory bulletins, and international compliance databases, compare updates against internal policies, draft gap analysis reports, and assign remediation owners.

- Invoice and expense processing: Ardent Partners has documented a reduction of more than 70% in processing cost per invoice in agentic deployments versus manual processing.

For a deep dive into how AI is transforming fraud detection, AML compliance, credit scoring, and agentic banking workflows — including governance frameworks and EU AI Act requirements — the complete guide to AI in fintech covers each application in detail.

Legal: Research, Review, and Client Workflows

Legal workflow automation has accelerated faster than most in the profession anticipated. The volume of document-intensive work and clear ROI on routine tasks has driven adoption despite the sector’s historically cautious technology posture.

- Contract review and risk flagging: Thomson Reuters CoCounsel and LexisNexis Protégé reached commercial deployment in 2025, with documented first-pass review time reductions of 70–90% vs. manual attorney review.

- Legal research: Agents run multi-source research across case law, statute databases, and internal precedent libraries, surfacing relevant precedent with citations. What used to take associates several hours completes in minutes.

- E-discovery support: Agents classify documents by relevance, privilege, and responsiveness — reducing review team size requirements by 40–70% in documented e-discovery deployments.

- KYC and client onboarding: Agents collect and verify client documentation, run sanctions and AML checks, and compile onboarding files, routing exceptions to compliance officers.

Marketing: Campaign Automation and Lead Intelligence

Marketing has the highest density of high-volume, variable-input workflows of any business function — which explains why AI agents have found the widest range of applications here.

- Multi-agent campaign orchestration: Planning, execution, and analytics agents coordinate to keep campaigns optimizing 24/7 without requiring continuous human oversight.

- Lead scoring and qualification at scale: Agents evaluate behavioral signals, firmographic data, and intent signals to score leads continuously. Sales teams receive a ranked, annotated queue rather than a raw lead list.

- Personalized content generation: Agents generate email copy variants, landing page headlines, and social post variations tailored to segment and stage — removing the production bottleneck without sacrificing personalization.

- Competitor and market intelligence: Agents monitor competitor activity and surface strategic implications on a defined cadence, with alerts triggered only when significant changes occur.

HR and Operations: Recruiting and Workforce Automation

HR automation delivers one of the clearest qualitative returns alongside the quantitative savings: when agents handle the administrative tasks, human HR teams focus on the high-judgment work that actually differentiates great people operations.

- Candidate sourcing and screening: Agents search sourcing platforms, evaluate applications, and generate ranked shortlists with structured evaluation notes. Recruiting teams that previously spent 40% of their time on screening logistics redirect that time to candidate experience.

- Interview scheduling coordination: Automated multi-participant scheduling across time zones and calendar systems, with automatic rescheduling when conflicts arise.

- Onboarding workflow management: Agents coordinate document collection, IT provisioning triggers, training schedule creation, and orientation logistics. New hires receive personalized onboarding plans; HR teams receive completion dashboards.

- Employee query handling: An HR knowledge agent connected to policy documents and benefits information handles high-volume routine questions, freeing HR business partners for strategic advisory work.

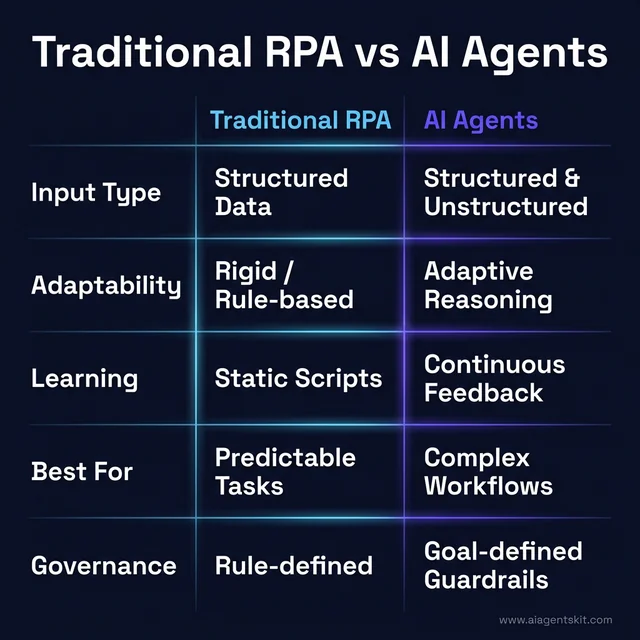

How AI Agent Automation Differs from Traditional RPA

Many organizations approaching agentic AI already have robotic process automation (RPA) deployments. The question practitioners consistently ask: does AI automation replace RPA, or work alongside it? The answer is almost always the latter — and understanding why requires clarity on what each technology actually does rather than what vendors say about them.

RPA excels at structured, deterministic workflows: it automates repetitive tasks involving structured data, fixed interfaces, and predictable inputs. RPA bots don’t reason — they follow rules exactly. They’re brittle when the interface changes, the input format shifts, or an exception occurs outside their programmed scope. McKinsey’s State of AI in 2025 report found that 62% of organizations are now experimenting with AI agents — many of which are actively trying to extend what their existing RPA investments can handle into less-structured territory.

| Dimension | Traditional RPA | AI Agents |
|---|---|---|
| Input Type | Structured data only | Structured + unstructured (email, PDFs, images) |
| Adaptability | Rigid; breaks on exceptions | Adaptive; reasons through unexpected inputs |
| Learning | None; static rules | Yes; improves from feedback |
| Best For | High-volume, predictable, rule-based tasks | Complex, variable, context-dependent workflows |
| Governance | Easier to audit | Requires robust guardrails and oversight |

The complementary relationship matters: AI agents can orchestrate RPA bots. An agent that reasons about a complex customer complaint can call an RPA bot to execute the CRM update — combining the reasoning capability of the agent with the precision of the bot for structured data entry. Teams exploring AI automation workflows find this hybrid architecture consistently delivers the fastest ROI, particularly in environments with significant legacy system investments.

RPA vs. AI Agents: Comparing adaptability, input types, and governance patterns.

The concept of hyperautomation — combining AI agents, RPA, machine learning, and analytics into a unified automation fabric — is what Gartner and industry analysts describe as the 2026 enterprise standard. Organizations that treat these technologies as competitors rather than complements miss the compound efficiency gains that emerge from the combined stack.

What Do the Numbers Say? Enterprise Adoption in 2026

The adoption curve for AI agents for automation has accelerated faster than virtually any enterprise technology in recent memory. The numbers from authoritative sources paint a consistent picture of a technology crossing the threshold from “experimental” to “standard” — and the governance infrastructure struggling to keep pace.

Market Size: The global AI agents market was valued at approximately $7.63 billion in 2025, growing to an estimated $8.81–$12.06 billion in 2026, with compound annual growth rates projected at 40–50% through 2030.

Enterprise Application Penetration: Gartner predicts that by end of 2026, 40% of enterprise applications will incorporate task-specific AI agents — up from less than 5% in 2025. That represents eight years of typical enterprise technology adoption compressed into less than 24 months.

Productivity and Efficiency: Deloitte’s State of AI in the Enterprise 2026 report found that 66% of organizations report improved productivity through enterprise AI adoption, while 40% cite cost reduction as a key benefit.

Fortune 500 Reality Check: 67% of Fortune 500 companies had production agentic AI deployments in 2025 — representing a 340% increase from 2024.

The Governance Gap: Deloitte found that only one in five companies has a mature governance model for autonomous AI agents, even though 74% are projected to be using agents moderately by 2026. This deployment-governance gap is what Gartner flagged when predicting 40% project abandonment by 2027.

The competitive pressure is real. PwC’s survey found that 73% of executives believe AI agents will provide a significant competitive advantage in the next 12 months.

How Much Can AI Agents Actually Save? Real ROI Data

The question that determines whether an AI agent project gets funded isn’t “what can agents do?” — it’s “what will agents save?” Decision-makers need numbers. Here’s what the data from actual deployments shows.

The Cost Differential That Makes the Business Case

The clearest starting point is customer service, where the cost-per-interaction comparison is documented and consistent:

- Human agent cost per interaction: $3.00–$6.00 (fully-loaded labor cost)

- AI agent cost per interaction: $0.25–$0.50 (compute + API costs)

At the middle of those ranges, a human interaction costs $4.50 and an AI agent interaction costs about $0.375, a difference of roughly $4.13 per interaction. For an organization handling 100,000 tier-1 support interactions per month, that differential works out to about $412,500 per month, or roughly $4.95 million in annualized savings if the agent handles every one of those interactions, before accounting for the agent’s 24/7 availability and faster resolution time. McKinsey’s 2025 State of AI research documented average operational cost reductions of 20–30% across functions where AI automation has reached maturity.
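The arithmetic is easy to check in a few lines. The sketch below uses the midpoints of the two cost ranges and the 100,000 interactions per month from the example. The `containment` parameter is an added assumption, not a figure from the cost comparison itself: it scales the saving down for the share of interactions the agent actually resolves, and it ignores any extra cost of escalated interactions.

```python
def midpoint(low, high):
    return (low + high) / 2


human_cost = midpoint(3.00, 6.00)    # 4.50 per interaction
agent_cost = midpoint(0.25, 0.50)    # 0.375 per interaction
interactions_per_month = 100_000

saving_per_interaction = human_cost - agent_cost                   # 4.125
monthly_saving = saving_per_interaction * interactions_per_month   # 412,500
annual_saving = monthly_saving * 12                                 # 4,950,000


def annual_saving_at(containment):
    """Annual saving if only this fraction of interactions is handled by the agent."""
    return annual_saving * containment


print(f"Per interaction: ${saving_per_interaction:.3f}")
print(f"Per month:       ${monthly_saving:,.0f}")
print(f"Per year:        ${annual_saving:,.0f}")
for share in (0.6, 0.8):
    print(f"Per year at {share:.0%} containment: ${annual_saving_at(share):,.0f}")
```

Running the numbers at a 60–80% containment rate, the range cited earlier for tier-1 support, gives a more conservative planning figure than the full-volume case.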

ROI by Use Case: Where Returns Are Highest

| Use Case | Typical Cost Reduction | Typical Payback Period |
|---|---|---|
| Customer service tier-1 | 60–80% cost per ticket | 3–6 months |
| Invoice processing | 50–70% cost per invoice | 2–4 months |
| Lead qualification | 40–60% time reduction | 4–8 months |
| Compliance monitoring | 30–50% analyst hours saved | 6–12 months |
| Clinical documentation | 30–50% documentation time | 6–12 months |
| Legal review (first-pass) | 70–90% first-pass time | 4–8 months |
| Software code review | 30–50% review time | 3–6 months |

Enterprise deployments report payback periods of 4–6 months on average. Smaller, well-scoped deployments have reported payback periods as short as 6 weeks.

Documented ROI benchmarks across the most common enterprise AI agent use cases.

What AI Automation Actually Costs to Implement

Initial deployment costs:

- Discovery, process mapping, and scoping: $5,000–$50,000

- No-code platform deployment: $2,000–$10,000 setup; $100–$500/month ongoing

- Developer framework (LangChain/CrewAI): $20,000–$120,000 build; $500–$5,000/month ongoing

- Enterprise cloud platform (Vertex AI / Bedrock): $50,000–$200,000; $2,000–$25,000/month ongoing

The cost insight most organizations miss: Model inference accounts for approximately 20% of total agentic AI cost of ownership. The remaining 80% is integration work, governance tooling, monitoring infrastructure, and human oversight mechanisms — costs that don’t appear in the API pricing page but determine whether the deployment is sustainable at scale. 92% of businesses implementing agentic AI report cost overruns, most attributable to underestimating this surrounding infrastructure cost.
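Two back-of-the-envelope calculations follow from the figures above: scaling an inference bill up to an estimated total cost of ownership using the roughly 20% share, and estimating a payback period from setup cost and net monthly savings. The sketch below shows both. The input values are illustrative only, chosen from within the developer-framework ranges listed above, and the monthly saving figure is a made-up example.

```python
def total_cost_from_inference(inference_cost, inference_share=0.20):
    """Estimate total cost of ownership when inference is a known share of it."""
    return inference_cost / inference_share


def payback_months(setup_cost, monthly_saving, monthly_running_cost):
    """Months until cumulative net savings cover the setup cost.

    Returns None when running costs meet or exceed savings (no payback).
    """
    net_monthly = monthly_saving - monthly_running_cost
    if net_monthly <= 0:
        return None
    return setup_cost / net_monthly


# Illustrative inputs, not benchmarks.
estimated_tco = total_cost_from_inference(1_000)   # monthly inference bill
months = payback_months(
    setup_cost=20_000,
    monthly_saving=6_000,
    monthly_running_cost=1_000,
)
print(f"Estimated monthly TCO: ${estimated_tco:,.0f}")
print(f"Payback period: {months:.1f} months")
```

Budgeting from the API pricing page alone would capture only the first number's input, which is one way the cost overruns described above can arise.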

AI Agents for Small and Medium Businesses

The assumption that AI automation requires large engineering teams and significant capital has become increasingly inaccurate. The tooling available in 2026 has moved the accessible starting point down to businesses with no dedicated engineering staff and automation budgets measured in hundreds of dollars per month, not hundreds of thousands.

The SMB Advantage: Speed

Small businesses have one structural advantage that large enterprises lack: speed to deploy. Where a Fortune 500 company requires months of procurement, compliance review, and IT integration, a 20-person business can test a tool, validate a workflow, and deploy it in production within days. That speed advantage is significant because agentic automation rewards early movers — the first business in a competitive set to automate lead qualification or customer response is capturing time-to-contact advantages that compound into revenue.

The data validates this opportunity: 91% of SMBs using AI agents report revenue growth, and 73% achieve positive ROI within 3 months — faster than most enterprise deployments achieve.

4 Starting Points for SMBs

1. Customer Email and Inquiry Triage: Connect an AI agent to a shared inbox. The agent reads incoming messages, classifies them by type, generates draft responses for common queries, and routes exceptions to the right person. Tools that make this accessible without engineering: Zapier AI Workflows, Make.com with Claude or GPT integration, or a basic n8n setup. Time to deploy: 2–5 days.

2. Appointment Booking and Scheduling: An agent connected to a calendar and booking page handles inbound scheduling requests, confirms availability, sends confirmations, manages cancellations, and sends reminders. For service businesses, consultancies, healthcare practices, and legal offices, this use case alone recovers 5–10 hours per week. Time to deploy: 1–3 days.

3. Invoice Processing and Accounts Payable: An agent reads invoices in any format, extracts line items, matches to purchase orders or expense categories, and routes approvals. For businesses handling 20+ invoices per week, this eliminates the manual data entry and matching work, along with the error accumulation that comes with it. Time to deploy: 1–2 weeks.

4. Lead Qualification and Follow-Up: An agent monitors incoming leads from web forms, enriches contact data, scores leads against simple criteria, and triggers personalized follow-up emails for high-scoring leads while routing lower-priority leads to nurture sequences. For businesses where leads arrive faster than the sales team can follow up manually, this use case delivers directly measurable revenue impact. Time to deploy: 3–7 days.
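To show the shape of the first starting point, inbox triage, here is a minimal classify-and-route sketch. It is deliberately simplified: a keyword match stands in for the LLM classification step, and the labels, keywords, and email addresses are all hypothetical. In a no-code tool the same logic would be a classifier step followed by a router.

```python
# Hypothetical routing table: message label -> destination inbox.
ROUTES = {
    "billing": "finance@example.com",
    "scheduling": "frontdesk@example.com",
    "other": "owner@example.com",
}

# Keyword stand-in for the LLM classification step.
KEYWORDS = {
    "billing": ("invoice", "refund", "payment"),
    "scheduling": ("appointment", "reschedule", "booking"),
}


def classify(message):
    """Return the first label whose keywords appear in the message, else 'other'."""
    text = message.lower()
    for label, words in KEYWORDS.items():
        if any(word in text for word in words):
            return label
    return "other"


def triage(message):
    """Classify a message and decide where it goes and whether a human must look."""
    label = classify(message)
    return {
        "label": label,
        "route_to": ROUTES[label],
        "needs_human": label == "other",  # unrecognized messages are exceptions
    }


for msg in ("Can I reschedule my appointment?", "Where is my refund?", "Hello there"):
    print(triage(msg))
```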

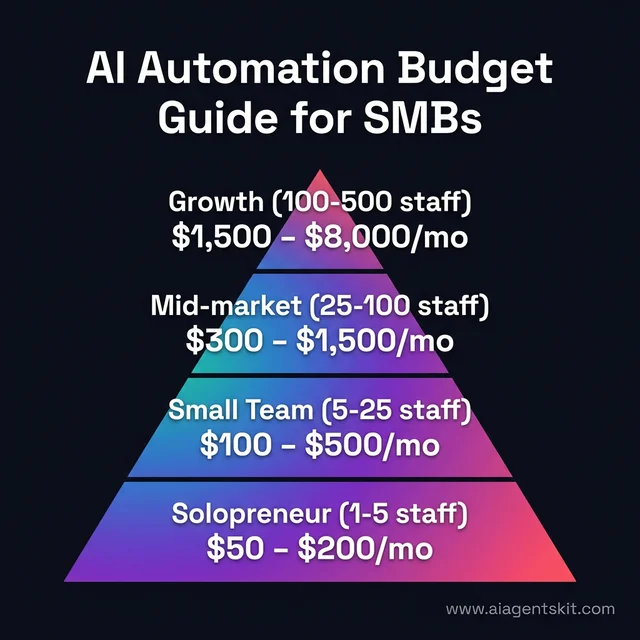

Budget Guide: What AI Automation Costs at Different SMB Scales

| Scale | Recommended Approach | Monthly Cost | Setup Time |
|---|---|---|---|
| Solopreneur / 1–5 staff | Zapier AI or Make.com | $50–$200/month | 1–5 days |
| Small team (5–25 staff) | n8n cloud or Make Business | $100–$500/month | 1–2 weeks |
| Mid-market (25–100 staff) | n8n self-hosted or basic LangChain | $300–$1,500/month | 2–6 weeks |
| Growth (100–500 staff) | LangGraph / CrewAI + cloud platform | $1,500–$8,000/month | 1–3 months |

Tiered budget guide for SMBs: matching automation goals with headcount and monthly spend.

The most common mistake: trying to automate too many processes at once before any single automation is proven and stable. Start with one workflow consuming the most hours with the most predictable inputs, automate it fully, prove the ROI, then expand. Starting with one well-chosen workflow beats starting with five half-finished ones every time.

No-Code and Low-Code AI Automation Tools

Not every automation needs a developer. For organizations without engineering teams — or with engineering capacity already committed to product development — the no-code and low-code category has become the most practical entry point for AI agents.

Tools like Zapier, Make, and n8n have moved well beyond “if-this-then-that” workflow automation into genuine AI agent capabilities. Organizations that dismissed these tools in 2023 are finding that the 2026 versions support multi-step reasoning, LLM integration, branching conditional logic, and tool use across hundreds of business applications — without a single line of code.

Understanding the Tool Tiers

Tier 1 — No-Code (No engineering required)

Zapier AI Agents integrates AI capabilities into its 8,000+ app ecosystem. Non-technical users build agents that respond to triggers, process inputs with Claude, GPT-5, or Gemini, make decisions based on LLM reasoning, and take actions across connected apps. Setup is drag-and-drop. Best for: simple to moderate agent workflows where pre-built app integrations cover the required tool set.

Make.com offers a visual canvas for orchestrating complex multi-step AI workflows with 400+ native AI integrations and a visual audit trail. More powerful than Zapier for complex conditionals; operation-based pricing makes it more economical at high volumes.

Tier 2 — Low-Code (Minimal technical knowledge required)

n8n is the most powerful option in this tier and bridges into developer territory. Its open-source foundation enables self-hosting for complete data sovereignty — critical for regulated industries. n8n’s native AI node supports LangChain integration, RAG pipelines, and self-hosted LLM connections. Execution-based pricing makes it the most cost-effective option for high-volume agentic workflows. For a hands-on guide to building production-grade agents with n8n, the n8n AI agent workflow guide covers the full implementation pattern.

Microsoft Copilot Studio allows non-engineers to build AI agents connected to Teams, SharePoint, and Dynamics 365 — with Microsoft’s enterprise compliance controls applied by default.

Tier 3 — Developer Frameworks

LangChain, LangGraph, CrewAI, and AutoGen — covered in depth in the frameworks section. The right choice when custom integrations, complex multi-agent coordination, or production observability requirements exceed no-code capabilities. A structured comparison of these frameworks is available in the AI agent frameworks guide.

Tier 4 — Enterprise Platforms

Vertex AI Agent Builder, Amazon Bedrock Agents, Azure AI Studio, Salesforce Agentforce, and ServiceNow AI. Purpose-built for large-scale deployments with enterprise security, compliance, and integration SLAs.

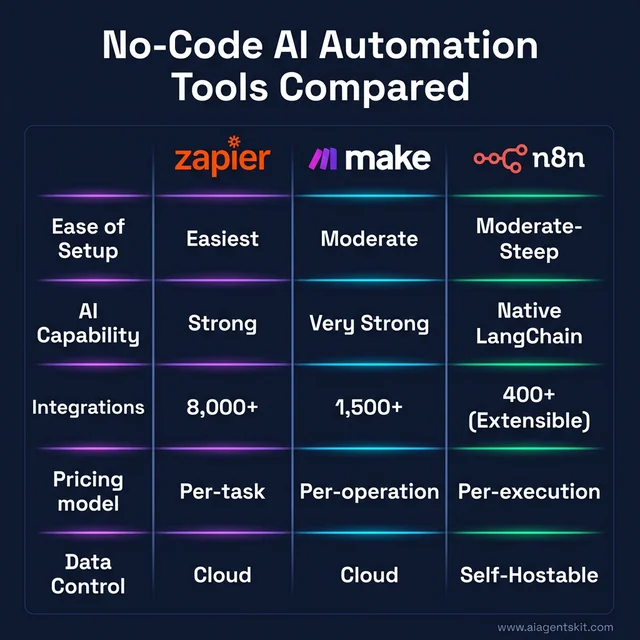

Zapier vs. Make vs. n8n: Side-by-Side Comparison

| Dimension | Zapier | Make | n8n |
|---|---|---|---|
| Ease of setup | Easiest | Moderate | Moderate–Steep |
| AI agent capability | Growing; GPT/Claude/Gemini | Strong; 400+ AI apps | Strongest; LangChain-native |
| Native app integrations | 8,000+ | 1,500+ | 400+ (extensible via HTTP) |
| Pricing model | Per-task (expensive at volume) | Per-operation (mid-range) | Per-execution (lowest at scale) |
| Data control | Cloud only | Cloud only | Self-hostable |
| Best for | Speed and breadth | Visual complexity | Data sovereignty and cost efficiency |

Decision logic: No technical resources and speed matters most → Zapier. Complex conditionals with broad app coverage → Make. Data sovereignty or high volume → n8n.
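The decision logic above can be written down as a small function, which is sometimes a useful way to make a team’s selection criteria explicit. This is only a minimal sketch: the function name, the parameter names, the order in which the checks run, and the 50,000-runs-per-month cutoff for “high volume” are all assumptions made for illustration, not figures from any vendor.

```python
def choose_platform(
    needs_self_hosting: bool,
    monthly_runs: int,
    complex_conditionals: bool,
    high_volume_threshold: int = 50_000,  # hypothetical cutoff; tune to real pricing
) -> str:
    """Map the three decision rules onto a platform name."""
    # Data sovereignty or high volume is checked first (the ordering is an assumption).
    if needs_self_hosting or monthly_runs >= high_volume_threshold:
        return "n8n"
    # Complex conditionals with broad app coverage.
    if complex_conditionals:
        return "Make"
    # Otherwise speed and ease of setup matter most.
    return "Zapier"
```

Checking data sovereignty first reflects the idea that a hard compliance constraint should outrank convenience; a team with different priorities could reorder the branches.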

When to Graduate from No-Code to Developer Frameworks

The signals that no-code tools have reached their ceiling for a given use case:

- Custom integrations needed that aren’t available natively

- Multi-agent coordination requirements that no-code tools approximate imperfectly

- Production observability requirements for compliance or debugging

- Volume high enough that per-task pricing becomes more expensive than infrastructure investment

At that point, the move to a developer framework is a logical step in the maturity progression, not a platform migration.
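The volume signal in particular lends itself to a quick break-even estimate. The sketch below assumes a simple cost model (a flat per-task platform price versus a fixed monthly infrastructure cost plus a small marginal cost per task); the function name and every number in the usage note are hypothetical.

```python
def break_even_tasks(
    fixed_infra_usd: float,
    platform_price_per_task: float,
    self_hosted_cost_per_task: float = 0.0,
) -> float:
    """Monthly task count above which fixed-cost infrastructure is cheaper.

    Solves: tasks * platform_price = fixed_infra + tasks * self_hosted_cost
    """
    saving_per_task = platform_price_per_task - self_hosted_cost_per_task
    if saving_per_task <= 0:
        raise ValueError("self-hosting never breaks even at these prices")
    return fixed_infra_usd / saving_per_task
```

For instance, with an assumed $1,500/month of infrastructure and engineering time, a $0.02 per-task platform price, and a $0.005 marginal self-hosted cost, the break-even point lands at roughly 100,000 tasks per month.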

Tool selection matrix: choosing between Zapier, Make, and n8n based on complexity and volume.

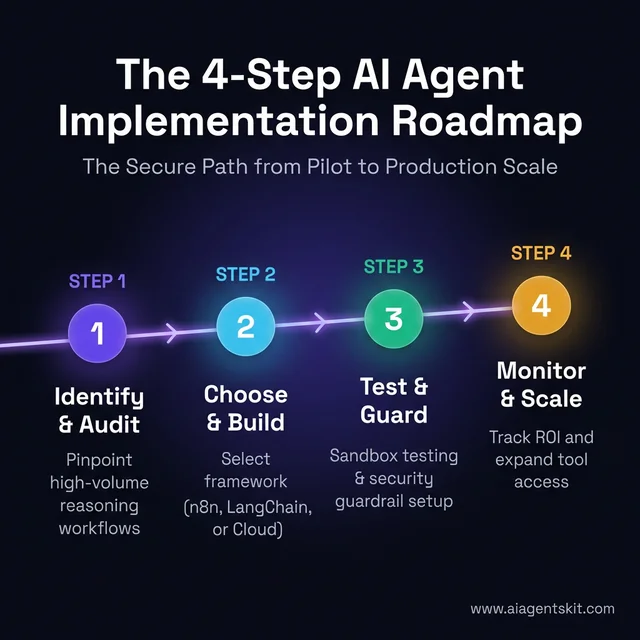

How to Implement AI Agents for Business Automation

The gap between “a pilot exists” and “agents run business-critical workflows at scale” is where most organizations stall. The recommended starting point isn’t picking a framework — it’s understanding the processes well enough to identify which ones are genuinely suited to agentic automation.

A structured roadmap for moving from process audit to production-scale AI automation.

Step 1: Identify the Right Processes to Automate

Not every process benefits from AI agent automation. The best candidates share four characteristics: they involve high volume, they require some reasoning or judgment (not just rule-execution), they pull from multiple data sources, and they have measurable outputs that make ROI visible.

Start with an audit of existing workflows. Flag processes that require staff to: switch between multiple systems, interpret unstructured inputs (emails, documents), make judgment calls against defined policies, or handle high volumes of routine exceptions. Customer inquiry handling, invoice exception processing, and lead qualification are the most common starting points for good reason — they score highly on all four criteria and have established benchmarks for measuring improvement.
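One lightweight way to run that audit is to score each workflow against the four characteristics and rank the results. The sketch below is illustrative only: the 1-to-5 rating scale, the equal weighting, and the class and function names are assumptions, not part of any published methodology.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ProcessCandidate:
    name: str
    volume: int         # 1 (low) to 5 (high)
    judgment: int       # how much reasoning the work requires
    data_sources: int   # how many systems staff switch between
    measurability: int  # how clearly the output can be measured

    def score(self) -> int:
        # Equal weights are an assumption; adjust to local priorities.
        return self.volume + self.judgment + self.data_sources + self.measurability


def rank_candidates(candidates: List[ProcessCandidate]) -> List[ProcessCandidate]:
    """Return candidates sorted from best to worst fit."""
    return sorted(candidates, key=lambda c: c.score(), reverse=True)
```

A rule-only process such as nightly file transfers would score low on judgment and fall to the bottom of the ranking, which matches the point that not every process benefits from an agent.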

Step 2: Choose the Right Platforms and Frameworks

Platform selection depends on technical depth, existing infrastructure, and scale requirements. Three broad categories exist, and picking the wrong tier is one of the most common implementation failures:

No-code / Low-code: n8n, Make, Zapier AI. Best for: operations teams without dedicated engineering resources, simple agent workflows with defined tool sets. Fastest time-to-deployment, lowest entry cost.

Developer frameworks: LangChain, LangGraph, CrewAI, AutoGen. Best for: engineering teams that need full control over agent behavior, multi-agent coordination, and custom tool integrations.

Enterprise cloud platforms: Vertex AI Agent Builder (Google), Amazon Bedrock Agents, Azure AI Studio (Microsoft). Best for: organizations requiring enterprise security, compliance, and scale from day one.

Fit with the organization’s current technical maturity matters as much as a framework’s theoretical capabilities; an overbuilt solution becomes a governance liability.

Step 3: Build, Test, and Deploy With Guardrails

The key elements of a safe agent deployment:

- Define the allowed tool set explicitly — don’t give an agent access to tools it doesn’t need

- Set action limits — for any action with external consequences, implement approval gates or rate limits

- Human-in-the-loop checkpoints — for decisions above a defined complexity or cost threshold, route to human review before execution

- Sandbox testing — run the agent against historical data in an isolated environment before touching production systems

- Audit logging — every agent action should be logged with the reasoning chain that led to it

Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls. Guardrails built at deployment time are far easier to maintain than governance retrofitted after an incident.
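Three of the guardrails listed above (an explicit tool allowlist, approval gates, and audit logging) can be combined in one thin wrapper around the agent’s tools. This is a minimal sketch rather than a production design: `GuardedToolbox`, its method names, and the shape of the log entries are all hypothetical, and the `approver` callback stands in for whatever human review step an organization actually uses.

```python
from typing import Any, Callable, Dict, List, Set


class GuardedToolbox:
    """Wraps an agent's tools with an allowlist, approval gates, and an audit log."""

    def __init__(
        self,
        tools: Dict[str, Callable[..., Any]],
        needs_approval: Set[str],
        approver: Callable[[str, Dict[str, Any]], bool],
    ) -> None:
        self.tools = tools                    # the explicit allowlist
        self.needs_approval = needs_approval  # tools with external consequences
        self.approver = approver              # human-in-the-loop hook
        self.audit_log: List[Dict[str, Any]] = []

    def call(self, tool: str, args: Dict[str, Any], reasoning: str) -> Any:
        entry = {"tool": tool, "args": args, "reasoning": reasoning}
        self.audit_log.append(entry)  # log every attempt, including refusals
        if tool not in self.tools:
            entry["outcome"] = "denied"
            raise PermissionError(f"tool {tool!r} is not in the allowed set")
        if tool in self.needs_approval and not self.approver(tool, args):
            entry["outcome"] = "awaiting_approval"
            raise PermissionError(f"tool {tool!r} requires human approval")
        result = self.tools[tool](**args)
        entry["outcome"] = "executed"
        return result
```

Logging the attempt before any check runs means refused and blocked calls leave a trace too, which tends to be what reviewers want to see after an incident.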

Step 4: Monitor, Iterate, and Scale

The metrics that matter most in the first 90 days of an agent deployment:

- Task completion rate: Percentage completed without human escalation

- Escalation rate: Percentage requiring human review, and categorized reasons why

- Error rate: Percentage resulting in incorrect actions that need remediation

- Latency: Time per task compared to the established human baseline
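The four metrics above reduce to simple ratios over a log of completed tasks. The sketch below assumes a hypothetical `TaskRecord` shape with one row per task; the field names are illustrative, and categorizing escalation reasons is left out for brevity.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class TaskRecord:
    escalated: bool  # routed to a human instead of completed autonomously
    incorrect: bool  # produced an action that needed remediation
    seconds: float   # time the agent spent on the task


def summarize(records: List[TaskRecord], human_baseline_seconds: float) -> Dict[str, float]:
    """Compute the first-90-days metrics from a list of task records."""
    if not records:
        raise ValueError("need at least one task record")
    total = len(records)
    escalated = sum(r.escalated for r in records)
    incorrect = sum(r.incorrect for r in records)
    return {
        "completion_rate": (total - escalated) / total,
        "escalation_rate": escalated / total,
        "error_rate": incorrect / total,
        # Below 1.0 means the agent is faster than the human baseline.
        "latency_ratio": mean([r.seconds for r in records]) / human_baseline_seconds,
    }
```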

The scale pattern that consistently emerges in successful deployments: start with a single, well-defined use case → prove ROI with quantifiable metrics → expand tool access incrementally → move to a multi-agent architecture where specialized agents hand off tasks to one another.

Top Frameworks and Tools for Building AI Automation Agents

The landscape of AI automation tools has matured significantly since 2024. What was largely a developer-only territory has expanded into enterprise platforms, no-code environments, and specialized frameworks.

| Framework/Platform | Best For | Technical Level | LLM Support |
|---|---|---|---|
| LangChain / LangGraph | Complex multi-step pipelines, developers | Advanced | GPT-5, Claude 4, Gemini 3, Llama 4 |
| CrewAI | Multi-agent team architectures | Intermediate–Advanced | GPT-5, Claude 4, Gemini 3 |
| n8n | No-code visual automation with AI | Beginner–Intermediate | GPT-5, Claude 4 via API |
| Vertex AI Agent Builder | Enterprise-grade, Google Cloud | Enterprise | Gemini 3 Pro/Flash (native) |
| Amazon Bedrock Agents | AWS-native enterprise deployment | Enterprise | Claude 4, Llama 4, Titan |
| Azure AI Studio | Microsoft ecosystem integration | Enterprise | GPT-5, Claude 4 via Azure |
| AutoGen (Microsoft) | Conversational multi-agent | Advanced | Multiple LLMs |

LangChain and LangGraph remain the most widely adopted developer frameworks globally. LangGraph’s state machine approach is particularly effective for complex, branching workflows where the agent needs to track position in a long-running process and recover gracefully when a step fails.

CrewAI has emerged as the go-to framework for multi-agent systems where different agents play defined roles — a researcher agent, an analyst agent, and an executor agent working in sequence.

n8n bridges the gap between traditional workflow automation and AI agents. Its visual canvas lets non-engineers build agent workflows by connecting nodes — and its self-hosted option gives enterprises the data control that compliance teams require.

Modern agent frameworks integrate with current frontier models: GPT-5 and GPT-5-Turbo from OpenAI, Claude 4 Opus and Claude 4 Sonnet from Anthropic (200K context windows), Gemini 3 Pro from Google (2M token context), and Llama 4 for organizations requiring open-source, locally-hosted reasoning. Most practitioners adopt a tiered approach: a fast, cost-efficient model for routine decisions, and a powerful model reserved for complex reasoning steps where accuracy is the priority.
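The tiered approach amounts to a routing decision made before each model call. The sketch below is an assumption-laden illustration: the model identifiers are placeholders rather than real API model names, and the step categories and the escalate-after-two-retries rule are invented for the example.

```python
FAST_MODEL = "fast-model"      # placeholder for a cost-efficient model
STRONG_MODEL = "strong-model"  # placeholder for a frontier reasoning model

# Hypothetical step types that justify the more expensive model.
COMPLEX_STEPS = {"planning", "multi_document_reasoning", "policy_exception"}


def pick_model(step_type: str, retries_so_far: int = 0) -> str:
    """Route routine steps to the fast tier and hard steps to the strong tier."""
    # Escalate when the step is known to be hard, or when the fast
    # model has already failed twice on it.
    if step_type in COMPLEX_STEPS or retries_so_far >= 2:
        return STRONG_MODEL
    return FAST_MODEL
```

Escalating on repeated failure is one common design choice; it keeps the default path cheap while still giving difficult inputs a second chance on the stronger model.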

What Are the Biggest Risks of Autonomous AI Agents?

The honest picture on AI agent risks is more nuanced than either the hype or the skepticism suggests. These systems deliver genuine and measurable value — but the failure modes are distinct from traditional software and require specific, proactive mitigation strategies.

1. Unintended Actions and Goal Misinterpretation

An agent given a goal will pursue it, but “pursue” can sometimes mean taking actions that technically satisfy the objective while violating the intent. Explicit scope definition matters more than choosing the right model or framework.

2. Runaway Costs

Agents make API calls, and in loops those calls accumulate rapidly. An agent stuck in a retry loop on a failing API can burn through compute budgets in minutes without detection. Rate limiting, cost caps, and real-time spend monitoring are non-negotiable infrastructure requirements.

3. Data Security and Access Scope

Prompt injection attacks, where malicious content in an email or document tricks the agent into executing unintended commands, are a documented and actively exploited vulnerability class. Principle of least privilege applies: agents should have the minimum tool access necessary to complete their defined tasks.

4. LLM Hallucination in Reasoning Chains

Even frontier models can make factual errors. When those errors occur mid-workflow, they propagate into downstream actions. Fact-checking agents and human review gates for consequential decisions are the primary mitigations practitioners use at scale.

5. The Governance Gap

Deloitte’s 2026 enterprise AI report found that only 1 in 5 companies possess a mature governance model for autonomous AI agents, despite 74% projected to be using agents moderately by 2026. The most effective governance frameworks treat agents like elevated-privilege accounts: the same rigorous access controls, audit requirements, and review cycles that apply to privileged human users.

Security and governance scorecard: mapping common autonomous AI risks to proven mitigation steps.

Mitigation Checklist:

- ✅ Define agent scope explicitly (allowed tools, action types, cost limits)

- ✅ Implement human-in-the-loop for high-consequence decisions

- ✅ Establish comprehensive audit logging (every action + reasoning chain)

- ✅ Apply principle of least privilege to tool access

- ✅ Test with adversarial inputs (prompt injection scenarios) before production

- ✅ Set cost caps and alert thresholds before live deployment

- ✅ Build governance policy before the first deployment, not after an incident forces it

AI Agents for Automation: Frequently Asked Questions

What are AI agents and how do they automate tasks?

AI agents are software systems that use large language models to perceive inputs, reason about goals, plan actions, and execute tasks across integrated tools — without constant human direction. They automate tasks by receiving a high-level objective, breaking it into steps, calling the right tools in the right sequence, and adapting when intermediate results change the plan. Unlike rule-based automation, they handle ambiguous inputs and novel situations through contextual reasoning rather than pre-programmed scripts.

How do AI agents differ from traditional RPA tools?

Traditional RPA bots execute defined scripts on structured data — they’re precise and fast but brittle when inputs change. AI agents reason through tasks using LLMs, handle unstructured inputs (emails, documents, images), and adapt to unexpected outcomes. The key difference: RPA follows rules, AI agents apply judgment. In practice, the most effective enterprise automation architectures combine both — AI agents orchestrate workflows and handle reasoning-intensive steps, while RPA bots handle structured data entry and legacy system interactions with precision.

What industries benefit most from AI agent automation?

Customer service leads adoption due to clear, measurable ROI on inquiry containment. Finance and compliance follow closely — regulatory monitoring, reconciliation, and audit preparation are high-volume, high-stakes workflows that benefit from both the speed and accuracy of agents. Healthcare, legal, marketing, software engineering, HR/recruitment, and supply chain all show strong adoption driven by industry-specific high-volume administrative burdens. The common thread: high-volume workflows with some reasoning component generate the highest ROI from agentic automation.

Can small businesses use AI agents for automation?

Small businesses can implement AI agents today for $50–$200/month using no-code platforms like Zapier AI or Make.com — requiring no development resources and deploying in days rather than weeks. Industry data shows 73% of SMBs using AI agents achieve positive ROI within 3 months, and 91% report measurable revenue growth. The most practical starting points: customer email triage, appointment scheduling, invoice processing, and lead qualification. The barrier in 2026 isn’t cost — it’s knowing which workflow to start with and which platform is appropriate for the team’s technical capacity.

What frameworks are used to build AI automation agents?

The main frameworks fall into three tiers. For developers: LangChain and LangGraph (most widely adopted), CrewAI (multi-agent team architectures), and AutoGen (Microsoft, conversational multi-agent). For no-code/low-code: n8n, Make, and Zapier with AI integrations. For enterprise scale: Google’s Vertex AI Agent Builder, Amazon Bedrock Agents, and Azure AI Studio. Framework selection should align with the team’s technical depth and the deployment’s governance requirements.

What are the biggest security risks of AI agents?

The primary security risks are prompt injection attacks (malicious content tricking the agent into unintended actions), excessive permission scope (agents with access to tools they don’t need), and runaway cost generation (agents in loops making expensive API calls without limits). Mitigation requires: applying the principle of least privilege to all tool access, implementing rate limits and cost caps, testing against adversarial inputs before production deployment, and establishing comprehensive audit logging of all agent actions and their reasoning chains.

How long does it take to implement AI agents for business?

A simple, well-defined use case with a no-code platform can be operational in days to 2 weeks. A custom developer-built agent using LangChain or CrewAI with enterprise integrations typically takes 6–12 weeks from scoping to initial deployment. Enterprise platform deployments on Vertex AI or Bedrock, with full security review and governance documentation, commonly run 3–6 months for the first production use case. The investment in proper scoping, testing, and guardrail design in the early phase directly reduces post-deployment remediation cost.

How do you measure ROI from AI agents?

The most reliable ROI metrics are: task completion rate (percentage completing autonomously vs. requiring human escalation), time-per-task reduction compared to the human baseline, error rate and remediation cost, and labor cost savings in FTE-equivalent hours recovered. For customer-facing agents, customer satisfaction scores and average resolution time complete the picture. Organizations consistently report 20–40% operational cost reductions in functions where agents are well-implemented — but establishing the measurement framework before deployment, not after, is what makes those figures meaningful.

What is a multi-agent system and how is it used for automation?

A multi-agent system is an architecture where multiple specialized AI agents collaborate to complete complex workflows, each handling a distinct role. A common enterprise pattern: a routing agent classifies incoming requests; specialized agents handle each task type (billing, support, account management); a synthesis agent assembles outputs before communicating with the end user. Multi-agent systems build modular, maintainable automation at scale rather than one monolithic agent trying to handle everything. See the multi-agent systems guide for architecture patterns and production examples.
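The routing, specialist, and synthesis pattern can be sketched in a few lines. In this illustration plain Python functions stand in for LLM-backed agents, and keyword matching stands in for an LLM classifier; all names, keywords, and canned replies are hypothetical.

```python
from typing import Callable, Dict


def routing_agent(request: str) -> str:
    """Classify a request; a real system would likely use an LLM here."""
    text = request.lower()
    if any(word in text for word in ("invoice", "refund", "charge")):
        return "billing"
    if any(word in text for word in ("password", "login")):
        return "account"
    return "support"


def billing_agent(request: str) -> str:
    return "Billing will review the charge in question."


def account_agent(request: str) -> str:
    return "A sign-in reset link has been sent."


def support_agent(request: str) -> str:
    return "A support ticket has been opened."


SPECIALISTS: Dict[str, Callable[[str], str]] = {
    "billing": billing_agent,
    "account": account_agent,
    "support": support_agent,
}


def synthesis_agent(category: str, draft: str) -> str:
    """Assemble the final user-facing reply from the specialist's draft."""
    return f"[{category}] {draft}"


def handle_request(request: str) -> str:
    category = routing_agent(request)
    draft = SPECIALISTS[category](request)
    return synthesis_agent(category, draft)
```

Keeping each role behind its own function is what makes the design modular: a specialist can be swapped or retrained without touching the router or the synthesis step.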

What is the difference between intelligent automation and AI agents?

Intelligent automation (IA) is an umbrella term covering the combination of AI, RPA, and machine learning applied to business process automation. AI agents are one specific implementation within that broader category — specifically systems that use LLMs to reason through goals rather than execute fixed scripts. Traditional intelligent automation platforms use rules, decision trees, and ML models for structured workflows. AI agents add the ability to handle unstructured inputs, reason through variable situations, and adapt to outcomes. Modern platforms like UiPath and ServiceNow increasingly incorporate both within the same deployment.

What is hyperautomation and where do AI agents fit?

Hyperautomation is Gartner’s framework describing the coordinated use of multiple automation technologies — AI, RPA, machine learning, process mining, and analytics — to automate as much of the business process landscape as technically possible. AI agents occupy the orchestration layer within hyperautomation: they handle the reasoning-intensive, variable-input workflows that RPA and traditional automation cannot. The typical architecture has AI agents reasoning and coordinating; RPA bots executing structured legacy-system tasks; and analytics platforms monitoring outputs and feeding insights back to the planning layer.

Can small businesses afford AI agents?

Yes. Small businesses can implement AI agents today at monthly costs starting below $200 using no-code platforms, with no development resources required. The arithmetic is straightforward: an agent that saves 5 hours per week at a $30/hour labor equivalent delivers about $600/month in value (5 × $30 × 4 weeks) against typical platform costs of $100–$300/month. The barrier in 2026 isn’t cost; it’s knowing which workflow to start with.

How much does it cost to implement AI agents for a business?

No-code deployments (Zapier, Make, n8n) cost $2,000–$10,000 in setup plus $100–$500/month ongoing. Developer framework deployments (LangChain, CrewAI) typically run $20,000–$80,000 in development plus $500–$5,000/month in infrastructure. Enterprise cloud platform deployments (Vertex AI, Bedrock, Azure AI Studio) with full security and compliance review run $50,000–$200,000 for the first production use case, plus $2,000–$25,000/month in operations. The cost most organizations underestimate sits outside the model: inference is roughly 20% of total cost of ownership, and the other 80% is integration work, governance tooling, and oversight infrastructure.
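The 20/80 split gives a rough way to extrapolate total cost of ownership from a known inference bill. The sketch below simply applies that ratio; the function name is hypothetical, and treating the 20% share as a fixed constant is a simplifying assumption that will not hold for every deployment.

```python
from typing import Dict


def estimate_tco(monthly_inference_usd: float, inference_share: float = 0.20) -> Dict[str, float]:
    """Extrapolate total monthly cost from the inference bill alone."""
    if not 0 < inference_share <= 1:
        raise ValueError("inference_share must be in (0, 1]")
    total = monthly_inference_usd / inference_share
    return {
        "inference": monthly_inference_usd,
        "integration_governance_oversight": total - monthly_inference_usd,
        "total": total,
    }
```

Under that assumption, a $1,000 monthly inference bill implies roughly $5,000 in total monthly cost, with about $4,000 of it going to integration, governance, and oversight.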

AI Agents for Automation: What Comes Next

The trajectory over the next 12–24 months is toward what analysts call “AI organizations” — coordinated multi-agent systems that function as operational layers, handling entire business functions with machine-level throughput and increasingly human-level contextual judgment. The teams that get there first aren’t the ones that move fastest; they’re the ones that build governance and measurement infrastructure before deployment pressure mounts from leadership.

Three practical insights from the patterns observed across early-adopter organizations: First, start with a single, well-defined use case and prove ROI before expanding tool access or use-case scope. Second, governance isn’t a constraint on innovation — it’s the infrastructure that makes scale both possible and sustainable. Third, the framework matters far less than the workflow design; a well-designed agent on n8n consistently outperforms a poorly designed agent built on LangChain, regardless of the technical sophistication of the underlying platform.

The momentum is real — with 40% of enterprise apps incorporating task-specific agents by end of 2026 and major organizations already seeing double-digit efficiency gains, the question isn’t whether to adopt AI agents for automation but how to do it with the discipline that separates successful deployments from abandoned pilots. The natural next step is understanding how multi-agent systems extend these agent-level capabilities into fully autonomous, enterprise-wide operational architectures.