Sovereign AI: What It Is and Why Nations Are Racing to Build It

Sovereign AI explained: what it is, why 71% of executives call it an existential concern, the full tech stack, country strategies, and how organizations can build AI autonomy.

Something fundamental shifted in global AI strategy in 2025 — and it wasn’t a new model announcement. It was governments and enterprises suddenly realizing that their most critical AI capabilities run entirely on infrastructure they don’t own, in countries they don’t control. That realization landed hard, and fast.

The stakes are high enough that understanding AI regulation and governance is no longer optional for anyone building or deploying AI at scale. According to McKinsey’s 2025 sovereign AI analysis, 71% of executives, investors, and government officials now characterize sovereign AI as an “existential concern” or “strategic imperative.” That number tells a clear story about where global AI priorities are heading.

Sovereign AI covers everything from how a nation develops its own AI models to how an enterprise ensures its AI-driven decisions remain under local legal jurisdiction. This guide covers what sovereign AI is, why the global push for AI independence is accelerating, how nations and organizations are responding, and what the practical path forward looks like.

What Is Sovereign AI? A Clear Definition

Sovereign AI refers to a nation’s or organization’s capacity to develop, control, and operate artificial intelligence systems using its own infrastructure, data, workforce, and governance frameworks — without undue dependence on foreign entities or external providers.

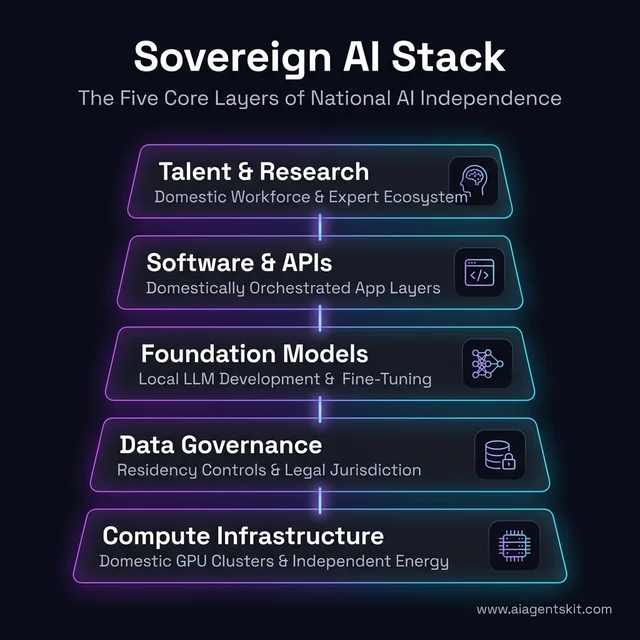

The concept is broader than it might first appear. Controlling AI isn’t just about where data is stored. It means owning or controlling the entire AI lifecycle:

- Compute infrastructure: GPU clusters, data centers, and energy systems physically located and legally governed within national territory

- Data layer: Data collected, stored, and processed under domestic laws with clear residency requirements

- AI models: Foundation models and large language models trained on domestically governed datasets

- Software and applications: Systems built on the sovereign infrastructure rather than foreign-controlled APIs

- Talent and research: A domestic workforce capable of maintaining, improving, and innovating the AI stack

This scope distinguishes sovereign AI from narrower concepts. Data sovereignty is about where data lives. Cloud sovereignty is about who operates the cloud infrastructure. Sovereign AI is about control over the entire AI model development and deployment chain, from raw training data to inference at scale.

The distinction from Cloud AI is equally important. Cloud AI gives organizations access to powerful AI services without needing to build the infrastructure — Google Cloud, AWS, and Azure provide this. Sovereign AI prioritizes ownership and strategic control over convenience and cost. The two approaches aren’t mutually exclusive, but they represent fundamentally different strategic postures.

Most nations and enterprises today are somewhere in the middle — using cloud AI for most workloads while pursuing sovereignty selectively for their most sensitive, strategic, or regulated use cases.

Sovereign AI vs. Private AI vs. Public AI: What’s the Difference?

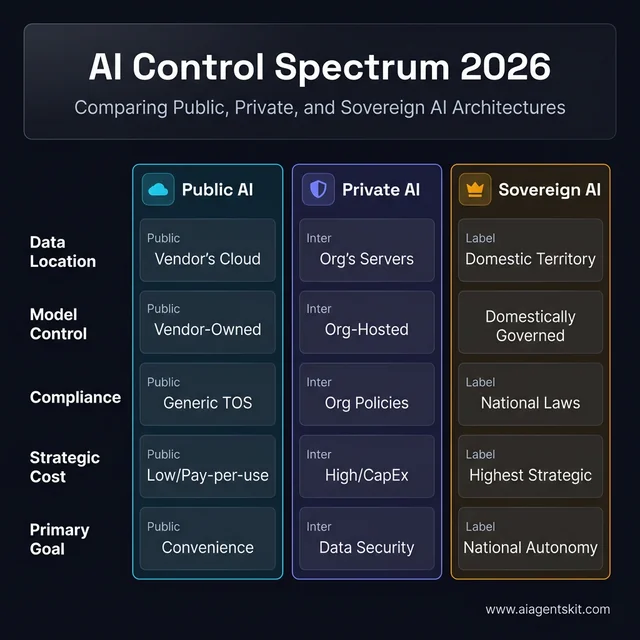

These three terms appear in conversations about AI control, but they’re not interchangeable. Understanding where sovereign AI fits in the landscape helps organizations make more informed architecture decisions.

Public AI refers to AI services delivered as software-as-a-service by third-party vendors, such as ChatGPT, Google Gemini, Claude, and similar tools. Data is processed on the vendor’s infrastructure, under the vendor’s terms. For non-sensitive tasks, public AI offers unmatched convenience and capability at low cost. But any data submitted for inference leaves the organization’s perimeter, often crossing national borders and falling under foreign legal jurisdiction.

Private AI means deploying AI systems entirely within an organization’s own controlled environment — on-premises servers, a private cloud, or a virtual private cloud. The organization controls the infrastructure and enforces data access policies. Private AI is driven by data security, regulatory compliance (HIPAA, GDPR, SOC 2), and protection of proprietary intellectual property. Private AI is an organizational-level decision, not a national policy.

Sovereign AI operates at a higher level of control. It encompasses the entire AI value chain — compute infrastructure, data governance, model development, and applications — under national or institutional legal jurisdiction. Sovereign AI may include private AI deployments, but the defining characteristic is alignment with a nation’s or government’s laws, security requirements, and strategic interests. A sovereign AI system might run on private cloud infrastructure, but it additionally ensures the model weights were trained domestically, the data never left national borders, and all personnel with access meet domestic clearance requirements.

The practical difference shows up when handling AI data privacy and governance requirements: an organization running private AI on AWS GovCloud has private AI but not necessarily full sovereign AI — the infrastructure still operates under US jurisdiction and could be subject to US legal orders for data access.

| Dimension | Public AI | Private AI | Sovereign AI |
|---|---|---|---|
| Data location | Vendor’s cloud (any country) | Organization’s infrastructure | Domestic territory, legally governed |
| Model control | Vendor-owned | Organization-hosted (often vendor model) | Domestically governed or developed |
| Compliance | General terms of service | Organization-defined policies | National laws + regulatory mandates |
| Cost model | Pay-per-use, low entry | High upfront, lower operating long-term | Highest upfront, strategic investment |
| Best for | General tasks, non-sensitive data | Regulated enterprises, IP-sensitive ops | Defense, national critical infrastructure, regulated government agencies |
| Who uses it | Everyone | Enterprises in regulated industries | Nations, defense agencies, large gov bodies |

Figure 1: Comparison of Public, Private, and Sovereign AI control models across five critical dimensions.

Many organizations run all three simultaneously — a tiered approach where public AI handles productivity tools, private AI handles sensitive customer data workloads, and sovereign AI covers the most critical national-security or regulatory-compliance use cases. The lines aren’t rigid; the goal is matching the right level of control to the actual sensitivity and strategic importance of each AI workload.
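The tiered approach can be sketched as a small routing rule. This is a minimal illustration, not a standard: the `Workload` fields, the `choose_tier` function, and the example workload names are all hypothetical, and a real classification would rest on legal and security review rather than two boolean flags.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    PUBLIC = "public"
    PRIVATE = "private"
    SOVEREIGN = "sovereign"


@dataclass(frozen=True)
class Workload:
    name: str
    handles_sensitive_data: bool   # e.g. customer records or proprietary IP
    jurisdiction_mandated: bool    # national-security or regulatory mandate


def choose_tier(w: Workload) -> Tier:
    """Pick the least restrictive tier that still covers the workload."""
    if w.jurisdiction_mandated:
        return Tier.SOVEREIGN
    if w.handles_sensitive_data:
        return Tier.PRIVATE
    return Tier.PUBLIC


# Hypothetical workloads, one per tier.
workloads = [
    Workload("meeting-notes-summarizer", False, False),
    Workload("customer-churn-model", True, False),
    Workload("benefits-eligibility-screening", True, True),
]
routing = {w.name: choose_tier(w).value for w in workloads}
print(routing)
```

Checking the strictest condition first means a workload that is both sensitive and legally mandated always lands in the sovereign tier.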

Why Sovereign AI Has Become a National Priority

The sovereign AI strategy conversation accelerated in 2025 for three compounding reasons: geopolitical AI competition, tightening data regulations, and the economic logic of keeping AI value domestic.

The Geopolitical Driver

AI has become a foundational national capability — the modern equivalent of nuclear technology or semiconductor manufacturing. The US-China AI competition reshaped how nations think about AI dependencies, with US export controls on advanced AI chips demonstrating how quickly access to AI infrastructure can become a foreign policy lever.

Nations that depend solely on US or Chinese AI platforms face a strategic vulnerability: if geopolitical tensions escalate, their AI capabilities could be disrupted overnight. The concentration of AI power among a handful of providers like OpenAI, Google DeepMind, and Anthropic — all headquartered in the United States — has made this vulnerability concrete.

According to Gartner’s 2025 sovereign cloud forecast, by 2027, 35% of countries will be locked into region-specific AI platforms. That’s not a prediction of fragmentation — it’s already happening. Global sovereign cloud IaaS spending is forecast to reach approximately $80 billion in 2026, representing a 35.6% year-over-year increase.

The Regulatory Driver

Digital regulations have evolved from data protection to comprehensive AI governance. India’s 2023 Digital Personal Data Protection Act, the EU AI Act, and dozens of national AI frameworks now require specific controls over how AI systems process citizen data. Many of these regulations effectively mandate some level of sovereign AI capability for compliance.

Organizations operating in multiple jurisdictions face a compounding challenge: they must satisfy different residency requirements, model transparency rules, and liability frameworks simultaneously. The easiest path to compliance is often to run AI workloads within sovereign infrastructure from the start.

The Economic Logic

Perhaps the most underappreciated driver is economic. When a government agency or local business uses a foreign AI platform, the economic value — the training data, the model improvements, the usage fees — flows abroad. Nations increasingly view sovereign AI as an economic development strategy: keeping AI training jobs, GPU procurement budgets, and model licensing revenue domestic.

McKinsey’s analysis estimates the sovereign AI market could reach $600 billion by 2030. That’s not just a technology market figure — it represents the economic activity that governments are competing to capture within their borders.

The 5 Pillars of a Sovereign AI Technology Stack

Building sovereign AI isn’t a single purchase decision; it’s a layered infrastructure strategy. Practitioners consistently find that nations and organizations underestimate how interdependent these layers are. A gap in any one pillar creates a vulnerability that undermines the others.

Figure 2: The five interdependent pillars required to build a complete sovereign AI capability, from compute to talent.

Compute Infrastructure: The Foundation of AI Independence

The AI compute layer is the most visible and most expensive element of sovereign AI. Training frontier AI models requires many thousands of high-end GPUs, a requirement that only a handful of nations could currently meet from domestic supply.

The cost of compute is significant. According to UBS strategists’ 2025 report, sovereign and enterprise AI will account for 17% of global AI capital expenditure in 2025 — equivalent to approximately $61 billion of the $360 billion total global AI capex. Nations investing in this layer are building national GPU clusters, partnering with hyperscalers on “sovereign regions,” and, in some cases, pursuing domestic chip design to reduce hardware dependencies.

Energy is the hidden constraint. Modern AI data centers consume extraordinary amounts of power. Nations building sovereign compute capacity must simultaneously plan for the energy infrastructure to support it — a factor that has made certain geographies (those with abundant renewable energy) natural candidates for sovereign AI data centers.

Data Governance and Local Data Residency

Data residency means that data is stored and processed within a country’s physical territory, subject to its laws. For AI, this extends beyond static storage to dynamic processing — where training jobs run, where inference happens, and who can access which dataset.

Sovereign AI data governance typically involves:

- Domestic data centers for all training and inference workloads on sensitive data

- Legal controls on cross-border data transfer

- Clear ownership rights for data generated from public sector operations

- Standardized formats that domestic AI systems can access without routing through foreign APIs

The compliance complexity is real. Data residency compliance requirements vary significantly across jurisdictions — GDPR in Europe, DPDPA in India, CCPA in California — and they continue to evolve. Many organizations discover that meeting data residency requirements for AI is considerably more complex than meeting them for traditional databases, because AI training creates derived data products that carry information about the original training set.
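Because residency for AI covers where training and inference run, not only where data is stored, a basic check has to look at jobs rather than databases. The sketch below assumes a hypothetical job inventory and made-up region names (`in-mumbai-1`, `us-east-1`); it flags sensitive jobs running outside an allowed set of regions and does not address derived data products.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIJob:
    name: str
    kind: str             # "training" or "inference"
    region: str           # where the job actually executes
    data_sensitive: bool  # touches data subject to residency rules


# Hypothetical policy: regions treated as inside the home jurisdiction.
ALLOWED_REGIONS = {"in-mumbai-1", "in-hyderabad-1"}


def residency_violations(jobs, allowed_regions=ALLOWED_REGIONS):
    """Return names of sensitive jobs that run outside the allowed regions."""
    return [
        job.name
        for job in jobs
        if job.data_sensitive and job.region not in allowed_regions
    ]


jobs = [
    AIJob("claims-model-finetune", "training", "in-mumbai-1", True),
    AIJob("claims-model-serving", "inference", "us-east-1", True),
    AIJob("public-faq-bot", "inference", "us-east-1", False),
]
violations = residency_violations(jobs)
print(violations)
```

Here the fine-tuning job is compliant, but the serving job for the same model is flagged, which is the kind of gap that a storage-only audit would miss.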

Foundation Models and Local LLM Development

The model layer is where sovereign AI ambitions often collide with economic reality. Developing a frontier large language model — the kind that competes with GPT-5 or Claude 4 — costs hundreds of millions to over a billion dollars in compute alone. That’s before factoring in research talent, data curation, and inference infrastructure.

Most nations and organizations don’t build from scratch. The practical spectrum runs from:

- Full pre-training (feasible only at a US- or China-scale level of investment, or for a handful of other well-funded nations)

- Fine-tuning open models — adapting models like Meta’s Llama 4 on domestic datasets and local languages

- Hosting and adapting existing models within sovereign infrastructure, ensuring inference stays within jurisdiction

Countries like India, France, and Malaysia have invested in multilingual foundation models trained on domestic language datasets — not to compete with OpenAI on general capability, but to ensure their populations can access AI in native languages without routing sensitive queries through foreign servers.

Software Applications and the API Layer

The application layer is often overlooked in sovereign AI discussions but is strategically significant. When domestic organizations build critical services on top of foreign APIs, they create dependencies that even sovereign infrastructure at lower layers can’t fully address.

Sovereign AI software strategy means building the application and middleware layers on top of domestically governed models and infrastructure. This creates a complete chain of custody from user interaction down to model weights — critical for sectors like defense, healthcare, and public administration.

AI Talent and Research Capability

No amount of compute investment produces a sovereign AI capability without the human infrastructure to run it. AI researchers, ML engineers, data scientists, and AI policy experts are unevenly distributed globally — concentrated in a small number of research centers and companies, mostly in the US, UK, and China.

Nations pursuing sovereign AI must simultaneously invest in compute infrastructure and build deep education pipelines. This is the slowest layer to develop — universities take years to train AI researchers, and attracting diaspora talent requires sustained policy effort. Many practitioners point to talent as the real long-term constraint on sovereign AI ambition, even more so than capital.

How 8 Nations Are Building Their Sovereign AI Strategy

National AI sovereignty strategies vary dramatically based on each country’s starting position, economic resources, and strategic priorities. The national AI ecosystems and the startups powering them look different in every geography. Here’s how eight representative nations are approaching the challenge.

India: Scale, Inclusion, and Infrastructure

India’s IndiaAI Mission, approved in 2024 with a budget of approximately $1.25 billion, represents one of the most comprehensive national sovereign AI programs in the developing world. The mission targets more than 38,000 GPUs of compute capacity, made available to domestic startups and researchers at subsidized rates.

Uniquely, India’s sovereign AI strategy explicitly prioritizes linguistic and cultural inclusion. The Bhashini initiative provides open AI services in dozens of Indian languages, ensuring that sovereign AI benefits extend beyond English-speaking urban populations. India also plans to develop indigenous AI chips by 2030 — a long-term move to reduce hardware dependencies at the foundation layer.

United Arab Emirates: Government-First AI Integration

The UAE’s National AI Strategy 2031 takes a government-operations-first approach, targeting AI integration across 38 federal government entities. In practice, this means the UAE is pursuing sovereign AI primarily as a governance modernization tool, with private sector development as a secondary goal.

The Stargate UAE initiative — a collaboration between the UAE government, OpenAI, Oracle, and G42 — illustrates a hybrid sovereignty model: securing foreign AI capability within UAE-controlled infrastructure rather than building entirely domestically. Abu Dhabi’s planned data center cluster, announced in partnership with Microsoft and other hyperscalers, demonstrates how Gulf states are using capital leverage to secure favorable sovereignty terms from global providers.

United Kingdom: Orchestration Over Dominance

The UK’s AI Opportunities Action Plan, unveiled in January 2025, takes a distinctive strategic posture: rather than trying to compete with the US or China on frontier model size, the UK is focused on controlling the “orchestration layer” — setting global standards, designing governance frameworks, and ensuring AI interoperability remains under democratic oversight.

The plan includes £500 million for a dedicated UK Sovereign AI unit, $2.7 billion committed to AI compute capacity expansion by 2030, and the establishment of AI Growth Zones — designated areas designed to attract AI-related infrastructure and research. The National Data Library, funded at over £100 million, aims to make high-quality UK datasets available to domestic researchers and companies.

France and the EU: Regulatory and Model Sovereignty

France has pursued a dual strategy through Mistral AI — a domestically developed LLM that competes globally — and active participation in the EU AI Act, which creates regulatory conditions that favor European AI sovereignty. The GAIA-X cloud initiative represents the EU’s attempt to create interoperable European sovereign cloud infrastructure that keeps data flows within European jurisdiction.

The EU’s approach is explicitly regulatory: by creating compliance requirements that are difficult for non-EU providers to fully meet, the EU creates market space for European sovereign AI providers to establish themselves.

Canada: Open-Source Sovereign Ecosystem

Canada’s approach prioritizes open-source AI infrastructure, with TELUS launching Canada’s first fully sovereign AI factory in 2025 focused on open-source model development. The Canadian strategy acknowledges that full independence on frontier models isn’t achievable without US-scale investment, and instead focuses on building a sovereign ecosystem around open and auditable AI systems.

Japan: Research-Anchored Compute Investment

Japan’s AI Strategy centers on the RIKEN Center for Advanced Intelligence Project as a research anchor, combined with significant compute investment — approximately $10 billion committed to domestic AI infrastructure through 2030. Japan’s approach is notable for its deliberate integration of AI into manufacturing and robotics, sectors where Japan already holds competitive advantages, rather than attempting to compete with the US and China on general-purpose frontier models.

The government has mandated that AI systems used in critical national infrastructure — energy, financial systems, transportation — must operate under Japanese data jurisdiction. Japan is also pursuing a semiconductor independence strategy in partnership with TSMC’s new Kumamoto fabrication facility, reducing long-term hardware dependencies that otherwise constrain sovereign AI ambitions.

Singapore: Southeast Asian AI Hub Strategy

Singapore’s National AI Strategy 2.0, launched in 2023 and expanded through 2025, positions the city-state not as a sovereign AI leader on the frontier model dimension, but as the governance and standards hub for Southeast Asian AI. Singapore’s AI Verify Framework — a governance testing toolkit for AI systems — has been adopted by multiple ASEAN nations as a compliance baseline.

Practically, Singapore has invested in significant sovereign compute capacity through its National Supercomputing Centre (NSCC), providing shared AI infrastructure to domestic researchers and enterprises. The strategy explicitly acknowledges the economic reality: Singapore’s 6 million population cannot justify a national frontier LLM investment independently, but can become indispensable by setting interoperability standards and hosting the AI governance infrastructure that larger regional economies depend on.

Saudi Arabia: Capital-Intensive Sovereign AI Buildout

Saudi Arabia represents the most capital-intensive sovereign AI program outside the US and China. Project Transcendence, backed by the Public Investment Fund (PIF) and the Vision 2030 economic diversification agenda, targets $100 billion in AI infrastructure investment over a decade — including massive sovereign data center clusters, a domestic high-performance chip design program, and substantial compute procurement agreements negotiated at the nation-state level.

A defining feature of Saudi Arabia’s approach is language-anchored AI sovereignty: the development of AceGPT and related Arabic-language large language models ensures that AI capability for Arabic speakers doesn’t require routing queries through servers in English-speaking countries. NEOM — the $500 billion smart city project — serves as a testbed for sovereign AI deployment at urban scale, with AI models governing traffic, energy, and public services under Saudi legal jurisdiction.

Sovereign AI Use Cases: Real-World Applications by Industry

Sovereign AI isn’t abstract policy — it produces concrete applications in sectors where data sensitivity, regulatory requirements, or national security considerations make foreign AI dependency unacceptable. The sovereign AI use cases emerging in 2025 and 2026 are already reshaping how regulated industries build their AI strategies.

Healthcare: Protecting Patient Data at the Model Level

Healthcare is arguably the highest-stakes domain for sovereign AI. The challenge isn’t just data storage: AI inference creates derived insights that carry information about the underlying patient data. Sending patient records through a foreign API doesn’t just expose the data in transit; depending on the provider’s terms, it may also contribute to training a foreign-controlled model.

In practice, healthcare organizations using sovereign AI approaches are deploying:

- On-premise clinical documentation AI: Transcription and summarization of consultation notes, discharge letters, and referrals — all processed on locally governed infrastructure without patient data leaving the hospital network

- Sovereign diagnostic imaging analysis: MRI, CT, and X-ray analysis running on domestic GPU clusters, with model weights and training data staying within national medical data systems

- Prior authorization and compliance AI: AI that reads payer policies and assembles evidence packages for insurance approvals, processing sensitive health records without foreign cloud involvement

- Public health surveillance: National disease monitoring and outbreak forecasting systems that aggregate data across domestic healthcare providers under national epidemiological authority

Hospitals in the EU, UK, and India are among the most advanced in deploying sovereign healthcare AI — driven by GDPR, NHS data governance frameworks, and India’s DPDPA requirements respectively.

Financial Services: Keeping Decision-Making Data Domestic

Regulated financial institutions face a particular sovereign AI challenge: the data that makes AI models most useful in finance — transaction histories, credit records, behavioral analytics — is also the data most strictly regulated for residency and access. AI in fintech faces compliance requirements that essentially mandate sovereign AI approaches for high-stakes decisions.

Sovereign AI use cases proving highest value in financial services:

- Fraud detection models: Real-time transaction analysis running within domestic infrastructure, where the behavioral patterns that define fraud are entirely derived from local customer data and never leave jurisdiction

- Compliance and conduct monitoring: AI reviewing communications for regulatory breaches (market abuse, mis-selling, conduct risk) — data that regulators require to remain within national boundaries

- Affordability checks and credit scoring: AI models making decisions about credit access must be auditable under local consumer protection laws, requiring domestically controlled model transparency

- Regulated document generation: AI-assisted generation of prospectuses, contracts, and regulatory filings where legal jurisdiction determines liability for content accuracy

Banks in the UK, EU, and Singapore are operating sovereign AI for these workloads while using public AI for lower-sensitivity tasks like customer service FAQ bots and internal productivity tools.

Defense and Intelligence: Non-Negotiable Sovereignty

For defense and intelligence agencies, sovereign AI isn’t a strategic choice — it’s an operational requirement. Any AI capability that processes classified information, mission data, or sensitive national security materials must operate without foreign access.

Defense sovereign AI use cases extend across the full spectrum of modern military operations:

- Cybersecurity threat detection and response: AI systems that analyze domestic network traffic for adversarial intrusion must process that traffic entirely within national infrastructure — the data itself constitutes sensitive intelligence

- Electronic warfare systems: AI-powered jamming, signals intelligence, and electromagnetic spectrum management in sensitive regions like the Arctic, South China Sea, and Eastern Europe

- Multi-domain situational awareness: Fusing data from GNSS, radar, satellite imagery, and sensor networks into unified operational pictures — all under domestic control

- Military intelligence analysis: Processing battlefield data, signals intercepts, and open-source intelligence through AI models with no foreign data pathway

Air-gapped sovereign AI deployments — where no network connection to external infrastructure exists — represent the highest sovereignty tier and are standard for the most sensitive defense applications.

Government and Public Administration

Public sector sovereign AI use cases are expanding rapidly, driven by citizen data protection requirements and the political sensitivity of AI-assisted government decision-making under foreign-controlled systems:

- National language AI services: Government services chatbots, document processing, and citizen communication tools running in local languages and on domestic infrastructure — critical for nations where major AI providers have poor coverage of the national language

- Tax and benefits administration: AI systems that process sensitive citizen financial data for tax assessment, benefits eligibility, and fraud detection — legally required to remain under domestic jurisdiction in most countries

- Border control and immigration: AI assisting with document verification, risk assessment, and case management — data that cannot legally be processed under foreign jurisdiction in most national security frameworks

- Infrastructure management AI: AI governing energy grids, water systems, and transportation networks — classified as critical national infrastructure in most regulatory frameworks, with corresponding data sovereignty requirements

How Organizations Can Start Building AI Sovereignty

National sovereign AI programs are government-level initiatives, but organizations — from large enterprises to public sector bodies — face analogous decisions at smaller scale. Most organizations discover that sovereign AI isn’t a binary choice but a spectrum, and the right position on that spectrum depends on their specific regulatory obligations and strategic sensitivities.

How to Assess Your Organization’s AI Sovereignty Gap

Before investing in sovereign AI infrastructure, organizations need clarity on where they actually sit on the dependency spectrum. Practitioners find that a structured assessment typically surfaces more hidden dependencies than expected.

A sovereignty gap assessment covers four dimensions:

- Critical AI use cases: Which AI systems, if disrupted or compromised, would have material operational or regulatory consequences?

- Data flow mapping: Where does training data come from, where does it go when used in AI inference, and who can access those data flows?

- Vendor dependency analysis: Which AI capabilities are single-supplier? What’s the switching cost and the disruption risk if that supplier changes pricing, availability, or access policies?

- Regulatory obligation inventory: What data residency, model transparency, and algorithmic accountability requirements apply to AI systems in the organization’s operating jurisdictions?

Organizations typically fall into four maturity levels:

- Level 1 (Cloud Dependent): All AI runs on foreign-provider cloud infrastructure with no contractual sovereignty protections

- Level 2 (Residency Assured): Cloud AI with contractual data residency guarantees; data doesn’t leave jurisdiction

- Level 3 (Hybrid Sovereign): Critical workloads run on sovereign or on-premise infrastructure; standard workloads remain cloud-based

- Level 4 (Full Stack Sovereign): All AI compute, data, and models operate under domestic control; foreign provider access strictly limited

Figure 3: The four levels of AI sovereignty maturity, showing the journey from cloud dependence to full strategic autonomy.

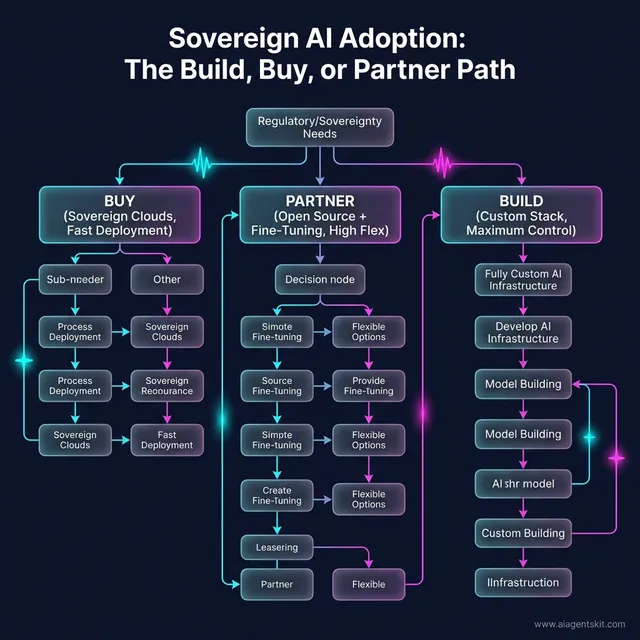
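The four maturity levels can be expressed as a simple classification over assessment answers. The sketch below is one possible reading of the level definitions: the three boolean questions and the `SovereigntyProfile` name are assumptions made for illustration, and a real assessment would draw on the four dimensions listed earlier in far more detail.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SovereigntyProfile:
    contractual_residency: bool         # provider guarantees data stays in jurisdiction
    critical_workloads_sovereign: bool  # critical AI runs on sovereign or on-premise infrastructure
    all_workloads_sovereign: bool       # compute, data, and models all under domestic control


LEVEL_NAMES = {
    1: "Cloud Dependent",
    2: "Residency Assured",
    3: "Hybrid Sovereign",
    4: "Full Stack Sovereign",
}


def maturity_level(p: SovereigntyProfile) -> int:
    """Map assessment answers to levels 1-4, checking the highest level first."""
    if p.all_workloads_sovereign:
        return 4
    if p.critical_workloads_sovereign:
        return 3
    if p.contractual_residency:
        return 2
    return 1


profile = SovereigntyProfile(
    contractual_residency=True,
    critical_workloads_sovereign=True,
    all_workloads_sovereign=False,
)
level = maturity_level(profile)
print(level, LEVEL_NAMES[level])
```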

Sovereign AI Adoption: Build, Buy, or Partner?

The build-buy-partner decision is where the AI adoption roadmap for organizations gets concrete. For most organizations, full build isn’t on the table — it’s the province of large sovereign wealth funds, major defense agencies, and a handful of well-resourced tech giants.

Build means developing AI models and infrastructure entirely in-house or through domestic research partnerships. This makes sense only for organizations with genuinely unique data assets, massive scale, and the regulatory mandate to justify the investment. Most defense agencies and national intelligence organizations fall into this category.

Buy means procuring from vendors who offer sovereign-certified AI — hyperscalers with sovereign regions (Microsoft Azure Government, AWS GovCloud, Google Public Sector), sovereign cloud providers, or domestic AI vendors who operate within local legal frameworks. This is the pragmatic path for most large enterprises and public sector organizations.

Partner combines elements of both: using open-source foundation models (Llama 4, Mistral, and similar) hosted within sovereign infrastructure, fine-tuned on domestic data by internal or domestic partner teams. This approach lets organizations leverage global AI research investment while maintaining operational sovereignty over the training and inference layer.

The evidence strongly suggests that most organizations will land in the buy-or-partner quadrant for the foreseeable future. The economics of frontier model development are too extreme for most organizations to justify going alone.

Figure 5: High-level decision matrix for organizations deciding between sovereign clouds, open-source partnerships, or custom builds.

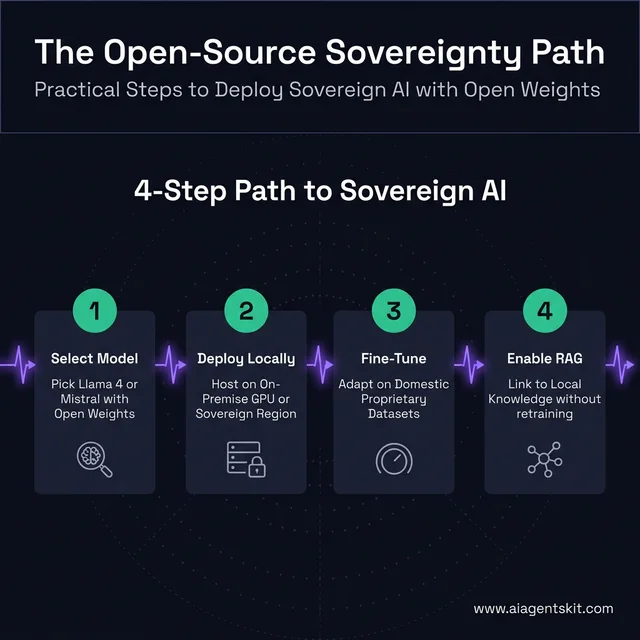

How to Build Sovereign AI with Open-Source Models

For most organizations, open-source AI is the practical gateway to meaningful sovereign AI capability. The open-source ecosystem has matured dramatically: models like Meta’s Llama 4, Mistral Large 2, Falcon 3, and DeepSeek’s open-weight releases now offer performance approaching proprietary frontier models for many domain-specific tasks — and they can be deployed entirely within sovereign infrastructure.

The open-source approach to sovereign AI follows a four-step path that most organizations can execute with realistic budgets:

Step 1 — Select a foundation model appropriate to the use case and language. Criteria include: model license terms (verify the license permits commercial use and doesn’t require data sharing with the original developer), language coverage for non-English applications, context window size for document-heavy workloads, and compute requirements relative to available infrastructure.

Step 2 — Deploy on sovereign-governed infrastructure. This means on-premise GPU servers, a private cloud within national territory, or a hyperscaler sovereign region certified for local data residency. The key requirement: no inference traffic leaves the legal jurisdiction. The model weights are downloaded once and remain on locally controlled hardware.
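One way to back the "no inference traffic leaves the jurisdiction" rule at the application layer is to refuse any inference call whose host is not on an explicit allowlist. This is a sketch only: the hostnames are hypothetical, and a check like this complements network-level egress controls rather than replacing them.

```python
from urllib.parse import urlparse

# Hypothetical in-jurisdiction inference hosts; replace with your own.
ALLOWED_INFERENCE_HOSTS = frozenset({"localhost", "llm.internal.example"})


def is_in_boundary(url, allowed_hosts=ALLOWED_INFERENCE_HOSTS):
    """Return True only if the URL's host is explicitly allowlisted."""
    host = urlparse(url).hostname  # lower-cased by urlparse; None if absent
    return host is not None and host in allowed_hosts


def require_in_boundary(url, allowed_hosts=ALLOWED_INFERENCE_HOSTS):
    """Raise before any request is sent to a host outside the allowlist."""
    if not is_in_boundary(url, allowed_hosts):
        raise PermissionError(f"inference endpoint outside sovereign boundary: {url}")
    return url
```

Calling `require_in_boundary(url)` immediately before each inference request makes a misconfigured endpoint fail loudly instead of silently sending prompts abroad.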

Step 3 — Fine-tune on domestic datasets. Most sovereignty value comes from adaptation to local context: fine-tuning on the organization’s own documents, data, and domain improves performance while ensuring the model reflects domestically generated knowledge. For most organizations, fine-tuning via LoRA (Low-Rank Adaptation) on a modest domestic GPU cluster is achievable at a fraction of full pre-training cost.
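Much of the cost gap comes from how few parameters LoRA trains. For a weight matrix of shape d_out × d_in, LoRA freezes the original weights and trains two low-rank factors of shapes d_out × r and r × d_in, so the trainable count drops from d_out · d_in to r · (d_out + d_in). The sketch below works through one case; the 4096 × 4096 layer and rank 8 are illustrative values, not the dimensions of any specific model.

```python
def full_params(d_out, d_in):
    """Parameters updated when fine-tuning the whole weight matrix."""
    return d_out * d_in


def lora_params(d_out, d_in, rank):
    """Parameters trained by LoRA: factors B (d_out x r) and A (r x d_in)."""
    return rank * (d_out + d_in)


d_out, d_in, rank = 4096, 4096, 8        # illustrative values only
full = full_params(d_out, d_in)          # 16,777,216
lora = lora_params(d_out, d_in, rank)    # 65,536
print(f"LoRA trains {lora / full:.2%} of this layer's weights")  # 0.39%
```

Parameter count is only one cost driver. Data preparation and evaluation effort still apply, but the reduction is why a modest GPU cluster is often sufficient.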

Step 4 — Implement RAG for dynamic knowledge. Retrieval-Augmented Generation (RAG) connects the sovereign model to internal documents, databases, and knowledge bases without requiring continuous retraining. RAG is the cost-effective path for most enterprise sovereign AI deployments — using open-source models with RAG can reduce operational expenses by up to 70% compared to proprietary API dependence, according to multiple cost analyses from 2025.
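The retrieval step can be illustrated without any external services. The sketch below ranks documents by word-overlap cosine similarity and assembles a prompt for a locally hosted model. Production systems typically use an embedding model and a vector index instead, and the sample documents are invented for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lower-case the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two term-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=2):
    """Place the retrieved passages ahead of the question."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Invented internal documents for illustration.
docs = [
    "Data residency policy: patient records stay in-country.",
    "Cafeteria menu for Friday.",
    "GPU cluster maintenance window is Sunday night.",
]
print(build_prompt("Where must patient records stay?", docs, k=1))
```

Because the documents are looked up at query time, updating the knowledge base changes the model's answers without any retraining.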

For the highest-sensitivity workloads, air-gapped deployment takes sovereignty further: the entire system operates on hardware physically isolated from external networks. No internet connection, no software update channel, no usage telemetry. This adds operational complexity but provides the highest level of assurance for defense, intelligence, and classified government applications.

Figure 4: A practical four-step workflow for organizations to achieve sovereignty using open-source models and RAG.

What Are the Real Challenges of Sovereign AI?

Sovereign AI is strategically compelling and often politically attractive — but practitioners who’ve worked through implementations encounter obstacles that policy documents rarely acknowledge.

Cost and resource concentration: Training a frontier AI model today costs up to $1 billion in compute alone, according to multiple third-party analyses aggregated by McKinsey. Infrastructure investment — data centers, power, cooling, networking — adds hundreds of millions more. Sovereign AI that’s genuinely competitive at the frontier is accessible only to a small number of nations and organizations globally.

The talent paradox: AI researchers cluster in a small number of ecosystems — US tech companies, UK research labs, Chinese state programs. Building a sovereign AI capability in a country without established AI talent is circular: you need AI expertise to train AI models, but you need sovereign AI success to attract AI experts. Many nations are trying to solve this by establishing favorable immigration pathways for AI talent and creating incentive structures to retain domestic researchers who might otherwise leave for Silicon Valley.

Fragmentation risk: As sovereign AI programs proliferate, global AI interoperability becomes more complex. Different sovereign platforms may produce incompatible model formats, conflicting API standards, and competing governance frameworks. For global organizations, this means managing compliance with multiple sovereign AI regimes simultaneously — a significant operational overhead.

Even among experts, there’s debate about whether full technological stack independence is achievable or even desirable for smaller nations. The semiconductor supply chain illustrates the challenge: even “sovereign” AI infrastructure typically depends on NVIDIA chips manufactured with equipment from dozens of countries. True end-to-end AI sovereignty is theoretically achievable but practically out of reach for most participants.

The vendor lock-in paradox: Sovereign AI is partly motivated by a desire to escape vendor lock-in to major US cloud providers. But pursuing sovereign AI through hyperscaler “sovereign regions” — a common pragmatic choice — replaces one form of dependency with another. Organizations should examine whether their chosen sovereignty path genuinely reduces strategic risk or simply moves the dependency to a different layer.

Innovation speed: Nations and organizations investing heavily in sovereign AI infrastructure may find that the global frontier advances faster than they can keep up. The resources consumed building and maintaining sovereign capability are resources not available for frontier AI research. The organizations getting the most out of sovereign AI tend to accept this tradeoff explicitly: they’re not trying to win the frontier race, they’re ensuring resilience and regulatory compliance on the capabilities they need.

Sovereign AI Cost and ROI: What Organizations Actually Spend

Decision-makers approaching sovereign AI for the first time consistently ask the same question: what does this actually cost, and what do organizations get back? The cost picture is wide-ranging — from sub-$100K open-source deployments to billion-dollar national programs — but ROI data from 2025 implementations is starting to quantify the returns.

What Sovereign AI Implementations Cost

Costs vary dramatically based on the depth of sovereignty pursued:

Entry-level sovereign AI (open-source + hosted inference): $50,000–$250,000 for initial implementation, covering GPU server procurement or sovereign cloud hosting, open-source model deployment, and data integration. Ongoing operational costs typically run $10,000–$50,000 per month depending on inference volume.
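Combining the two ranges gives a rough first-year envelope for an entry-level deployment. The calculation below simply adds twelve months of operating cost to the initial outlay, using the figures quoted above. It leaves out staffing and data preparation, which are covered separately.

```python
def first_year_cost(initial, monthly, months=12):
    """Initial implementation cost plus operating cost over the period."""
    return initial + monthly * months


low = first_year_cost(50_000, 10_000)     # 170,000
high = first_year_cost(250_000, 50_000)   # 850,000
print(f"Entry-level first-year range: ${low:,} to ${high:,}")
```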

Domain-specific fine-tuning project: Add $100,000–$380,000 in data readiness costs — collection, cleaning, labeling, and integration — which independent AI implementation research consistently identifies as the largest hidden cost in AI projects. The software and compute often cost less than getting the data ready.

Enterprise-grade sovereign AI platform: $500,000–$5 million for organizations building comprehensive sovereign capability, including dedicated GPU compute, data governance infrastructure, model development pipelines, and security certification. Talent costs — AI specialists command $100,000–$300,000 in annual compensation — typically represent the largest ongoing expense.

Sovereign cloud TCO premium: Organizations running sovereign-certified cloud regions (Azure Government, AWS GovCloud, or equivalent) pay approximately 20–40% higher total cost of ownership than equivalent standard cloud configurations, according to infrastructure cost analyses. This premium covers the additional security certification, dedicated staff clearance requirements, and geographic constraint overhead.
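To see what the premium means in budget terms, apply the 20–40% range to a baseline. The $1,000,000 standard-cloud baseline here is a hypothetical figure chosen for round numbers.

```python
def sovereign_tco_range(baseline, low_pct=20, high_pct=40):
    """Apply the premium range (whole percent) to a standard-cloud baseline."""
    low = baseline * (100 + low_pct) // 100
    high = baseline * (100 + high_pct) // 100
    return low, high


baseline = 1_000_000  # hypothetical annual standard-cloud TCO in dollars
tco_low, tco_high = sovereign_tco_range(baseline)
print(f"Sovereign-region TCO: ${tco_low:,} to ${tco_high:,}")
```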

National-program scale: Full national sovereignty programs — building domestic compute clusters, training foundation models, establishing data governance infrastructure — range from $1 billion (India’s IndiaAI Mission) to $100 billion (Saudi Arabia’s Project Transcendence) over multi-year timelines.

What Organizations Get Back

ROI from sovereign AI comes through four channels:

Compliance risk avoidance: The EU AI Act allows fines of up to 7% of global annual turnover (or €35 million, whichever is higher) for the most serious violations. GDPR fines for data residency breaches have reached hundreds of millions. An organization with $1 billion in revenue that avoids a single significant compliance action through sovereign AI infrastructure can justify substantial investment on risk avoidance alone.
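The risk-avoidance argument can be made concrete with a break-even calculation. The sketch below takes the 7% ceiling and the $1 billion revenue example from the text, then asks how likely a maximum fine would need to be for a given investment to pay for itself on that basis alone. The $5 million investment is an assumption taken from the top of the enterprise platform range quoted earlier, and real penalties depend on the violation and the regulator.

```python
def max_fine(annual_turnover, rate_pct=7):
    """Upper bound on a turnover-based fine, in the same currency units."""
    return annual_turnover * rate_pct // 100


def break_even_probability(investment, exposure):
    """Probability of the fine at which expected loss equals the investment."""
    return investment / exposure


exposure = max_fine(1_000_000_000)                  # 70,000,000
p = break_even_probability(5_000_000, exposure)     # 5M / 70M
print(f"Exposure ${exposure:,}; break-even probability {p:.1%}")
```

On these assumptions, the investment is justified on compliance grounds alone if the organization judges the chance of a maximum penalty to be above roughly one in fourteen.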

Operational efficiency: Enterprises report an average return of $3.70 for every $1 invested in AI, with 74% achieving positive ROI within the first year of deployment, based on aggregated enterprise AI outcome data. Sovereign AI’s additional benefit is that efficiency gains from proprietary data remain entirely internal — no training data contribution to a foreign model.

Strategic ROI multiplier: Research compiled by HPCWire in 2025 found that organizations treating AI and data sovereignty as mission-critical priorities achieve up to 5× higher ROI in innovation metrics, operational efficiency, and long-term competitive positioning compared to organizations that treat AI purely as an operational tool with no sovereignty considerations.

Vendor leverage: Organizations with sovereign AI capability — especially those with their own fine-tuned models — hold significantly better negotiating positions with AI vendors. They can credibly walk away from pricing increases or terms-of-service changes that disadvantage them. This leverage is difficult to quantify but consistently cited by enterprise AI leaders as a strategic benefit of sovereignty investment.

The organizations finding the clearest sovereign AI ROI are those that start with a specific, high-value use case in a regulated domain — healthcare claims processing, financial compliance monitoring, government document handling — rather than attempting broad infrastructure investment without a concrete workload anchor.

Sovereign AI Vendors and Solutions: What to Evaluate in 2026

The sovereign AI vendor landscape has matured significantly. Organizations no longer face a binary choice between full DIY and full foreign-provider dependence. Several credible sovereign AI solution categories have emerged.

Hyperscaler Sovereign Regions

All three major hyperscalers now offer dedicated sovereign infrastructure designed to meet national data residency requirements:

- Microsoft Azure Government / EU Data Boundary: Azure Government provides FedRAMP-authorized sovereign infrastructure in the US. Azure’s EU Data Boundary initiative extends similar residency guarantees to European customers, with data processing commitments under EU jurisdiction.

- AWS GovCloud (US) / AWS European Sovereign Cloud: AWS GovCloud is fully isolated from standard AWS infrastructure, operated by US persons, and designed for US government workloads under ITAR and EAR. AWS European Sovereign Cloud (launched 2025) provides analogous residency guarantees for EU-regulated workloads.

- Google Public Sector / Google Cloud Sovereign Solutions: Google’s public sector cloud offers dedicated sovereign regions for government clients, with Access Transparency controls that log all Google personnel access to customer data.

The advantage of hyperscaler sovereign regions: access to the full cloud application ecosystem within a sovereignty-compliant wrapper. The limitation: the infrastructure and model layers remain under foreign corporate control, which may not satisfy the most demanding sovereignty definitions.

Purpose-Built European Sovereign Cloud

Europe has the most developed ecosystem of purpose-built sovereign cloud providers, driven by GDPR compliance demand and the EU’s GAIA-X initiative for interoperable European data infrastructure:

- NexGen Cloud (UK-based): Focuses specifically on sovereign AI infrastructure, providing high-performance GPU compute within UK jurisdiction for AI training and inference workloads

- OVHcloud (French): Europe’s largest independent cloud provider, offering sovereign cloud solutions with full French and EU legal jurisdiction

- T-Systems Open Telekom Cloud (German): Sovereign cloud infrastructure operated by Deutsche Telekom’s enterprise division, with German data jurisdiction and BSI security certification

- IONOS (German): Provides European sovereign cloud with a focus on managed sovereignty for mid-market organizations

Open-Source AI Hosting Platforms

For organizations pursuing the open-source path to sovereignty:

- Hugging Face Inference Endpoints: Allows deploying open-source models on infrastructure within specific geographic regions (EU, US, Asia-Pacific), giving organizations control over where inference runs while leveraging Hugging Face’s model optimization tooling

- Ollama (self-hosted): The easiest path to running open-source models entirely on local hardware, with no cloud dependency whatsoever. Suitable for organizations with existing on-premise GPU infrastructure

- vLLM (self-hosted inference server): Production-grade open-source inference framework for organizations deploying sovereign AI at scale on dedicated hardware
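As an illustration of the self-hosted path, the sketch below builds a request for a locally running Ollama server using only the Python standard library. It assumes Ollama's default local address (`http://localhost:11434`) and its `/api/generate` endpoint; the model name passed in is a placeholder for whatever model has been pulled locally.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local address


def build_generate_request(model, prompt, url=OLLAMA_URL):
    """Build (but do not send) a non-streaming generate request."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model, prompt, timeout=60):
    """Send the request to the local server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

With a server running and a model pulled, `generate("mistral", "Summarize our data residency policy.")` returns the model's text, and the request never leaves the machine.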

Evaluation Criteria for Sovereign AI Vendors

When assessing any sovereign AI vendor, practitioners should evaluate against these sovereignty-critical criteria:

- Data residency guarantee: Is data processing contractually guaranteed to stay within the required legal jurisdiction? What happens if the vendor operates across borders?

- Model ownership: Who owns the model weights? Does the vendor have any right to use inference data for training?

- Personnel access controls: Are there requirements for locally cleared or nationally resident personnel to access systems? What are the audit mechanisms?

- Audit and logging: Can the organization independently audit all access to its AI infrastructure and data? Are logs tamper-resistant?

- Exit portability: Can the organization extract all data, models, and configurations without vendor cooperation? Vendor lock-in that survives a sovereignty dispute isn’t sovereignty.

- Regulatory certification: Which specific certifications does the solution hold (FedRAMP, BSI C5, IRAP, or equivalent), and do those certifications match the organization’s actual regulatory obligations?
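One way to apply these criteria consistently is to record them as pass/fail gates rather than weighted scores, so a single failure on something like exit portability cannot be averaged away. The structure below is a sketch; the key names and the sample assessment are invented for illustration.

```python
CRITERIA = (
    "data_residency_guarantee",
    "model_ownership",
    "personnel_access_controls",
    "audit_and_logging",
    "exit_portability",
    "regulatory_certification",
)


def unmet_criteria(assessment):
    """Return criteria the vendor fails; unanswered criteria count as failures."""
    return [c for c in CRITERIA if not assessment.get(c, False)]


# Hypothetical assessment of a fictional vendor.
vendor = {
    "data_residency_guarantee": True,
    "model_ownership": True,
    "personnel_access_controls": True,
    "audit_and_logging": True,
    "exit_portability": False,
    # "regulatory_certification" not yet answered
}
print(unmet_criteria(vendor))
```

Counting unanswered criteria as failures keeps gaps in vendor documentation visible instead of letting them pass by default.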

Sovereign AI: Frequently Asked Questions

What is sovereign AI and why does it matter?

Sovereign AI is a nation’s or organization’s ability to develop, deploy, and control AI systems using infrastructure, data, and talent within its own legal jurisdiction — without dependence on foreign entities. It matters because AI has become a critical strategic resource: disruptions to AI access, data exfiltration, or loss of algorithmic control can have serious consequences for national security, economic competitiveness, and regulatory compliance. As AI becomes embedded in critical infrastructure, the ability to operate AI independently from geopolitical disruptions becomes essential.

How is sovereign AI different from cloud AI?

Cloud AI provides convenient, scalable access to AI capabilities through shared infrastructure owned and operated by companies like Google, AWS, and Microsoft. Sovereign AI prioritizes control and jurisdiction over convenience: the compute runs in locally governed data centers, the data stays within national borders, and the models are either locally developed or hosted under sovereign governance terms. An organization can use cloud AI and achieve sovereign AI compliance simultaneously — if the cloud services are provided through a sovereign region certified for local data residency.

Which countries are leading in sovereign AI development?

The United States and China lead on pure AI capability and compute investment. Among nations building explicitly sovereign AI programs, the UAE, India, France, the UK, and Canada have the most mature and well-funded strategies as of 2026. Japan, South Korea, Singapore, and Saudi Arabia are also active investors. The EU is advancing sovereign AI through regulatory frameworks (EU AI Act) and infrastructure initiatives (GAIA-X) rather than purely through model development.

Is sovereign AI too expensive for smaller nations?

Full frontier sovereign AI is out of reach for most smaller nations — developing a competitive large language model requires hundreds of millions to billions of dollars. However, a meaningful sovereign AI capability doesn’t require competing at the frontier. Smaller nations can build sovereignty at the data and inference layer by: hosting fine-tuned versions of open-source models (Llama 4, Mistral) within domestic infrastructure; establishing domestic regulatory frameworks for AI that apply to foreign-provider deployments; and investing in domain-specific models for priority sectors like healthcare, education, and public administration.

What industries benefit most from sovereign AI?

Industries with the highest sensitivity to data breach, regulatory exposure, and geopolitical risk benefit most from sovereign AI: defense and intelligence (highest sensitivity), healthcare and genomics (patient data sovereignty), finance and banking (regulatory and intellectual property), critical infrastructure (energy, water, telecommunications), and government administration (citizen data, policy decision-making). Commercial industries where competitive advantage derives from proprietary data — pharma R&D, advanced manufacturing — also see significant sovereign AI value.

What is the difference between data sovereignty and AI sovereignty?

Data sovereignty refers to the legal authority over data: who controls where it’s stored, under which laws it’s governed, and who can access it. AI sovereignty extends this concept to the entire AI lifecycle: not just where data lives, but how it’s used to train models, where training jobs run, who can observe what models have learned, and how AI-generated insights are managed. An organization can have strong data sovereignty — keeping data within its borders — while having weak AI sovereignty, if AI training and inference run through foreign-operated systems that learn from that data.

How does sovereign AI protect against geopolitical risks?

When AI infrastructure crosses national boundaries, it creates vulnerabilities. An adversarial nation could restrict access to AI chips needed for training, impose sanctions on AI providers, require model backdoors as a condition of market access, or simply discontinue service. Sovereign AI reduces these risks by ensuring that critical AI capabilities can continue operating without foreign platform access. This doesn’t mean total isolation — most sovereign AI programs still participate in global AI research — but it means that core operational capabilities don’t depend on foreign goodwill.

Can organizations build sovereign AI without a national program?

Yes — organizations can pursue AI sovereignty independently of government programs. The practical approach involves: running AI inference on locally hosted infrastructure (on-premise servers or sovereign-certified private cloud); using open-source models that the organization controls, audits, and fine-tunes; establishing contractual data residency guarantees with any cloud AI providers used; and maintaining comprehensive logging of how AI systems process sensitive data. Many enterprises in regulated industries have been building these capabilities for years, predating the “sovereign AI” terminology.

What is a sovereign cloud and how does it relate to sovereign AI?

Sovereign cloud refers to cloud infrastructure operated under local legal jurisdiction, typically subject to domestic data residency regulations and accessible only by personnel meeting local clearance requirements. Sovereign cloud is a necessary but not sufficient component of sovereign AI: an organization can run AI workloads on sovereign cloud infrastructure while still depending entirely on foundation models trained and owned by foreign providers. Full sovereign AI requires sovereign cloud plus domestically governed model development or local model hosting.

How long does it take to build sovereign AI capabilities?

Timelines depend on the layer being built. Standing up sovereign cloud infrastructure takes established cloud providers 12–24 months. Developing a domain-specific fine-tuned model from an open-source base takes 3–12 months, depending on data availability and compute. Building a frontier-competing large language model from scratch takes 3–7 years, assuming adequate funding and talent. Developing the full sovereign AI stack (compute, data governance, model development, talent pipeline) to meaningful capability takes 5–10 years for the most ambitious national programs. The UK, India, and UAE all have strategies that acknowledge this multi-year timeline.

What are the best sovereign AI vendors for enterprises?

Enterprise organizations have three credible paths: hyperscaler sovereign regions (Microsoft Azure Government, AWS GovCloud, Google Public Sector) for organizations that need the full cloud ecosystem with residency compliance; purpose-built European sovereign cloud providers (OVHcloud, T-Systems, NexGen Cloud) for EU-regulated organizations requiring the strictest data jurisdiction; and self-hosted open-source deployments using Ollama or vLLM on dedicated on-premise infrastructure for organizations needing air-gapped or maximum-cost-efficiency deployments. Evaluation should focus on data residency guarantees, model ownership terms, personnel access controls, audit capabilities, and exit portability — not just headline features.

How is sovereign AI different from private AI?

Private AI and sovereign AI are related but distinct concepts. Private AI means deploying AI within an organization’s own controlled infrastructure — keeping data within the organization’s security perimeter. Sovereign AI operates at a higher level: it ensures the entire AI stack (infrastructure, data, models, operations) aligns with national laws, regulatory mandates, and strategic interests. An organization can run private AI on infrastructure in another country — which gives data security without national sovereignty. Sovereign AI requires the infrastructure, data, and governance to be subject to domestic legal jurisdiction. In practice, sovereign AI deployments are typically private AI deployments that additionally meet national sovereignty criteria.

Conclusion

Sovereign AI has moved from a policy discussion to a capital allocation decision. Governments are committing billions to compute infrastructure. Enterprises are auditing their AI dependencies for regulatory and strategic risk. The sovereign AI market, projected at $600 billion by 2030 according to McKinsey, reflects not just government investment but the collective bet that AI independence is worth the significant cost and complexity involved.

The most important insight practitioners take from serious sovereign AI analysis is that sovereignty isn’t binary. Nations and organizations don’t choose between full dependence and full independence — they choose which layers of the AI stack to control given their resources, regulatory obligations, and risk tolerance. A government healthcare agency might need Level 4 sovereignty for patient data AI while accepting Level 2 for internal productivity tools.

The nations and organizations building sovereign AI capabilities today aren’t just protecting against today’s risks. They’re positioning themselves for a world where AI is as strategically critical as energy independence or financial system control. Understanding the future trajectory of AI autonomy is essential for anyone making infrastructure or policy decisions that will shape the next decade.

The sovereign AI race is well underway. The question now isn’t whether to engage with it — it’s where to start.