How to Become an AI Prompt Engineer in 2026

Learn how to become an AI prompt engineer in 2026. Covers salary ($109K–$216K+), skills, tools, a 12-month roadmap, job descriptions, certifications, and context engineering.

The fastest-growing AI skill of the past three years doesn’t belong to machine learning engineers or data scientists. It belongs to the practitioners who know how to make AI models behave — precisely, reliably, and at scale. Mastering prompt engineering basics is now the entry point to an entirely new category of AI career that didn’t exist five years ago.

The market signal is clear. According to Gartner’s research, 80% of engineering teams will need to upskill due to generative AI by 2027, with prompt engineering and retrieval-augmented generation listed as priority competencies. The question isn’t whether this skill matters — it’s how to build it systematically.

This guide covers exactly what a prompt engineer does in 2026, the honest market reality, the seven skills that separate competent practitioners from great ones, and a phase-by-phase 12-month roadmap for getting there.

What Does a Prompt Engineer Actually Do in 2026?

The job description has evolved considerably since the role first appeared in 2022. A prompt engineer isn’t just someone who writes creative queries — that framing was always too narrow. The fuller picture involves designing AI interactions systematically, evaluating model behavior across edge cases, building reusable prompt libraries, and increasingly, orchestrating how AI agents receive and process context across complex multi-step workflows.

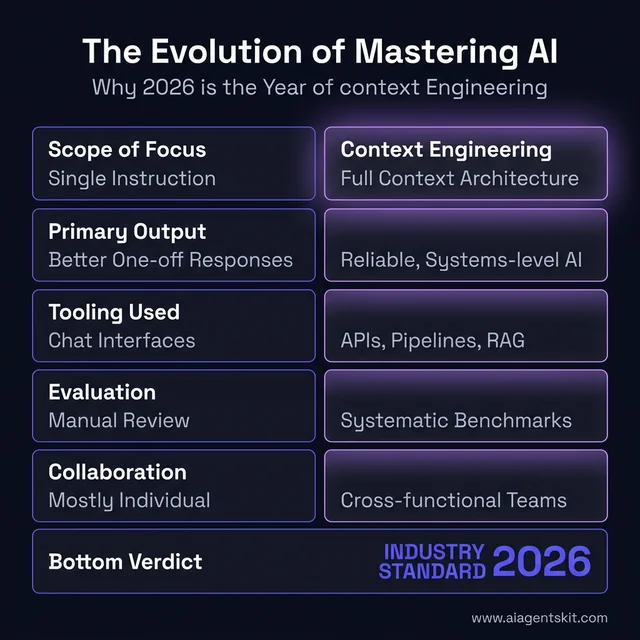

Researchers and practitioners increasingly draw a distinction between traditional prompt engineering and the broader discipline now called “context engineering.” Where prompt engineering focuses on the individual instruction sent to a model, context engineering encompasses everything the model sees — system instructions, retrieved documents, tool outputs, conversation history, memory states, and structured schemas. The most sophisticated prompt engineering roles in 2026 operate at this systems level, not just at the level of individual prompts.

How the Role Has Evolved Since 2023

| Aspect | 2023 Prompt Engineering | 2026 Context Engineering |

|---|---|---|

| Scope | Craft individual prompts | Design full context architecture |

| Output | Better single responses | Reliable, repeatable AI systems |

| Tooling | Chat interfaces | APIs, pipelines, agent frameworks |

| Evaluation | Manual output review | Systematic metrics and benchmarks |

| Collaboration | Mostly individual | Cross-functional (dev, product, data) |

The evolution of mastering AI: From simple prompt engineering to complex context architecture.

What does this look like day-to-day? A typical workflow might involve reviewing overnight AI output logs to catch regressions, testing new prompt variables against a benchmark test suite, debugging why a customer support agent began giving incorrect escalation responses, and meeting with a product team to scope a new document summarization feature. Afternoons often include documenting effective prompt patterns in a shared knowledge base, running training sessions for non-technical teams, and experimenting with the latest model capabilities from GPT-5, Claude 4, or Gemini 3.

The best practitioners in this field approach each challenge the way system prompt design experts do — with a systematic, hypothesis-driven mindset. They treat each prompt like a test case, not a creative exercise. They establish clear success criteria, run controlled comparisons, and document findings precisely enough that colleagues can reproduce results months later.

What surprises many practitioners is how much of the job involves organizational and communication skills rather than purely technical work. Explaining AI limitations to stakeholders who want magic outcomes, training cross-functional teams on effective prompt patterns, and translating vague business requirements into testable AI constraints — these consume a significant portion of experienced practitioners’ time.

A Prompt Engineer’s Day in the Life

The 9-to-5 reality looks nothing like what most career overviews describe. A snapshot of a typical day at a mid-sized tech company in 2026:

Morning (9–12)

- Log review (30 min): Pull overnight AI output reports. Flag any responses that triggered quality guardrails or confidence scores below threshold. Classify failures by type: hallucination, refusal, format error, or context drift.

- Prompt testing sprint (2 hrs): Run controlled A/B tests on two prompt variations for a new product feature. Use Promptfoo to run 50+ test cases automatically, review failures, adjust system prompt variables, and document results with before/after metrics in a shared Notion doc.

- Stakeholder sync (30 min): Brief the product manager on why last week’s customer email AI started generating off-brand responses. Translate the technical root cause (context window overflow during threading) into plain language.

Afternoon (1–5)

- Deep work — prompt architecture (2 hrs): Design the context pipeline for a new internal knowledge assistant. Decide chunking strategy for the document retrieval layer, write and test system prompts, define fallback behavior when retrieval confidence is low.

- Cross-team collaboration (1 hr): Pair with two software engineers integrating the prompt pipeline into the product API. Explain token budget constraints and why certain context elements take priority.

- Documentation and learning (1 hr): Update the team’s internal prompt pattern library with this week’s learnings. Read one paper or technical blog post on evaluation methodology.

Tools open throughout the day: OpenAI Playground, Anthropic Console (Claude), Promptfoo (testing), LangSmith (tracing), Python IDE (pipeline scripts), GitHub (version control for prompts and configs), Notion or Confluence (documentation).

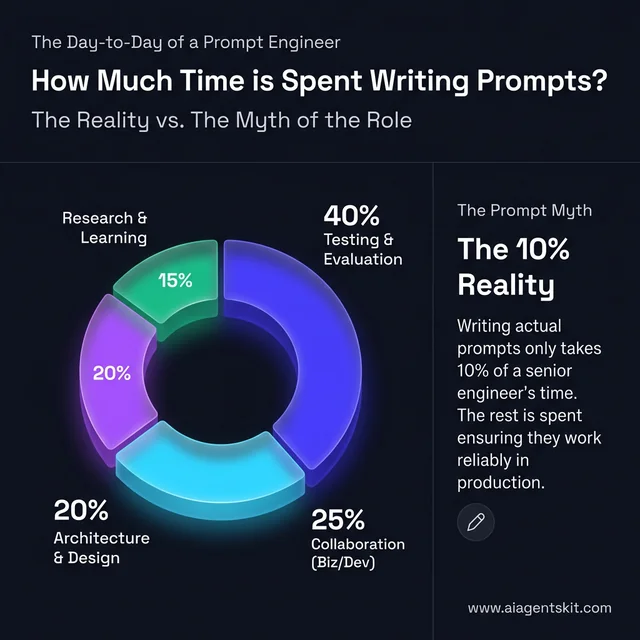

The job is roughly 40% testing and evaluation, 25% documentation and collaboration, 20% design and architecture, and 15% research and experimentation. Writing individual prompts is maybe 10% of total time — the rest is building systems that make prompts reliable.

How modern prompt engineers actually spend their time: Testing and evaluation dominate the workflow.

Is Becoming a Prompt Engineer Worth It in 2026?

The honest answer requires acknowledging both sides of a genuinely mixed picture. The skill is extraordinarily valuable; the specific job title is evolving in ways that require strategic thinking about career positioning.

What the Data Actually Shows

The market numbers are compelling. According to ZipRecruiter’s March 2026 data, the average annual salary for an AI prompt engineer in the United States stands at $136,407, with top earners reaching $192,000. Glassdoor salary data places the median total compensation at $126,000, with senior practitioners earning up to $216,000 annually. Entry-level professionals with strong portfolios can expect $85,000–$120,000 at market entry.

The structural demand signal is equally strong. Gartner predicts over 80% of enterprises will have deployed generative AI by 2026, up from less than 5% in 2023 — creating massive demand for practitioners who can make these systems work reliably in production. By 2027, the same firm projects 75% of hiring processes will incorporate AI proficiency testing, making this skill set increasingly visible in formal evaluation frameworks.

McKinsey’s State of AI research shows that nearly four in ten organizations plan to reskill more than 20% of their workforce in AI competencies. That reskilling creates internal demand — companies need people who can design and run those training programs, not just write prompts themselves.

The most in-demand AI skills currently reward practitioners who understand the most in-demand AI skills across the full stack, rather than treating prompt engineering as an isolated specialty.

The Job Title Reality

Here’s the counterpoint that most career guides skip. A Microsoft-commissioned survey ranked “Prompt Engineer” second-to-last among new roles companies plan to add in the next 12–18 months. LinkedIn’s data from early 2026 shows only approximately 82 active “Prompt Engineer” job postings in the US at any given time — modest for a supposedly in-demand role.

The explanation isn’t that the skill is dying. It’s that prompt engineering is following the path of earlier foundational skills: spreading horizontally across many roles instead of concentrating in a single title. Think of how “webmaster” largely vanished in the early 2000s, but web development, content management, and SEO became distinct careers each larger than the original role ever was.

The most in-demand AI roles in 2026 increasingly embed prompt engineering competency inside titles like “AI Engineer,” “Applied AI Specialist,” “LLM Product Engineer,” and “AI Automation Specialist.” Organizations are training product managers, software engineers, and domain experts in prompting rather than hiring separate teams of prompt specialists.

The practical takeaway: the skill is more valuable than ever, and the job market is wider than the narrow title suggests. Organizations that have committed to AI fluency programs find that practitioners who combine strong prompting skills with domain expertise or technical capabilities consistently command higher compensation than those treating prompt engineering as their sole professional identity.

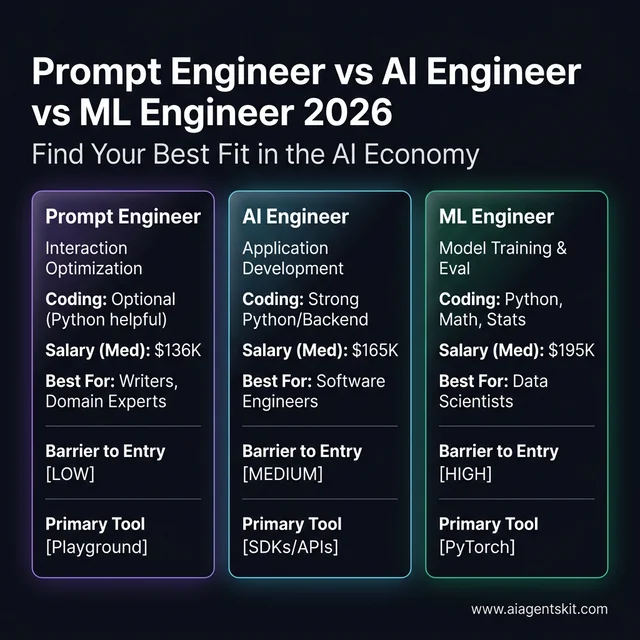

Prompt Engineer vs. AI Engineer vs. ML Engineer: Which Role Fits?

Most career guides treat these three titles interchangeably — they aren’t. Understanding the distinctions helps candidates target the right roles and frame their skills accurately. The lines are blurring in 2026 as all three increasingly overlap in agentic AI development, but the differences in day-to-day work and skill requirements remain meaningful.

For a deeper exploration of these overlapping roles, the complete guide to AI Engineer vs. ML Engineer differences covers how each role is evolving and where they converge.

| Dimension | Prompt Engineer | AI Engineer | ML Engineer |

|---|---|---|---|

| Primary Focus | Optimizing how models receive and process information | Building production AI-powered applications | Training, evaluating, and optimizing machine learning models |

| Core Activity | Prompt design, context architecture, evaluation, red teaming | API integration, agent development, MLOps, system design | Dataset preparation, model training, algorithm optimization |

| Coding Required | Optional (Python helpful) | Strong Python + backend | Strong Python + math/statistics |

| Barrier to Entry | Lowest | Medium | Highest |

| Salary Range (US) | $85K–$216K | $110K–$280K | $110K–$300K |

| Where They Work | AI product teams, AI labs, enterprises | AI product companies, integration shops | AI research labs, enterprise ML platforms |

| Best Background | Writing, domain expertise, QA, or technical | Software engineering + AI curiosity | Math, statistics, data science |

Choosing your path: Comparing skills, salaries, and barrier to entry across 2026's top AI roles.

How the Roles Overlap in Practice

In 2026, many practitioners hold hybrid positions. An AI Engineer building a customer support agent will necessarily write and test prompts — doing prompt engineering as part of a broader role. An ML Engineer fine-tuning domain-specific models needs to understand prompting to evaluate instruction-following quality. The practitioner who understands all three disciplines is increasingly the most valuable person in any AI product team.

The distinction that matters most for career decision-making: Prompt Engineers and AI Engineers can both enter the field without formal ML training. ML Engineers typically require 2–3 years of foundational study before becoming productive contributors. That distinction in time-to-value drives most career choice decisions for people coming from non-technical backgrounds.

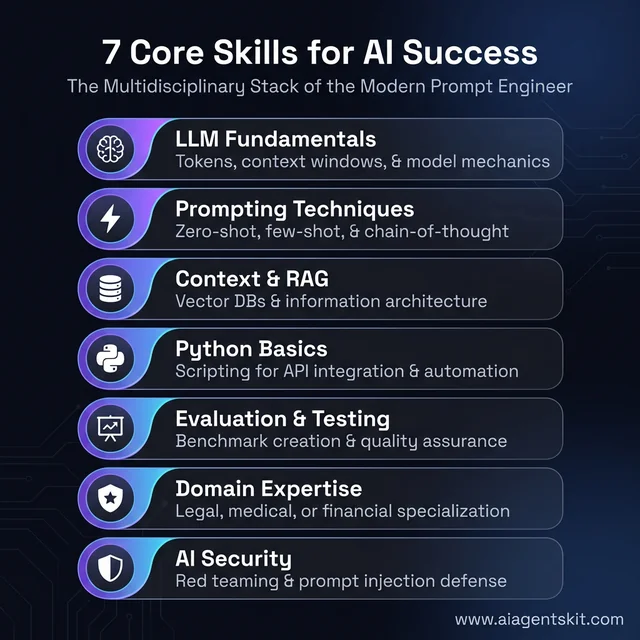

7 Core Skills Prompt Engineers Need to Master

Competent practitioners don’t develop these skills sequentially — they build them in parallel, with foundational capabilities enabling the more advanced ones. Here’s what the actual job market rewards, ordered by priority for career impact.

1. LLM Fundamentals

Understanding how large language models work isn’t optional for serious practitioners. The core concepts — tokens, context windows, temperature, top-p sampling, and the distinction between instruction-tuned and base models — directly inform how practitioners design effective prompts and diagnose failures when outputs go wrong.

This means understanding why Claude 4’s 200K context window changes what’s possible for document analysis, why GPT-5’s architecture involves different performance tradeoffs, and why a temperature setting of 0.0 versus 0.9 produces fundamentally different output characteristics for different use cases.

2. Prompting Techniques

Mastery of the core technique set is non-negotiable for any practitioner positioning themselves for career opportunities:

- Zero-shot prompting: Direct instruction without examples — effective when tasks align closely with model training

- Few-shot prompting: Providing 2–5 examples to establish the desired pattern — dramatically improves output consistency for specialized tasks

- Chain-of-thought prompting: Forcing step-by-step reasoning — transforms complex reasoning accuracy significantly

- System prompts: Persistent instructions that define context, role, and constraints for an entire interaction

- Role prompting: Assigning expert personas to leverage model training associations

Practitioners fluent in chain-of-thought techniques consistently produce more reliable outputs on complex reasoning tasks — a measurable performance difference that translates directly into demonstrable business value on portfolio projects.

3. Context Engineering and RAG

By 2026, the most sophisticated prompt engineering roles involve what’s properly called context engineering: designing the complete information architecture an AI model receives, not just the immediate instruction. This includes managing retrieval-augmented generation (RAG) pipelines, which let models reference external document collections dynamically during inference. RAG is now a baseline expectation for any AI assistant deployed on proprietary knowledge bases.

4. Python Fundamentals

Python matters more than most entry-level guides acknowledge. The transition from chat-interface prompting to production-ready AI systems almost always requires scripting capability. The minimum useful proficiency includes variables, loops, functions, JSON handling, HTTP requests, and basic API authentication. That skill set unlocks API integration, automated testing at scale, output evaluation pipelines, and the ability to build real tools that hiring managers can evaluate — not just demonstrate techniques in chat windows.

5. Evaluation and Testing Methodology

The practitioners who advance fastest treat prompting as an engineering discipline, not a creative exercise. They establish success criteria before testing, maintain comparison baselines, document failure modes systematically, and build regression test suites to catch performance degradations when models update. This methodology — borrowed from software quality assurance — separates senior practitioners from beginners and is increasingly what hiring managers screen for.

6. Domain Expertise

Firms in healthcare, legal services, and financial services consistently pay 30–50% premiums for practitioners who understand their domain deeply enough to recognize when AI outputs are subtly wrong. A prompt engineer who understands medical coding catches errors in clinical documentation AI that a generalist entirely misses. Existing domain expertise is a significant asset that compounds quickly when combined with prompting skills — and it’s one of the few genuine moats in an increasingly crowded market.

7. AI Security Awareness

Prompt injection attacks — where malicious content in a model’s input manipulates its behavior — are now a recognized production risk that organizations actively screen for in hiring. Senior practitioners understand how to design guardrail prompts, recognize injection vulnerability patterns, and test AI systems for adversarial robustness. An emerging sub-specialty called red teaming — systematically attempting to break AI systems to surface safety and reliability failures before they reach production — is now listed as a preferred skill in roughly 30% of senior prompt engineering job postings. Even among experts, there’s ongoing debate about the best approaches to guardrailing, which means practitioners who engage with the literature stand out.

PromptOps is another emerging discipline practitioners should know: the operational practice of managing prompts as production infrastructure — with version control, A/B testing, monitoring dashboards, and regression detection — rather than treating them as informal, ad-hoc text inputs.

| Skill | Priority | Time to Competence |

|---|---|---|

| LLM fundamentals | Essential | 2–4 weeks |

| Prompting techniques | Essential | Ongoing |

| Context engineering / RAG | High | 1–2 months |

| Python basics | High | 2–3 months |

| Evaluation methodology | High | 1–2 months |

| Domain expertise | High (multiplies income) | Ongoing |

| AI security / red teaming | Medium | 2–4 weeks |

| PromptOps | Medium-Advanced | 1–2 months |

The multidisciplinary skill stack: Seven core competencies required for professional prompt engineering mastery.

Essential Tools Every Prompt Engineer Uses in 2026

Knowing the tool ecosystem isn’t optional for practitioners who want to move beyond chat-interface prompting into production-grade work. These are the tools that actually appear in job descriptions and get referenced in technical interviews. Understanding how to build a RAG chatbot gives context for why several of these tools exist — they solve real problems in deployed AI systems.

| Tool | Category | Free/Paid | Best For |

|---|---|---|---|

| OpenAI Playground | Platform testing | Free + paid | Parameter experimentation (temperature, top-p, system prompts) |

| Anthropic Console | Platform testing | Free + paid | Claude-specific prompt testing and API debugging |

| LangChain | Framework | Open-source | Building complex prompt chains and agent workflows |

| LlamaIndex | Framework | Open-source | RAG pipelines and document retrieval integration |

| Promptfoo | Testing & evaluation | Open-source | Automated prompt testing, regression suites, security scanning |

| LangSmith | Observability | Free tier + paid | Tracing, debugging, and logging LLM call chains |

| PromptLayer | PromptOps | Free tier + paid | Prompt version control, collaboration, analytics |

| Langfuse | PromptOps | Open-source + cloud | Observability, evaluation, and prompt management |

| Weights & Biases | Experiment tracking | Free tier + paid | Tracking prompt experiments at research scale |

| Braintrust | Evaluation platform | Paid + free trial | Automated evaluation, dataset management, production monitoring |

| Python + OpenAI SDK | Automation | Open-source | API integration, batch processing, automated testing scripts |

| PromptBase | Marketplace | Marketplace | Buying/selling validated prompt templates |

Which Tools Matter at Each Career Stage

Beginner (months 1–3): OpenAI Playground, Anthropic Console. These require zero setup and build intuition fast. Don’t invest in paid tooling until these feel second nature.

Intermediate (months 4–8): Python + OpenAI SDK, LangChain basics, Promptfoo for automated testing. This combination unlocks everything beyond chat-interface work, including portfolio projects that impress hiring managers.

Advanced / Production (months 9+): LangSmith, PromptLayer or Langfuse (pick one), Braintrust, LlamaIndex for RAG. These are what practitioners work with daily in team environments and what hiring managers at AI companies look for when screening candidates.

The 12-Month Roadmap to Becoming a Prompt Engineer

The following roadmap reflects the realistic path for someone starting with limited AI experience. Those with technical backgrounds will advance faster through phases 1 and 2; those with strong domain expertise should prioritize phases 3 and 4 more aggressively.

The 12-month mastery roadmap: A phased approach to building a successful AI career from scratch.

Phase 1: Foundation (Months 1–3)

This phase builds intuition and understanding that everything else depends on.

Month 1 — Immersion

The goal is pattern recognition: spending enough time interacting with models that their behavior becomes predictable rather than mysterious. Many practitioners underestimate how important this intuitive phase is — it’s hard to systematically improve something you don’t yet understand well enough to predict.

- Create accounts on ChatGPT (GPT-5), Claude (Claude 4 Sonnet), and Gemini (Gemini 3 Pro)

- Run 50+ prompts across diverse use cases: writing, coding assistance, analysis, summarization, classification

- Compare how the same prompt performs differently across models

- Start noticing failure patterns — when does the model misunderstand? When does it hallucinate? When does it add content the prompt didn’t request?

- Read OpenAI’s official prompt engineering documentation (free, surprisingly practical)

Time commitment: 6–10 hours per week

Month 2 — Technique Mastery

Formalize the intuitions from month 1 into repeatable, documented techniques.

- Master zero-shot vs. few-shot prompting: build test cases demonstrating when each approach applies

- Learn chain-of-thought prompting for complex reasoning tasks; test on 5–10 different problem types

- Practice role-based prompting and system prompt construction

- Complete one free structured course (Google Prompting Essentials or DeepLearning.AI’s ChatGPT Prompt Engineering course)

- Begin a personal prompt library documenting what works, what fails, and the hypotheses behind each

Month 3 — Ecosystem Exploration

Broaden exposure while deepening the prompt library.

- Test identical prompts across GPT-5, Claude 4, and Gemini 3 — document behavioral differences systematically

- Explore Claude 4’s extended context window for long-document work where GPT-5 truncates

- Experiment with structured output formats (JSON, markdown, specific schemas); this skill is highly valued

- Begin experimenting with OpenAI Playground for temperature and parameter testing to build quantitative intuition

Phase 1 Milestone: Consistent high-quality outputs from multiple models with documented understanding — not just felt intuition — of why certain approaches outperform others.

Phase 2: Skill Building (Months 4–6)

This phase adds technical depth that opens the full range of career opportunity.

Month 4 — Python Fundamentals

The goal isn’t software engineering proficiency — it’s the minimum competency that unlocks API integration and automated testing. Many practitioners delay this unnecessarily; starting earlier is almost always the right decision.

- Complete Python fundamentals: variables, data types, loops, functions, list comprehensions

- Understand JSON data format (AI APIs return JSON almost exclusively)

- Write a simple file processing script that reads input and produces structured output

- Learn basic HTTP request concepts (GET/POST, headers, authentication)

Resources: freeCodeCamp’s Python course (free), “Automate the Boring Stuff with Python” (free online), Codecademy Python track

Month 5 — API Integration

Connect prompting knowledge with Python capability through OpenAI API integration.

- Obtain OpenAI and Anthropic API keys

- Build first script: takes a text file as input, returns AI-processed output with error handling

- Understand token counting and API pricing implications (essential for real-world cost estimation)

- Build a simple content processing tool — summarizer, classifier, or email writer

- Learn rate limiting, retry logic, and basic error handling patterns

Month 6 — Advanced Techniques

Level up to production-relevant skills that hiring managers actually screen for.

- Learn RAG concepts: document chunking, embedding, vector similarity search, retrieval, integration with prompts

- Understand prompt chaining — linking multiple model calls for complex multi-step tasks

- Practice context window management for long documents that exceed single-prompt limits

- Experiment with structured JSON output extraction and schema validation

- Explore tool-calling / function-calling APIs (both OpenAI and Anthropic) — this underpins agentic systems

Phase 2 Milestone: A working Python script that processes real inputs through an AI API and produces structured, validated outputs. Practical understanding of how RAG and context engineering work in deployed systems.

Phase 3: Portfolio Building (Months 7–9)

This is the phase most aspiring prompt engineers fail to complete. Portfolios matter more than certifications — significantly more, according to hiring managers at AI companies who review candidates regularly. Every project should document the problem, approach, methodology, and measurable results in enough detail that a stranger could evaluate the quality of thinking independently.

Month 7 — Content Automation System

Build an automated pipeline that generates content with consistent quality and measurable output standards.

Options:

- Blog outline generator with SEO optimization criteria

- Social media content creator with brand voice preservation across different platforms

- Product description writer from structured specifications with quality scoring

Document everything: before/after examples, quality scoring rubrics applied, consistency metrics across 20+ runs, time savings achieved versus manual process.

Month 8 — Custom AI Assistant

Create a specialized AI persona for a specific professional domain that demonstrates system prompt architecture and domain knowledge integration.

Options:

- Customer support bot for a specific industry vertical with proper escalation handling

- Research assistant that cites sources and explicitly acknowledges uncertainty

- Educational tutor for a particular subject domain with adaptive difficulty

- Legal Q&A assistant with appropriate disclaimers and clear scope limitations

Show conversation examples across difficult scenarios. Explain system prompt design decisions in writing. Document how edge cases and failure modes were identified and handled.

Month 9 — Technical Integration Project

Build something that combines prompting with real technical complexity. Quality AI portfolio project ideas at this level demonstrate context engineering thinking, not just prompting fluency.

Options:

- Data extraction pipeline converting unstructured documents to structured JSON with accuracy validation

- Code review assistant that provides actionable, prioritized feedback on real code samples

- Multi-step agentic workflow that manages a complex task with tool calls and graceful error handling

- Model comparison analysis that benchmarks 10+ prompts across GPT-5, Claude 4, and Gemini 3 with quantified scoring

Phase 3 Milestone: Three documented projects with clear methodology, measurable outcomes, code available for review, and documentation detailed enough that hiring managers can evaluate decision quality independently.

Phase 4: Market Entry (Months 10–12)

Month 10 — Portfolio Packaging

- Create a portfolio site or well-structured GitHub repository that presents work accessibly

- Write case studies for each project: problem → approach → methodology → results (with specific metrics)

- Document prompt patterns and learnings in a public-facing knowledge base or blog

- Optimize LinkedIn with relevant keywords, project highlights, and clear value proposition

- Practice explaining technical decisions in plain language suitable for non-technical stakeholders

Month 11 — Validation and Network

- Complete 1–2 recognized certifications to pass HR keyword filters at larger companies

- Engage with AI communities on Twitter/X, Reddit’s r/PromptEngineering and r/MachineLearning, Discord servers

- Consider contributing to open-source AI projects for visible GitHub contribution activity

- Reach out to practitioners in target roles for informational conversations — the AI community tends to be accessible

Month 12 — Active Market Entry

- Apply to 10+ target roles per week, tracking responses in a simple spreadsheet for iteration

- OR launch freelancing: 3+ proposals per day across Upwork, Toptal, and direct outreach to target companies

- Iterate on applications based on response patterns — adjust resume framing, portfolio highlights, or targeting

- Interview preparation: STAR-method stories about specific prompt engineering challenges systematically solved

Reality note: Timeline varies significantly with starting background and time availability. Some practitioners land roles in month 6 with strong portfolios; others take 18 months while learning part-time. Both paths are valid. The roadmap is a framework, not a race — what matters is building demonstrable capability.

5 Portfolio Projects That Impress Hiring Managers

Portfolio depth consistently outweighs certification breadth when hiring managers at AI companies and enterprises evaluate candidates. The evidence from practitioners who’ve gone through hiring processes is consistent: one well-documented project with quantified results is worth more than five certifications. Here’s what actually gets attention.

1. Content Generation System with Quality Metrics

What: Automated pipeline that generates consistent, high-quality content — blog outlines, product descriptions, or social copy — with measurable output quality tracked systematically.

Skills demonstrated: Prompt chaining, consistency optimization, quality evaluation methodology, output formatting control

What hiring managers want to see: A/B comparison showing different prompt variations and their impact on output quality, scoring rubrics used consistently, before/after improvement evidence with specific numbers

Time investment: 1–2 weeks

2. Custom AI Assistant for Niche Domain

What: Specialized chatbot designed for a specific professional or industry context — legal FAQs, health information, technical support, or educational tutoring — with careful handling of domain constraints.

Skills demonstrated: System prompt architecture, domain knowledge integration, edge case handling, failure mode documentation and mitigation

What hiring managers want to see: Conversation logs showing difficult scenarios handled gracefully, written explanation of system prompt design decisions, domain-specific accuracy evidence where testable

Time investment: 2–3 weeks

3. Structured Data Extraction Pipeline

What: Tool that converts messy, unstructured text into clean, structured data — resumes to JSON, contracts to key terms, articles to structured summaries — with validation and error handling.

Skills demonstrated: Structured output design, accuracy optimization, schema engineering, validation logic and error recovery

What hiring managers want to see: Accuracy metrics compared to manual baseline, edge case examples showing how the system handles ambiguous inputs, documentation of the validation approach

Time investment: 1–2 weeks

4. Multi-Model Benchmark Analysis

What: Systematic comparison of how the same prompts perform across GPT-5, Claude 4, and Gemini 3 — with quantified scoring, documented behavioral differences, and actionable recommendations.

Skills demonstrated: Evaluation methodology, analytical thinking, deep model knowledge, systematic documentation

What hiring managers want to see: Reproducible scoring methodology, clear behavioral distinctions with explanations grounded in model architecture, actionable recommendations for when to use which model

Time investment: 1 week

5. Agentic Workflow Demo

What: A multi-step autonomous workflow using tool-calling APIs — a research agent that searches, retrieves, synthesizes, and formats findings, or a data processing pipeline handling the full lifecycle from raw input to verified output.

Skills demonstrated: Context engineering, tool-calling architecture, agentic reasoning design, graceful error handling and recovery

What hiring managers want to see: Demonstration of handling failure gracefully without human intervention, clear documentation of the system prompt architecture decisions, performance on edge cases that would break simpler implementations

Time investment: 2–3 weeks

| Project | Core Skill Demonstrated | Targeting Bonus |

|---|---|---|

| Content system | Consistency + evaluation | Include quantified quality metrics |

| Custom assistant | System design + domain | Show difficult conversation handling |

| Extraction pipeline | Accuracy + structure | Compare error rate vs. manual baseline |

| Benchmark analysis | Methodology + model knowledge | Publish as shareable comparison report |

| Agentic workflow | Context engineering + architecture | Demonstrate graceful recovery from failures |

What a Prompt Engineer Job Description Actually Looks Like

Before targeting roles, it helps to understand what job postings actually require versus what they list as nice-to-have. Based on 2026 job market analysis, here’s a representative breakdown of what a real prompt engineering job description contains — and what it reveals about the role’s expectations.

Real job titles to search (since “Prompt Engineer” postings are sparse): AI Specialist, Applied AI Engineer, LLM Product Engineer, AI Content Specialist, Conversational AI Designer, AI Automation Engineer, Generative AI Developer, AI Systems Engineer. For the full landscape of remote-friendly AI roles and how to find them, AI remote job search strategies explains which platforms concentrate AI-specific listings.

Sample job description requirements (composite from 2026 postings):

| Requirement Type | What It Actually Means |

|---|---|

| ”Design and optimize prompts for LLM-based features” | Write, test, and iterate system prompts and user prompt templates |

| ”Build evaluation frameworks” | Create test suites to measure prompt quality at scale |

| ”Collaborate with product and engineering” | Translate business requirements into AI behavior specs |

| ”Experience with RAG or vector databases” | Understand how retrieval-augmented generation works in practice |

| ”Python scripting for automation” | Write scripts to batch-test prompts or integrate AI into pipelines |

| ”Familiarity with LangChain or similar” | Know at least one agent framework well enough to build with it |

| ”Monitor production AI system behavior” | Use observability tools to catch issues before customers see them |

Typical nice-to-haves: Fine-tuning experience, multi-modal AI (image/audio/video), red teaming or adversarial testing background, specific domain expertise (healthcare, legal, finance), published writing or research on AI topics.

What most postings don’t explicitly require but implicitly expect: The ability to write documentation that non-technical stakeholders understand, strong opinions about AI ethics formed from actual practical experience, and a portfolio demonstrating measurable improvements to AI system behavior.

Prompt Engineer Salary and Career Paths in 2026

Salary Ranges by Level (United States)

| Level | Experience | Base Salary | Total Compensation |

|---|---|---|---|

| Entry | 0–2 years | $85,000–$120,000 | $93,000–$135,000 |

| Mid-Level | 2–5 years | $120,000–$160,000 | $135,000–$185,000 |

| Senior | 5+ years | $160,000–$216,000 | $185,000–$260,000 |

| Head of AI / Lead | 7+ years | $216,000–$375,000 | $260,000–$450,000+ |

Data aggregated from ZipRecruiter March 2026, Glassdoor 2025-2026, and industry analysis from buildfastwithai.com Q1 2026

Prompt Engineer Salary by City (United States)

| City / Region | Mid-Level Salary | Senior Salary | Premium vs. National Average |

|---|---|---|---|

| San Francisco, CA | $165,000–$210,000 | $230,000–$295,000 | +35–40% |

| New York, NY | $155,000–$200,000 | $210,000–$280,000 | +25–30% |

| Seattle, WA | $150,000–$195,000 | $205,000–$270,000 | +20–25% |

| Austin, TX | $135,000–$175,000 | $180,000–$240,000 | +10–15% |

| Boston, MA | $145,000–$190,000 | $200,000–$260,000 | +20–25% |

| Remote (US-based) | $125,000–$165,000 | $170,000–$225,000 | National avg |

| Remote (Non-US) | $75,000–$130,000 | $120,000–$180,000 | Varies by region |

Top tech companies operate in their own compensation tier: Glassdoor data from 2025 places Google’s median total compensation for AI specialists at $245,000 and Meta’s at $234,000.

Freelance rates for independent practitioners range from $75–$150/hour at mid-level to $150–$250+/hour for senior specialists with proven domain expertise and documented case studies. Top contractors working with US clients remotely report annual earnings between $200,000 and $400,000+. Retainer-based AI operations consulting engagements typically run $2,000–$6,000 per month for ongoing work. The freelance market rewards practitioners who’ve built visible portfolio evidence of outcomes more strongly than consultants who rely on credentials alone.

High-Paying Industry Specializations

| Industry | Salary Premium | Why It Pays More |

|---|---|---|

| Healthcare AI | $180K–$400K senior | Accuracy stakes, HIPAA requirements, limited qualified pool |

| Legal Tech | $180K–$320K senior | Domain expertise required, risk sensitivity, nuanced judgment |

| Financial Services | $150K–$280K senior | Compliance requirements, high-stakes automated decisions |

| Big Tech | $140K–$300K + equity | Scale impact, competitive talent market, equity upside |

Five Career Paths Worth Evaluating

1. In-House Prompt Engineer / AI Specialist Focused work within one company’s AI systems. Offers stability, domain depth, and scale impact. Best for practitioners who prefer deep expertise in specific systems over variety.

2. Freelance AI Consultant Variable client work across industries. Higher hourly rates, maximum schedule flexibility, income variability. Best for entrepreneurial practitioners comfortable with self-marketing and client relationship management.

3. AI Product Manager Combines prompt engineering knowledge with product strategy and roadmap ownership. Higher compensation ceiling, more stakeholder management, less hands-on model work. Best for business-minded practitioners who want strategic influence.

4. AI Trainer / Educator Corporate training programs, course creation, and thought leadership. Strong passive income potential once audience is established — though building that audience takes 12–24 months of consistent effort. Best for practitioners who genuinely enjoy teaching.

5. Domain AI Specialist Deep expertise in one vertical — healthcare AI, legal AI, financial AI. Premium compensation, reduced competition from generalists, and strong client relationship potential. Best for practitioners who already have domain expertise from a previous career and want to add AI skills to significantly increase market value.

6. Conversational AI Designer A hybrid role between UX design and prompt engineering. Designs the dialogue architecture for AI-powered products — chatbots, voice assistants, customer interfaces. Often doesn’t require coding. Growing in demand as companies realize that bad conversational AI design damages customer experience regardless of model quality.

7. AI Trainer / Red Teamer Works with AI labs and enterprises to improve model alignment and safety. Responsibilities include generating adversarial test cases, identifying failure modes, and writing human feedback for reinforcement learning from human feedback (RLHF) pipelines. Some of these roles are contract or part-time, making them excellent entry points for career changers building initial AI credentials.

Frequently Asked Questions About Prompt Engineering Careers

How long does it take to become an AI prompt engineer?

The realistic range is 6–18 months depending on starting background and available weekly hours. Practitioners with strong writing skills, analytical thinking, and 15+ weekly hours available often reach job-readiness in 6–8 months. Those learning part-time alongside full-time employment typically need 12–18 months to build a competitive portfolio. The timeline is driven primarily by portfolio completion, not course hours accumulated — a fact that surprises many learners who spend months in course-taking mode without building anything.

Can you become a prompt engineer without a computer science degree?

Absolutely. Most hiring managers at AI companies and enterprises explicitly prioritize demonstrated skills over academic credentials. A strong portfolio with quantified results, systematic documentation, and working examples consistently outweighs a CS degree in evaluation processes. So much so that many teams have removed degree requirements from their AI specialist job descriptions. That said, some larger enterprise companies use degree requirements as an HR filter — in those cases, Google Prompting Essentials or similar recognized certifications can partially substitute.

Do you need coding skills to become a prompt engineer?

Not to start — but Python significantly expands both the scope of available work and the compensation potential over time. Practitioners working exclusively through chat interfaces are limited to manual, one-at-a-time interactions that can’t scale. Basic Python (variables, loops, functions, API calls) unlocks automated testing, batch processing, integration into production systems, and the ability to build tools rather than just demonstrate techniques. Most serious prompt engineering roles in 2026 assume at least basic scripting fluency, and the roles that don’t are generally lower-paying.

Is prompt engineering being replaced by AI in 2026?

Models are improving at understanding poorly-constructed prompts, which reduces the value of simple prompt optimization. What isn’t being automated is the complex judgment required for production systems: edge case handling, systematic evaluation methodology, domain-specific accuracy requirements, context architecture design for multi-agent workflows, AI security awareness, and the organizational work of training colleagues on effective AI use. The role is evolving toward higher-order judgment and system design rather than disappearing. Think of how spreadsheets changed what accountants do without eliminating accounting expertise.

What is context engineering and how is it different from prompt engineering?

Prompt engineering focuses on crafting the individual instruction sent to a model — the immediate query or task specification. Context engineering is the broader discipline of designing everything a model receives during inference: system instructions, retrieved documents, conversation history, tool outputs, memory states, and structured schemas that define how the model should reason. As AI systems become more agentic and multi-step, managing that full context window becomes as important as writing good prompts. Practitioners with context engineering skills command meaningful salary premiums because the discipline requires deeper systems thinking.

Which certifications are most valuable for prompt engineering careers?

The comparison below reflects actual employer recognition and candidate outcome data from 2025-2026:

| Certification | Provider | Cost | Time | Best For | Employer Recognition |

|---|---|---|---|---|---|

| Google Prompting Essentials | Free | 5–10 hrs | Quick credential, broad recognition | High (Google brand) | |

| Prompt Engineering for ChatGPT | Vanderbilt / Coursera | ~$49 | 15–20 hrs | Most comprehensive foundational cert | High (university credibility) |

| Generative AI: Prompt Engineering Basics | IBM / Coursera | Free (audit) | 10–15 hrs | Technical depth, IBM brand | Medium-High |

| AI+ Context Engineering | NetCom Learning | $300–500 | 20–30 hrs | Enterprise context engineering focus | Medium (specialist value) |

| AWS AI Practitioner | Amazon | $150 (exam) | 40+ hrs study | Cloud-native enterprise roles | High (AWS ecosystem) |

| Microsoft Agentic AI Business Solutions | Microsoft | $165 (exam) | 40+ hrs study | Microsoft-stack enterprise roles | High (Microsoft ecosystem) |

| IBM RAG and Agentic AI Professional | IBM / Coursera | ~$200 | 30–40 hrs | Enterprise agentic AI, RAG systems | Medium-High |

The honest assessment: certifications help pass automated HR keyword filters but don’t prove competence to technical hiring managers. Portfolio projects are the actual proof of skill. The optimal strategy is completing one recognized certification to clear HR filters, then investing remaining time in portfolio project development. Reviewing the full list of recommended AI certifications helps prioritize based on target role and industry.

What industries pay the highest salaries for prompt engineering skills?

Healthcare AI consistently reaches $180,000–$400,000 for senior specialists because accuracy stakes are high and domain knowledge requirements shrink the qualified candidate pool dramatically. Legal tech follows at $180,000–$320,000, where understanding legal nuance and risk sensitivity is difficult to acquire quickly. Financial services ranges from $150,000–$280,000, driven by compliance complexity and decision stakes. Big tech pays $140,000–$300,000 with meaningful equity upside. Generalist roles without domain specialization cluster in the $100,000–$160,000 range — still attractive, but significantly below what domain specialists earn.

What is the biggest mistake aspiring prompt engineers make?

Certification accumulation without portfolio building is consistently the most costly error practitioners make. Multiple completed courses demonstrate effort but don’t prove capability to any hiring manager worth working for. The actual differentiator in hiring processes is evidence: before/after examples showing measurable improvement, projects that solve real problems with documented methodology, and documentation revealing systematic thinking behind the approach. One well-documented project with specific outcome metrics is worth more than five certifications in any honest evaluation — and this pattern holds across all levels from entry-level to senior specialist hiring.

What interview questions do prompt engineers get asked?

Hiring processes in 2026 typically include a mix of behavioral and technical/demonstration questions. Knowing what to expect removes significant stress and lets candidates prepare specifically.

Behavioral questions (STAR-method answers expected):

- “Tell me about a time a production AI system failed and how you diagnosed and fixed the problem.”

- “Describe a situation where you had to explain AI behavior limits to a non-technical stakeholder.”

- “Walk me through how you approached building a prompt evaluation framework from scratch.”

- “What’s the most complex context engineering challenge you’ve tackled, and what did you learn?”

- “How do you stay current on model updates and decide when to revisit existing prompts?”

Technical / demonstration questions:

- “Given this user query, write a system prompt that produces consistent, safe, on-brand responses.”

- “Here’s an AI output that’s failing. Diagnose what’s going wrong and rewrite the prompt.”

- “Design an evaluation framework for a customer-facing AI assistant. What metrics would you track?”

- “How would you implement RAG for this use case? Walk through your chunking and retrieval strategy.”

- “What red teaming approaches would you apply to an AI system handling sensitive user data?”

Red flag to watch for: A company that tests via written prompt exercises with no rubric and no debrief isn’t evaluating prompting ability — they’re collecting free labor. Legitimate evaluations include discussion of your reasoning process, not just the output.

Can non-technical people with no coding background become prompt engineers?

Absolutely — and the specific path is more achievable than most people realize. The key insight is that different job titles within the AI field have very different technical barriers.

Roles fully accessible without coding:

- AI Content Specialist / AI Copywriter

- Conversational AI Designer

- AI Trainer (providing human feedback for RLHF)

- Prompt Quality Analyst

- AI Product Strategist

The non-technical practitioner’s roadmap through the 12 months above:

- Phases 1 and 2 (months 1–3): Fully non-technical — this is technique mastery through chat interfaces

- Month 4 (Python): Optional at this stage; delay if another skill is more pressing

- Month 5 onward: Python becomes important for career ceiling but not required to start working

Portfolio adapted for non-coders: Document-based projects (prompt libraries, evaluation rubrics, conversation logs with annotated analysis) work well. A well-documented comparison of 50 prompts across three models with explicit quality scoring is compelling evidence of serious methodology even without a single line of code.

Career changers from writing, marketing, legal, healthcare, and education backgrounds consistently find that domain expertise accelerates their entry significantly — often more than an equivalent investment of time in Python would.

The Skill That Lasts Beyond the Title

The prompt engineering job title may continue evolving — toward AI Engineer, Applied AI Specialist, or context engineering roles — but the core competency is becoming foundational infrastructure for knowledge work across every industry. Organizations that have invested seriously in this capability report faster automation development, more reliable AI deployments, and significantly better AI-human collaboration outcomes.

The 12-month roadmap outlined here isn’t a race. It’s a framework that accommodates different starting points, different time availability, and different destination goals. What remains constant is that building genuine capability evidenced by a portfolio gives candidates and freelancers evaluation criteria that hiring managers can assess independently of credentials.

The skill set developed through this path — systematic thinking, precise communication, evaluation methodology, and technical fluency — transfers across every job title this field touches. Once the portfolio foundation is solid, strategically choosing the right credential to optimize market positioning becomes a tactical question, not a prerequisite for competence.