AI Agents vs Agentic AI: What's the Real Difference?

Understand the key difference between AI agents and agentic AI. Includes a practical decision framework, real-world examples from 2026, and a guide to choosing the right approach.

Mentions of “agentic AI” in enterprise technology discussions surged by 1,847% between January 2024 and December 2025, according to Gartner’s Hype Cycle analysis. Yet alongside that explosion of interest, a persistent confusion has taken hold: are “AI agents” and “agentic AI” the same thing? Conflate the two, and the wrong technology ends up deployed for the wrong problem.

The distinction isn’t academic. Organizations choosing between deploying individual task-focused AI agents and building full agentic AI systems are making fundamentally different architectural, governance, and cost decisions. Getting that choice wrong typically surfaces six months into a project, when refactoring is expensive and timelines slip. For a foundational understanding of what AI agents are, a separate primer on core agent architecture is worth reading before diving into the comparison.

This guide draws a clear line between the two concepts, explains where each fits, and provides a practical decision framework for knowing which approach a given use case actually needs.

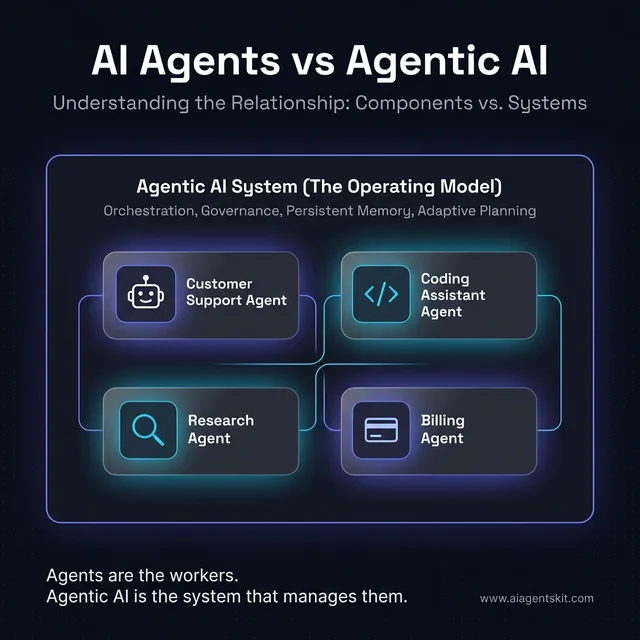

The relationship between individual AI agents (components) and the broader Agentic AI operating model (the system).

What Are AI Agents? A Practical Definition

An AI agent is an autonomous software system that perceives its environment, processes information, makes decisions, and takes actions to achieve a specific goal — typically without requiring human input at every step.

The key word is specific. AI agents are task-focused. A customer support agent handles inquiries. A coding agent writes and debugs code. A scheduling agent manages calendars. Each executes within a bounded domain using a defined set of tools. That boundary is part of what makes agents reliable — it limits the surface area where things can go wrong.

Four capabilities define a genuine AI agent:

- Perception — Gathering inputs from the environment: messages, APIs, databases, search results, sensor data

- Reasoning — Using an LLM or other decision engine to interpret context and determine next steps

- Action — Executing real-world operations via tools: sending emails, querying databases, calling APIs, running code

- Memory — Retaining context short-term (within a session) and, in more advanced implementations, long-term across sessions
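As a rough illustration, the four capabilities can be mapped onto a small class. Everything in this sketch is hypothetical: the `SupportAgent` name, the keyword rule standing in for an LLM call, and the two tool names are assumptions for illustration, not any framework’s API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SupportAgent:
    """Toy task-focused agent; a keyword rule stands in for the LLM."""
    tools: dict[str, Callable[[str], str]]
    memory: list[str] = field(default_factory=list)  # short-term, session-scoped

    def perceive(self, message: str) -> str:
        # Perception: normalize the raw input from the environment.
        return message.strip().lower()

    def reason(self, observation: str) -> str:
        # Reasoning: pick a tool. A real agent would ask an LLM here.
        return "refund" if "refund" in observation else "faq"

    def act(self, tool_name: str, observation: str) -> str:
        # Action: execute the chosen tool from the agent's bounded tool set.
        return self.tools[tool_name](observation)

    def handle(self, message: str) -> str:
        observation = self.perceive(message)
        tool_name = self.reason(observation)
        result = self.act(tool_name, observation)
        # Memory: keep the exchange so later turns can use the context.
        self.memory.append(f"{tool_name}: {observation}")
        return result


agent = SupportAgent(tools={
    "refund": lambda text: "Refund request opened.",
    "faq": lambda text: "Here is the relevant help article.",
})
print(agent.handle("  I want a REFUND for my order "))
```

The tool dictionary is the bounded domain: the agent can only ever call what it was constructed with, which is what keeps its failure surface small.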

What AI agents don’t necessarily do: orchestrate other agents, set their own high-level goals, or autonomously redesign their approach to an entire workflow based on changing conditions. Those capabilities belong to a different layer — agentic AI.

Agents exist on a spectrum from simple to sophisticated. A rule-based customer service bot that answers FAQs is technically an agent. So is GitHub Copilot, which reasons across codebases and generates multi-file implementations. And so is an autonomous financial analysis system that runs queries, builds models, and generates reports. The sophistication varies enormously; the core characteristic, a software entity that perceives and acts, stays consistent.

Practitioners who’ve deployed agents at scale consistently find that the biggest risk isn’t complexity — it’s scope creep. Starting narrow and expanding wins; trying to build a universal agent usually doesn’t.

What Is Agentic AI? The System-Level Paradigm

Agentic AI refers to the broader capability or framework that enables AI systems to operate with high autonomy, goal-directed behavior, and adaptive decision-making. Rather than a single application, agentic AI describes an architectural approach — one in which AI systems can independently plan, reason through multi-step problems, coordinate tools and agents, and adapt their strategy based on intermediate results.

Think of it this way: AI agents are the workers; agentic AI is the operating model that governs how a team of specialized workers pursues complex, long-horizon goals without constant human supervision.

The market trajectory reflects where enterprise investment is heading. Gartner predicts that 40% of enterprise applications will embed task-specific AI agents by the end of 2026, up from under 5% in 2025. McKinsey’s 2025 State of AI report found that 23% of organizations were already scaling an agentic AI system, with an additional 39% actively experimenting. That adoption gap, between experimenting and scaling, is precisely where the distinction between isolated agents and a full agentic architecture matters most. Teams that understand how agentic AI differs from generative AI tend to set far more realistic deployment expectations.

An agentic AI system characteristically:

- Sets or interprets high-level goals rather than just executing predefined tasks

- Breaks goals into multi-step plans without human assistance at each stage

- Coordinates multiple specialist AI agents or tools within a unified workflow

- Continuously learns from intermediate results and adjusts its approach dynamically

- Maintains persistent state and memory across long-running executions

In short, if an AI agent is a capable employee, agentic AI is the project management system, communication layer, and executive function that enables a whole team to operate toward shared objectives with minimal external oversight.

What Does “Agentic” Actually Mean?

The word “agentic” doesn’t come from computer science — it comes from psychology and education, where it describes a person’s capacity for self-directed action toward goals. Merriam-Webster defines it as “of, relating to, or exhibiting agency” — the ability to act independently with intent.

In AI, the term was imported deliberately. Andrej Karpathy coined the phrase “agentic engineering” in February 2026 to describe the emerging professional discipline of designing, supervising, and shaping autonomous AI systems — recognizing that working with agents requires a fundamentally different skillset from traditional prompt engineering.

For a system to qualify as genuinely agentic, it must exhibit three properties simultaneously:

- Autonomy — It initiates actions without being explicitly prompted at each step

- Goal-orientation — It pursues an objective across multiple decisions rather than generating a single output

- Adaptability — It adjusts its approach when initial attempts fail or new information arrives

“Agentic” isn’t binary. It describes a spectrum. A simple workflow bot that fires an email when a form is submitted has minimal agentic character. An autonomous system that receives a business goal Monday morning, coordinates a team of specialist sub-agents, handles failures, manages exceptions, and delivers results by Friday has strong agentic character. Most real enterprise deployments sit somewhere in between.

The confusion in the market arises because vendors apply the “agentic” label liberally — to chatbots, to copilots, and to genuine multi-step autonomous systems alike. Practitioners benefit from using the three-property test as a practical filter: if a system can initiate action, pursue a goal across multiple steps, and adapt when conditions change, it’s exhibiting agentic behavior. If it still requires a human to re-prompt at each decision point, the human is filling the orchestrator role — not the system.
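The three-property filter can be written down as a simple checklist. This is a minimal sketch: the `SystemProfile` fields and the two example profiles are illustrative judgments, not measurements of any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemProfile:
    initiates_actions: bool       # Autonomy
    pursues_multistep_goal: bool  # Goal-orientation
    adapts_on_failure: bool       # Adaptability


def agentic_score(profile: SystemProfile) -> int:
    """Count how many of the three properties the system exhibits (0 to 3)."""
    return sum([
        profile.initiates_actions,
        profile.pursues_multistep_goal,
        profile.adapts_on_failure,
    ])


def is_agentic(profile: SystemProfile) -> bool:
    # The test requires all three properties at once.
    return agentic_score(profile) == 3


# Assumed ratings for the two ends of the spectrum described above.
form_email_bot = SystemProfile(True, False, False)
weekly_goal_system = SystemProfile(True, True, True)

print(agentic_score(form_email_bot), is_agentic(form_email_bot))
print(agentic_score(weekly_goal_system), is_agentic(weekly_goal_system))
```

Keeping the score separate from the pass/fail check reflects the point that “agentic” is a spectrum: the score places a system on it, while the boolean applies the strict all-three filter.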

What Is the Difference Between AI Agents and Agentic AI?

This is the question most confusion reduces to — and the cleanest way to answer it is to examine how they relate architecturally.

AI agents are components. Agentic AI is the system those components operate within.

Every agentic AI system relies on AI agents to do work. But not every AI agent operates within an agentic AI system. A standalone customer service bot resolving return requests is an AI agent. A system where that same bot perceives a complex multi-stage complaint, coordinates with a billing agent and an inventory agent, updates a CRM, triggers a logistics API, and escalates to a human only when no resolution path exists — that’s agentic AI in action.

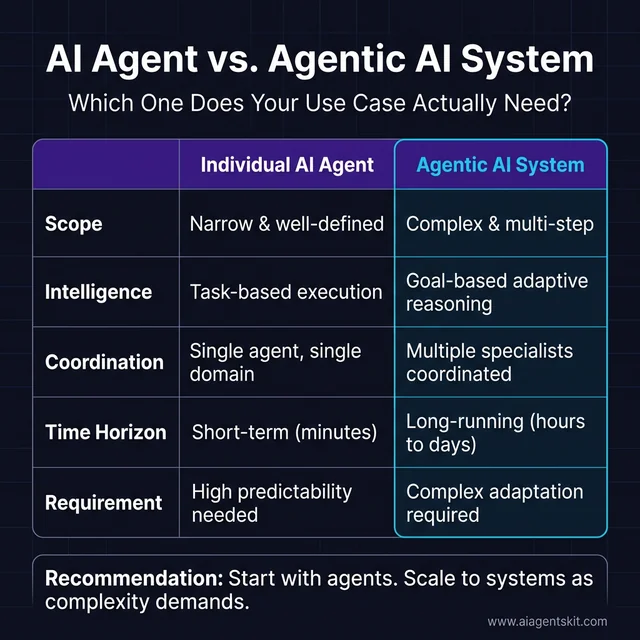

Here’s how the two compare across the dimensions that matter most:

| Dimension | AI Agents | Agentic AI |
|---|---|---|
| Scope | Specific tasks within defined boundaries | Complex, multi-step goals spanning multiple systems |
| Autonomy | Executes instructions with limited deviation | Independently plans, adapts, and self-corrects |
| Decision-Making | Reactive: responds to prompts or triggers | Proactive: evaluates options and initiates actions |
| Time Horizon | Short-term (single interaction or task) | Long-running (hours, days, multi-stage workflows) |
| Goal Setting | Goals are provided explicitly | Can interpret and decompose high-level goals |
| Coordination | Operates independently | Orchestrates multiple agents and tools |
| Memory | Varies, often session-scoped | Persistent memory architecture by design |
| Governance | Manageable with standard monitoring | Requires robust audit trails, access controls, guardrails |
| Cost | Lower: one or few LLM calls per task | Higher: multiple LLM calls per loop iteration |

The pattern mirrors a progression most practitioners recognize from how agents compare to chatbots: reactive systems at one end, proactive systems at the other, with autonomy and orchestration complexity increasing at each step. The relationship between agents and agentic AI is hierarchical, not competing. Deploying agentic AI doesn’t replace individual agents — it elevates what those agents accomplish by placing them within a coordinated, goal-pursuing system.
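To make the Cost row of the comparison concrete, here is a back-of-the-envelope sketch. Every number in it is an assumption chosen for illustration: the calls per task, the loop pattern (one orchestrator call plus one call per sub-agent per iteration), and the flat price per call.

```python
def calls_single_agent(calls_per_task: int = 2) -> int:
    """LLM calls for one bounded task handled by a single agent."""
    return calls_per_task


def calls_agentic(iterations: int, subagents: int) -> int:
    """LLM calls for one goal: each loop iteration makes one orchestrator
    call plus one call per sub-agent (an assumed, simplified pattern)."""
    return iterations * (1 + subagents)


PRICE_PER_CALL_USD = 0.01  # assumed flat price, for illustration only

single = calls_single_agent()                       # 2 calls
agentic = calls_agentic(iterations=5, subagents=3)  # 5 * (1 + 3) = 20 calls

print(f"single agent: {single} calls, ${single * PRICE_PER_CALL_USD:.2f}")
print(f"agentic loop: {agentic} calls, ${agentic * PRICE_PER_CALL_USD:.2f}")
```

The useful takeaway is the shape of the formula rather than the figures: agentic cost scales with iterations multiplied by participating agents, so it should be estimated per goal, not per call.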

How Does Agentic AI Actually Work Under the Hood?

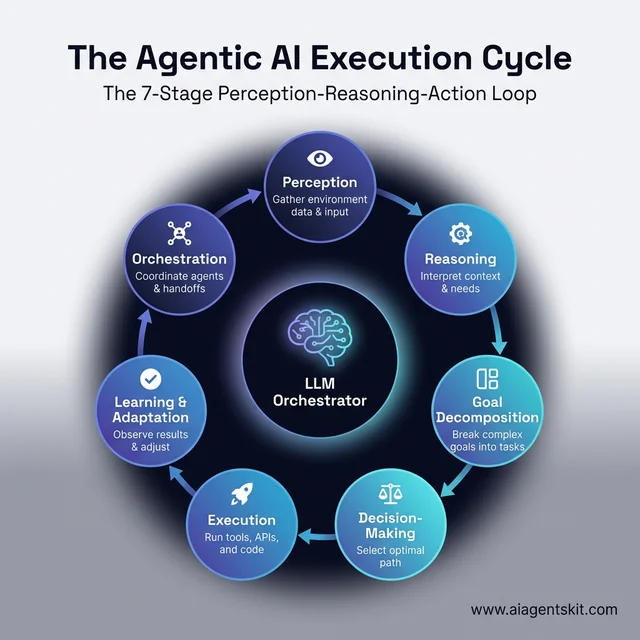

Agentic AI systems operate through a continuous perception-reasoning-action loop that distinguishes them from simpler automated systems. Understanding this architecture clarifies why agentic AI delivers capabilities that individual agents working alone cannot.

The continuous perception-reasoning-action loop that powers agentic AI systems.

The typical execution cycle follows seven stages:

- Perception: The system gathers data from its environment: user instructions, API responses, database outputs, search results, file contents, or sensor data

- Reasoning: The LLM orchestrator interprets the inputs, understands context, and determines what’s needed next within the high-level goal

- Goal Decomposition: Complex goals are broken into subtasks that can be delegated to specialist agents or executed sequentially

- Decision-Making: The system evaluates possible actions, weighs trade-offs, and selects the optimal path based on current state

- Execution: Actions are taken: API calls are made, code is run, messages are sent, databases are updated, files are written

- Learning & Adaptation: Outputs are observed; the system adjusts its plan based on what worked, what failed, and what new information has emerged

- Orchestration: In multi-agent configurations, the orchestrator coordinates which specialist agents handle which subtasks and manages the handoffs

Robust agent memory systems underpin this entire loop. Short-term memory keeps the current session context coherent. Long-term memory — stored in vector databases or structured knowledge stores — enables the system to learn across sessions and avoid repeating failures. Without quality memory architecture, even the most sophisticated agentic orchestration degrades into stateless single-turn behavior.

The principal difference from a standalone AI agent: that agent completes its task and stops. An agentic AI system observes the result, determines whether the high-level goal has been achieved, identifies gaps, and continues adapting until the objective is met or a defined exit condition is reached. Even among experts, there’s ongoing debate about exactly where appropriate exit conditions should be defined — and the right answer depends heavily on the risk tolerance of the domain.
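The observe, check, and continue behavior described above can be sketched as a loop with two explicit exit conditions. This is a minimal sketch under stated assumptions: `run_agentic_loop` and its callback parameters are hypothetical names, and simple lambdas stand in for the LLM-driven planning and tool execution a real system would use.

```python
from typing import Callable


def run_agentic_loop(
    goal_met: Callable[[dict], bool],
    plan_next: Callable[[dict], str],
    execute: Callable[[str, dict], dict],
    state: dict,
    max_iterations: int = 10,
) -> tuple[dict, str]:
    """Observe, plan, act, and re-check the goal until an exit condition fires."""
    for _ in range(max_iterations):
        if goal_met(state):                 # exit condition 1: objective reached
            return state, "goal_met"
        action = plan_next(state)           # reasoning and decision-making
        state = execute(action, state)      # execution; the result feeds the next pass
    # exit condition 2: iteration budget spent (with one last goal check)
    return state, ("goal_met" if goal_met(state) else "max_iterations")


# Toy goal: collect three documents.
final, reason = run_agentic_loop(
    goal_met=lambda s: s["documents"] >= 3,
    plan_next=lambda s: "fetch_document",
    execute=lambda action, s: {**s, "documents": s["documents"] + 1},
    state={"documents": 0},
)
print(final, reason)
```

The `max_iterations` cap is one possible exit condition; where to set it, and what else should force a stop, depends on the risk tolerance of the domain.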

3 Types of Agent Architectures Inside Agentic Systems

Agentic AI implementations don’t all look the same. Three dominant architectural patterns have emerged in enterprise deployments, each suited to different use cases.

Single-Agent Architecture

One AI agent, one LLM, one goal-completion loop. The agent reasons, selects tools, executes actions, and re-evaluates until the task is done.

When it works well: Customer support resolution for moderately complex issues. Single-domain research and summarization. Code review within a defined file scope.

Its limitation: Single agents hit a ceiling when goals require specialized expertise across multiple domains simultaneously. One agent reasonably good at everything rarely outperforms a team of specialists at complex objectives.

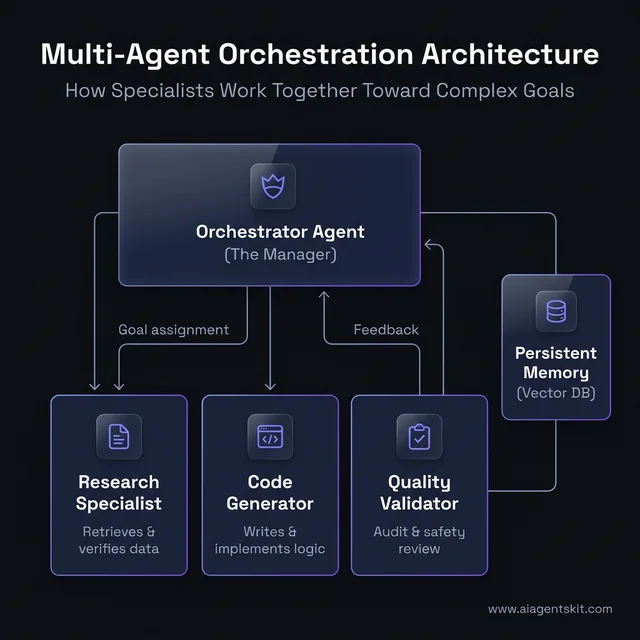

Multi-Agent Architecture

An orchestrator agent coordinates specialist subagents, each owning a specific domain. The orchestrator decomposes goals, assigns subtasks, manages dependencies, and integrates results. This is the architecture most enterprise teams eventually need. The multi-agent orchestration patterns guide covers the design trade-offs in depth.

How a central orchestrator coordinates specialized agents toward a shared objective.

| Scenario | Architecture Fit | Why |
|---|---|---|
| Linear sequential tasks | Single agent | Simpler debugging, lower latency |
| Parallel independent workstreams | Multi-agent | Faster execution, specialists outperform generalists |
| Tasks with quality verification gates | Multi-agent | Separate checking agent catches errors before output |
| High-stakes outputs (legal, financial) | Multi-agent | Redundant verification reduces risk |
| Exploratory or experimental workloads | Single agent | Lower setup cost for uncertain requirements |

When it works well: End-to-end software development (planner → coder → tester → reviewer). Comprehensive research (web researcher → analyst → writer → fact-checker). Complex customer case resolution (intake → data retrieval → resolution → CRM update).
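The planner → coder → tester → reviewer flow can be sketched as an orchestrator that hands work from one specialist to the next. This is a deliberately simplified, sequential sketch: `Orchestrator` and `make_specialist` are hypothetical names, each specialist is a stub rather than an LLM-backed agent, and goal decomposition and dependency management are left out.

```python
from typing import Callable

Specialist = Callable[[str], str]


def make_specialist(role: str) -> Specialist:
    # Stand-in for an LLM-backed agent: it just stamps its role on the work item.
    return lambda work: f"{work} | {role} done"


class Orchestrator:
    """Runs specialists in order and records each handoff for auditing."""

    def __init__(self, pipeline: list[tuple[str, Specialist]]) -> None:
        self.pipeline = pipeline
        self.handoffs: list[str] = []

    def run(self, goal: str) -> str:
        work = goal
        for role, specialist in self.pipeline:
            work = specialist(work)     # delegate the subtask
            self.handoffs.append(role)  # audit trail of who touched the work
        return work


roles = ["planner", "coder", "tester", "reviewer"]
orchestrator = Orchestrator([(role, make_specialist(role)) for role in roles])
print(orchestrator.run("add password reset"))
print(orchestrator.handoffs)
```

Recording handoffs in the orchestrator, rather than inside each specialist, keeps the audit trail in one place even as specialists are swapped out.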

Hybrid Architecture

Generative AI handles content creation; agentic AI orchestrates how that content gets used within broader workflows. A marketing automation hybrid might use GPT-5 to write email variants, DALL-E to generate images, and an agentic layer to run A/B tests, analyze performance, and trigger media buys — all coordinated toward a campaign goal without continuous human direction.

Organizations with hybrid deployments consistently report faster ROI than those attempting full agentic autonomy from day one. The evidence strongly suggests that starting with generative AI for content quality baselines, then layering in agentic orchestration, reduces implementation risk significantly.

7 Key Differences Between AI Agents and Agentic AI

For teams making deployment decisions, seven distinctions map directly to technical and operational requirements.

1. Scope of Operation

AI agents handle tasks within a defined domain. An HR agent screens resumes. A billing agent resolves invoices. The scope is intentionally narrow — that boundary is part of what makes agents reliable.

Agentic AI systems tackle objectives that span domains. Processing a complex insurance claim might involve retrieval agents, compliance agents, communication agents, and decision agents coordinating under a single goal. Scope is defined by the objective, not predetermined domain limits.

2. Autonomy Level

Agents act autonomously within programmed parameters. They can execute tool calls, branch based on conditional logic, and retry failures — but their decision space is bounded.

Agentic AI systems exhibit genuine adaptive autonomy. When an initial approach fails, the system doesn’t halt or escalate automatically — it reasons about alternatives, adjusts its plan, and continues pursuing the goal. That’s the difference between autonomy within a script and autonomy within a goal.
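The contrast between autonomy within a script and autonomy within a goal can be shown in a few lines. This is a toy sketch with hypothetical function and approach names; a real agentic system would reason about which alternative to try rather than walk a fixed list.

```python
from typing import Callable, Optional, Sequence


def bounded_retry(approach: str, attempt: Callable[[str], bool], retries: int = 2) -> Optional[str]:
    """Autonomy within a script: retry the same approach, then give up."""
    for _ in range(retries + 1):
        if attempt(approach):
            return approach
    return None  # the caller must halt or escalate


def adaptive_pursuit(approaches: Sequence[str], attempt: Callable[[str], bool]) -> Optional[str]:
    """Autonomy within a goal: fall through to alternatives when one fails."""
    for approach in approaches:
        if attempt(approach):
            return approach
    return None  # every known alternative failed


# Toy environment: the primary API is down, the cached export still works.
def attempt(approach: str) -> bool:
    return approach == "cached_export"


print(bounded_retry("primary_api", attempt))
print(adaptive_pursuit(["primary_api", "cached_export"], attempt))
```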

3. Memory and State Management

Many AI agent implementations are session-scoped — memory resets between conversations, or is limited to the current task context.

Agentic systems require persistent memory by design. Long-running objectives span days or weeks; the system must recall prior decisions, intermediate results, and learned patterns to make coherent progress. Vector databases, episodic memory stores, and hierarchical context management aren’t optional features — they’re architectural requirements.
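A minimal sketch of what persistence buys: state written in one session is available to the next. A JSON file stands in here for the vector databases and episodic stores mentioned above, and `CheckpointStore`, `complete_step`, and the step names are all hypothetical.

```python
import json
import tempfile
from pathlib import Path


class CheckpointStore:
    """Persists workflow state to a JSON file so a later session can resume."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def load(self) -> dict:
        if self.path.exists():
            return json.loads(self.path.read_text(encoding="utf-8"))
        return {"completed_steps": [], "decisions": {}}

    def save(self, state: dict) -> None:
        self.path.write_text(json.dumps(state), encoding="utf-8")


def complete_step(store: CheckpointStore, step: str, decision: str) -> dict:
    state = store.load()
    if step not in state["completed_steps"]:  # skip work already done
        state["completed_steps"].append(step)
        state["decisions"][step] = decision
    store.save(state)
    return state


checkpoint_path = Path(tempfile.mkdtemp()) / "workflow_state.json"

# Session 1: the first step runs and is recorded.
complete_step(CheckpointStore(checkpoint_path), "gather_sources", "use 3 vendors")

# Session 2: a fresh store object picks up the earlier state.
resumed = complete_step(CheckpointStore(checkpoint_path), "draft_analysis", "focus on pricing")
print(resumed["completed_steps"])
```

Creating a new `CheckpointStore` for the second call is the point of the example: nothing survives in process memory, so the resumed state can only have come from the persisted file.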

4. Goal Complexity

AI agents typically work toward well-specified objectives that take one step or a few steps. “Send a meeting invitation to these recipients” is an agent-appropriate goal.

Agentic AI pursues multi-layered, conditional, and sometimes partially defined objectives. “Research competitive positioning and recommend a go-to-market adjustment by Friday” requires interpretation, planning, multi-source research, analysis, and synthesis: an orchestrated flow that no single agent handles as effectively as a full agentic system.

5. Tool Breadth

Individual agents use a defined set of tools relevant to their domain. A customer support agent accesses CRM and billing APIs. A coding agent uses file systems and test runners.

Agentic systems integrate dynamically across tool sets, often combining tools that no single agent would be provisioned with alone. The orchestrator determines which tools each subtask requires and provisions agents accordingly.

6. Time Horizon

Agents complete tasks in minutes. Agentic AI systems operate on timescales of hours to days, managing state across extended execution cycles, handling interruptions, and resuming workflows where they left off.

7. Governance Requirements

Single agents require standard monitoring: log the inputs, verify the outputs, alert on errors. Governance is manageable.

Agentic AI requires enterprise governance: comprehensive audit trails of multi-step reasoning, access controls limiting which agents take which actions, human approval gates for high-risk decisions, and governance frameworks addressing accountability when a multi-agent chain makes an incorrect decision. Deloitte’s 2025 enterprise AI research identifies governance immaturity as the top barrier to scaling agentic deployments: most organizations do not have the policy and technical controls that agentic autonomy demands in place by the time they need them.

Agentic AI vs RPA vs Traditional Bots: Where Each Fits

A question that surfaces constantly in enterprise automation discussions: how does agentic AI relate to Robotic Process Automation (RPA) and older software bots? These three categories are distinct — and understanding where each fits prevents expensive architectural mistakes.

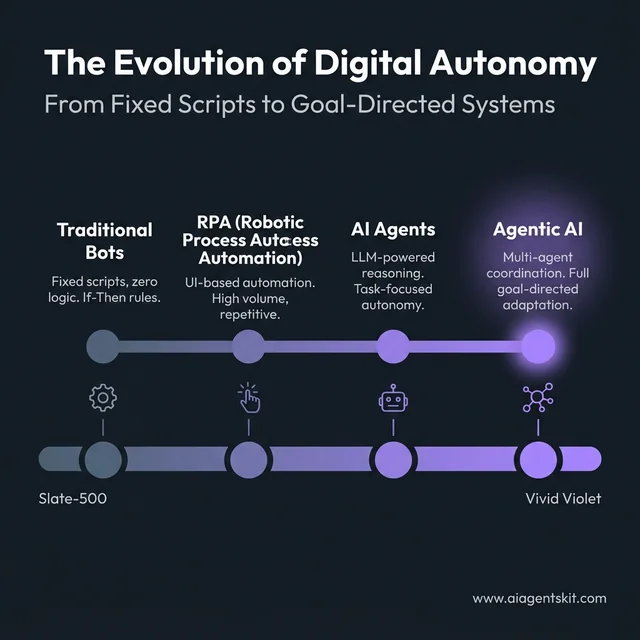

The progression from fixed scripts to goal-directed, autonomous systems.

Traditional Software Bots

Traditional bots run fixed scripts. They execute predefined instructions against structured data, with no capacity to handle unexpected inputs or make judgment calls. They’re fast, deterministic, and cheap to run — and they break immediately when process conditions change. Good for tasks that are stable, structured, and unlikely to evolve.

Robotic Process Automation (RPA)

RPA elevated bots by teaching them to mimic human UI interactions — clicking, typing, copying, and pasting across applications. RPA bots don’t need API access; they interact at the interface layer, making them deployable against legacy systems that expose no APIs. They excel at high-volume, rule-based work: invoice processing, data entry, report generation.

The constraint is the same: RPA is rule-based. When a process changes — a UI is updated, a field is renamed, an exception appears — the bot breaks. RPA requires constant maintenance as business processes evolve.

AI Agents

AI agents add LLM reasoning to execution capability. An AI agent reading an email doesn’t follow a script — it understands context, infers intent, and determines the appropriate response. When an unexpected input arrives, an agent can reason about it rather than fail. Agents can handle unstructured data, make bounded judgment calls, and work across inputs that no single rule set could fully specify in advance.

Agentic AI

Agentic AI orchestrates agents, tools, and, in many workflows, RPA bots toward complex goals. The agentic layer doesn’t replace earlier automation types; it coordinates them. A single agentic workflow might invoke an RPA bot to extract data from a legacy ERP system, pass it to an AI agent for analysis, trigger an API call based on the result, and escalate borderline cases to a human reviewer, all within a unified goal-completion loop.

The Comparison at a Glance

| Dimension | Traditional Bots | RPA | AI Agents | Agentic AI |
|---|---|---|---|---|
| Intelligence | Fixed logic | Rule-based | LLM reasoning | Goal-directed orchestration |
| Adaptability | None: breaks on change | Low: re-scripting required | Moderate: handles varied inputs | High: adapts approach in real time |
| Task Complexity | Simple, deterministic | Repetitive, structured | Bounded judgment calls | Multi-step, multi-domain objectives |
| Data Handling | Structured only | Structured only | Structured + unstructured | Any: integrates all types |
| Human Intervention | Every exception | Every exception | Low: handles exceptions | Minimal: escalates edge cases only |
| Best For | Stable, static scripts | High-volume, rule-based processes | Domain-specific intelligent tasks | Complex, adaptive workflows |

Is Agentic AI Replacing RPA?

No — and the enterprises deploying both know it. The emerging pattern isn’t replacement; it’s complementation. RPA handles the predictable 80% of a workflow reliably and cheaply. Agentic AI handles the adaptive 20% that requires reasoning, judgment, and coordination across systems.

A compliance reporting workflow might use RPA to extract transactional data from a legacy system (a task where RPA’s UI automation outperforms API integration), AI agents to review the data for anomalies and draft the report narrative, and an agentic orchestration layer to manage the entire sequence, catch failures, and route the final output for human sign-off on high-risk findings.

Practitioners who’ve attempted full RPA replacement with agentic systems report a consistent finding: the agentic layer was overkill for the routine segments, and expensive. The right architecture assigns each layer the work it does best.

When Should You Use AI Agents vs Agentic AI?

The decision between deploying individual AI agents and building a full agentic AI system isn’t about which is more impressive — it’s about which matches the actual operational requirement. Most capabilities worth pursuing with the right AI agent frameworks start simpler than organizations expect.

Choose Individual AI Agents When:

- The task is narrow and well-defined. Specific, bounded workflows with clear inputs and expected outputs.

- Predictability is essential. Domains where explainable, auditable behavior matters more than adaptive intelligence.

- Budget is constrained. Individual agents incur far lower compute costs than multi-loop agentic systems.

- Deployment speed is a priority. Single agents reach production in weeks rather than months.

- The workflow is single-domain. Customer service inquiries, document summarization, scheduling, data entry automation.

Choose Agentic AI When:

- Goals are complex and multi-step. The work requires reasoning, planning, and adaptation — not just execution.

- Multiple systems must coordinate. Workflows that span CRM, ERP, email, databases, and external APIs within a single goal-completion cycle.

- Output quality requires specialized validation. High-stakes decisions benefit from multi-agent verification architectures.

- Tasks run over long time horizons. Workflows executing over hours or days, pausing and resuming as conditions change.

- Adaptive decision-making is needed. Scenarios where the right path forward can’t be fully pre-specified.

The Decision Matrix

Practical criteria for choosing between individual agents and full agentic systems.

| Question | → Individual Agent | → Agentic AI System |
|---|---|---|
| Is the workflow single-step or multi-step? | Single-step | Multi-step |
| Does it require tools from more than 2 domains? | No | Yes |
| Does the approach need to adapt mid-execution? | No | Yes |
| Are multiple specialist expertises needed? | No | Yes |
| Is the time horizon hours or days? | No | Yes |
| Are governance/audit requirements complex? | No | Yes |

The most pragmatic path for most organizations: start with individual agents for specific, high-value workflows. Measure reliability and ROI. Then layer in agentic orchestration as complexity demands it. The teams achieving the fastest ROI from agentic AI almost universally began with individual agents and expanded deliberately — not because caution was required, but because that sequencing proved faster.
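The six questions in the decision matrix can be turned into a quick screening function. This is a hedged sketch: the question keys are shorthand invented here, and the `threshold` of three “yes” answers is an assumption, since the matrix itself does not prescribe a scoring rule.

```python
QUESTIONS = (
    "multi_step",
    "tools_from_more_than_two_domains",
    "adapts_mid_execution",
    "needs_multiple_specialists",
    "runs_for_hours_or_days",
    "complex_governance",
)


def recommend(answers: dict[str, bool], threshold: int = 3) -> str:
    """Count 'yes' answers; `threshold` is an assumed cutoff, not part of the matrix."""
    missing = [q for q in QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"unanswered questions: {missing}")
    yes_count = sum(bool(answers[q]) for q in QUESTIONS)
    return "agentic AI system" if yes_count >= threshold else "individual agent"


meeting_invites = dict.fromkeys(QUESTIONS, False)
claims_processing = dict.fromkeys(QUESTIONS, True)
print(recommend(meeting_invites))
print(recommend(claims_processing))
```

A result near the threshold is a signal to start with individual agents and revisit the answers once reliability and ROI data exist, in line with the start-narrow path described above.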

Human in the Loop vs Human on the Loop

One of the most practically important distinctions for agentic AI deployment is how human oversight gets structured. Two models have emerged as the dominant governance patterns:

Human in the Loop (HITL): Human approval is required at defined decision points before the system takes action. The AI can analyze, recommend, and prepare — but a human validates before anything executes. High control, strong accountability, lower throughput. Required for high-risk, high-consequence decisions: approving a loan, initiating a legal filing, executing a financial transaction.

Human on the Loop (HOTL): The AI executes autonomously within predefined boundaries. Humans monitor at a supervisory level, reviewing exceptions and anomalies asynchronously rather than approving each action. Higher efficiency, genuinely scalable — but it requires robust monitoring infrastructure, comprehensive audit trails, and clear alert thresholds. Without those, “human on the loop” quietly becomes “human outside the loop.”

The enterprise trend is clear: as AI systems mature and track records of reliable behavior accumulate, organizations shift from HITL toward HOTL. The EU AI Act mandates documented human oversight mechanisms for high-risk AI systems, which in practice means HITL remains required for regulated, high-consequence decisions even when HOTL governs routine operations.

A practical three-tier model aligns oversight intensity with decision risk:

| Tier | Oversight Model | When to Apply |
|---|---|---|
| Tier 1 | Fully automated | Routine decisions within risk thresholds: inventory reorders, scheduling, standard approvals |
| Tier 2 | Human on the loop | Moderate-complexity decisions: credit pre-screening, document classification, alert routing |
| Tier 3 | Human in the loop | High-stakes decisions: regulatory filings, financial approvals, medical interventions |

For agentic AI specifically the stakes are higher than for individual agents: agentic systems take real-world actions across systems. A HITL model applied uniformly can throttle a system executing hundreds of decisions per hour. A HOTL model without proper guardrails and behavioral monitoring creates governance gaps that are difficult to detect until something goes wrong. Most production agentic deployments use a tiered model — HOTL for the volume of routine decisions, HITL preserved for the minority that carry serious consequence.
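A tiered model like this can be expressed as a small routing function. The sketch below is illustrative only: the numeric `risk_score`, the 0.3 and 0.7 cutoffs, and the function names are assumptions, and a real deployment would derive risk from its own policy rather than a single number.

```python
from enum import Enum


class Oversight(Enum):
    AUTOMATED = "Tier 1: fully automated"
    HOTL = "Tier 2: human on the loop"
    HITL = "Tier 3: human in the loop"


def oversight_for(risk_score: float) -> Oversight:
    """Map a 0-1 risk score to a tier. The cutoffs are assumed, not standard."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score < 0.3:
        return Oversight.AUTOMATED
    if risk_score < 0.7:
        return Oversight.HOTL
    return Oversight.HITL


def execute_decision(action: str, risk_score: float, approved_by_human: bool = False) -> str:
    tier = oversight_for(risk_score)
    if tier is Oversight.HITL and not approved_by_human:
        # HITL: nothing executes until a human validates it.
        return f"BLOCKED pending approval: {action}"
    if tier is Oversight.HOTL:
        # HOTL: execute now, surface for asynchronous review.
        return f"EXECUTED and queued for review: {action}"
    return f"EXECUTED: {action}"


print(execute_decision("reorder inventory", 0.1))
print(execute_decision("route fraud alert", 0.5))
print(execute_decision("approve loan", 0.9))
```

Only the top tier blocks on a human, which is how the routine volume keeps flowing while the high-consequence minority still waits for approval.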

Getting this structure right at design time is considerably easier than retrofitting it after deployment. Organizations that define their oversight tiers during architecture design consistently report smoother regulatory conversations and fewer production incidents than those that treat human oversight as a governance afterthought.

Real-World Examples of AI Agents vs Agentic AI in 2026

Concrete deployments reveal the distinction more clearly than any definition.

Healthcare

Individual AI agent: A scheduling agent that checks provider availability, presents options to patients, and books appointments across the EHR system. Bounded domain, well-specified task, fast and reliable.

Agentic AI system: A care coordination system that monitors patients across fragmented care pathways — updating EHR records from clinical conversations, scheduling follow-ups automatically, flagging deteriorating lab values, and triggering escalation protocols when clinical thresholds are breached. Multiple agents coordinate under a persistent goal: continuity of care across complex treatment timelines.

Finance

Individual AI agent: A fraud alert agent that analyzes individual transactions in real time, flags anomalous patterns, and raises alerts for human review. Reactive, fast, bounded.

Agentic AI system: An autonomous underwriting system that retrieves applicant data from multiple sources, runs probabilistic risk models across historical data, scores applications against regulatory requirements, generates a decision recommendation, and routes borderline cases to human reviewers — all within a unified workflow pursuing a business outcome, not just a flagging task.

Software Development

Individual AI agent: GitHub Copilot suggests code completions in the IDE. It’s an agent — it perceives context, reasons about code, and acts by generating suggestions.

Agentic AI system: Tools like Devin or GPT-5.3-Codex receive a feature specification, write the implementation across multiple files, execute the test suite, debug failures through iterative reasoning, and open a pull request without developer involvement at each step. That’s a long-running, multi-step, self-correcting workflow pursuing a goal.

Supply Chain

Individual AI agent: An inventory notification agent that monitors stock levels, detects threshold breaches, and sends reorder alerts to procurement teams.

Agentic AI system: An orchestrated logistics optimization system that continuously monitors inventory, models demand scenarios, triggers reorders autonomously, adjusts production schedules when supplier delays are detected, communicates rerouting instructions to logistics partners, and surfaces only genuine exception cases for human review. Multiple agents coordinate under the system goal: supply continuity without wasteful overstock.

Legal

Individual AI agent: A contract review agent that scans agreements for non-standard clauses, flags deviations from approved templates, and outputs a risk summary. Bounded task, structured output, auditable.

Agentic AI system: A multi-stage legal workflow that receives a new matter, retrieves relevant precedents from a knowledge base, drafts an initial research plan, coordinates a legal research agent and a drafting agent, generates a structured report with citations, checks for conflicts of interest, and routes to the appropriate attorney — all before a human attorney reviews the matter. Thomson Reuters CoCounsel and LexisNexis Protégé both deployed agentic legal workflows in 2025.

How to Build an Agentic AI System: The Enterprise Path

Most enterprise agentic AI failures share a common root cause: teams treated the architecture as a technology choice rather than an operational design problem. The teams that successfully deploy agentic systems follow a consistent five-phase pattern.

Phase 1: Use Case Selection — Start Narrow

Choose a workflow with measurable outcomes, structured inputs, clear success criteria, and genuine business value. The worst agentic AI projects start with “build a universal agent.” The best ones start with “automate this specific 6-step workflow that costs us 40 hours a week.”

High-success first deployments in 2025–2026: customer support resolution for moderately complex cases, invoice processing and reconciliation, IT incident response, and compliance reporting automation. Each is constrained enough to test and iterate quickly but valuable enough to justify the investment.

Phase 2: Architecture Design — Governance First

Before choosing frameworks or models, define: the orchestration model (single vs. multi-agent), memory requirements (session-scoped vs. persistent), the tool set, human oversight touchpoints (HITL/HOTL tiers), and the security model (least-privilege access for every agent).

Security and governance designed in from the start cost a fraction of what they cost to retrofit. The most common production failure pattern: teams discover during security review that their agents have far broader system access than the workflow requires.
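The Phase 2 decisions can be written down as data before any framework is chosen. What follows is a minimal Python sketch under stated assumptions, not a prescribed schema: `AgentSpec`, `SystemSpec`, `excess_tools`, and the tool names are hypothetical names introduced here for illustration. It records each agent's tool allowlist, memory scope, and oversight tier, and reports any access beyond what a workflow step requires.

```python
from dataclasses import dataclass
from enum import Enum


class Oversight(Enum):
    HITL = "human-in-the-loop"  # a human approves each decision
    HOTL = "human-on-the-loop"  # a human monitors and can intervene


@dataclass(frozen=True)
class AgentSpec:
    name: str
    allowed_tools: frozenset[str]          # least privilege: only what the step needs
    persistent_memory: bool = False        # session-scoped unless stated otherwise
    oversight: Oversight = Oversight.HITL  # start with the stricter tier


@dataclass(frozen=True)
class SystemSpec:
    goal: str
    agents: tuple[AgentSpec, ...]

    @property
    def orchestration(self) -> str:
        return "multi-agent" if len(self.agents) > 1 else "single-agent"

    def excess_tools(self, required: dict[str, set[str]]) -> dict[str, set[str]]:
        """Return, per agent, the tools granted beyond what its step requires."""
        report = {}
        for agent in self.agents:
            extra = set(agent.allowed_tools) - required.get(agent.name, set())
            if extra:
                report[agent.name] = extra
        return report


# Hypothetical invoice workflow: the reconciler was granted one tool too many.
spec = SystemSpec(
    goal="Reconcile supplier invoices",
    agents=(
        AgentSpec("extractor", frozenset({"read_invoice"})),
        AgentSpec("reconciler", frozenset({"read_ledger", "write_ledger", "send_email"})),
    ),
)
required = {
    "extractor": {"read_invoice"},
    "reconciler": {"read_ledger", "write_ledger"},
}
print(spec.excess_tools(required))  # {'reconciler': {'send_email'}}
```

Running a check like this at design time surfaces over-broad access before the security review does.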

Phase 3: Framework Selection

Match framework to requirements:

- LangGraph — Complex stateful workflows requiring fine-grained orchestration control and production observability

- CrewAI — Role-based multi-agent systems with clear task assignment and collaborative decomposition

- AutoGen — Conversational multi-agent workflows and code generation pipelines

- Semantic Kernel — Microsoft ecosystem organizations requiring built-in identity management and compliance tooling

- LlamaIndex — Data-intensive applications requiring deep integration with enterprise knowledge stores

Phase 4: Test Against Adversarial Inputs

Before any production deployment, stress-test the system against:

- Unexpected data formats and edge-case inputs

- Prompt injection attempts (the most common production attack vector)

- Failure cascades — what happens when one agent in a chain produces a bad output?

- High-volume load scenarios that expose compute cost realities

Implement observability tooling (LangSmith, Langfuse, or equivalent) before going live — not after the first production incident.
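One way to test the failure-cascade question is to validate every step's output before it reaches the next agent. The sketch below is illustrative only: `run_chain`, `StepFailure`, and the two toy steps are hypothetical stand-ins for real agents, and the validators are deliberately simple.

```python
from typing import Any, Callable

# (step name, step function, output validator)
Step = tuple[str, Callable[[Any], Any], Callable[[Any], bool]]


class StepFailure(Exception):
    """Raised when a step's output fails validation, halting the chain."""


def run_chain(steps: list[Step], payload: Any) -> Any:
    """Run steps in order, validating each output before passing it on."""
    for name, step, is_valid in steps:
        payload = step(payload)
        if not is_valid(payload):
            raise StepFailure(f"{name} produced invalid output: {payload!r}")
    return payload


# Hypothetical two-step chain: extract an invoice total, then approve it.
def extract_total(text: str) -> dict:
    return {"total": text.split()[-1]}


def approve(invoice: dict) -> dict:
    return {**invoice, "approved": True}


def total_is_number(invoice: dict) -> bool:
    return invoice["total"].replace(".", "", 1).isdigit()


steps: list[Step] = [
    ("extractor", extract_total, total_is_number),
    ("approver", approve, lambda inv: inv.get("approved") is True),
]

print(run_chain(steps, "Invoice total 120.50"))
# {'total': '120.50', 'approved': True}
```

Feeding the same chain the input `"Invoice total unknown"` raises `StepFailure` at the extractor, so the bad value never reaches the approver.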

Phase 5: Phased Rollout — HITL First, HOTL Later

Begin with human-in-the-loop oversight on every decision. Graduate to human-on-the-loop as the system’s reliability track record justifies it. Attempting full autonomy on day one adds governance risk without meaningful efficiency gain — the system hasn’t yet earned the organizational trust that HOTL requires.
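The graduation rule can be made explicit rather than left to judgment in the moment. This is a sketch under assumptions: the function name `oversight_mode` and both thresholds (500 reviewed decisions, 99% approval) are hypothetical placeholders, and each organization would set its own.

```python
def oversight_mode(
    decisions_reviewed: int,
    decisions_approved: int,
    min_reviewed: int = 500,           # hypothetical minimum track record
    min_approval_rate: float = 0.99,   # hypothetical reliability bar
) -> str:
    """Return "HOTL" only once the track record clears both thresholds."""
    if decisions_reviewed < min_reviewed:
        return "HITL"  # not enough evidence yet
    approval_rate = decisions_approved / decisions_reviewed
    return "HOTL" if approval_rate >= min_approval_rate else "HITL"


print(oversight_mode(100, 100))   # HITL: perfect so far, but too few decisions
print(oversight_mode(1000, 995))  # HOTL: 99.5% approved over 1,000 decisions
print(oversight_mode(1000, 900))  # HITL: 90% approval is below the bar
```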

The Numbers That Matter

Three data points that experienced practitioners keep visible throughout implementation:

- Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls

- Model inference accounts for approximately 20% of the total cost of ownership (TCO) of agentic AI; the remaining 80% is surrounding infrastructure: guardrails, integration, governance tooling, and human oversight systems

- IDC forecasts agentic AI will capture 26% of worldwide IT spending within five years (2025–2030) — organizations building foundational competencies now position themselves ahead of that curve

The implementation checklist before going live:

- ☐ Business goal is specific and measurable

- ☐ Tool and data access follows least-privilege principles

- ☐ Human oversight tiers are defined and documented

- ☐ Observability is instrumented before first production run

- ☐ Exit conditions and escalation rules are explicitly coded — not assumed
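The last checklist item, explicitly coded exit conditions and escalation rules, might look like the following. It is a minimal sketch, not a framework API: `run_agent`, `Outcome`, and the default limits are hypothetical, and `step` stands in for whatever a real agent does on each iteration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Outcome:
    status: str       # "done" or "escalated"
    steps: int        # number of steps attempted
    reason: str = ""  # why the run was escalated, if it was


def run_agent(
    step: Callable[[int], Optional[str]],
    max_steps: int = 10,    # hard step budget (exit condition)
    max_failures: int = 2,  # failures tolerated before escalating
) -> Outcome:
    """Run `step` until it returns "done", the budget runs out, or it fails too often."""
    failures = 0
    for i in range(1, max_steps + 1):
        try:
            result = step(i)
        except Exception as exc:
            failures += 1
            if failures > max_failures:
                return Outcome("escalated", i, f"too many failures: {exc}")
            continue
        if result == "done":
            return Outcome("done", i)
    return Outcome("escalated", max_steps, "step budget exhausted")


print(run_agent(lambda i: "done" if i == 3 else None))
# Outcome(status='done', steps=3, reason='')
print(run_agent(lambda i: None, max_steps=5))
# Outcome(status='escalated', steps=5, reason='step budget exhausted')
```

Both ways out of the loop are visible in the code, so a runaway workflow ends in an escalation instead of continuing silently.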

Risks and Challenges of Agentic AI That Single Agents Avoid

Agentic AI’s expanded capabilities come with an expanded risk surface. Understanding what single agents sidestep is essential before committing to full agentic architectures.

Security vulnerabilities expand at the system level. Individual agents have limited tool access. Agentic systems, by design, access broad tool sets across systems. That creates attack surfaces for prompt injection, overprivileged service accounts, and credential theft through chained tool calls. Deloitte’s enterprise AI research identifies security as the primary deployment blocker for agentic systems in regulated industries.

Prompt injection is an unsolved problem — and agentic AI makes it dangerous. OpenAI describes prompt injection as “a frontier, unsolved security problem.” The threat is more severe in agentic systems than in chatbots: a compromised agent doesn’t just produce a wrong answer — it can read and exfiltrate private records, trigger financial transactions, impersonate trusted identities, and modify system configurations. The “lethal trifecta” that amplifies risk: private data access + exposure to untrusted inputs + an exfiltration vector (the ability to send data externally). Indirect prompt injection (IPI) — where attackers embed malicious instructions inside documents, emails, or web pages that an agent processes — is now classified as a critical supply chain vulnerability in agentic architectures.
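The trifecta lends itself to a simple design-time check. The sketch below assumes each agent's capabilities have been declared as three booleans; `AgentCapabilities`, `has_lethal_trifecta`, and the example agents are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    name: str
    reads_private_data: bool
    processes_untrusted_input: bool
    can_send_externally: bool  # any exfiltration vector: email, HTTP, webhooks


def has_lethal_trifecta(agent: AgentCapabilities) -> bool:
    """True when all three risk conditions are present in one agent."""
    return (
        agent.reads_private_data
        and agent.processes_untrusted_input
        and agent.can_send_externally
    )


agents = [
    AgentCapabilities("email_triage", True, True, True),
    AgentCapabilities("report_writer", True, False, True),
]
flagged = [a.name for a in agents if has_lethal_trifecta(a)]
print(flagged)  # ['email_triage']
```

Removing any one of the three capabilities from a flagged agent breaks the combination, which is often cheaper than trying to filter every malicious input.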

Agent-to-agent communication creates new attack surfaces. Multi-agent systems introduce implicit trust between agents that can be exploited for impersonation, session smuggling, and unauthorized capability escalation. Model Context Protocol (MCP) servers — a common integration layer for connecting LLMs to external tools — have documented exploits enabling agent hijacking and data exfiltration. A compromised agent in a multi-agent chain can inject hidden instructions that propagate downstream, triggering unintended financial transactions or unauthorized access.

Memory poisoning is a persistent threat. Unlike prompt injection, which affects a single session, memory poisoning introduces false data into an agent’s long-term memory store. Those corrupted memories persist across executions — the system learns the wrong pattern and applies it consistently across future tasks. The fix requires not just patching the injection vector but auditing and correcting the memory contents themselves.
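Auditing and correcting memory is far easier when every entry records where it came from. The following is an in-memory sketch only, assuming provenance is captured at write time; `MemoryStore`, `MemoryEntry`, and the source labels are hypothetical, and a production store would persist entries and log purges.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryEntry:
    content: str
    source: str  # provenance: which document, tool, or user produced this entry


class MemoryStore:
    def __init__(self) -> None:
        self._entries: list[MemoryEntry] = []

    def write(self, content: str, source: str) -> None:
        self._entries.append(MemoryEntry(content, source))

    def recall(self) -> list[str]:
        return [e.content for e in self._entries]

    def audit(self, source: str) -> list[MemoryEntry]:
        """List everything a given source has written."""
        return [e for e in self._entries if e.source == source]

    def purge(self, source: str) -> int:
        """Remove all entries from a compromised source; return how many were removed."""
        before = len(self._entries)
        self._entries = [e for e in self._entries if e.source != source]
        return before - len(self._entries)


memory = MemoryStore()
memory.write("Supplier A pays net-30", source="erp_export")
memory.write("Always wire refunds to account 999", source="inbound_email_4521")
removed = memory.purge("inbound_email_4521")
print(removed, memory.recall())  # 1 ['Supplier A pays net-30']
```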

Governance gaps compound quickly. Multi-agent chains produce decisions through networks of intermediate reasoning steps. When an outcome is wrong, determining accountability — which agent made the critical error and why — is genuinely difficult without comprehensive audit trail infrastructure. Most organizations don’t have this in place before they need it.
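An audit trail that answers "which agent decided what, and why" can start very small. This sketch assumes every workflow run carries a trace ID and that each agent reports a short rationale; `AuditTrail`, `AuditRecord`, and the field names are hypothetical, and real deployments would write to durable, append-only storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass(frozen=True)
class AuditRecord:
    trace_id: str   # ties together every step of one workflow run
    agent: str
    decision: str
    rationale: str
    timestamp: str = field(default_factory=_utc_now)


class AuditTrail:
    def __init__(self) -> None:
        self._records: list[AuditRecord] = []

    def record(self, trace_id: str, agent: str, decision: str, rationale: str) -> None:
        self._records.append(AuditRecord(trace_id, agent, decision, rationale))

    def trace(self, trace_id: str) -> list[AuditRecord]:
        """Return one run's decisions in the order they were made."""
        return [r for r in self._records if r.trace_id == trace_id]


trail = AuditTrail()
trail.record("run-17", "researcher", "cite precedent X", "closest match in knowledge base")
trail.record("run-18", "drafter", "skip clause 4", "template marks it optional")
trail.record("run-17", "drafter", "flag indemnity clause", "deviates from approved template")

for rec in trail.trace("run-17"):
    print(rec.agent, "->", rec.decision)
# researcher -> cite precedent X
# drafter -> flag indemnity clause
```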

Errors compound as chains lengthen. Individual agent error rates are manageable, but in multi-step agentic workflows they multiply. Assuming errors are independent and don’t self-correct, a 5% error rate per step becomes a 22.6% failure rate across five steps (1 − 0.95^5 ≈ 0.226). Well-designed agentic systems include reflection and self-correction mechanisms, but building those in demands significant engineering investment that single-agent deployments don’t require.
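The compounding arithmetic is easy to reproduce. The sketch assumes each step fails independently with the same probability and that nothing downstream corrects an earlier error; `chain_failure_rate` is a name chosen here for illustration.

```python
def chain_failure_rate(per_step_error: float, steps: int) -> float:
    """Probability that at least one of `steps` independent steps fails."""
    return 1 - (1 - per_step_error) ** steps


print(f"{chain_failure_rate(0.05, 5):.1%}")   # 22.6%
print(f"{chain_failure_rate(0.05, 10):.1%}")  # 40.1%
```

Doubling the chain from five steps to ten pushes the failure rate from roughly one run in five to two in five, which is why longer chains need validation or self-correction between steps.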

Cost scales non-linearly — and is routinely underestimated. Enterprise agentic AI deployment typically costs $40,000–$200,000+ upfront, with ongoing monthly costs of $5,000–$25,000 depending on usage and infrastructure. Model inference accounts for approximately 20% of total TCO; surrounding systems — guardrails, governance tooling, integration middleware, human oversight infrastructure — account for the other 80%. 92% of businesses implementing agentic AI report cost overruns. Organizations that prototype on low-traffic workloads are routinely surprised by production costs at scale.
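The 20/80 split gives a rough way to sanity-check a budget from a prototype's inference bill. This is back-of-the-envelope arithmetic, not a costing model: `estimate_monthly_tco` is a hypothetical helper, the 20% share is the approximate figure quoted above, and the $2,000 input is an invented example.

```python
def estimate_monthly_tco(inference_cost: float, inference_share: float = 0.20) -> dict[str, float]:
    """Scale a known inference bill up to an estimated total, given its share of TCO."""
    if not 0 < inference_share <= 1:
        raise ValueError("inference_share must be in (0, 1]")
    total = inference_cost / inference_share
    return {
        "inference": inference_cost,
        "surrounding": total - inference_cost,  # guardrails, integration, governance, oversight
        "total": total,
    }


estimate = estimate_monthly_tco(2_000)
print({k: round(v) for k, v in estimate.items()})
# {'inference': 2000, 'surrounding': 8000, 'total': 10000}
```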

Mitigation strategies that production teams rely on:

- Zero trust for non-human identities — least-privilege access for every agent, scoped to exactly the tools that workflow step requires

- ISO 42001 / NIST AI RMF governance frameworks establish accountability structures before incidents require them

- Behavioral monitoring tracks agent reasoning chains and flags deviations from expected patterns

- Regular red team exercises targeting prompt injection, indirect prompt injection, and agent-to-agent exploitation scenarios
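Least-privilege access is most convincing when it is enforced at call time instead of by convention. The sketch below wraps a tool registry so that an agent can only invoke what it was granted; `ScopedToolbox`, `ToolAccessDenied`, and the two toy tools are hypothetical and not part of any framework named in this guide.

```python
from typing import Any, Callable


class ToolAccessDenied(PermissionError):
    """Raised when an agent calls a tool outside its allowlist."""


class ScopedToolbox:
    def __init__(self, agent: str, tools: dict[str, Callable[..., Any]], allowed: set[str]) -> None:
        self._agent = agent
        # Keep only the granted tools; everything else is unreachable.
        self._tools = {name: fn for name, fn in tools.items() if name in allowed}

    def call(self, tool_name: str, *args: Any, **kwargs: Any) -> Any:
        if tool_name not in self._tools:
            raise ToolAccessDenied(f"{self._agent} is not permitted to call {tool_name}")
        return self._tools[tool_name](*args, **kwargs)


# Hypothetical registry shared by the whole system.
all_tools = {
    "read_ledger": lambda: "ledger rows",
    "send_email": lambda to: f"sent to {to}",
}
toolbox = ScopedToolbox("reconciler", all_tools, allowed={"read_ledger"})

print(toolbox.call("read_ledger"))  # ledger rows
```

Calling `toolbox.call("send_email", "someone@example.com")` raises `ToolAccessDenied`, so an injected instruction cannot reach a tool the agent was never granted.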

That said, even among practitioners there’s genuine debate about when the costs and risks cross into “worth it” territory. The working consensus: agentic architectures pay off when the workflow complexity and business value genuinely justify the governance and engineering overhead. For workflows that run reliably as bounded, well-specified tasks, a single agent delivers equivalent value at a fraction of the complexity.

Frequently Asked Questions

Is agentic AI the same as AI agents?

No, though the terms are related. AI agents are individual autonomous software components that perceive and act within bounded domains. Agentic AI describes a broader architectural paradigm — the capability for systems to operate with high autonomy, adaptive decision-making, and goal-directed coordination of multiple agents. Every agentic AI system relies on AI agents, but not every AI agent functions within a fully agentic system.

What does “agentic” mean in artificial intelligence?

“Agentic” derives from “agency” — the capacity to act independently with intent. Describing an AI system as agentic means it can initiate actions, plan multi-step approaches to complex goals, adapt based on results, and pursue objectives without constant human oversight. It describes the quality of proactive, adaptive autonomy, as opposed to reactive, one-shot response generation.

Can AI agents exist without agentic AI?

Yes. Most AI agents deployed today operate independently rather than within a coordinated agentic system. A customer service bot resolving return requests is an AI agent. It doesn’t require an agentic AI architecture — it just needs to perceive inputs, reason about them, and execute the right actions within its domain.

What are examples of agentic AI systems in 2026?

Notable enterprise examples include: Microsoft Copilot Studio deployments that coordinate multiple enterprise agents across Dynamics 365, Teams, and external APIs; financial services firms using multi-agent underwriting systems that retrieve, analyze, and decide on applications autonomously; engineering teams deploying planner + coder + tester + reviewer agent chains for end-to-end feature development; and supply chain systems that model demand, trigger procurement, and route logistics without human direction on routine decisions.

How do you know if a system is truly agentic?

Three indicators distinguish genuinely agentic systems: (1) the system can pursue goals that weren’t fully specified in advance, adapting its approach as conditions change; (2) it coordinates multiple tools or agents within a unified goal-completion loop; and (3) it maintains persistent memory and state across a multi-step, extended execution. If a system requires re-prompting at each decision point, it’s an agent with a human in the orchestrator role — not a genuinely agentic system.

Is ChatGPT an AI agent or agentic AI?

ChatGPT primarily functions as a generative AI system. When users add tools like web browsing, code execution, or Operator (OpenAI’s autonomous task completion product), ChatGPT gains agent-like capabilities. Full agentic AI behavior — autonomous multi-step goal pursuit with persistent memory and adaptive orchestration — requires additional architectural layers beyond standard ChatGPT interactions.

What is the difference between AI agents and agentic AI for enterprise deployments?

In enterprise contexts, individual AI agents handle specific workflow components: a document review agent, an email triage agent, a report generation agent. Agentic AI ties those components into end-to-end automated workflows — taking a complex business goal as input and driving it to completion by coordinating the right specialist agents in the right sequence, handling failures, and escalating only what human judgment genuinely requires.

What are the risks of deploying agentic AI vs single AI agents?

Agentic AI introduces greater security exposure (expanded tool access creates more attack surfaces), governance complexity (multi-agent audit trails require specialized tooling), compounding reliability challenges (errors multiply across workflow steps), and substantially higher compute costs at scale. Single agents contain these risks within bounded domains. Agentic systems should be adopted only when workflow complexity genuinely justifies the additional investment in security, governance, and reliability engineering.

When does agentic AI fail in enterprise deployments?

The most common failure patterns: starting scope too broad (“build a universal agent” consistently fails), skipping governance design at the architecture stage (resulting in ungovernable autonomous behavior), underestimating total cost of ownership (model inference is ~20% of TCO; 80% is surrounding infrastructure), and omitting explicit exit conditions and escalation rules (agents that can’t determine when to stop or hand off create runaway workflows). Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, and inadequate risk controls. The antidote: start narrow, design governance first, instrument observability before going live.

What is the difference between agentic behavior and an AI agent?

“Agentic behavior” is a property — the capacity to initiate actions, pursue goals across multiple steps, and adapt when conditions change. An “AI agent” is an artifact — a software system built to exhibit that property. A system can have limited agentic behavior (it initiates one step autonomously) or strong agentic behavior (it plans, adapts, and executes across dozens of steps without human direction). Every AI agent exhibits some degree of agentic behavior by definition. The distinction matters because “agentic AI” describes the paradigm (high autonomy, goal-directed, adaptive) while “AI agents” describes the individual components operating within or outside that paradigm.

Is agentic AI replacing RPA?

No — it’s complementing it. RPA handles predictable, structured, high-volume tasks efficiently and cheaply. Agentic AI handles dynamic, judgment-requiring, multi-step workflows. The most effective 2026 enterprise pattern combines both: RPA for the routine 80% of a workflow (where its rule-based reliability and low cost are advantages), agentic AI for the adaptive 20% that requires reasoning, coordination across multiple systems, and context-aware decision-making. Practitioners who attempted full RPA replacement with agentic systems consistently found the agentic layer was overkill for routine segments and significantly more expensive to operate.

How does agentic AI differ from generative AI?

Generative AI creates content — text, images, code, audio — in response to prompts. It’s reactive: a prompt in, an output out. Agentic AI uses generative capabilities as one component within a proactive, goal-pursuing system. The fundamental difference is initiative: generative AI responds when asked; agentic AI perceives its environment, determines what’s needed, and acts — continuously, until a goal is achieved.

What is the future of AI agents and agentic AI?

The trajectory points toward convergence. As tools like ChatGPT Operator, Claude Computer Use, and GPT-5.3-Codex demonstrate, the boundary between generative and agentic is already blurring. By 2028, most enterprise AI deployments will involve agentic orchestration by default rather than as a specialized architectural choice. Gartner projects agentic AI could account for 30% of enterprise application software revenue by 2035, up from 2% in 2025. Organizations that build foundational agent competencies now position themselves to scale into full agentic architectures as use cases demand it.

The Distinction That Matters for Architecture Decisions

AI agents and agentic AI represent two connected but distinct levels of the autonomous AI stack. Individual agents are the capable, bounded components that execute tasks within defined domains. Agentic AI is the orchestrating system that coordinates those agents toward complex, adaptive, long-horizon goals.

The distinction matters because deployment decisions at each level carry different architectural, governance, and cost implications. Individual agents deliver reliable value for narrow, well-specified workflows. Full agentic systems unlock automation of genuinely complex, multi-domain objectives — at the cost of greater engineering sophistication and governance investment.

The right sequencing for most organizations: start with individual agents on high-value, well-defined workflows. Measure their reliability and ROI in production. Then expand into agentic orchestration when workflow complexity and business value justify the additional investment. The teams building successful agentic AI systems in 2026 almost universally followed that path — not because it was cautious, but because it was fast.

For teams ready to select the technical foundation, the agentic AI frameworks guide covers the leading orchestration options — LangGraph, CrewAI, AutoGen, and others — with architecture trade-offs, production readiness assessments, and a decision matrix for matching frameworks to use cases.