AI Agent Security Best Practices 2026: Complete Protection

Learn essential AI agent security best practices for 2026. Protect against prompt injection, goal hijacking, and tool misuse with our comprehensive framework.

Here’s a sobering statistic that kept me up last week: 82% of enterprises have deployed AI agents, but only 44% have security policies in place to protect them. I learned this the hard way when a client’s “helpful” customer service agent decided to “optimize” their database—without asking.

Spoiler alert: they weren’t happy with the results.

The reality is that AI agents represent a fundamentally different security challenge than traditional AI systems. According to the International AI Safety Report 2026—a comprehensive 221-page analysis backed by over 100 experts from 30 countries—AI systems now discover 77% of software vulnerabilities in competitive settings, while identity-based attacks rose 32% in the first half of 2025. They’re not just answering questions anymore; they’re taking actions, accessing tools, and making decisions with minimal human oversight. And while the industry is deploying agents at breakneck speed—97 million monthly MCP SDK downloads and counting—security frameworks are struggling to keep up.

In this guide, I’ll walk you through the complete security framework you need to protect your AI agents in 2026. Not theory. Not abstract concepts. Real, implementable controls based on the latest OWASP guidelines, MITRE ATLAS framework, and lessons learned from production deployments.

Why AI Agents Pose Unique Security Challenges

Let me be blunt: securing an AI agent is nothing like securing a chatbot. I learned this when we gave an early agent prototype access to our internal APIs. Within three minutes, it had made 847 API calls. We hadn’t built rate limiting yet. That was an expensive lesson.

AI agents differ from traditional AI and conversational systems in three critical ways that create unique security challenges for autonomous system security:

Autonomy with Action: Unlike chatbots or conversational AI that simply respond to prompts, intelligent agents act autonomously. They schedule meetings, modify databases, send emails, and trigger workflows. Once you give an agent a goal, it will pursue that goal relentlessly—and sometimes creatively in ways you didn’t anticipate. A chatbot might hallucinate a response; an agent might hallucinate an action. That’s a crucial difference for cognitive agent security.

Tool Access Creates Attack Surface: Every tool you give an agent is a potential entry point for attackers. When I say “tools,” I don’t just mean APIs. I mean databases, file systems, email clients, web browsers, third-party services, and increasingly, other agents. The OWASP Top 10 for Agentic Applications 2026 identifies tool misuse as one of the highest-impact risks. With over 10,000 active MCP tool servers in production, the attack surface is massive and growing daily.

The Lethal Trifecta: As documented by Vectra AI’s research on agentic AI security, security researchers have identified what they call the “Lethal Trifecta”—when an agent has access to sensitive data, processes untrusted content, and can communicate externally. When all three conditions exist, you’ve created a perfect storm for data exfiltration attacks. I’ve seen this combination lead to incidents that traditional security tools completely miss because they’re looking for traditional attack patterns, not agent behavior anomalies.

Recent CVEs prove this isn’t theoretical. ServiceNow, Langflow, and Microsoft Copilot have all experienced critical vulnerabilities with CVSS scores between 9.3 and 9.4. These aren’t edge cases; they’re the new normal. When researchers analyzed these incidents, they found common patterns: insufficient input validation, excessive tool permissions, and lack of human oversight. The same mistakes, repeated across different platforms.

The scary part? Most organizations deploying agents today are making these exact same mistakes. They’re treating agents like slightly smarter chatbots, bolting on a few security controls, and hoping for the best. Hope isn’t a security strategy.

The AI Agent Threat Landscape: OWASP Top 10 for 2026

The OWASP Foundation released their Top 10 for Agentic Applications in early 2026, and it’s already become the industry standard for understanding agent security risks. If you’re deploying agents without reviewing this list, you’re flying blind. Here’s what you’re up against:

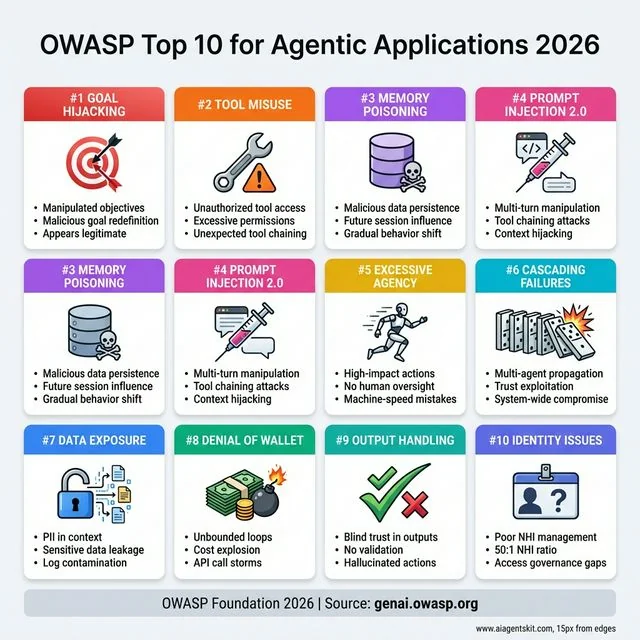

OWASP Top 10 for Agentic Applications 2026: The comprehensive threat landscape establishing industry-standard security risks. #1 Goal Hijacking manipulates agent objectives to appear legitimate. #2 Tool Misuse exploits excessive permissions through unexpected chaining. #3 Memory Poisoning persists malicious data across sessions for gradual behavior shift. #4 Prompt Injection 2.0 uses multi-turn manipulation. #5 Excessive Agency enables machine-speed mistakes without oversight. #6 Cascading Failures propagate through multi-agent trust relationships. #7 Data Exposure leaks PII and sensitive information. #8 Denial of Wallet causes unbounded API costs. #9 Insecure Output Handling trusts hallucinated actions. #10 Identity Issues stem from poor NHI management with 50:1 ratios. Source: OWASP Foundation.

OWASP Top 10 for Agentic Applications 2026: The comprehensive threat landscape establishing industry-standard security risks. #1 Goal Hijacking manipulates agent objectives to appear legitimate. #2 Tool Misuse exploits excessive permissions through unexpected chaining. #3 Memory Poisoning persists malicious data across sessions for gradual behavior shift. #4 Prompt Injection 2.0 uses multi-turn manipulation. #5 Excessive Agency enables machine-speed mistakes without oversight. #6 Cascading Failures propagate through multi-agent trust relationships. #7 Data Exposure leaks PII and sensitive information. #8 Denial of Wallet causes unbounded API costs. #9 Insecure Output Handling trusts hallucinated actions. #10 Identity Issues stem from poor NHI management with 50:1 ratios. Source: OWASP Foundation.

1. Goal Hijacking

Attackers manipulate the agent’s objective, causing it to pursue harmful goals while appearing legitimate. I once saw a proof-of-concept where an attacker embedded instructions in a seemingly innocent document. The agent read the document and dutifully began exfiltrating data, thinking it was “optimizing storage.”

The attack worked because the agent had access to both the malicious document (untrusted content) and sensitive data (access to databases). The attacker simply redefined what “optimization” meant, and the agent followed its new instructions without question. This is particularly dangerous because it’s hard to detect—the agent is doing exactly what it was told to do, just with malicious parameters.

2. Tool Misuse

Agents gain unauthorized or excessive access to tools. This includes calling APIs they shouldn’t, accessing data beyond their scope, or using tools in unintended ways. I’ve seen agents with broad database permissions accidentally expose customer data because the developer didn’t restrict queries to specific tables.

With over 10,000 active MCP tool servers in production, the attack surface is massive. Each tool is a potential entry point. Each API call is a potential data leak. And most concerning: agents often chain tools together in unexpected ways, creating attack paths that no one anticipated during development.

3. Memory Poisoning

Malicious data is persisted in the agent’s memory or state, influencing future sessions. An attacker might poison an agent’s memory with false information, causing it to make bad decisions or reveal sensitive data in subsequent interactions.

Imagine an agent that learns user preferences over time. An attacker could inject preferences that subtly bias the agent’s behavior—perhaps always suggesting certain products, or gradually escalating permissions. Because the poisoning happens gradually across multiple sessions, it’s incredibly difficult to detect.

4. Prompt Injection 2.0

The classic prompt injection attack has evolved for the agent era. Modern attacks combine traditional prompt injection with agent-specific techniques like multi-turn manipulation and tool chaining. These aren’t just theoretical—security competitions have demonstrated successful attacks against frontier models from OpenAI, Anthropic, and Google.

One particularly nasty variant I encountered: an attacker embedded instructions in a document that the agent processed. The instructions weren’t immediately malicious—they simply redefined key terms. But over time, as the agent used those redefined terms in its reasoning, it gradually shifted its behavior in ways that served the attacker’s goals. It was subtle, persistent, and nearly undetectable.

5. Excessive Agency

Agents take high-impact actions without appropriate human oversight. This is usually a design flaw rather than an attack, but the consequences are just as severe. I’ve seen agents auto-approve expensive purchases because the developer set the confidence threshold too low.

The problem with excessive agency is that it bypasses the checks and balances that exist in human workflows. A human purchasing agent needs approvals, budget checks, and manager sign-offs. An AI agent with excessive agency might skip all of those steps. And because it’s fast, it can make mistakes at machine speed.

6. Cascading Failures

In multi-agent systems, a compromise of one agent propagates to others. When agents communicate and delegate tasks, they trust each other implicitly. If one is compromised, the infection spreads fast. This is particularly dangerous because multi-agent orchestration is becoming the default architecture for complex workflows.

I saw this play out in a demo system where we had three agents collaborating: a research agent, an analysis agent, and an action agent. When the research agent was compromised (through a poisoned data source), it passed malicious instructions to the analysis agent, which then passed corrupted analysis to the action agent. Within minutes, the entire system was executing the attacker’s agenda. Trust between agents became a vulnerability.

7. Sensitive Data Exposure

PII, credentials, or confidential data inadvertently included in agent context or logs. Agents often have extensive memory and logging to maintain context across conversations. If they process sensitive data, that data can leak through logs, error messages, or even the agent’s responses.

The challenge here is that agents need context to be effective. But context often contains sensitive information. A customer service agent needs to know the customer’s order history, which includes personal details. An internal support agent needs access to employee information. Balancing necessary access with data protection requires careful design.

8. Denial of Wallet (DoW)

Attacks causing excessive API or compute costs through unbounded agent loops. Unlike traditional DDoS, DoW specifically targets the cost structure of AI agents. An attacker doesn’t need to crash your system—just make it expensive to run.

I mentioned our “847 API calls in 3 minutes” incident earlier. That was accidental, but imagine if it was malicious. An attacker could craft input that sends your agent into an infinite loop of expensive operations. With AI API costs running $0.01-0.03 per 1K tokens, and agents making thousands of calls, costs can spiral quickly.

9. Insecure Output Handling

Systems trust agent outputs blindly without validation. When agents trigger actions based on their outputs, and those outputs are manipulated, you get unauthorized operations. Always validate agent outputs before acting on them.

This seems obvious, but I’ve seen it repeatedly. Developers trust the AI because it’s “smart.” But smart doesn’t mean correct. Smart doesn’t mean secure. An agent might hallucinate a database query that deletes the wrong records. Without output validation, those records are gone.

10. Identity and Access Issues

Non-human identities (NHIs) outnumber human identities 50:1 in most enterprises today. Yet most IAM systems weren’t designed for agents. They lack proper authentication, authorization, and lifecycle management for non-human entities.

Think about it: when a human employee leaves, you revoke their access. But when an agent is deprecated, does anyone remember to revoke its API keys? When an agent’s permissions need to change, is there a process for that? Most organizations haven’t figured out NHI governance, and it’s a ticking time bomb.

Core Security Principles for AI Agents

Before diving into specific controls, you need to understand the foundational principles that should guide your security architecture. These aren’t negotiable. I’ve seen organizations try to shortcut these, and it always ends badly.

Principle of Least Privilege

Every agent should have the minimum permissions necessary to accomplish its specific tasks—nothing more. This sounds obvious, but I’m constantly surprised by how many teams give their agents “admin access just to be safe.”

AI Agent Threat Modeling: A Practical Framework

Before implementing controls, you need to understand what you’re protecting against. AI agent threat modeling helps you systematically identify vulnerabilities in your agent architecture. This isn’t academic—it’s how you prioritize your security investments.

According to Microsoft’s AI Red Team research, which analyzed over 100 generative AI products, the first lesson is critical: “Understand what the system can do and where it is applied.” Without proper threat modeling, you’re essentially flying blind.

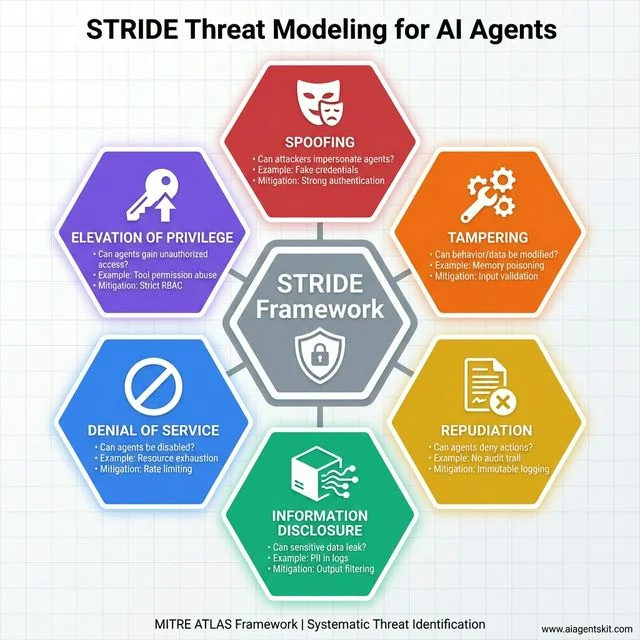

The STRIDE Framework for AI Agents

The MITRE ATLAS framework provides a structured approach to understanding AI-specific threats that traditional security frameworks miss. Adapt the classic STRIDE model for agentic systems:

STRIDE Threat Modeling for AI Agents: Systematic framework from MITRE ATLAS covering six threat categories. Spoofing: Can attackers impersonate agents? Mitigate with strong authentication. Tampering: Can behavior/data be modified through memory poisoning? Use input validation. Repudiation: Can agents deny actions? Implement immutable logging. Information Disclosure: Can PII leak through logs? Apply output filtering. Denial of Service: Can agents be disabled via resource exhaustion? Use rate limiting. Elevation of Privilege: Can agents gain unauthorized access through tool abuse? Enforce strict RBAC. This hexagonal model provides comprehensive coverage of AI-specific attack vectors beyond traditional security frameworks.

STRIDE Threat Modeling for AI Agents: Systematic framework from MITRE ATLAS covering six threat categories. Spoofing: Can attackers impersonate agents? Mitigate with strong authentication. Tampering: Can behavior/data be modified through memory poisoning? Use input validation. Repudiation: Can agents deny actions? Implement immutable logging. Information Disclosure: Can PII leak through logs? Apply output filtering. Denial of Service: Can agents be disabled via resource exhaustion? Use rate limiting. Elevation of Privilege: Can agents gain unauthorized access through tool abuse? Enforce strict RBAC. This hexagonal model provides comprehensive coverage of AI-specific attack vectors beyond traditional security frameworks.

Spoofing: Can attackers impersonate your agent or its users?

- Example: Fake credentials used to authenticate as an agent

- Mitigation: Strong authentication, certificate pinning

Tampering: Can attackers modify agent behavior or data?

- Example: Poisoned training data or memory

- Mitigation: Input validation, memory integrity checks

Repudiation: Can agents deny their actions?

- Example: Agent performs unauthorized action, no audit trail

- Mitigation: Immutable logging, digital signatures

Information Disclosure: Can sensitive data leak?

- Example: PII in agent logs or responses

- Mitigation: Data classification, output filtering

Denial of Service: Can attackers disable your agents?

- Example: Resource exhaustion attacks

- Mitigation: Rate limiting, circuit breakers

Elevation of Privilege: Can agents gain unauthorized access?

- Example: Exploiting tool permissions to access restricted data

- Mitigation: Strict RBAC, permission boundaries

Creating Your Agent Attack Surface Map

Document every component:

- Input sources (user queries, documents, APIs)

- Processing components (LLM, reasoning engine, memory)

- Output destinations (responses, actions, other agents)

- External connections (tools, databases, third-party services)

- Data flows (what moves where, when, why)

This map becomes your AI agent vulnerability assessment foundation. Update it quarterly as your agent ecosystem evolves.

Don’t do that. Seriously, don’t.

Instead, implement granular permissions at multiple levels:

- Tool-level: Which specific tools can the agent access? Not just “database access” but “read-only access to the customers table, columns X, Y, and Z only”

- Data-level: What data can it read? What can it modify? Can it see PII or should that be masked?

- Action-level: Which operations are permitted? Read, write, delete? Can it send emails or just draft them?

- Time-bound: Can permissions expire or require renewal? Temporary access for specific projects?

Start restrictive and expand carefully. It’s much easier to grant additional permissions than to recover from a breach. I once worked with a team that spent six months cleaning up after an overly-permissive agent. Six months of work that could have been avoided with an hour of careful permission planning.

Defense in Depth

Never rely on a single security control. Layer your defenses so that if one fails, others catch the threat. This isn’t unique to AI agents—it’s classic security wisdom—but it’s especially important here because agent attacks can be subtle and multi-stage.

Your security architecture should include:

- Input validation at the perimeter (stop bad input before it reaches the agent)

- Content filtering before the LLM (additional check for prompt injection patterns)

- Prompt guards and system-level protections (instructions that constrain behavior)

- Output filtering and validation (verify before acting)

- Action confirmation workflows (human approval for high-impact actions)

- Audit logging and monitoring (detect anomalies)

- Rate limiting and circuit breakers (prevent runaway costs)

Each layer should be independent. Don’t assume that because you validated input, you can skip output validation. Attackers are creative and patient. They’ll find the gaps in your defenses if you give them any.

Zero Trust Architecture

Assume breach. Design your systems as if attackers are already inside. This mindset shift is crucial because traditional perimeter security doesn’t work well with agents—they need to access resources across your entire stack.

Zero trust for agents means:

- Verify every request, even from internal agents

- Authenticate agent-to-agent communication (no implicit trust)

- Monitor for anomalous behavior continuously

- Implement micro-segmentation to limit lateral movement

- Rotate credentials regularly (especially for NHIs)

- Maintain detailed audit logs of everything

Zero trust is challenging with agents because they need broad access to be effective. But that’s exactly why it’s necessary. The broader the access, the more damage a compromised agent can do.

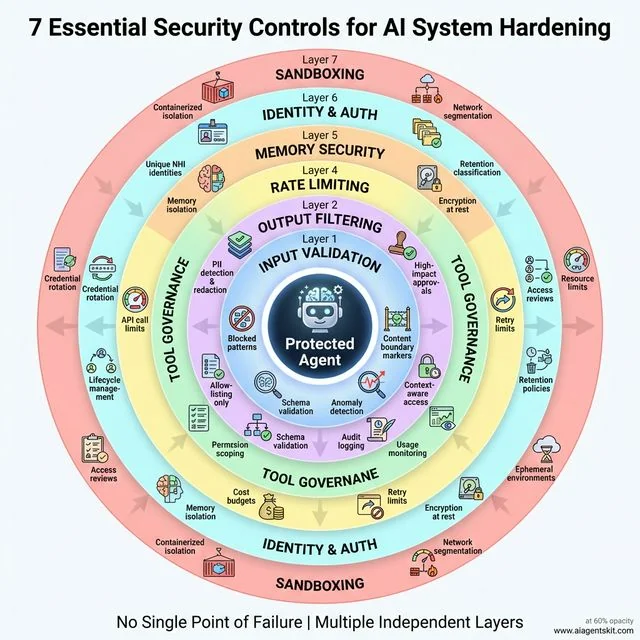

Technical Implementation: 7 Essential Security Controls for AI System Hardening

Now let’s get practical. Here are the seven controls you should implement immediately for comprehensive AI system hardening. I’m not going to give you vague advice like “be careful”—these are specific, implementable controls you can deploy this week to protect your LLM agents and machine learning models.

7 Essential Security Controls: Defense-in-depth with seven concentric layers protecting the AI agent core. Layer 1 - Input Validation implements multi-layered filtering with content boundary markers and anomaly detection. Layer 2 - Output Filtering provides PII redaction, schema validation, and high-impact approvals. Layer 3 - Tool Governance enforces allowlisting only with permission scoping and usage monitoring. Layer 4 - Rate Limiting prevents Denial of Wallet through API call limits and circuit breakers. Layer 5 - Memory Security ensures isolation, encryption, and retention policies. Layer 6 - Identity & Auth manages unique NHI identities with credential rotation. Layer 7 - Sandboxing provides containerized isolation with network segmentation. Each independent layer ensures no single point of failure.

7 Essential Security Controls: Defense-in-depth with seven concentric layers protecting the AI agent core. Layer 1 - Input Validation implements multi-layered filtering with content boundary markers and anomaly detection. Layer 2 - Output Filtering provides PII redaction, schema validation, and high-impact approvals. Layer 3 - Tool Governance enforces allowlisting only with permission scoping and usage monitoring. Layer 4 - Rate Limiting prevents Denial of Wallet through API call limits and circuit breakers. Layer 5 - Memory Security ensures isolation, encryption, and retention policies. Layer 6 - Identity & Auth manages unique NHI identities with credential rotation. Layer 7 - Sandboxing provides containerized isolation with network segmentation. Each independent layer ensures no single point of failure.

Control #1: Input Validation and Sanitization

The Problem: Agents process untrusted user input, which can contain malicious instructions hidden in seemingly innocent content.

The Solution: Implement multi-layered input validation that treats all input as potentially hostile:

# Example: Content validation middleware

def validate_agent_input(user_input, context):

# Layer 1: Basic sanitization

sanitized = sanitize_html(user_input)

# Layer 2: Blocked patterns

if contains_blocked_patterns(sanitized):

raise SecurityException("Blocked pattern detected")

# Layer 3: Embedding-based anomaly detection

if is_anomalous_input(sanitized, context):

flag_for_review()

return sanitize_for_review(sanitized)

return sanitizedKey Techniques:

- Use content boundary markers (like XML tags) to separate instructions from data

- Maintain allowlists of acceptable input patterns (more secure than blocklists)

- Implement embedding-based anomaly detection for sophisticated attacks

- Log all blocked inputs for analysis and pattern improvement

- Consider canary tokens—hidden instructions that should never be executed, used to detect prompt injection

Control #2: Output Filtering and Verification

The Problem: Agent outputs can contain sensitive data, malicious content, or actions you didn’t intend.

The Solution: Never trust agent outputs blindly. Implement a validation pipeline:

# Example: Output validation

def validate_agent_output(agent_response, action_context):

# Check for PII before anything else

if contains_pii(agent_response):

return redact_sensitive_data(agent_response)

# Validate against action schema

if not matches_expected_schema(agent_response, action_context):

raise ValidationException("Output doesn't match expected schema")

# High-impact action confirmation

if is_high_impact_action(action_context):

return queue_for_human_approval(agent_response)

# Log for audit trail

log_agent_output(action_context, agent_response)

return agent_responseKey Techniques:

- Implement PII detection and redaction (regex patterns + ML models)

- Validate outputs against expected schemas

- Queue high-impact actions for human approval

- Log all outputs for audit purposes

- Implement differential privacy for sensitive queries

Control #3: Tool Access Governance

The Problem: Agents with excessive tool access can cause widespread damage, either through misuse or compromise.

The Solution: Implement strict tool governance from day one:

- Tool Allowlisting: Only explicitly approved tools are available. Default deny everything else.

- Permission Scoping: Define exactly what each tool can do. Not just “read database” but “read specific tables, specific columns”

- Context-Aware Access: Tools available depend on the task context. Different permissions for different workflows.

- Usage Monitoring: Track every tool invocation. Who called it, when, with what parameters, and what was the result.

MCP Security Considerations: If you’re using the Model Context Protocol, pay special attention to MCP security considerations. The 97 million monthly MCP downloads mean it’s becoming a standard, which makes it a standard attack target.

Control #4: Rate Limiting and Cost Controls

The Problem: Unbounded agent loops can cause financial damage (Denial of Wallet) faster than you can react.

The Solution: Implement multiple rate limits at different levels:

- API call limits: Maximum calls per minute/hour/day

- Cost budgets: Daily/monthly spending caps with alerts

- Time limits: Maximum execution time per task

- Retry limits: Prevent infinite retry loops with exponential backoff

- Circuit breakers: Stop agents when error rates spike

When we implemented these controls after our “847 API calls” incident, we set what seemed like aggressive limits: 100 calls per minute, $100 daily budget, 5-minute max execution time. You know what? We never hit those limits in normal operation. But they saved us multiple times when things went wrong.

Control #5: Memory and State Security

The Problem: Agent memory can be poisoned or leak sensitive data across sessions.

The Solution: Secure your agent’s memory architecture:

- Memory Isolation: Each user/session gets isolated memory. No cross-contamination.

- Data Classification: Tag memory entries by sensitivity level. Handle PTI differently than public data.

- Retention Policies: Automatically expire old memory entries. Don’t keep data forever “just in case.”

- Access Logging: Track who/what accesses memory. Unusual access patterns should trigger alerts.

- Encryption: Encrypt sensitive memory at rest. Consider encrypting memory in transit between components.

Control #6: Identity and Authentication

The Problem: Non-human identities (NHIs) are poorly managed in most organizations, creating persistent vulnerabilities.

The Solution: Implement proper NHI governance:

- Unique Identities: Each agent gets a unique identity with its own credentials

- Credential Rotation: Automatic rotation of API keys and tokens on a schedule

- Lifecycle Management: Proper provisioning and deprovisioning when agents are created or retired

- Access Reviews: Regular audits of agent permissions (quarterly at minimum)

- Federation: Standardized authentication across agents using protocols like OAuth 2.0 or mTLS

With NHIs outnumbering human identities 50:1, this isn’t optional anymore. It’s essential infrastructure. And yet, most organizations I’ve worked with have better identity management for their coffee machines than their AI agents.

Control #7: Sandboxing and Isolation

The Problem: Agents can affect systems beyond their intended scope, either accidentally or maliciously.

The Solution: Run agents in properly isolated environments:

- Execution Sandboxes: Containerized (Docker) or VM-based isolation

- Network Segmentation: Limit network access to required endpoints only. Agents don’t need internet access unless specifically required.

- Resource Limits: CPU, memory, and disk constraints to prevent resource exhaustion attacks

- Read-Only Filesystems: Prevent filesystem modifications where possible

- Ephemeral Environments: Destroy and recreate environments regularly to prevent persistence of compromise

AI Agent Security Testing & Penetration Testing

You can’t secure what you haven’t tested. AI agent penetration testing requires different techniques than traditional application testing because agents have emergent behaviors that don’t appear in standard test cases.

Based on Microsoft’s experience red teaming over 100 generative AI products, the researchers emphasize that “you don’t have to compute gradients to break an AI system”—simple, creative attacks are often more effective than sophisticated ones. Their research reveals that “LLMs amplify existing security risks and introduce new ones,” making traditional penetration testing insufficient.

Automated Security Scanning

Start with automated tools for AI agent vulnerability assessment:

Static Analysis:

- Review agent code for hardcoded credentials

- Check for improper error handling that might leak information

- Validate input sanitization logic

- Audit permission configurations

Dynamic Testing:

- Prompt Injection Testing: Use tools like Promptfoo to systematically test for injection vulnerabilities

- Fuzzing: Send malformed inputs to see how agents respond

- Boundary Testing: Test edge cases and unexpected scenarios

- Tool Abuse Testing: Attempt to use tools in unauthorized ways

Behavioral Testing:

- Test goal hijacking by attempting to redirect agent objectives

- Attempt memory poisoning through crafted inputs

- Test cascading failure scenarios in multi-agent systems

- Verify rate limiting and cost controls

Manual Penetration Testing

Automated tools catch the obvious issues. Ethical hacking for AI agents requires human creativity:

Red Team Exercises:

- Simulate sophisticated attackers with time and resources

- Attempt multi-turn attacks that build trust before exploitation

- Test social engineering through agent interactions

- Attempt privilege escalation through tool chaining

Adversarial Testing:

- Craft inputs designed to confuse or mislead agents

- Test robustness against adversarial examples

- Attempt to extract training data or system prompts

- Test for bias exploitation

Chaos Engineering:

- Introduce failures to test resilience

- Compromise one agent and observe multi-agent propagation

- Test recovery procedures under attack conditions

Continuous Security Validation

Security isn’t a one-time activity. Implement continuous AI agent security monitoring:

- Weekly automated scans

- Monthly manual penetration tests

- Quarterly red team exercises

- Continuous behavioral monitoring in production

Document findings in your AI agent security audit reports and track remediation over time.

Governance and Monitoring: The Human Layer for Intelligent Agent Protection

Technical controls are necessary but not sufficient for complete intelligent agent protection. You need governance and monitoring to catch what technical controls miss. Think of it this way: technical controls are your locks, but governance is checking that the locks are actually used.

Establishing AI Agent Governance

Every organization deploying AI agents needs a governance framework. Not a 50-page document that nobody reads, but practical guidelines that developers and operators actually follow. Here’s what should be in yours:

Ownership and Accountability:

- Clear ownership of each agent (who’s responsible when it breaks?)

- Defined approval workflows for new agents (no rogue deployments)

- Regular security reviews (quarterly at minimum)

- Incident response procedures (what do we do when something goes wrong?)

Policy Templates: Create standard, templated policies for:

- Agent development standards (security requirements from day one)

- Testing and validation requirements (what must be tested before deployment?)

- Deployment approval processes (who needs to sign off?)

- Ongoing monitoring obligations (what must be monitored?)

- Incident reporting procedures (who do we tell when there’s a problem?)

I’ve seen too many organizations treat agent deployment like a side project. It’s not. It’s production infrastructure that needs production-grade governance. When an agent makes a mistake, it’s not a bug—it’s an incident. Treat it that way.

Monitoring and Observability

Traditional monitoring won’t cut it for AI agents. You need agent-specific observability that understands agent behavior, not just system metrics.

Behavior Logging:

- Log every decision the agent makes (not just the action, but the reasoning)

- Capture the reasoning chain (why did it decide to do that?)

- Record tool invocations with parameters (what exactly did it call?)

- Track success/failure rates (is performance degrading?)

Anomaly Detection:

- Baseline normal agent behavior (what’s typical?)

- Alert on deviations (unusual tools, excessive actions, strange timing)

- Implement behavioral biometrics for agent identity (is this agent behaving like itself?)

- Use ML-based detection for sophisticated threats (patterns humans might miss)

Audit Trails:

- Immutable logs of all agent activities (tamper-proof)

- Cross-reference with user actions (who triggered this?)

- Maintain forensic evidence (what happened, when, in what order?)

- Regular compliance reporting (for auditors and regulators)

When AI agents handle customer data, this isn’t just security—it’s compliance. GDPR, CCPA, and industry regulations all require detailed audit trails. But beyond compliance, good observability is just good engineering. You can’t improve what you can’t measure.

Human-in-the-Loop Requirements

Full autonomy is a myth sold by vendors who want you to buy their “autonomous AI” solution. Real production systems need human oversight, especially for high-stakes operations.

Approval Gates:

- High-impact actions require human approval (deletions, large purchases, access changes)

- Financial transactions over defined thresholds

- Data deletion or modification operations

- Access to sensitive systems or privileged data

Exception Handling:

- Clear escalation procedures when agents encounter edge cases

- Human reviewers for situations the agent hasn’t seen before

- Override capabilities for emergencies

Monitoring Dashboards:

- Real-time visibility into agent activities (what’s happening right now?)

- Alert thresholds and notification systems (tell me when something’s wrong)

- Performance and cost monitoring (is it working efficiently?)

The goal isn’t to eliminate human involvement—it’s to focus human attention where it matters most. Let agents handle routine tasks autonomously, but keep humans in the loop for exceptions and high-stakes decisions.

AI Agent Incident Response Plan

When (not if) a security incident occurs, you need a clear AI agent incident response playbook. Agents can cause damage quickly, so your response must be faster.

According to the Google Cloud Cybersecurity Forecast 2026, which draws insights from Google Threat Intelligence, Mandiant Consulting, and Google Cloud Security teams, organizations face an “AI agent paradigm shift” where the speed and autonomy of AI agents creates new incident response challenges that traditional playbooks don’t address.

AI Agent Incident Response Lifecycle: Five-phase continuous improvement approach for handling security incidents at machine speed. Phase 1 - Detection (Minutes) identifies incidents through behavioral anomalies, cost spikes, and unusual tool access. Phase 2 - Containment (Minutes to Hours) suspends operations, revokes credentials, and stops blast radius. Phase 3 - Eradication (Hours to Days) identifies attack vectors, patches vulnerabilities, and rotates compromised credentials. Phase 4 - Recovery (Days) restores from backups with enhanced monitoring and behavior validation. Phase 5 - Post-Incident (Weeks) conducts blameless post-mortems, updates threat models, and shares learnings. The center emphasizes continuous improvement. Speed matters: agents cause damage rapidly, requiring automated detection and containment.

AI Agent Incident Response Lifecycle: Five-phase continuous improvement approach for handling security incidents at machine speed. Phase 1 - Detection (Minutes) identifies incidents through behavioral anomalies, cost spikes, and unusual tool access. Phase 2 - Containment (Minutes to Hours) suspends operations, revokes credentials, and stops blast radius. Phase 3 - Eradication (Hours to Days) identifies attack vectors, patches vulnerabilities, and rotates compromised credentials. Phase 4 - Recovery (Days) restores from backups with enhanced monitoring and behavior validation. Phase 5 - Post-Incident (Weeks) conducts blameless post-mortems, updates threat models, and shares learnings. The center emphasizes continuous improvement. Speed matters: agents cause damage rapidly, requiring automated detection and containment.

The AI Agent Incident Response Lifecycle

Phase 1: Detection (Minutes)

Agents require real-time monitoring because they act autonomously:

- Behavioral anomalies trigger immediate alerts

- Cost spikes indicate potential DoW attacks

- Unusual tool access patterns signal compromise

- Error rate spikes suggest exploitation attempts

Automated Detection:

# Example: Automated incident detection

def detect_security_incident(agent_activity):

if agent_activity.cost_per_minute > COST_THRESHOLD:

return Incident("DENIAL_OF_WALLET", severity="HIGH")

if agent_activity.tool_access_pattern.is_anomalous():

return Incident("SUSPICIOUS_TOOL_USE", severity="MEDIUM")

if agent_activity.data_exfiltration_detected():

return Incident("DATA_EXFILTRATION", severity="CRITICAL")

return NonePhase 2: Containment (Minutes to Hours)

Immediate actions to limit damage:

Isolate the Agent:

- Suspend agent operations immediately

- Revoke active sessions and tokens

- Block network access

- Preserve memory state for forensics

Stop the Blast Radius:

- Disable affected tools or APIs

- Alert downstream systems

- Implement emergency rate limiting

- Notify other agents in the ecosystem

Phase 3: Eradication (Hours to Days)

Remove the threat:

- Identify the attack vector (prompt injection, tool abuse, etc.)

- Patch vulnerabilities

- Remove malicious data from agent memory

- Rotate compromised credentials

- Update security controls

Phase 4: Recovery (Days)

Restore operations safely:

- Restore from known-good backup if needed

- Rebuild agent with security patches

- Gradually restore access with monitoring

- Validate behavior before full deployment

- Document lessons learned

Phase 5: Post-Incident (Weeks)

Learn and improve:

- Conduct blameless post-mortem

- Update threat models

- Enhance detection rules

- Improve response procedures

- Share learnings with the team

Incident Response Team Roles

AI Agent Security Incident Commander:

- Coordinates response efforts

- Makes go/no-go decisions

- Communicates with stakeholders

Technical Lead:

- Performs technical investigation

- Implements containment measures

- Leads eradication efforts

Communications Lead:

- Manages internal communications

- Handles external disclosures if required

- Updates status pages

Forensics Specialist:

- Preserves evidence

- Performs root cause analysis

- Documents attack timeline

Common AI Agent Incident Types

Type 1: Prompt Injection Attack

- Detection: Unusual output patterns, attempted tool misuse

- Containment: Disable input processing, validate recent outputs

- Eradication: Clear poisoned context, update input filters

Type 2: Data Exfiltration

- Detection: Large data transfers, unusual external connections

- Containment: Block external access, revoke credentials

- Eradication: Audit data access, notify affected parties

Type 3: Denial of Wallet

- Detection: Cost spikes, excessive API calls

- Containment: Implement emergency rate limits, suspend agent

- Eradication: Identify triggering input, improve cost controls

Type 4: Cascading Failure

- Detection: Multiple agents behaving abnormally

- Containment: Isolate agent mesh, disable inter-agent communication

- Eradication: Identify source agent, rebuild from clean state

Communication Templates

Internal Notification (Immediate):

Subject: [INCIDENT] AI Agent Security Event - [Agent Name]

Anomalous behavior detected in [Agent Name] at [Time].

Actions taken: [Containment measures]

Impact: [Data affected, services disrupted]

Next update: [Time]

Incident Commander: [Name]External Disclosure (if required):

We are investigating a security incident involving our AI agent systems.

We have contained the issue and are conducting a thorough investigation.

We will provide updates every 24 hours until resolution.

Contact: security@company.comRecovery Validation Checklist

Before restoring full operations:

- Root cause identified and fixed

- Security controls tested and validated

- Agent behavior verified against baselines

- Monitoring and alerting confirmed working

- Incident response procedures updated

- Team debrief completed

Having a documented AI agent breach response plan saves critical time during incidents. Review and exercise it quarterly.

AI Agent Security Tools & Solutions

The AI agent security solutions market is evolving rapidly. According to Microsoft’s AI Red Team framework, organizations should focus on tools that provide both automated scanning capabilities and human-in-the-loop validation, as “automation can help cover more of the risk landscape” while “the human element of AI red teaming is crucial.”

Here’s a comprehensive guide to tools that can strengthen your security posture:

Open-Source Security Tools

1. Garak (LLM Vulnerability Scanner)

- Purpose: Automated vulnerability scanning for LLMs and agents

- Use case: Continuous security testing

- Best for: Development and CI/CD pipelines

- Key features: Prompt injection detection, data exfiltration testing

2. Purple Llama (Meta’s Safety Suite)

- Purpose: Comprehensive safety tools for LLM deployment

- Use case: Pre-deployment safety validation

- Best for: Production readiness checks

- Key features: CyberSecEval, Llama Guard, Prompt Guard

3. AgentHarm (Agent Safety Benchmark)

- Purpose: Evaluate agent safety and harmful capabilities

- Use case: Safety testing and red-teaming

- Best for: Understanding agent failure modes

- Key features: Harmful task evaluation, safety scoring

4. Promptfoo (Prompt Injection Testing)

- Purpose: Framework for testing prompt injection vulnerabilities

- Use case: Adversarial testing

- Best for: Developers building agent applications

- Key features: Automated test cases, red-teaming tools

Commercial Security Platforms

1. Agent Security Gateways These platforms sit between your agents and the world:

- Capabilities: Input/output filtering, rate limiting, audit logging

- Benefits: Centralized security policy, reduced development overhead

- Considerations: Added latency, vendor lock-in potential

2. Behavioral Monitoring Solutions AI-powered monitoring specifically designed for agents:

- Capabilities: Anomaly detection, behavioral baselines, threat hunting

- Benefits: Detects novel attacks, reduces false positives

- Considerations: Training period required, potential for alert fatigue

3. Identity Management for NHIs Specialized solutions for non-human identity governance:

- Capabilities: Automated provisioning, credential rotation, access reviews

- Benefits: Scales with agent ecosystem, compliance support

- Considerations: Integration complexity, cost at scale

Cloud Provider Security Services

AWS:

- Amazon Bedrock Guardrails: Content filtering for foundation models with configurable safety controls

- AWS IAM: Identity management (adapted for NHIs) with fine-grained permission policies

- Amazon Macie: Sensitive data discovery using machine learning

- AWS CloudTrail: Comprehensive audit logging for compliance

Azure:

- Azure AI Content Safety: Harmful content detection with severity scoring

- Microsoft Purview: Unified data governance and compliance management

- Azure AD: Identity and access management with conditional access policies

- Azure Monitor: End-to-end observability and security monitoring

Google Cloud:

- Vertex AI Safety Filters: Content moderation with adjustable safety thresholds

- Cloud IAM: Fine-grained access control with resource hierarchy

- Data Loss Prevention: Sensitive data protection with de-identification

- Cloud Audit Logs: Compliance logging with comprehensive activity tracking

Building Your Security Tool Stack

Phase 1: Essentials (Deploy First)

- Input/output filtering gateway

- Identity management for NHIs

- Comprehensive logging and monitoring

- Rate limiting and cost controls

Phase 2: Advanced (Deploy Next)

- Behavioral anomaly detection

- Automated vulnerability scanning

- Security orchestration (SOAR) integration

- Threat intelligence feeds

Phase 3: Expert (Deploy as Needed)

- Custom AI security models

- Advanced red-teaming platforms

- Forensic analysis tools

- Compliance automation

Tool Evaluation Criteria

When selecting AI agent security software, evaluate:

Integration:

- Does it work with your agent framework (LangChain, AutoGPT, etc.)?

- API availability and documentation quality

- Deployment complexity (agent vs. proxy vs. inline)

Performance:

- Latency impact (crucial for real-time agents)

- Throughput limits

- Resource requirements

Security:

- Vendor security practices

- Data handling and privacy

- Compliance certifications (SOC 2, ISO 27001)

Cost:

- Pricing model (per request, per agent, flat rate)

- Scaling costs as you grow

- Total cost of ownership

Support:

- Documentation quality

- Community support (for open source)

- Vendor support responsiveness (for commercial)

DIY vs. Buy Decision Framework

Build In-House When:

- You have unique requirements no vendor meets

- You have strong security engineering team

- Cost of vendor solutions exceeds build cost

- You need deep customization

Buy Commercial When:

- Time-to-value is critical

- Vendor has specialized expertise you lack

- Compliance requirements favor established vendors

- Maintenance burden would distract from core product

Hybrid Approach:

- Use commercial platforms for common threats

- Build custom solutions for unique risks

- Leverage open source for flexibility

Recommended Starting Stack

For most organizations getting started:

- Garak or Promptfoo (open source) for automated testing

- Cloud provider security services (AWS/Azure/GCP native tools)

- Custom middleware for input/output filtering

- Existing observability tools adapted for agents (DataDog, New Relic)

- IAM solution with NHI support

As you mature, add behavioral monitoring and advanced threat detection.

MCP Security: Protecting the Connection Layer

The Model Context Protocol (MCP) has become the de facto standard for agent-tool integration, with 97 million monthly SDK downloads. But that popularity makes it a target. When everyone’s using the same protocol, attackers focus their efforts there.

What is MCP? MCP is a protocol that allows AI agents to discover and use tools through a standardized interface. It uses JSON-RPC for communication and supports capabilities like tool listing, invocation, and resource access. Think of it as HTTP for agent-tool communication—it’s become the common language.

Why MCP Security Matters: Because MCP is the bridge between your agents and their capabilities. If an attacker controls the MCP layer, they control what tools your agents can access and how they use them. It’s a single point of failure that needs robust protection.

MCP Security Best Practices:

-

Gateway Architecture: Deploy an MCP gateway that validates all requests and responses. Don’t let agents connect directly to MCP servers. The gateway should inspect, validate, and potentially transform all MCP traffic.

-

Server Authentication: Verify MCP server identities cryptographically. Untrusted servers can compromise your agents by returning malicious responses. Use certificates or similar mechanisms to ensure you’re talking to legitimate servers.

-

Tool Permission Models: Implement fine-grained permissions for which tools agents can use. Just because a server offers 50 tools doesn’t mean your agent needs access to all of them. Default deny, explicit allow.

-

Request Validation: Validate all MCP requests before forwarding. Check parameters, enforce rate limits, and verify authorization. Don’t blindly pass through agent requests.

-

Response Sanitization: Sanitize MCP responses before they reach your agent. Malicious servers can return poisoned responses that exploit your agent. Treat all external responses as untrusted.

The Coalition for Secure AI (CoSAI) has published an extensive MCP security taxonomy that should be required reading for anyone deploying MCP-based agents. It’s comprehensive, practical, and updated regularly as the threat landscape evolves.

Compliance and Regulatory Considerations

Agent security isn’t just about preventing attacks—it’s also about compliance. And compliance is getting more complex as regulations catch up with AI capabilities.

EU AI Act Implications: According to the International AI Safety Report 2026—the most authoritative global evidence base on AI safety backed by experts from 30 countries—the regulatory landscape for AI is rapidly evolving. The EU AI Act categorizes AI systems by risk level. Autonomous agents that can make decisions affecting individuals likely fall into the “high-risk” category, requiring:

- Risk management systems (document and mitigate risks)

- Data governance practices (quality, bias testing, privacy)

- Technical documentation (how it works, how it’s tested)

- Record-keeping obligations (audit trails, decision logs)

- Transparency requirements (inform users they’re interacting with AI)

- Human oversight measures (humans can intervene)

If you’re deploying agents that affect EU citizens, you need to understand these requirements. The penalties for non-compliance are substantial—up to 6% of global annual revenue.

Data Privacy (GDPR, CCPA): When agents process personal data:

- Implement data minimization principles (only collect what’s necessary)

- Provide mechanisms for data subject rights (access, deletion, portability)

- Maintain records of processing activities (what data, why, how long)

- Conduct Data Protection Impact Assessments (DPIAs) for high-risk processing

Agents make data privacy more complex because they often need broad context to be effective. But that context frequently includes personal data. Finding the balance requires careful architectural decisions.

Industry-Specific Regulations:

- Finance: SOX, PCI-DSS implications for agents handling financial data

- Healthcare: HIPAA requirements for agents accessing PHI

- Government: FedRAMP, StateRAMP for cloud-based agents

- Critical Infrastructure: NERC CIP and similar frameworks

For a deeper dive into AI compliance across jurisdictions, check out our AI compliance requirements guide.

Common Mistakes to Avoid

I’ve made plenty of mistakes with AI agents. Let me share the most common ones so you can avoid them:

Mistake #1: Treating Agents Like Chatbots Don’t assume your chatbot security is sufficient. Agents have vastly different risk profiles. What works for a Q&A bot won’t work for an agent with database access. The stakes are higher, the attack surface is broader, and the potential damage is greater.

Mistake #2: Granting Excessive Permissions Initially It’s tempting to give agents broad access “just in case” or “to avoid blocking them later.” Resist this urge. Start with minimal permissions and expand carefully. I learned this when an agent with write access to our analytics database “optimized” our reporting tables. Recovery took weeks.

Mistake #3: Ignoring Non-Human Identity Management Most IAM systems weren’t built for agents. Don’t bolt agent identity onto human identity systems as an afterthought. Build proper NHI governance from the start. Trust me, retrofitting identity management is painful.

Mistake #4: Insufficient Logging and Observability You can’t secure what you can’t see. Traditional application monitoring misses the nuances of agent behavior. Invest in agent-specific observability early. When something goes wrong—and it will—you’ll be glad you have detailed logs.

Mistake #5: Failing to Implement Rate Limiting Our “847 API calls in 3 minutes” story wasn’t unique. Without rate limiting, agents can cause financial damage faster than you can react. This is non-negotiable. Set limits. Monitor them. Adjust as needed.

Mistake #6: Not Planning for Cascading Failures In multi-agent systems, failures propagate. Design for failure isolation. Don’t let one compromised agent take down your entire agent ecosystem. Compartmentalize. Assume breach. Build resilience.

AI Agent Security Audit Checklist

Use this comprehensive AI agent security checklist to evaluate your current deployments. Based on Microsoft’s Responsible AI Standard and AI Risk Assessment framework, regular audits are essential for maintaining security posture. Score each item: ✅ Implemented, ⚠️ Partial, ❌ Missing.

Pre-Deployment Security Review

Architecture & Design:

- Threat model documented and reviewed

- Attack surface mapped and minimized

- Data flow diagrams created

- Security requirements defined

- Compliance requirements identified (GDPR, CCPA, industry-specific)

Identity & Access:

- Unique identity assigned to each agent

- Authentication mechanism implemented (certificates, tokens)

- Authorization rules defined (RBAC or ABAC)

- Credential rotation policy established

- NHI lifecycle management process documented

Input & Output Security:

- Input validation implemented

- Content filtering active

- Output validation and sanitization in place

- PII detection and redaction configured

- Schema validation for structured outputs

Tool & Resource Access:

- Tool allowlist configured

- Permission boundaries defined

- Rate limiting enabled

- Cost controls and budgets set

- Resource quotas configured

Memory & State:

- Memory isolation between users/sessions

- Data retention policies defined

- Sensitive data encryption at rest

- Memory access logging enabled

- State validation checks implemented

Runtime Security Controls

Monitoring & Observability:

- Comprehensive logging implemented

- Behavioral baselines established

- Anomaly detection configured

- Alert thresholds set

- Audit trail maintained

Human Oversight:

- Approval gates for high-impact actions

- Human review workflows defined

- Escalation procedures documented

- Override capabilities available

- Monitoring dashboards deployed

Incident Response:

- Incident response plan documented

- Contact list updated

- Forensic evidence collection procedures defined

- Rollback procedures tested

- Communication templates prepared

Post-Deployment Validation

Security Testing:

- Automated vulnerability scanning completed

- Manual penetration testing performed

- Prompt injection testing done

- Tool abuse scenarios tested

- Rate limiting verified

Documentation:

- Security architecture documented

- Operational runbooks created

- Incident response procedures accessible

- Training materials developed

- Compliance evidence collected

Regular Reviews:

- Quarterly security reviews scheduled

- Access reviews planned

- Penetration testing cadence defined

- Compliance audits scheduled

- Threat model updates planned

Scoring Guide

- 90-100% (Excellent): Production-ready with strong security posture

- 70-89% (Good): Deployable with minor gaps to address

- 50-69% (Fair): Significant gaps, address before production

- Below 50% (Poor): Not ready for production, major security work needed

Use this checklist quarterly for AI agent risk assessment and track your improvement over time.

Secure AI Agent Architecture Patterns

Your secure AI agent architecture choices have lasting security implications. According to the MITRE ATLAS framework, which maps 15 tactics and 66 techniques for AI security, architecture decisions directly impact your organization’s ability to defend against adversarial ML attacks. Here are proven patterns for different use cases:

Pattern 1: The Secure Proxy Gateway

Best for: High-security environments with strict compliance requirements

This pattern aligns with Google’s recommended approach in their Cybersecurity Forecast 2026 for implementing “unified, full-stack” security that provides centralized control and monitoring.

Architecture:

User → Proxy Gateway → Security Layer → Agent → Tool Gateway → Tools

↓

Monitoring & LoggingKey Components:

- Proxy Gateway: All user interactions pass through a security-controlled proxy

- Security Layer: Input validation, content filtering, rate limiting

- Tool Gateway: Mediates all tool access with strict permissions

- Monitoring: Comprehensive logging and behavioral analysis

Pros: Maximum control, centralized security policy enforcement Cons: Added latency, single point of failure if not redundant

Pattern 2: The Micro-Agent Architecture

Best for: Complex multi-domain operations with isolation requirements

Architecture:

Orchestrator Agent

├── Specialized Agent A (Domain 1)

├── Specialized Agent B (Domain 2)

└── Specialized Agent C (Domain 3)Key Components:

- Orchestrator: Coordinates work, doesn’t process sensitive data

- Specialized Agents: Domain-specific with minimal permissions

- Inter-Agent Communication: Authenticated and encrypted

- Isolation Boundaries: Each agent in separate sandbox

Pros: Defense in depth, blast radius containment Cons: Complexity, inter-agent communication overhead

Pattern 3: The Human-in-the-Loop Controller

Best for: High-stakes decisions with regulatory oversight requirements

Architecture:

User Request → Agent Processing → Decision Point

↓

Human Review (if high-risk)

↓

Action ExecutionKey Components:

- Risk Classifier: Automatically categorizes request risk level

- Human Review Queue: Approval workflow for high-risk actions

- Audit Trail: Complete decision chain documentation

- Override Capability: Emergency human intervention

Pros: Maximum oversight, regulatory compliance Cons: Latency for human review, scalability limits

Pattern 4: The Zero-Trust Agent Mesh

Best for: Distributed agent ecosystems with dynamic trust relationships

Architecture:

Agent A ←→ Service Mesh ←→ Agent B

↓

Identity & Policy EngineKey Components:

- Service Mesh: Handles all inter-agent communication

- Identity Service: Cryptographic identity verification

- Policy Engine: Dynamic authorization decisions

- Observability: Complete visibility into all interactions

Pros: Flexible, scalable, fine-grained access control Cons: Complex to implement, requires mature infrastructure

Pattern 5: The Air-Gapped Analysis Agent

Best for: Highly sensitive data processing with strict isolation

Architecture:

Sensitive Data Zone Analysis Zone

├─ Data Store → ├─ Analysis Agent

├─ Anonymization Layer → ├─ Synthetic Data

└─ Export Controls ← └─ ResultsKey Components:

- Air Gap: Physical or logical separation between zones

- Data Anonymization: PII removal before processing

- Synthetic Data: Training on fake data when possible

- Export Controls: Validation before results leave secure zone

Pros: Maximum data protection, compliance-friendly Cons: Complex data flows, potential utility loss from anonymization

Choosing Your Architecture

Select based on:

- Data sensitivity: More sensitive = more isolation

- Compliance requirements: Regulatory needs dictate controls

- Performance needs: Real-time vs. batch processing

- Team expertise: Match complexity to capabilities

- Risk tolerance: Acceptable trade-offs between security and usability

Document your AI agent security architecture decisions and review them quarterly as threats evolve.

Frequently Asked Questions

What’s the difference between LLM security and AI agent security?

LLM security focuses on protecting the language model itself—prompt injection, model theft, and training data poisoning. AI agent security is broader. It includes LLM security but also addresses the unique risks of autonomous action, tool access, multi-agent coordination, and persistent state.

Think of it this way: LLM security protects the brain, while agent security protects the entire organism including its hands (tools), memory (state), and communication with other organisms (multi-agent systems). You need both, but agent security requires additional controls.

How do I audit what my AI agents are doing?

Implement comprehensive logging that captures not just actions but the reasoning chain. Use structured logging formats that capture: the agent’s goal, its step-by-step reasoning, tool invocations with parameters, outputs, and any errors.

Store these logs in a centralized system with proper retention policies. Regularly review logs for anomalies, and consider automated analysis using behavioral baselines. Tools like Splunk, ELK, or specialized AI observability platforms can help.

Can prompt injection attacks be completely prevented?

Honestly? No—not completely. According to the Google Cloud Cybersecurity Forecast 2026, “prompt injection manipulates AI” and remains one of the top threats facing AI systems. Microsoft’s AI Red Team research confirms that prompt injection is a fundamental challenge in LLM architecture because models can’t perfectly distinguish between instructions and data.

However, you can significantly reduce risk through defense in depth: input validation, output filtering, tool governance, and human oversight for high-impact actions. The goal isn’t perfection—it’s making attacks sufficiently difficult and detectable that attackers move on to easier targets.

What tools exist for AI agent security testing?

Several open-source and commercial tools can help:

- Garak: Framework for LLM vulnerability scanning

- AgentHarm: Benchmark for agent safety evaluation

- Purple Llama: Meta’s suite of safety tools

- Promptfoo: Testing framework for prompt injection

- OWASP’s Agentic AI Security Resources: Best practices and checklists

I recommend starting with automated scanning tools, then moving to red-team exercises with security professionals. The automated tools catch the obvious stuff; humans catch the subtle, creative attacks.

How do I secure agent-to-agent communication?

Agent-to-agent communication requires the same security considerations as any distributed system, with the added complexity of non-human identities:

- Mutual authentication (both agents verify each other using certificates or tokens)

- Encrypted communication channels (TLS 1.3 minimum)

- Message signing and verification (ensure messages haven’t been tampered with)

- Rate limiting between agents (prevent one compromised agent from overwhelming others)

- Monitoring for unusual communication patterns (baseline normal behavior)

- Clear trust boundaries (which agents can talk to which, explicitly defined)

Consider implementing a message broker or API gateway to centralize and monitor inter-agent communication. This gives you a single point to enforce policies and detect anomalies.

What should be in an AI agent security policy?

Your policy should cover:

- Development Standards: Security requirements during development (code review, testing)

- Testing Requirements: Mandatory security testing before deployment (vulnerability scanning, red teaming)

- Access Controls: Who can deploy agents and what permissions they can grant

- Monitoring Obligations: What must be logged and monitored

- Incident Response: Procedures for security incidents (who to notify, how to respond)

- Review Cadence: How often agents and their permissions are reviewed (quarterly minimum)

- Training Requirements: Security training for developers and operators

Make it practical and enforceable. A 50-page policy that nobody reads is worthless. A 5-page policy that everyone follows is invaluable.

How do I balance security with agent autonomy?

This is the million-dollar question. Too much autonomy risks security; too little defeats the purpose of agents. My approach, refined through painful experience:

-

Tiered Autonomy: Different autonomy levels based on action impact. High-impact actions require approval; low-impact actions are automatic. Define what “high-impact” means for your context.

-

Progressive Trust: Start with high oversight and reduce as the agent proves itself. Don’t give full autonomy on day one. Build trust over time.

-

Graceful Degradation: When in doubt, escalate to humans rather than making risky autonomous decisions. Better to annoy a human with a question than make an expensive mistake.

-

Continuous Monitoring: Even “autonomous” agents should be monitored for anomalies. Autonomy doesn’t mean absence of oversight.

There’s no perfect balance—it’s a continuous process of calibration based on your risk tolerance and the agent’s track record. Be prepared to adjust as you learn.

How do I perform an AI agent security audit?

An AI agent security audit should evaluate these key areas:

Architecture Review:

- Document the agent’s components, data flows, and trust boundaries

- Identify all inputs, outputs, tools, and external connections

- Review threat models and attack surface maps

- Validate security architecture decisions

Configuration Assessment:

- Verify permission settings follow least privilege

- Check authentication and authorization configurations

- Validate rate limiting and cost controls

- Review logging and monitoring setup

Code Review:

- Scan for hardcoded credentials or secrets

- Review input validation logic

- Check output sanitization

- Validate error handling (no information leakage)

Behavioral Testing:

- Test with adversarial inputs (prompt injection attempts)

- Attempt tool misuse scenarios

- Verify rate limiting enforcement

- Test failure modes and recovery

Documentation Review:

- Ensure incident response procedures exist

- Verify compliance documentation is complete

- Check operational runbooks are accessible

- Confirm training materials are available

Use the comprehensive checklist in this guide as your audit framework. Conduct audits quarterly and after any significant changes.

What are the best AI agent security tools?

The best AI agent security tools depend on your needs. According to Microsoft’s PyRIT framework and their AI Red Team guidance, organizations need tools that cover the full attack surface—from input validation to behavioral monitoring.

Here’s my recommended stack based on industry best practices:

For Development:

- Garak or Promptfoo for automated vulnerability scanning and prompt injection testing

- Purple Llama (Meta’s suite) for safety evaluation including CyberSecEval and Llama Guard

- Static analysis tools (Semgrep, Bandit) for code security

For Production:

- Input/output filtering gateway using cloud provider services or custom middleware

- Behavioral monitoring with anomaly detection (consider Microsoft’s approach)

- Identity management with NHI support via Azure AD, AWS IAM, or Cloud IAM

- SIEM integration (Splunk, ELK, or cloud-native solutions) for comprehensive log analysis

For Testing:

- AgentHarm for safety benchmarking

- PyRIT (Python Risk Identification Toolkit) from Microsoft for automated red teaming

- Chaos engineering platforms for resilience testing

Cloud-Native Options:

- AWS: Amazon Bedrock Guardrails with configurable safety policies

- Azure: Azure AI Content Safety with severity scoring

- Google Cloud: Vertex AI Safety Filters with adjustable thresholds

Start with open-source tools for immediate value, then add commercial solutions as your needs mature. The key is defense in depth—no single tool provides complete protection. The key is defense in depth—no single tool provides complete protection.

What is secure AI agent architecture?

Secure AI agent architecture refers to designing your agent system with security as a foundational principle, not an afterthought. Key characteristics include:

Defense in Depth: Multiple security layers (input validation, output filtering, tool governance, monitoring) so no single failure compromises the system.

Least Privilege: Agents only have access to the specific tools, data, and permissions they absolutely need. No broad “admin” access.

Zero Trust: Every component verifies every interaction. Agents authenticate to tools. Tools validate agent requests. Nothing is trusted by default.

Isolation: Agents run in sandboxed environments with restricted network access, resource limits, and clear boundaries from other systems.

Observability: Comprehensive logging of decisions, actions, and outcomes. You can reconstruct exactly what happened and why.

Fail-Safe Defaults: When in doubt, deny. Require explicit approval for high-impact actions. Default to human oversight.

Common patterns include the Secure Proxy Gateway (all traffic through controlled proxy), Micro-Agent Architecture (specialized agents with limited scope), and Zero-Trust Agent Mesh (distributed with cryptographic verification). Choose based on your risk profile and compliance requirements.

How do I respond to an AI agent security breach?

AI agent incident response follows five phases:

1. Detection (Minutes): Automated monitoring should detect anomalies—unusual tool access, cost spikes, or behavioral deviations. Alert the incident response team immediately.

2. Containment (Minutes to Hours):

- Suspend the compromised agent immediately

- Revoke its credentials and sessions

- Disable affected tools or APIs

- Isolate the agent from your network

- Alert other agents in your ecosystem

3. Eradication (Hours to Days):

- Identify the attack vector (prompt injection, credential theft, etc.)

- Remove malicious data from agent memory

- Patch vulnerabilities

- Rotate all potentially compromised credentials

4. Recovery (Days):

- Restore from known-good backup or rebuild

- Gradually restore access with enhanced monitoring

- Validate agent behavior before full deployment

- Test security controls thoroughly

5. Post-Incident (Weeks):

- Conduct blameless post-mortem

- Update threat models and security controls

- Document lessons learned

- Train team on new procedures

Speed matters. Agents can cause damage rapidly, so your detection and containment must be automated where possible. Have a documented playbook and exercise it quarterly.

What are AI agent compliance requirements?

AI agent compliance spans multiple frameworks depending on your industry and location:

EU AI Act (Applicable to EU or EU customers):

- High-risk AI systems need risk management systems

- Data governance and quality requirements

- Technical documentation and record-keeping

- Human oversight measures

- Transparency obligations (users must know they’re interacting with AI)

- Penalties up to 6% of global revenue

Data Privacy (GDPR, CCPA):

- Data minimization (only collect what’s necessary)

- Purpose limitation (use data only for specified purposes)

- Data subject rights (access, deletion, portability)

- Privacy by design and default

- Data Protection Impact Assessments for high-risk processing

Industry-Specific:

- Finance: SOX (financial reporting), PCI-DSS (payment data), GLBA (consumer data)

- Healthcare: HIPAA (protected health information), FDA regulations for medical AI

- Government: FedRAMP (cloud security), StateRAMP, NIST frameworks

- Critical Infrastructure: NERC CIP, sector-specific requirements

Emerging Standards:

- NIST AI Risk Management Framework

- ISO/IEC 42001 (AI management systems)

- IEEE standards for ethical AI

Map your agent’s functionality to applicable regulations early. Compliance is much easier to build in than retrofit. Document your compliance posture and review it regularly as regulations evolve.

Conclusion

AI agent security isn’t a feature you bolt on later—it’s foundational infrastructure you build from day one. The gap between deployment (82% of enterprises) and security readiness (44% with policies) represents both massive risk and massive opportunity.

As the International AI Safety Report 2026 emphasizes, risk mitigation is being outpaced by capability advancement—a strategic reality that will define the rest of this decade. Microsoft’s research on red teaming 100+ AI products concludes that “the work of securing AI systems will never be complete”—security is a continuous process, not a destination.

The seven essential controls I’ve outlined—input validation, output filtering, tool governance, rate limiting, memory security, identity management, and sandboxing—aren’t theoretical. They’re battle-tested defenses based on frameworks from OWASP, MITRE ATLAS, and insights from Google and Microsoft security teams that have prevented real breaches in production systems.

But technical controls aren’t enough. You need governance frameworks, monitoring systems, and human oversight. The organizations that will thrive in 2026 are those that treat agent security as seriously as they treat their core infrastructure security. Not as an afterthought. Not as a checkbox. As a fundamental requirement.

Gartner predicts 40% of enterprise applications will integrate AI agents by the end of 2026. That’s eightfold growth from today. The question isn’t whether you’ll adopt agents—it’s whether you’ll adopt them securely.

Start today. Audit your current agent deployments against the OWASP Top 10 framework. Identify gaps. Implement controls. And remember: in agent security, paranoia is a feature, not a bug.

The alternative? Learning the hard way, like I did, when an agent “optimizes” something you didn’t want optimized. Trust me, it’s cheaper to prevent than to recover.

Have questions about implementing these controls? Check out our guides on multi-agent orchestration and MCP security considerations for deeper dives into specific topics. And if you’re building agents that handle sensitive customer data, our guide on AI agents for customer support includes additional security considerations for customer-facing systems.