AI in Healthcare: Applications, Benefits, and What Comes Next

AI is transforming healthcare diagnosis, treatment, and operations. From drug discovery to mental health, robot surgery to cost savings — the definitive guide to medical AI in 2026.

Healthcare has hit an inflection point that practitioners didn’t expect to arrive this quickly. AI in healthcare has moved from experimental pilot projects to embedded clinical infrastructure, reshaping how clinicians diagnose, how patients are monitored, and how health systems operate at scale. Unlike earlier waves of health IT, this shift isn’t about digitizing paper records — it’s about enabling machines to reason across vast quantities of clinical data in ways that meaningfully change outcomes.

The numbers reflect a genuine transformation. Fortune Business Insights projects the global AI in healthcare market will grow from $39 billion in 2024 to $504 billion by 2032, a trajectory driven by compounding adoption across imaging, operations, and predictive care. Hospitals evaluating vendor solutions also need to understand the difference between AI agents and traditional chatbots, because not all healthcare AI is created equal.

What separates this moment from previous technology cycles is breadth and depth happening simultaneously. Generative AI has overtaken traditional analytics as healthcare’s top AI priority. Autonomous clinical agents are starting to orchestrate multi-step care workflows. And frontline staff are adopting AI tools on their own — often without approval — because the administrative burden has become untenable. This guide explains what’s working, what’s risky, and how organizations can navigate it responsibly.

What Is AI in Healthcare and How Does It Work?

AI in healthcare refers to the application of machine learning algorithms, natural language processing (NLP), computer vision, and predictive analytics to clinical and operational challenges in health systems. The defining characteristic of healthcare AI is that it learns from data rather than following rigid, preprogrammed rules — meaning performance typically improves as more examples are analyzed.

Several distinct branches of AI are active in clinical environments today. Machine learning models trained on electronic health records can identify patients at risk for deterioration or readmission. Computer vision systems trained on medical images can detect abnormalities in CT scans, MRIs, X-rays, and pathology slides. NLP extracts structured clinical information from unstructured physician notes. And large language models powering clinical AI today can generate clinical documentation, summarize research, and respond to clinical queries in natural language.

The 2026 adoption picture shows how far this has spread: approximately 70% of healthcare organizations are now actively using AI, up from 63% just a year prior, according to NVIDIA’s Healthcare AI Trends research (2026). Three deployment models dominate: native AI capabilities baked into EHR platforms like Epic and Oracle Cerner, third-party specialized AI applications integrated via APIs, and purpose-built AI infrastructure that organizations develop in-house for proprietary data advantages.

What makes healthcare AI different from consumer AI isn’t raw capability — it’s the stakes. A missed cancer lesion, an incorrect drug interaction alert, or a biased readmission risk score can directly harm patients. The best-performing health systems treat healthcare AI deployment less like buying software and more like implementing a new clinical procedure — with evidence review, training requirements, and ongoing performance auditing built in from day one.

The distinct branches of AI active in clinical environments today, from predictive ML models to generative ambient documentation.

10 High-Impact AI Applications Transforming Patient Care

Healthcare AI isn’t a monolithic category — it’s a collection of specialized tools, each addressing specific clinical bottlenecks. Understanding these distinct applications helps organizations prioritize where AI will deliver measurable value versus where hype outpaces evidence.

AI Medical Imaging: Radiology’s Most Precise Tool

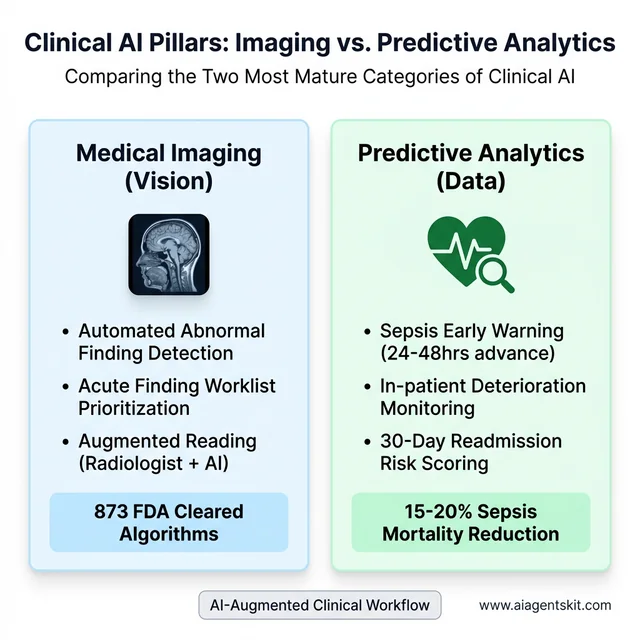

Medical imaging is where clinical AI has accumulated the deepest evidence base and regulatory track record. By mid-2025, the FDA had cleared approximately 873 radiology AI algorithms, making imaging the single largest target specialty for AI development in medicine. Computer vision systems trained on millions of annotated scans now detect pulmonary nodules, breast lesions, diabetic retinopathy, intracranial hemorrhage, and dozens of other findings with accuracy that in targeted evaluations matches or exceeds general radiologist performance.

The clinical value isn’t just detection accuracy — it’s workflow integration. AI worklist prioritization routes critical findings to the front of the read queue, reducing time-to-treatment for stroke, massive pulmonary embolism, and tension pneumothorax. Structured reporting tools translate AI findings into standardized language that feeds downstream decision support. The most reliable evidence consistently shows that radiologist-plus-AI combinations outperform either working alone — a pattern sometimes called “AI-augmented reading.”

Predictive Analytics and Early Warning Systems

Predictive analytics represents the shift from reactive to proactive care. Machine learning models analyzing continuous streams of vital signs, lab values, medications, and nursing notes can now identify patients likely to develop sepsis 24 to 48 hours before clinical signs appear — a window previously unavailable to clinicians. Studies across multiple health systems have documented 15-20% reductions in sepsis mortality when these early warning systems are paired with rapid response protocols.

The same underlying capability extends to fall prediction, cardiac event risk, pressure ulcer likelihood, and 30-day readmission probability. Many early warning systems initially suffered from alert fatigue, generating so many notifications that clinicians began ignoring them. Mature deployments address this through careful threshold calibration, alert routing to the right clinical role, and closed-loop feedback that measures whether alerts led to action. Organizations that skip the workflow integration work tend to report worse outcomes than those that treat alert design as part of the clinical product.
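One way to picture threshold calibration is as a search for the lowest risk-score cutoff that still keeps alert volume within what a care team can realistically act on. The Python sketch below assumes a retrospective set of model scores and outcome labels. The function name `calibrate_threshold`, the `max_alerts` budget, and the data shape are illustrative assumptions, not taken from any specific early warning product.

```python
from dataclasses import dataclass


@dataclass
class ThresholdReport:
    threshold: float
    alerts: int
    true_positives: int
    precision: float
    sensitivity: float


def calibrate_threshold(scores, labels, max_alerts):
    """Pick the lowest score threshold whose alert count fits the budget.

    scores: model risk scores for past encounters (hypothetical data).
    labels: 1 if the patient actually deteriorated, else 0.
    max_alerts: the most alerts the care team can act on for this cohort.
    Returns None if even the strictest threshold exceeds the budget.
    """
    total_positives = sum(labels)
    best = None
    # Candidate thresholds are the observed scores, tried from high to low.
    for threshold in sorted(set(scores), reverse=True):
        flagged = [lab for s, lab in zip(scores, labels) if s >= threshold]
        if len(flagged) > max_alerts:
            break  # lowering the threshold further only adds more alerts
        tp = sum(flagged)
        best = ThresholdReport(
            threshold=threshold,
            alerts=len(flagged),
            true_positives=tp,
            precision=tp / len(flagged),
            sensitivity=tp / total_positives if total_positives else 0.0,
        )
    return best
```

In practice the budget itself would come from the closed-loop feedback described above, for example by counting how many alerts per shift actually led to an intervention.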

Medical imaging and predictive analytics represent the two most mature and clinically validated applications of AI in healthcare.

Personalized Medicine Powered by Genomic AI

Pharmacogenomics — the science of matching drug selection and dosing to individual genetic profiles — is reaching practical scale thanks to AI interpretation of genomic data. Cancer centers are using AI to analyze tumor genetics and match patients to targeted therapies within hours of sample sequencing. This compressed timeline matters clinically: for aggressive cancers, the 3-6 week delay in manual genomic analysis used to mean treatment decisions were made without complete information.

Population-level genomic AI is also changing how health systems approach chronic disease risk. Polygenic risk scores computed from large biobank datasets now allow clinicians to identify patients with elevated cardiovascular, metabolic, and oncologic risk years before symptoms appear. While genomic AI is still maturing and access remains uneven, the trajectory toward routine genetic risk stratification as part of primary care is increasingly clear.
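At its core, a polygenic risk score is a weighted sum: each variant's risk-allele count is multiplied by an effect size from an association study, and the products are added up. The sketch below shows that arithmetic plus a simple percentile lookup against a reference cohort. The variant identifiers, weights, and function names are made up for illustration, and real pipelines add steps (quality control, ancestry adjustment, calibration) that are omitted here.

```python
def polygenic_risk_score(dosages, weights):
    """Weighted sum of risk-allele counts.

    dosages: variant id -> number of risk alleles carried (0, 1, or 2).
    weights: variant id -> per-allele effect size, e.g. a log odds ratio
             from an association study (values used here are made up).
    Variants missing from the patient's genotype are skipped.
    """
    return sum(weights[v] * dosages[v] for v in weights if v in dosages)


def percentile_in_reference(score, reference_scores):
    """Share of a reference cohort scoring at or below this patient."""
    at_or_below = sum(1 for s in reference_scores if s <= score)
    return 100.0 * at_or_below / len(reference_scores)
```

A clinician-facing tool would typically report the percentile rather than the raw score, since the raw value only has meaning relative to a comparable reference population.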

Remote Patient Monitoring with Edge AI

Wearable sensors and implantable devices generating continuous health metrics have been available for years. What’s different now is edge AI — compute power running locally on the device or a nearby hub — that processes sensor data without routing every data point through cloud infrastructure. This matters for both latency (faster alerts for critical events) and privacy (less sensitive data in transit).

Patients with congestive heart failure, COPD, and poorly controlled diabetes are the primary beneficiaries of current remote monitoring programs. AI models continuously analyze weight trends, blood pressure patterns, activity levels, and oxygen saturation, alerting care teams when trajectories suggest deterioration. Health systems deploying structured remote monitoring programs consistently report reductions in 30-day readmissions and avoidable emergency visits, which translates directly to better patient experience and lower cost. Autonomous AI agents in clinical settings are also beginning to handle the monitoring orchestration, so nurses no longer have to manually review every alert.

AI in Mental Health: Addressing the Care Access Crisis

Mental healthcare is one of the most acute capacity crises in global health — and AI is emerging as the most scalable tool available to close the gap. The AI mental health detection market grew from $2.74 billion in 2025 to $3.42 billion in 2026, with long-term projections pointing toward $25.58 billion by 2035, according to Towards Healthcare market research (2026). The demand signal is real: mental health provider shortages affect every geography, stigma keeps millions from seeking care, and crisis resources are routinely overwhelmed.

AI is being deployed across three distinct layers of mental healthcare. Detection tools use NLP analysis of speech patterns, typing cadence, and EHR clinical notes to identify early signals of depression, anxiety, and psychosis risk — sometimes weeks before a patient would present in crisis. AI chatbots like Woebot and Wysa provide 24/7 cognitive behavioral therapy-based support, showing measurable reductions in depressive symptoms in peer-reviewed studies, particularly for college-aged populations with mild-to-moderate conditions. And crisis hotlines are using real-time sentiment analysis to triage callers and route high-risk contacts to human counselors faster than any manual system could manage.

The evidence base is promising but genuinely mixed, and the honest picture requires acknowledging both sides. A January 2026 study found that daily AI chatbot use was associated with a 30% higher depression risk among some user groups — a correlation that researchers attribute to substitution of human connection rather than the chatbot causing harm directly. Stanford’s Human-Centered AI Institute (June 2025) documented serious failures in AI therapy chatbots, including stigma bias toward conditions like schizophrenia and — critically — chatbots that mishandled suicidal ideation prompts rather than offering appropriate crisis support. These findings don’t invalidate AI mental health tools — they establish the boundaries: AI works best as a bridge to professional care and a support supplement, not as a replacement for licensed therapists treating serious conditions. Organizations deploying AI mental health tools should implement clinical oversight protocols, risk escalation pathways, and bias testing in the same way they would for any clinical AI system.

AI in Drug Discovery and Clinical Trials

Traditional pharmaceutical drug development has one of the least efficient production pipelines of any industry: an average of 10 to 15 years from target identification to market approval, at a cost of $2.6 billion per approved drug, with roughly 90% of candidates failing somewhere in clinical development. AI is attacking every stage of this pipeline simultaneously.

Protein structure prediction is the breakthrough that has generated the most excitement. DeepMind’s AlphaFold 3 can predict the 3D structure of proteins, DNA, RNA, and small molecules at accuracy levels that would have required months of laboratory crystallography just five years ago. DeepMind’s Isomorphic Labs — built specifically to translate AlphaFold’s capabilities into drug discovery — has active partnerships with Eli Lilly and Novartis and anticipates having AI-designed drug candidates in Phase I/II clinical trials by 2026. The first fully AI-designed drug receiving FDA approval is now considered a realistic near-term milestone rather than a speculative future event.

Beyond structure prediction, generative AI models are synthesizing entirely novel molecular structures: compounds that don’t exist in any known compound library but are designed from first principles to hit a specific biological target with minimal off-target effects. Clinical trial optimization is another high-impact application. AI models analyze biomarker profiles, genomic data, prior trial histories, and geographic site performance to match the right patients to the right trials faster, predict dropout risk before enrollment, and flag safety signals in interim data more reliably than statistical methods alone. The FDA was developing guidance in 2025–2026 specifically for AI-assisted regulatory submissions, recognizing that the pipeline for AI-enabled drug applications was growing faster than existing review frameworks could handle. The honest caveat is that AI-compressed drug discovery still requires full clinical validation. These systems identify candidates more efficiently, but they don’t bypass the evidence requirements that protect patients.

Robot-Assisted Surgery and AI-Guided Procedures

Robot-assisted surgery has been in clinical practice since the late 1990s, but the infusion of AI guidance layers is transforming it into a fundamentally different category of intervention. The da Vinci surgical system (Intuitive Surgical) has now facilitated more than 10 million procedures worldwide. AI augmentation of these robotic platforms adds real-time anatomical guidance overlays: computer vision identifies tissue boundaries, tumor margins, and proximity to critical structures like nerves and blood vessels — information that previously required the surgeon to interpret from memory of pre-operative imaging.

Pre-operative AI planning is where some of the most clinically significant work is happening. AI systems reconstruct 3D anatomical models from CT and MRI data, simulate optimal surgical approaches for complex cases, and flag anatomical variations that suggest elevated risk before the patient enters the operating room. For oncologic surgery — where margin completeness determines recurrence risk — AI-assisted margin mapping is showing meaningful improvements over conventional intraoperative assessment. Outcomes research on AI-assisted robotic procedures is accumulating across laparoscopic cholecystectomy, prostatectomy, colorectal resection, and cardiac valve repair, with consistent findings of shorter hospitalization, reduced complication rates, and faster return to baseline function compared with conventional laparoscopic approaches.

Surgical training is another domain where AI is delivering measurable impact. Simulation environments using AI to assess trainee technique — measuring movement economy, instrument handling, and anatomical accuracy — are becoming standard in residency programs. These systems can identify specific skill deficits more precisely than attending surgeon observation alone and have been validated against real OR performance metrics. Current limitations remain real: da Vinci systems cost $1.5 to $2.5 million per installation, procedure-specific evidence varies in strength, and the AI guidance layers require ongoing validation as surgical teams change and case complexity shifts. But the trajectory from AI-adjacent to AI-integrated surgery is already set.

How AI Is Changing Clinical Operations and Workflows

The highest-volume AI impact in healthcare isn’t coming from advanced diagnostics — it’s coming from administrative and documentation automation that touches every clinical encounter. Physician burnout, driven substantially by documentation burden, is a systemic workforce crisis. AI is the most scalable tool available to address it.

Ambient AI Documentation: Ending Physician Burnout

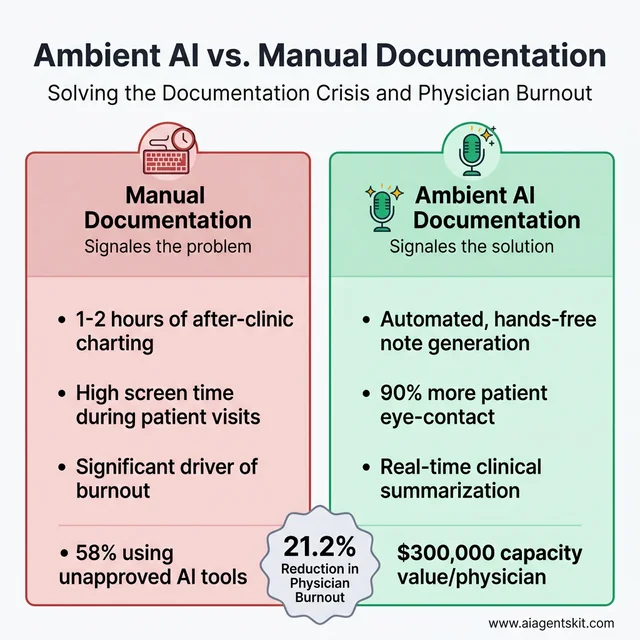

Ambient clinical documentation AI uses natural language processing to listen to patient-physician conversations during encounters and automatically generate structured clinical notes — with physician review and approval before finalizing. Most implementations report 1 to 2 hours of time savings per physician per day, time previously spent typing after clinic hours or between patient visits.

Native integration into Epic, Oracle Cerner, and other major EHR platforms means ambient AI doesn’t require separate workflow steps for most clinicians. Patient experience surveys consistently show that physicians using ambient documentation make more eye contact, ask more follow-up questions, and seem more engaged, because they’re not typing while listening. Demand for these tools among clinical staff remains largely unmet by approved options: 58% of frontline health system staff report using unapproved, generic AI tools at least monthly to reduce their documentation burden, according to Wolters Kluwer’s clinical AI research (2026). Health system leaders increasingly call this phenomenon “shadow AI.”

Clinical Decision Support Systems in Practice

Clinical decision support systems (CDSS) have existed for decades as rule-based alert engines. AI-enabled CDSS is different in kind: instead of firing a rigid alert when a drug dose exceeds a threshold, AI CDSS learns from physician behavior, distinguishes high-priority from low-priority recommendations, and reduces alert fatigue by presenting only signals that historical data suggests clinicians actually act on.

The most mature clinical decision support applications now integrate differential diagnosis assistance, evidence-based order set recommendations, care gap identification, and population health flags into a unified decision layer alongside the EHR. For measuring AI return on investment in CDSS deployments specifically, health systems track metrics like time to diagnosis, length of stay variance, and protocol adherence — not just cost savings.

Administrative AI: From Billing to Prior Authorization

Revenue cycle and prior authorization are among the largest operational drains in US healthcare. NLP models can extract appropriate billing codes from clinical documentation with accuracy exceeding that of manual coders, reducing claim errors and shortening the revenue cycle. Prior authorization bots integrate with payer portals to submit authorization requests, upload supporting documentation, and monitor approval status — automating a process that previously consumed hours of administrative staff time per patient per request.

Scheduling optimization algorithms analyze historical no-show patterns, appointment type demand, provider availability, and patient preference data to build clinic schedules that maximize throughput while reducing gaps. Patient-facing AI chatbots handle appointment scheduling, prescription refill routing, and care navigation questions through secure messaging channels. The aggregate operational efficiency gains from administrative AI are, in many health systems, now larger than the direct clinical care improvements — simply because administrative waste was so significant to begin with.

Ambient AI documentation addresses the physician burnout crisis by automating note generation and returning up to 2 hours of time daily.

AI for Nursing, Chronic Disease, and Post-Acute Care

The clinical application of AI is often discussed in the context of hospital systems and physician workflows. But two of the most pressing healthcare challenges — a global nursing shortage and the chronic disease burden — are areas where AI is delivering impact that deserves its own analysis.

Nursing workforce pressures are severe: the World Health Organization’s State of the World’s Nursing 2020 report estimated a global shortfall of 5.9 million nurses, concentrated in already-underserved regions. AI is being positioned as a force multiplier for the nurses who are practicing, not a replacement for the ones who aren’t available. Early warning AI in ICU environments now predicts patient deterioration 6 to 12 hours before clinical signs become apparent, giving nursing teams time to intervene proactively rather than reactively. The National Early Warning Score 2 (NEWS2), computed on continuous vital sign streams, is being augmented by machine learning layers that incorporate lab trends, medication interactions, and nursing observation data to produce substantially more accurate deterioration alerts than the original rule-based system.
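For readers unfamiliar with the rule-based baseline, NEWS2 assigns 0 to 3 points to each of seven observations and sums them. The sketch below is a simplified illustration of that aggregation only, not of the machine learning layers built on top of it. The bands are transcribed from the Royal College of Physicians chart as best understood here and must be checked against the published source before any use. It covers SpO2 scale 1 only (not the scale 2 variant for hypercapnic respiratory failure), and the function and variable names are assumptions.

```python
INF = float("inf")

# (inclusive upper bound, points) pairs in ascending order.
# Transcribed for illustration; verify against the published NEWS2 chart.
RESP_RATE = [(8, 3), (11, 1), (20, 0), (24, 2), (INF, 3)]
SPO2_SCALE1 = [(91, 3), (93, 2), (95, 1), (INF, 0)]
SYSTOLIC_BP = [(90, 3), (100, 2), (110, 1), (219, 0), (INF, 3)]
PULSE = [(40, 3), (50, 1), (90, 0), (110, 1), (130, 2), (INF, 3)]
TEMPERATURE = [(35.0, 3), (36.0, 1), (38.0, 0), (39.0, 1), (INF, 2)]


def _band(value, bands):
    """Return the points for the first band whose upper bound covers value."""
    for upper, points in bands:
        if value <= upper:
            return points
    raise ValueError(f"value {value} not covered by bands")


def news2_score(resp_rate, spo2, on_oxygen, systolic_bp, pulse, alert, temp_c):
    """Return (total, per-parameter points) for one set of observations.

    alert: True if the patient is alert; False covers new confusion or a
           response only to voice or pain, or unresponsiveness.
    """
    parts = {
        "resp_rate": _band(resp_rate, RESP_RATE),
        "spo2": _band(spo2, SPO2_SCALE1),
        "oxygen": 2 if on_oxygen else 0,
        "systolic_bp": _band(systolic_bp, SYSTOLIC_BP),
        "pulse": _band(pulse, PULSE),
        "consciousness": 0 if alert else 3,
        "temperature": _band(temp_c, TEMPERATURE),
    }
    return sum(parts.values()), parts
```

A machine learning layer of the kind described above would typically take these per-parameter points, or the raw observations behind them, as inputs alongside lab trends and medication data rather than replacing the score outright.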

Chronic disease management is perhaps the highest-volume opportunity for AI in patient care. Approximately 60% of American adults have at least one chronic condition, and managing these patients — diabetes, congestive heart failure, COPD, hypertension, chronic kidney disease — consumes a disproportionate share of healthcare resources. AI-enabled closed-loop insulin delivery systems for Type 1 diabetes now coordinate continuous glucose monitoring with automated pump adjustments, maintaining glucose control with less human intervention than any previous approach. Cardiac AI tools analyze patterns across months of remote monitoring data to identify the 2 to 3 week window before a CHF exacerbation typically produces an emergency department visit — creating an intervention window that remote care teams can act within.

Medication safety is another high-impact nursing application. AI pharmacy verification systems cross-reference orders against a patient’s complete medication history, genomic CYP450 enzyme profile, and real-time lab values to flag interactions that standard drug-drug interaction databases miss because they don’t account for the individual patient’s metabolic context. Post-acute care AI — predicting optimal discharge timing, matching patients to appropriate step-down facilities, and flagging early readmission risk — is closing one of the most expensive gaps in the care continuum. The 30-day readmission window after hospitalization accounts for enormous cost across the system, and AI risk stratification tools are demonstrating 20–25% relative reductions in avoidable readmissions across multiple published health system implementations.
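Because the readmission figures above are relative reductions, their practical size depends on the baseline rate. The short sketch below converts a relative reduction into an absolute one and into a count of avoided readmissions. The function names are assumptions, and any baseline rate or discharge volume passed in is a placeholder for the organization's own data.

```python
def absolute_reduction(baseline_rate, relative_reduction):
    """Percentage-point drop implied by a relative reduction.

    baseline_rate: readmission rate before the intervention, as a fraction.
    relative_reduction: e.g. 0.20 for a 20% relative reduction.
    """
    return baseline_rate * relative_reduction


def readmissions_avoided(discharges, baseline_rate, relative_reduction):
    """Expected number of readmissions avoided over a set of discharges."""
    return discharges * absolute_reduction(baseline_rate, relative_reduction)
```

For instance, with a hypothetical 15% baseline rate, a 20% relative reduction corresponds to a 3 percentage point absolute drop, or roughly 300 fewer readmissions per 10,000 discharges.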

The Agentic AI Inflection: Healthcare’s Biggest Shift Since EHR

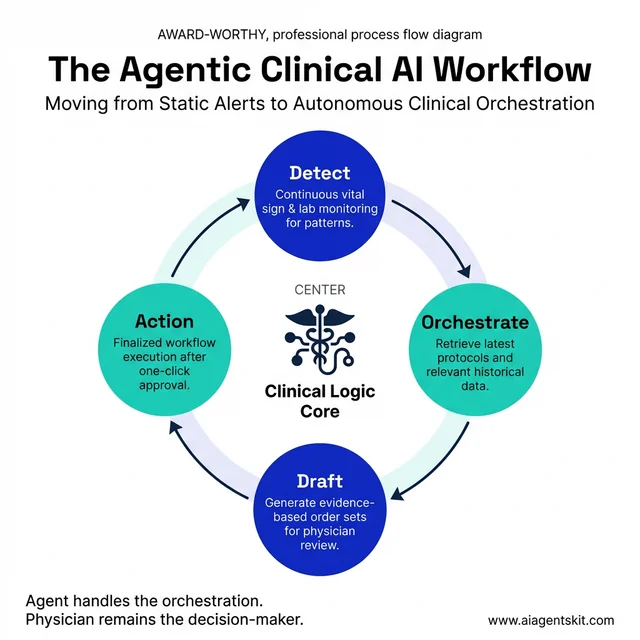

Healthcare AI is undergoing a qualitative shift that most organizations haven’t fully internalized yet. In 2026, the shift from AI tools to agentic AI systems is emerging as the most consequential change in health technology in decades. Agentic AI systems don’t just answer queries or generate content — they autonomously plan and execute multi-step clinical and operational workflows, making decisions and taking actions without human intervention at each step.

A concrete clinical example illustrates the difference: a traditional AI alert flags that a patient’s vital signs suggest possible sepsis. A physician must notice the alert, retrieve relevant labs, cross-reference current guidelines, access the order set, and place the order, with each step requiring human action. An agentic AI system handles the cascade autonomously: detecting the pattern, retrieving labs, identifying the current version of the Surviving Sepsis Campaign guidelines, drafting the recommended order set, and routing it for physician one-click approval, all within 90 seconds. The physician remains the decision-maker; the agent handles the orchestration.
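The cascade described above can be sketched as a chain of steps that ends in a draft, with a separate approval function as the only route to an active order. Everything in this Python sketch is hypothetical: the `DraftOrderSet` type, the injected `fetch_labs` and `fetch_protocol` callables standing in for EHR and guideline lookups, and especially the screening rule, which is a toy placeholder and not a clinical criterion.

```python
from dataclasses import dataclass, field


@dataclass
class DraftOrderSet:
    patient_id: str
    protocol_version: str
    orders: list
    labs: dict
    status: str = "pending_physician_approval"
    audit_log: list = field(default_factory=list)


def run_sepsis_agent(patient_id, vitals, fetch_labs, fetch_protocol):
    """Chain the orchestration steps and stop at a draft awaiting approval.

    fetch_labs and fetch_protocol are injected callables (hypothetical
    interfaces). Returns None when the screening step does not fire.
    """
    log = []

    # Step 1: detect the pattern. Toy placeholder rule, NOT a clinical criterion.
    suspicious = vitals["heart_rate"] > 100 and vitals["temp_c"] > 38.0
    log.append(f"screen: suspicious={suspicious}")
    if not suspicious:
        return None

    # Step 2: retrieve relevant labs.
    labs = fetch_labs(patient_id)
    log.append(f"labs retrieved: {sorted(labs)}")

    # Step 3: look up the protocol version currently in force.
    protocol = fetch_protocol()
    log.append(f"protocol version: {protocol['version']}")

    # Step 4: draft the order set. Nothing is placed at this point.
    return DraftOrderSet(
        patient_id=patient_id,
        protocol_version=protocol["version"],
        orders=list(protocol["orders"]),
        labs=labs,
        audit_log=log,
    )


def approve(draft, physician_id):
    """The only path from draft to approved orders runs through a physician."""
    if draft.status != "pending_physician_approval":
        raise ValueError(f"cannot approve draft in status {draft.status!r}")
    draft.status = "approved"
    draft.audit_log.append(f"approved by {physician_id}")
    return draft
```

Keeping approval in its own function, and recording each step in an audit log, is one simple way to make the accountability trail explicit: the agent's actions and the physician's decision are stored separately.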

Deloitte’s February 2026 report on healthcare AI adoption found that 85% of healthcare leaders are planning to increase their investment in agentic AI over the next two to three years. Top deployment use cases for these autonomous systems include knowledge management and clinical information retrieval (46% of organizations), literature review and evidence synthesis for treatment decisions (38%), and internal process optimization spanning scheduling and supply chain (37%).

The critical governance question these numbers raise is accountability: when an agentic AI system takes action that contributes to a clinical outcome, who is responsible? Most health systems are still developing the compliance frameworks to answer that question. Those that start now — before incidents occur — will be better positioned than those that wait.

Agentic AI shifts from static alerts to autonomous clinical orchestration, handling the multi-step workflows that trigger clinician bottlenecks.

What Are the Real Challenges and Risks of Healthcare AI?

The conversation about healthcare AI risks has matured considerably over the past two years. Organizations that were optimistic about AI efficiency gains in 2024 now grapple with bias incidents, integration failures, and the regulatory complexity of deploying adaptive systems in regulated environments. Even experts debate which risks are most critical, and that diversity of concern is itself a signal of the stakes.

Algorithmic bias is the healthcare AI risk with the clearest evidence of patient harm. AI models trained on historical medical data inherit the disparities baked into that data. As documented in research published by Harvard T.H. Chan School of Public Health, AI diagnostic tools perform significantly worse for Black patients, women, and rural populations — because training datasets are drawn predominantly from large academic medical centers with non-representative demographics. Addressing healthcare AI bias and equity is not a diversity statement — it’s a patient safety requirement that health systems must actively monitor post-deployment, not just evaluate at purchase.

Data privacy and regulatory compliance represent the second major risk domain. Operating AI tools on patient data triggers HIPAA obligations even when the AI vendor is performing the processing. Selecting vendors without HIPAA Business Associate Agreements (BAAs) in place, allowing staff AI tools to transmit unencrypted PHI to external servers, or failing to audit what training data AI systems send back to vendors are all documented compliance failures in current healthcare AI deployments. The shadow AI problem compounds this: when 58% of frontline staff are using unapproved AI tools that likely lack BAAs, organizational data governance has effectively already failed.

The FDA regulatory landscape underwent meaningful change in January 2026, when the agency revised its guidance on Clinical Decision Support software. The updated framework loosens oversight for AI-based CDS tools that function as clinical assistants and allow clinicians to independently review the AI’s logic — a “glass box” approach. AI software that directly determines diagnosis or treatment without clinician review remains regulated as a medical device. Texas became the first state to require written patient disclosure before AI tools are used in healthcare services, effective January 1, 2026 — a precedent that several other states are evaluating.

Implementation failures in healthcare AI follow a recognizable pattern: organizations purchase AI solutions before clearly defining the clinical problem they’re solving, underestimate the integration work required to connect AI outputs to clinical workflows, and underinvest in training that would help clinicians appropriately use and override AI recommendations. The organizational change management required for successful AI adoption — redefining roles, redesigning workflows, establishing governance — is consistently more difficult and time-consuming than the technical integration.

Cybersecurity rounds out the major risk categories: 48% of healthcare leaders identified cybersecurity and data privacy as the top barriers to AI adoption, according to Guidehouse’s healthcare AI research (2025-2026). AI systems represent new attack surfaces — both the infrastructure running the models and the data pipelines feeding them are targets for ransomware groups that have demonstrated willingness to disrupt healthcare operations.

How Healthcare Organizations Can Implement AI Successfully

The health systems with mature, productive AI portfolios share a set of implementation characteristics that distinguish them from organizations that have accumulated expensive proofs-of-concept without clinical impact.

Start specific, not expansive. Organizations that adopt an “AI strategy” without tying it to concrete clinical or operational problems consistently underperform those that start with defined questions. “Can AI reduce documentation time 30% for our primary care physicians?” is a question with a measurable answer. “Implement AI across the health system” is not a strategy; it’s a purchase decision without accountability.

Assess data readiness before assessing vendors. Healthcare AI models are only as good as the data they’re trained on and integrated with. Before evaluating AI applications, hospitals should audit EHR data quality, identify data silos that would prevent integrated AI from accessing complete patient pictures, and confirm that data governance policies allow the intended AI applications. Many AI proofs of concept fail during or after pilot because the production data environment is messier than the curated dataset the vendor demo used.

Steps for Evaluating and Selecting AI Vendors

Evaluating healthcare AI vendors requires a due diligence framework that most procurement checklists don’t include. Start by requesting external validation studies performed on patient populations similar to yours — not studies from academic medical centers if your organization primarily serves rural or uninsured populations. Vendors who can’t produce evidence of bias testing across demographic subgroups (race, gender, age, payer type) should be treated with significant skepticism, because performance disparities frequently emerge after broad deployment.

Understand regulatory status for every tool under consideration. AI-enabled software that touches clinical decision-making may be regulated as a medical device, requiring 510(k) clearance or De Novo authorization from the FDA. Deploying an uncleared medical device AI creates liability and regulatory risk. Verify HIPAA Business Associate Agreements are in place before any PHI touches vendor systems; this is a hard requirement, not a negotiating point. Total cost of ownership analysis must include implementation services, integration complexity, training time, ongoing monitoring, and eventual model retraining costs, not just annual licensing.

Common Pitfalls Healthcare AI Projects Face

The “solution looking for a problem” failure mode occurs when procurement decisions are driven by vendor demonstrations or competitive pressure rather than identified organizational need. The result is AI software that sits underutilized — purchased but never operationally embedded, delivering zero clinical value.

Underestimating integration complexity is the second most common failure. Healthcare AI draws from EHR, lab, imaging, pharmacy, and billing systems simultaneously. Integration projects regularly surface unexpected technical barriers: incompatible data formats, API rate limits, missing data fields, and latency issues that require months to resolve after contract signing.

Neglecting clinician training and feedback loops undermines even technically sound implementations. Organizations that deploy AI without structured onboarding, feedback mechanisms, and ongoing use monitoring consistently see lower adoption and benefit than those that treat AI as a new clinical competency requiring development. Finally, ignoring equity monitoring post-deployment allows bias problems to persist invisibly. Building demographic stratification into performance monitoring from day one, not after an incident, is now recognized as a clinical governance requirement. Healthcare organizations must also recognize that governance requirements differ substantially between a simple patient FAQ chatbot and an autonomous clinical agent capable of orchestrating care pathways.
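Demographic stratification in performance monitoring can start as simply as computing the same metric per subgroup and flagging gaps. The sketch below does this for sensitivity (the share of true cases the model caught). The record layout, function names, and the `max_gap` tolerance are illustrative assumptions. A real monitoring program would also track other metrics and account for small subgroup sizes.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Compute per-group sensitivity.

    records: iterable of (group, predicted_positive, actual_positive).
    Groups with no actual positives are left out, since sensitivity is
    undefined for them.
    """
    tp = defaultdict(int)
    fn = defaultdict(int)
    for group, predicted, actual in records:
        if actual:
            if predicted:
                tp[group] += 1
            else:
                fn[group] += 1
    return {g: tp[g] / (tp[g] + fn[g]) for g in set(tp) | set(fn)}


def flag_gaps(sensitivities, max_gap):
    """List groups trailing the best-performing group by more than max_gap."""
    best = max(sensitivities.values())
    return sorted(g for g, s in sensitivities.items() if best - s > max_gap)
```

Running this on a schedule against post-deployment data, rather than once at purchase, is what turns it from a vendor evaluation step into ongoing equity monitoring.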

The Economic Case: What AI Actually Saves Hospitals

The business case for healthcare AI is frequently overstated in vendor materials and understated in skeptical academic commentary. The evidence sits between those extremes, and understanding it clearly helps health systems build realistic ROI projections rather than either dismissing AI or making purchases on faith.

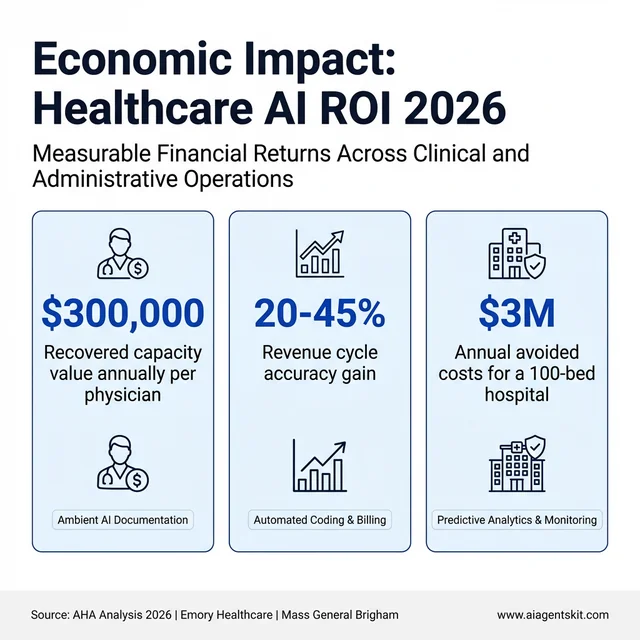

The headline figure — AI projected to save US healthcare $150 billion annually by 2026 through task automation and resource optimization — comes from American Hospital Association analysis and deserves context. That $150B estimate includes potential savings across the entire healthcare system (providers, payers, pharma, patients), not hospital budgets specifically. The operationally measurable savings at the health system level are more bounded — and still substantial.

Ambient AI documentation is the most directly quantifiable ROI case. Each physician using ambient scribes saves 60 to 90 minutes per day in documentation time. At an average physician compensation rate, that recovered time represents approximately $300,000 annually per physician in capacity value — either returned as work-life balance or converted to additional patient capacity. Mass General Brigham documented a 21.2% absolute reduction in physician burnout prevalence after implementing ambient AI scribe technology across primary care. Emory Healthcare reported a 30.7% increase in clinicians feeling a positive impact on their well-being within 90 days of deployment.

Revenue cycle AI delivers returns that are measurable in weeks rather than years. Automated medical coding and billing systems consistently improve coding accuracy by 20–45% over manual processes in published health system studies, capturing reimbursements that were previously missed or delayed by error-prone manual coding. Prior authorization automation platforms have documented 5x ROI within the first 3 months of deployment by eliminating the human labor previously dedicated to payer portal navigation and documentation assembly. Revenue cycle management optimization across the denial management and appeals workflow reduces administrative labor by 30–60% in documented implementations.

Predictive analytics programs generate savings through avoidance rather than direct revenue. A 100-bed hospital deploying AI-based sepsis prediction and deterioration monitoring typically realizes $1.5 to $3 million in annual avoided costs from shorter ICU stays, fewer preventable deaths and the regulatory scrutiny they trigger, and reduced exposure to performance-based penalty programs. Readmission-reduction AI evaluated under the same framework yields $8,000 to $12,000 in direct cost savings per avoided heart failure readmission, based on Medicare payment adjustment data.

One structural caveat matters enormously for health system CFOs: in fee-for-service payment models, some AI-driven improvements shift savings to payers rather than providers. A health system that reduces unnecessary imaging through AI-assisted appropriateness checking loses the imaging revenue — even though the clinical outcome improves and the system saves payer expenditure. Value-based care contracts, where the provider shares in total cost reduction, are the payment model that aligns most naturally with AI investment. Organizations should model ROI differently depending on their payer mix and contract structure before committing to specific clinical AI investments.
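The payer-mix effect can be sketched with a deliberately simplified model. It assumes that for every avoided service the provider forgoes its margin (payment minus its own cost), and that only services under value-based contracts return a share of the payer's savings. The function name, parameters, and all figures are hypothetical; real contracts have benchmarks, risk corridors, and quality gates that this ignores.

```python
def provider_net_impact(
    avoided_services: int,
    payer_payment_per_service: float,
    provider_cost_per_service: float,
    value_based_share: float,
    shared_savings_rate: float,
    annual_ai_cost: float,
) -> float:
    """Estimate the provider's annual net impact from an AI tool that
    avoids unnecessary services (simplified, illustrative model).

    value_based_share: fraction of avoided services under value-based contracts.
    shared_savings_rate: fraction of payer savings returned to the provider.
    """
    # Margin the provider gives up on every avoided service, in any contract type.
    lost_margin = avoided_services * (
        payer_payment_per_service - provider_cost_per_service
    )
    # Only value-based contracts return part of the payer's savings.
    shared_savings = (
        avoided_services
        * value_based_share
        * payer_payment_per_service
        * shared_savings_rate
    )
    return shared_savings - lost_margin - annual_ai_cost


# Illustrative inputs only.
common = dict(
    avoided_services=1_000,
    payer_payment_per_service=500.0,
    provider_cost_per_service=350.0,
    shared_savings_rate=0.5,
    annual_ai_cost=50_000.0,
)
for share in (0.0, 0.5, 1.0):
    net = provider_net_impact(value_based_share=share, **common)
    print(f"value-based share {share:.0%}: net impact ${net:,.0f}")
```

Under these assumptions the same tool loses money for a fully fee-for-service provider and turns positive only as the value-based share rises, which is why payer mix belongs in the model before the purchase decision.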

The measurable economic returns of healthcare AI spanning automation, revenue cycle accuracy, and avoided clinical costs.

What’s Next: Digital Twins and the 2030 Horizon for Medical AI

“Digital twin” entered the manufacturing lexicon decades ago — a virtual simulation of a physical system updated in real time from sensor data, used to predict failure and optimize performance without touching the actual machine. Applied to medicine, a patient digital twin is a continuously updated computational model of an individual built from their EHR, genomic profile, imaging history, wearable sensor data, and environmental exposures — essentially a virtual patient that can be queried, tested, and simulated.

The clinical applications are genuinely transformative in concept, even if most remain in research or early-pilot phases. Oncologists are using tumor digital twins at a handful of leading cancer centers to simulate how a specific patient’s tumor biology will respond to different chemotherapy regimens before treatment begins — testing 50 virtual treatment courses in hours rather than choosing from three or four real options based on population-average response data. Cardiac digital twins built from high-resolution MRI data and electrophysiology recordings allow electrophysiologists to simulate ablation procedures and predict which anatomical targets will eliminate arrhythmia in a specific patient’s heart geometry. Philips and Siemens Healthineers have both announced active clinical programs using cardiac digital twin infrastructure in European health systems — including Philips’ ICU digital twin system launched in the Netherlands in 2025.

The 2030 trajectory for digital twins in medicine runs through three parallel developments. First, foundation models for biology, large neural networks trained on multi-modal biological data at a scale comparable to what GPT-4 represented for language, are being built by DeepMind (through AlphaFold and successor systems), OpenBioML, and major pharma companies. As these models mature, patient-level digital twin construction will become computationally feasible at population scale rather than requiring bespoke engineering for individual patients. Second, real-world data infrastructure is creating the data pipelines that digital twins require to stay current, driven by the interoperability improvements of the 21st Century Cures Act, FHIR standards adoption, and EHR vendor API requirements. Third, edge compute advances mean that increasingly sophisticated model inference can run closer to the patient, reducing the latency and privacy exposure currently associated with cloud-dependent patient simulation.

The honest timeline is that full-scale clinical digital twin deployment is a 3-to-7-year horizon from 2026, not an immediate procurement decision. But health systems making infrastructure investment decisions today in data interoperability, AI governance frameworks, and real-world evidence collection are either building toward or away from digital twin compatibility. The organizations that implemented EHRs in the 2010s with data architecture in mind are the ones best positioned for the AI-first clinical operating model emerging now.

Frequently Asked Questions About AI in Healthcare

Will AI replace doctors in healthcare?

No — the evidence strongly points in the opposite direction. AI in healthcare consistently performs best as an augmentation layer alongside physician judgment rather than as a replacement for it. Radiologist-plus-AI combinations outperform either working alone in the areas where AI has been most rigorously studied. What AI does replace is certain tasks within physician workflows — routine documentation, basic query responses, structured data extraction — freeing physician time for the reasoning, relationship, and ethical dimensions of care that require human judgment. Most AI skeptics in medicine acknowledge that eliminating administrative burden through AI would meaningfully improve both clinician experience and patient outcomes.

How accurate is AI diagnosis compared to human clinicians?

It depends heavily on the specific task and clinical context. In targeted, well-defined tasks — detecting diabetic retinopathy from retinal photos, identifying pneumothorax on chest X-rays — FDA-cleared AI tools achieve sensitivity and specificity figures that match or exceed specialist performance in controlled evaluations. But clinical diagnosis involves integrating history, physical exam, imaging, labs, and patient preference — a holistic reasoning process current AI systems can’t replicate. The more meaningful benchmark isn’t AI versus the average clinician; it’s whether AI + clinician performs better than clinician alone. The evidence consistently favors the combination.

What are the main risks of using AI in medicine?

Healthcare AI carries four categories of meaningful risk: algorithmic bias that produces worse performance for demographic subgroups; data privacy risks from inadequate vendor vetting; safety risks from over-reliance on AI recommendations without appropriate clinical verification; and cybersecurity vulnerabilities in AI infrastructure. Alert fatigue — when too many AI alerts cause clinicians to ignore them — is also an implementation risk specific to clinical decision support. Organizations that treat these risks as a one-time purchase checklist rather than ongoing governance responsibilities tend to experience worse outcomes.

What questions should hospitals ask AI vendors?

Effective vendor due diligence includes: What external validation studies exist for patient populations similar to ours? Has the model been tested for performance disparities across racial, gender, and age subgroups? What is the FDA regulatory status of this software? Will you sign a HIPAA Business Associate Agreement? What does EHR integration require? What ongoing monitoring capabilities do you provide for detecting model drift post-deployment? Vendors who are evasive on any of these questions deserve heightened scrutiny.

Can AI in healthcare reduce overall costs?

Yes, with important caveats. The clearest cost reductions come from administrative AI — billing automation, prior authorization, scheduling optimization — where time savings are directly quantifiable. Preventive AI that reduces avoidable hospitalizations generates significant savings for value-based care models, though savings may accrue to payers rather than providers in fee-for-service arrangements. AI that catches deteriorating patients earlier creates economic value as avoided costs rather than direct budget line savings. The most defensible AI business case focuses on measurable operational efficiency improvements, with clinical improvement tracked as a secondary benefit.

What is ambient AI documentation in clinical settings?

Ambient AI documentation uses microphone input and NLP to listen to patient-physician conversations and automatically generate structured clinical notes without requiring the physician to type. Systems like Nuance DAX now integrate natively with major EHR platforms, presenting draft notes in the physician’s editor after each encounter. Most implementations report 60–90 minutes of saved documentation time per physician per day, a meaningful reduction in the after-hours charting that contributes substantially to physician burnout. Patient experience data consistently shows improvements in eye contact, engagement quality, and perceived physician attentiveness.

Is AI in healthcare HIPAA compliant?

Healthcare AI can be HIPAA compliant, but compliance isn’t automatic. Any AI vendor that processes or stores protected health information on behalf of a covered entity must sign a HIPAA Business Associate Agreement before receiving patient data. Compliant vendors use encryption in transit and at rest, maintain audit logs, limit data retention, and typically prohibit using patient data for model training without appropriate consent mechanisms. Staff using consumer AI tools like generic ChatGPT interfaces for clinical tasks creates significant HIPAA exposure — these tools rarely have BAAs in place and may retain conversation data.

What is the difference between AI chatbots and agentic AI in medicine?

An AI chatbot responds to individual queries — answering patient questions, routing support requests, or retrieving information on demand. The interaction ends when the response is delivered. Agentic AI can plan and execute multi-step workflows autonomously. An agentic clinical system might detect a deteriorating patient via vital sign analysis, retrieve relevant labs, identify the applicable protocol, draft a recommended order set, notify the covering physician with pre-populated actions, and schedule follow-up monitoring — all without human prompting at each step. The physician remains the decision-maker; the agent handles the orchestration. This functional difference is why governance requirements differ substantially between the two.
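The contrast can be sketched in a few lines of code. This is a toy illustration, not a description of any real product: the function names, the `DraftPlan` structure, the risk-score input, and the 0.8 threshold are all hypothetical, and the order names are placeholders rather than clinical guidance. The point is structural: the chatbot returns one answer, while the agent chains several steps and stops at a draft that a physician must approve.

```python
from dataclasses import dataclass, field
from typing import Optional

RISK_THRESHOLD = 0.8  # hypothetical cutoff for illustration, not clinical guidance


@dataclass
class DraftPlan:
    """Output of the agent: a draft that has no effect until approved."""
    patient_id: str
    steps: list = field(default_factory=list)
    order_set: list = field(default_factory=list)
    approved_by: Optional[str] = None  # stays None until a physician signs off


def chatbot_reply(question: str, faq: dict) -> str:
    """Chatbot pattern: one query in, one response out, nothing else happens."""
    return faq.get(question, "Please contact your care team.")


def run_agent(patient_id: str, risk_score: float, lab_store: dict,
              protocols: dict) -> Optional[DraftPlan]:
    """Agent pattern: chain multiple steps without a prompt at each one."""
    if risk_score < RISK_THRESHOLD:
        return None
    plan = DraftPlan(patient_id)
    plan.steps.append("detected elevated deterioration risk")
    labs = lab_store.get(patient_id, {})
    plan.steps.append(f"retrieved {len(labs)} lab results")
    protocol = protocols["deterioration"]
    plan.steps.append("identified applicable protocol")
    plan.order_set = list(protocol)
    plan.steps.append("drafted order set")
    plan.steps.append("notified covering physician")
    plan.steps.append("scheduled follow-up monitoring")
    return plan


def approve(plan: DraftPlan, physician: str) -> DraftPlan:
    """The physician remains the decision-maker: orders need explicit approval."""
    plan.approved_by = physician
    return plan


plan = run_agent(
    "pt-001", 0.91,
    lab_store={"pt-001": {"lab_a": 1.0, "lab_b": 2.0}},
    protocols={"deterioration": ["placeholder order 1", "placeholder order 2"]},
)
print(plan.steps, plan.approved_by)
```

Keeping approval as a separate, explicit step is one way to encode the principle that the agent handles orchestration while a clinician retains the decision.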

What is AI bias in healthcare and how does it happen?

AI bias in healthcare occurs when algorithms produce systematically worse performance for specific patient subgroups based on race, gender, age, or socioeconomic status. It happens structurally: AI models learn patterns from historical data, and historical medical data encodes existing disparities in care, diagnosis, and documentation. A model trained primarily on academic medical center data may perform poorly on rural patient populations. Bias can emerge at data collection, labeling, model design, or evaluation stages — and may not be visible in aggregate accuracy metrics. Detecting it requires deliberate subgroup analysis across demographic segments.

How can patients find out if AI is involved in their care?

Patients have several avenues for learning about AI use in their healthcare. Asking the care team is the most direct approach. Texas now requires written patient disclosure before AI tools are used in healthcare services (effective January 1, 2026), with more states expected to follow. Reading health system privacy policies often surfaces information about AI tools in use. Patients reviewing notes in patient portals may notice ambient documentation AI’s distinctive formatting or the disclosure headers some systems include. Patients who want more detail can request information about the specific AI tools their providers use and the validation data supporting them.

What are the pros and cons of AI in healthcare?

The clearest benefits of AI in healthcare are operational: documented reductions in documentation burden (60–90 minutes per physician per day recovered), measurable early warning improvements for sepsis and deterioration, revenue cycle accuracy gains of 20–45%, and expanded access through AI-assisted scheduling and patient navigation. The genuine risks are equally specific: algorithmic bias producing worse performance for underrepresented patient populations; data privacy exposure from inadequately vetted AI vendors who lack HIPAA BAAs; over-reliance on AI recommendations without appropriate clinical judgment; and cybersecurity vulnerabilities in AI infrastructure. The honest assessment is that AI in healthcare isn’t categorically good or bad — it’s a set of tools whose value depends entirely on how thoughtfully they’re selected, implemented, and governed. Well-governed deployments in imaging and documentation have strong evidence. Poorly governed deployments in high-stakes clinical decisions without bias testing create patient safety risks.

How much money can AI save hospitals?

Verifiable, published cost savings from healthcare AI cluster around three categories: ambient AI documentation saves approximately $300,000 annually per physician in recovered capacity value; prior authorization automation delivers approximately 5x ROI within 3 months; and predictive analytics programs generate $1.5 to $3 million in annual avoided costs for a typical 100-bed hospital by reducing sepsis mortality, ICU length-of-stay, and avoidable readmissions. The American Hospital Association estimates total AI potential savings of $150 billion annually across the US healthcare system, but that figure encompasses payer, pharma, and patient savings — not exclusively hospital budget impact. Health systems running value-based care contracts capture more of these savings than those operating primarily under fee-for-service arrangements, where AI efficiency improvements sometimes benefit payers rather than the provider investing in the technology.

Can AI detect depression or mental health conditions?

AI mental health detection tools have demonstrated meaningful capability in controlled research settings: NLP models analyzing language patterns in speech and text data identify markers associated with depression, anxiety, and early psychosis with accuracy measurable against clinical interview-based diagnosis. AI chatbots like Woebot and Wysa show statistically significant reductions in self-reported depressive symptoms in published studies, particularly for mild-to-moderate conditions. The important boundary is severity: AI detection tools work best as screening and monitoring supplements for populations with mild-to-moderate conditions, not as diagnostic replacements for licensed mental health professionals evaluating complex presentations. A January 2026 study found that heavy reliance on AI chatbots — without human therapeutic relationships — was associated with worse outcomes for some users, suggesting that AI mental health tools should be designed as access bridges to professional care, not endpoints in themselves.

Who is legally responsible for AI errors in medical diagnosis?

Legal liability for healthcare AI errors is still being defined through regulation, case law, and evolving FDA guidance. The current consensus is that the healthcare organization deploying the AI bears primary responsibility for decisions made using it: “the algorithm decided” is not a recognized legal defense for clinical negligence. FDA-cleared AI medical devices come with manufacturer accountability for the specific cleared indication, but liability for how the tool is deployed, whether clinicians use it appropriately, and whether it has been validated against the actual patient population served sits squarely with the deploying health system. Agentic AI, where autonomous systems take action rather than just recommend, raises new liability questions that most health systems’ legal and compliance teams have not yet fully addressed. Organizations deploying autonomous clinical AI should explicitly address accountability in their governance policies before incidents occur.

What are digital twins in healthcare?

A patient digital twin is a continuously updated virtual simulation model of an individual patient, built from their electronic health record, genomic profile, imaging data, wearable sensor streams, and medication history. Rather than testing treatment approaches on the actual patient, clinicians can simulate interventions — drug regimens, surgical approaches, device settings — on the virtual model first. Cardiac digital twins built from MRI and electrophysiology data allow arrhythmia ablation procedures to be planned with patient-specific anatomical maps. Tumor digital twins are being used at leading cancer centers to simulate chemotherapy responses across multiple potential regimens before treatment selection. Most digital twin clinical applications remain in research or early-pilot status as of 2026, with full clinical-scale deployment more of a 2028–2032 trajectory. The infrastructure investment in data interoperability, real-time sensor integration, and AI governance that health systems make now directly affects their digital twin readiness in that window.

Conclusion

AI in healthcare isn’t a future state — it’s the operational reality of health systems that serve millions of patients daily. The organizations making progress aren’t necessarily those with the largest technology budgets; they’re the ones that started with specific problems, built governance alongside implementation, and treated AI as a clinical capability requiring active stewardship rather than a software purchase delivering value automatically.

The arrival of agentic AI systems capable of autonomous clinical orchestration raises the stakes on governance, accountability, and equity monitoring. Health systems that build responsible AI frameworks now, before agentic deployments scale, will have a structural advantage over those that retrofit compliance after incidents. For leaders navigating both institutional AI adoption and the evolving regulatory environment, understanding global AI regulation and what it means for healthcare is an increasingly essential part of strategic planning, not just a compliance exercise.

The evidence is clear that AI improves specific clinical outcomes when deployed thoughtfully. The remaining work is doing the thoughtful deployment at the scale that patients deserve.