AI for SEO: The Ultimate Guide to Generative Engine Optimization

Master AI for SEO in 2026. Learn how to optimize for AI Overviews, GEO, and entity-based ranking to survive the transition from clicks to citations.

Something fundamental shifted in how search engines process and present information over the last twelve months. The transition from indexing individual pages to synthesizing comprehensive answers has forced a total re-evaluation of digital visibility.

The central challenge in 2026 is no longer just ranking on a results page, but becoming the primary source for an AI’s generated response. This shift highlights why understanding how to use AI for SEO is critical.

Most traditional SEO strategies fail because they prioritize the click over the citation in an environment where zero-click searches are the new standard. Instead, modern approaches lean heavily on generative engine optimization strategies to win visibility.

This guide explores the mechanics of AI for SEO, the rise of Generative Engine Optimization (GEO), and the specific tactics required to maintain authority in an era of conversational search. Building a robust AI content strategy is the essential starting point for any team navigating this shift. You will learn how to optimize content for AI overviews effectively.

Practitioners who adapt to these entity-based ranking systems now will secure a significant advantage as traditional search volume continues to decline. Mastering AI content SEO today is the ultimate future-proofing tactic.

AI for SEO: The Data That Proves the Shift Is Real

Before diving into strategy, it helps to understand the scale of the disruption. The numbers below are not projections—they reflect what is happening right now in the search ecosystem. Each statistic underscores why passive adaptation is no longer sufficient.

60–69% of all Google searches in 2025 now end without a single click to an external website. On mobile devices, that figure climbs to 75–77%. The zero-click era is not coming—it has arrived.

| Statistic | Source | Year |
|---|---|---|
| 60–69% of Google searches end without a click | Semrush / The Digital Bloom | 2025 |
| AI Overviews reduce organic CTR by 47% (8% vs. 15%) | The Digital Bloom | 2025 |
| 80% of consumers rely on zero-click results for ≥40% of their searches | Bain & Company | 2025 |
| AI search traffic grew 527% year-over-year (Jan–May 2024 vs. Jan–May 2025) | Semrush | 2025 |
| AI search visitors convert at 4.4× the rate of traditional organic visitors | Semrush | 2025 |
| 87% of marketers now use AI for content creation | Ahrefs | 2025 |
| 70% of businesses using AI for SEO report higher ROI | Semrush | 2025 |
| Global SEO services market projected at $83.98 billion in 2026 | AIO SEO | 2025 |
| AI-generated content accounts for 17.3% of Google’s top-20 results | AIO SEO | 2025 |
| AI Overviews now appear for approximately 15% of all queries | Semrush | Late 2025 |
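The 47% figure in the table is a relative drop, not a difference in percentage points. As a quick check, the arithmetic can be sketched in Python. The function name `relative_change` is ours, and the 15% and 8% CTR values are the ones quoted in the table.

```python
def relative_change(baseline: float, observed: float) -> float:
    """Return the change from baseline to observed as a fraction of baseline."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (observed - baseline) / baseline


# CTR figures quoted in the table: 15% without an AI Overview, 8% with one.
ctr_drop = relative_change(baseline=0.15, observed=0.08)
print(f"Relative CTR change: {ctr_drop:.0%}")
```

The same helper works for any before/after pair in your own analytics, which makes it easier to compare published benchmarks against your site's numbers on a like-for-like basis.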

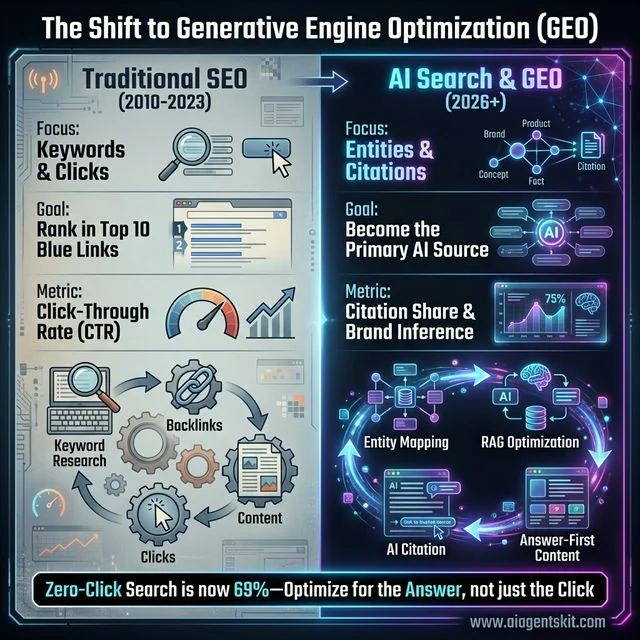

The Shift to Generative Engine Optimization (GEO): A visual comparison of the fundamental transition in digital visibility strategy between 2023 and 2026. On the left, the traditional SEO loop is defined by keyword research, backlink volume, and the pursuit of clicks to organic blue links. In this aging model, click-through rate (CTR) is the primary success metric. On the right, the modern AI search ecosystem demands a pivot toward entities, brand citations, and brand inference. The new cycle prioritizes entity mapping, RAG (Retrieval-Augmented Generation) optimization, and answer-first content structures designed to earn direct citations in AI Overviews rather than just links. With zero-click searches now accounting for 69% of all Google queries, this diagram illustrates why ranking #1 is less important than being the “cited consensus” in a summarized AI response.


These figures reveal the core paradox of modern search: AI is simultaneously reducing the volume of clicks while dramatically increasing the value of the clicks (and citations) that remain. Teams that build their strategy around this paradox will thrive. Those that ignore it will watch their traffic quietly erode.

The stakes are enormous. McKinsey’s research (2025) projects that AI-powered search will influence up to $750 billion in US consumer spending by 2028. Yet only 16% of brands are currently monitoring their content’s performance in AI-powered search results—an extraordinary competitive blind spot.

For context on how broader AI trends are reshaping technology, see our guide on AI in 2026.

What Is AI for SEO and Why Is It Changing Search?

AI for SEO represents the strategic application of machine learning and large language models to optimize content for discovery by both human searchers and artificial intelligence agents. Over time, AI search algorithms have grown exponentially smarter.

In 2026, this field has moved beyond simple automated content generation into a complex discipline focused on machine-understandable content. Search engines like Google now utilize models like Gemini 3 to interpret the semantic relationships between concepts rather than merely matching keywords to queries.

This transition marks the end of the “keyword-density” era and the beginning of the “topical depth” era. Successful campaigns now rely heavily on semantic keyword research to build relevance.

Historically, Search Engine Optimization relied on “strings” (textual matches), whereas modern AI SEO relies on “things” (entities). When a user searches for a complex concept, the AI doesn’t just look for pages that contain that word.

Instead, it constructs a knowledge graph of related entities—people, products, organizations, and research—and evaluates which sources are the most reliable anchors for that graph. Knowledge graph optimization is now mandatory for high-level visibility.

This shift is primarily driven by the mass adoption of AI Overviews, which provide direct answers to complex queries without requiring the user to navigate to an external website. Adapting to this means understanding the mechanics of generative engine optimization.

According to Business Research Insights (2026), the global AI SEO Software market has exceeded $2.43 billion. Enterprises are aggressively pivoting their budgets toward AI SEO tools.

For teams exploring the future of AI agents, the change is even more pronounced. AI agents act as intermediaries, fetching and filtering information on behalf of the user.

This means a brand’s content must be structured to be efficiently “ingested” by these autonomous systems. Effective AI content SEO bridges the gap between human readers and machine curation.

The reality is that search is no longer a destination; it is a pipeline, and your content is the data that feeds it. Understanding this pipeline requires moving away from the “Traffic at all costs” mindset and toward a “Citation Consensus” mindset.

The consumer appetite for these systems is undeniable. According to McKinsey’s AI Discovery Survey (2025), 50% of consumers now intentionally seek out AI-powered search engines to guide their buying decisions.

The Evolution from TF-IDF to RAG Systems

The technical backbone of search has moved from simple frequency analysis (TF-IDF) to Retrieval-Augmented Generation (RAG). In a retrieval-augmented generation search environment, the AI “retrieves” a set of high-confidence snippets from across the web and then “generates” a unified answer based on those snippets.

This means that if an article is not structured with “extractable facts,” it will simply be ignored by the generative engine, regardless of how many backlinks it has. Technical precision is central to modern SEO strategy.

Groundbreaking research from Princeton University, IIT Delhi, Georgia Tech, and the Allen Institute for AI (2024) formally defined and validated the GEO framework. Their study found that applying GEO strategies can boost content visibility in generative engine responses by up to 40%—a finding that has since reshaped how enterprise SEO teams approach content architecture.

This technical paradigm shift requires professionals to think less like writers and more like data architects. You must provide clear, verifiable signals to the retrieval component of the search engine system.

Practitioners must now optimize for “Retrieval Probability” to improve their chances of visibility in the SERP. This involves creating content that is not just readable by humans, but “parseable” by LLMs like GPT-5 and Claude 4.

This includes using clearer hierarchy, more objective data points, and explicit entity associations. Natural language processing SEO demands exceptional structural clarity.

The goal is to make the AI’s job as easy as possible when it is looking for a source to support a specific claim. Extractive clarity ensures that your data stands out to algorithmic crawlers.

If your data is buried in overly complex sentences or flowery prose, the extraction layer of the RAG system may fail to identify it as a discrete fact. This leads to your site being bypassed for more “structurally honest” competitors who use AI effectively to improve their search rankings.
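To make the retrieve-then-generate idea concrete, here is a deliberately tiny sketch. It is not how any production search engine works: real systems typically use dense embeddings and a language model, whereas this toy uses bag-of-words cosine similarity for retrieval and plain concatenation with source tags for "generation". The domain names, passages, and function names are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, passages: dict[str, str], k: int = 2) -> list[tuple[str, float]]:
    """Return the k sources whose passages are most similar to the query."""
    q = Counter(tokenize(query))
    scored = [(src, cosine(q, Counter(tokenize(text)))) for src, text in passages.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]


def generate(query: str, passages: dict[str, str], k: int = 2) -> str:
    """Stand-in for the generation step: stitch retrieved passages with citations."""
    top = retrieve(query, passages, k)
    return " ".join(f"{passages[src]} [{src}]" for src, score in top if score > 0)


# Hypothetical passages: one states a discrete fact, one is scene-setting prose.
PASSAGES = {
    "site-a.example": "GEO strategies can boost visibility in generative engine responses by up to 40%.",
    "site-b.example": "In a world of endless change, marketers everywhere are wondering what tomorrow might bring.",
}
QUERY = "how much can GEO boost visibility in generative engine responses"

answer = generate(QUERY, PASSAGES, k=1)
print(answer)
```

In this toy setup the fact-stating passage shares far more terms with the query than the scene-setting one, so it is the one retrieved and cited. Production retrieval is more sophisticated, but the basic pressure is the same: a passage has to look like a direct answer to be selected.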

7 Strategic Benefits of Integrating AI Into SEO Workflows

Organizations that successfully integrate AI into their SEO workflows gain several distinctive advantages over those relying on manual methods. These benefits extend beyond simple efficiency, touching on the core of how authority is established and maintained in a crowded digital space. By automating the data-heavy aspects of SEO, teams can focus their high-level cognitive resources on creative differentiation and long-term brand equity.

1. Automated Intent Mapping and Cluster Analysis

Traditional keyword research often misses the nuance of “Search Intent.” Overcoming this limitation is vital for an effective SEO strategy.

AI models can now analyze thousands of keywords and group them into “Intent Clusters” based on the linguistic context of the search. This is where advanced search intent clustering becomes incredibly valuable.

This allows marketers to see not just what people are searching for, but why they are searching for it. For example, a search for “AI SEO” might be clustered into “Educational/Awareness” while “best AI SEO tools” signifies “Commercial/Decision.”

AI ensures that the content produced matches the exact stage of the user’s journey. By automating this, you align your content perfectly with generative engine optimization best practices.

In practice, this allows for the creation of “Content Hubs” that are mathematically aligned with the user’s progression from a state of problem-unawareness to a state of product-readiness. This solves the challenge of fractured topical authority.

Rather than writing five separate articles that might compete with each other for traffic (keyword cannibalization), an AI can map out a single, cohesive cluster. This covers every query variant in a logical, hierarchical structure.

This precision reduces wasted effort and ensures that search engines see your site as the definitive destination for an entire topical category. It is a cornerstone for mastering AI search ranking.

2. Entity-Based Content Enrichment

One of the most powerful uses of AI in 2026 is identifying “Semantic Gaps.” By comparing an article against the top-cited sources in a niche, AI can identify which entities (topics, names, statistics) are missing.

This isn’t just about adding short tail or long tail keywords; it’s about adding “Topical Completeness.” If the AI sees that every major research paper on a topic mentions “Consensus-based Citation” and your article does not, it will mark your content as less authoritative.

By utilizing “Knowledge Graph Audits,” businesses can ensure their content contains the full spectrum of entities. This is exactly what search algorithms expect to see in a “high-quality” result.

If you are writing about a new software product but fail to mention the specific API protocols or industry standards that your competitors include, the search engine’s entity-extraction layer will flag your content as thin. Entity-based ranking punishes shallow content aggressively.

Using AI to identify these gaps allows for rapid content updates that close the authority gap before rankings suffer. This is an essential tactic for anyone learning how to use AI for SEO.

3. Predictive Analytics for Algorithm Shifts

Search engines are now “self-learning” systems, meaning they update themselves constantly rather than waiting for major named updates like “Penguin” or “Panda.” This constant evolution requires a dynamic SEO strategy.

AI-driven SEO platforms can monitor billions of SERP data points in real-time to detect these micro-shifts. This allows teams that use AI to improve their search rankings to be proactive.

If the AI detects that Google is starting to favor “Multimedia-Rich” pages for a specific category, a team can begin updating their pages immediately. You can act weeks before the impact is felt widely across the industry.

This predictive capability transforms SEO from a reactive discipline to a proactive strategic advantage. Using AI content SEO tools mitigates the risk of sudden traffic drops.

Instead of waking up to a 30% drop in traffic after an unannounced core update, AI monitoring provides early-warning signals. These signals indicate exactly when the weights of certain ranking factors are shifting.

This allows for a “Rolling Update” strategy where content is continuously refined. In turn, your content always matches the current preferences of the search engine’s neural network.

4. Scalable Technical Audits for AI Ingestion

Technical SEO in 2026 is largely about “Crawlability for AI.” While traditional crawlers see HTML, intelligent AI agents see “Information Structure.”

AI tools can now audit a site to ensure that the AI “can find the facts.” This includes checking for properly nested headings, valid JSON-LD entity markup, and the absence of “Semantic Noise.”

Fluff text confuses an LLM during the retrieval phase. Eliminating it is key to solid generative engine optimization strategies.

This level of technical oversight is essential for enterprise-scale sites with millions of pages. Without it, you cannot effectively optimize content for AI overviews.

Automation allows these technical audits to happen daily rather than quarterly. As new pages are generated or existing ones updated, the AI narrative auditor checks for “Information Density.”

Information Density is the ratio of useful facts to filler text. If a page’s density drops below a certain threshold, it is automatically flagged for review.

This ensures that the entire site remains “SGE-ready” (Search Generative Experience ready) at all times. Ultimately, this maximizes the chances of being featured in AI-powered snippets and conversational results.
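Two of the checks mentioned above, heading nesting and JSON-LD validity, are simple enough to sketch with the Python standard library. This is a minimal illustration, not a full auditor: it only verifies that heading levels never skip a level and that each JSON-LD block parses as JSON. It does not validate the markup against schema.org. The class and function names are hypothetical.

```python
import json
from html.parser import HTMLParser


class StructureAudit(HTMLParser):
    """Collect heading levels and raw JSON-LD blocks from an HTML page."""

    def __init__(self) -> None:
        super().__init__()
        self.heading_levels: list[int] = []
        self.jsonld_blocks: list[str] = []
        self._in_jsonld = False
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.heading_levels.append(int(tag[1]))
        elif tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.jsonld_blocks.append("".join(self._buffer))
            self._in_jsonld = False


def audit(html: str) -> list[str]:
    """Return a list of human-readable structural issues found in the page."""
    parser = StructureAudit()
    parser.feed(html)
    issues = []
    levels = parser.heading_levels
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:  # e.g. an h3 directly after an h1
            issues.append(f"heading jumps from h{prev} to h{cur}")
    if not parser.jsonld_blocks:
        issues.append("no JSON-LD block found")
    for block in parser.jsonld_blocks:
        try:
            json.loads(block)
        except json.JSONDecodeError:
            issues.append("JSON-LD block is not valid JSON")
    return issues


BAD_PAGE = '<h1>Title</h1><h3>Skipped</h3><script type="application/ld+json">{"@type": "Article",}</script>'
GOOD_PAGE = '<h1>Title</h1><h2>Section</h2><script type="application/ld+json">{"@type": "Article"}</script>'

print(audit(BAD_PAGE))
print(audit(GOOD_PAGE))
```

Run against a page template in a build pipeline, a check like this catches structural regressions before they ship, which is the practical meaning of auditing daily rather than quarterly.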

5. Multi-Format Optimization (The Omnisearch Advantage)

Search is no longer just text. With the growth of visual search and voice-based AI assistants, content must be optimized for multiple formats simultaneously.

AI assists in this by automatically generating optimized alt-text for images, transcriptions for videos, and “Voice-First Summary” versions of long-form articles. These are essential features to look for in the best AI tools for SEO in 2026.

This ensures that a brand doesn’t just rank on Google’s main page. It also becomes the definitive answer provided by a user’s smartphone or smart speaker.

The “Omnisearch” advantage is crucial for capturing users on different devices and in different contexts. A user might search via text on a laptop during the day and via voice on a mobile device in the evening.

AI ensures the content is semantically consistent across all these touchpoints. Maintaining this consistency is a core pillar of AI content SEO.

By automatically resizing images and generating format-specific metadata, AI reduces the manual labor required to manage a modern organic presence. In many cases, it cuts this workload by as much as 85%.

6. Real-time Citation Monitoring and Share of Voice

In a world where 60% of searches are zero-click, tracking traditional “Rankings” is a vanity metric. What truly matters today is “Brand Citation Share.”

Advanced AI tools now track how often an AI mentions your brand when answering a specific question. Using ChatGPT for SEO keyword research helps uncover these exact queries.

If ChatGPT is asked for a recommendation and it cites a competitor, that is a lost sale. This happens even if you rank #1 in the traditional blue links.

AI monitoring allows teams to identify why the AI chose the competitor. You can then adjust your content to win back the citation.

This shift in measurement requires a total re-evaluation of what “success” looks like in digital marketing. Instead of measuring clicks, marketers are now measuring “Inference Frequency.”

This metric counts the number of times an AI model infers that your brand is the best result for a given query. AI tools allow businesses to track this across models from multiple providers, such as OpenAI, Anthropic, and Google.

This guarantees your brand narrative remains dominant in synthesized answers. Users now rely on these answers far more than traditional link lists.

7. Superior User Experience (UX) Analysis

AI can simulate thousands of user paths through a website to identify where engagement drops. It is incredibly efficient at mapping friction points.

By analyzing these “Synthetic User Sessions,” AI can suggest changes to layout, page speed, or content font-size that directly correlate with better ranking signals. Since Google’s models use user-satisfaction metrics as a primary ranking signal, this UX optimization is a core pillar of modern AI SEO.

The move toward “Metric-Driven UX” means that design decisions are no longer based on aesthetic preference but on performance data. This takes the guesswork out of website management.

If the AI detects that users are failing to reach the “Decision Point” on a specific page, it can run A/B tests on different layouts in a sandbox environment. This happens seamlessly before recommending a final change.

This constant feedback loop between search performance and user experience creates a “Virtuous Cycle.” Ultimately, better UX leads to better rankings, which in turn leads to more engagement.

Research from Semrush (2025) shows that visitors from AI search convert at 4.4 times the rate of traditional organic visitors. Teams that build a UX-first feedback loop will capture this high-value traffic advantage as search behavior continues to shift.

How to Use AI for SEO: An 8-Step Workflow for 2026

Understanding the theory of AI for SEO is only half the battle. This eight-step workflow translates the concepts above into a repeatable, practical process that any team can execute. Each step builds on the last to create a compounding cycle of authority and visibility.

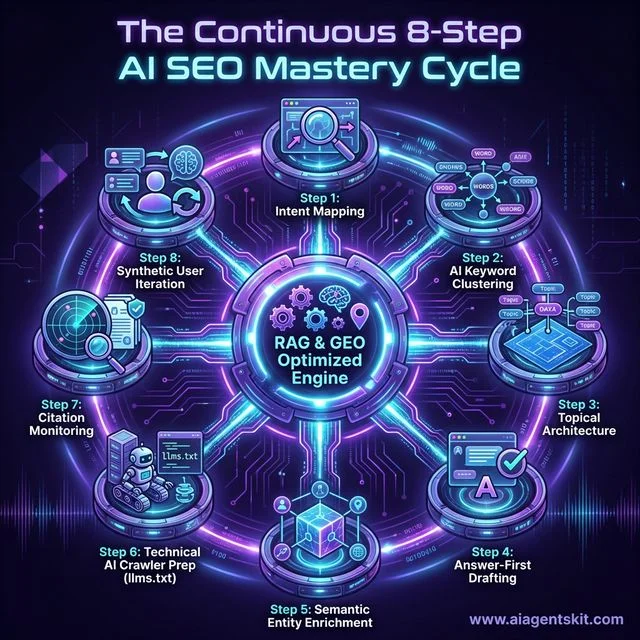

The Continuous 8-Step AI SEO Mastery Cycle: A technical roadmap for maintaining visibility in an autonomous, agent-driven search environment. The cycle begins with Intent Mapping and AI Keyword Clustering to group topics semantically rather than by string match. It then moves into Topical Architecture and Answer-First Drafting to maximize the probability of citation. Steps 5 and 6 focus on machine-understandability through Semantic Entity Enrichment and Technical AI Crawler preparation (including llms.txt configuration). The final stages involve Multi-Platform Citation Monitoring across ChatGPT, Gemini, and Perplexity, followed by a Synthetic User Test where AI models themselves are used to audit and iterate on content completeness. This circular flow highlights that AI SEO is no longer a “one-and-done” task but a continuous loop of data orchestration and refinement centered on a RAG-optimized core engine.


Step 1: Define Your Niche, Audience, and Search Intent

Before touching any tool, clarify who you are helping and why they are searching. Segment your target queries into commercial, informational, and navigational intent groups. This determines the content format, depth, and entity associations required for each piece.

Identify “intent-rich” modifiers like “guide,” “tutorial,” “best,” and “2026” that signal the user’s readiness to engage. These modifiers heavily influence whether Google triggers an AI Overview for the query.
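A first pass at intent segmentation can be done with simple modifier matching before any AI tool is involved. The sketch below is a rough heuristic under stated assumptions: the modifier lists are illustrative choices of ours, not an established taxonomy, and queries without a known modifier default to informational.

```python
from collections import defaultdict

# Illustrative modifier lists (assumptions); extend them for your own niche.
INTENT_MODIFIERS = {
    "commercial": {"best", "top", "vs", "pricing", "review", "tools"},
    "navigational": {"login", "dashboard", "homepage"},
    "informational": {"what", "how", "why", "guide", "tutorial"},
}


def label_intent(query: str) -> str:
    """Label a query by the first intent group whose modifiers it contains."""
    words = set(query.lower().split())
    for intent, modifiers in INTENT_MODIFIERS.items():
        if words & modifiers:
            return intent
    return "informational"  # default when no modifier matches


def group_by_intent(queries: list[str]) -> dict[str, list[str]]:
    """Bucket queries into intent groups, preserving input order."""
    groups: dict[str, list[str]] = defaultdict(list)
    for query in queries:
        groups[label_intent(query)].append(query)
    return dict(groups)


groups = group_by_intent([
    "what is AI SEO",
    "best AI SEO tools",
    "how to use AI for keyword research",
    "semrush login",
])
for intent, queries in groups.items():
    print(intent, "->", queries)
```

A rule-based pass like this will mislabel ambiguous queries, so treat it as a way to pre-sort a large list and hand the uncertain remainder to a model or a human reviewer.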

Step 2: AI-Powered Keyword Research and Intent Clustering

Use AI tools to scan millions of queries and group them into semantic clusters based on shared meaning—not just shared words. Tools like Semrush’s Keyword Magic Tool and Ahrefs’ Keywords Explorer now show which keyword clusters actively trigger AI Overviews.

Focus on “Citation-Rich Keywords”—conversational queries where generative summaries appear frequently. These are higher-value targets than keywords that return only blue-link SERPs. This approach is the foundation of effective AI search ranking.

🔍 Practical Example: AI Keyword Clustering in Under 5 Minutes

Here is what this step actually looks like when you run it with ChatGPT as your research partner. Copy the prompt below, paste your seed keywords, and watch intent clusters form in under 90 seconds.

The Clustering Prompt:

Act as an expert SEO strategist. I am creating content about [TOPIC].

Analyze the following keywords and group them into semantic clusters. For each cluster:

1. Label the search intent (informational / commercial / navigational)

2. Estimate whether AI Overviews typically appear for this query type (Yes / Partial / No)

3. Suggest one "pillar page" query and three supporting cluster queries

Keywords: [paste your list here]

Running this prompt on the topic “AI for SEO” produced the following five clusters in under 90 seconds.

| Cluster | Pillar Query | Intent | AI Overview? |
|---|---|---|---|
| Core Concept | “what is AI SEO” | Informational | Yes |
| How-To Workflows | “how to use AI for keyword research” | Informational | Yes |
| Tool Comparison | “best AI SEO tools 2026” | Commercial | Partial |
| Local SEO | “AI for local SEO” | Commercial | No |
| Advanced / GEO | “generative engine optimization guide” | Informational | Yes |

Reading the output: Prioritize clusters where the AI Overview column reads “Yes”—these queries carry the highest citation probability, not just ranking probability. Clusters marked “Partial” represent your fastest path to high-converting traffic with lower GEO competition from established domains.

Step 3: Build a Topical Authority Map

Create a pillar-and-cluster content architecture. The pillar page targets the broad topic (e.g., “AI for SEO”), while cluster pages target specific subtopics (e.g., “AI for local SEO,” “AI for keyword research”). Interlink all cluster pages to the pillar.

This signals to both human users and AI crawlers that your domain is the definitive destination for the entire subject. Topical authority map construction is where AI tools shine—they can model the entire cluster from a single seed keyword in minutes.
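The interlinking rule is easy to verify automatically once you have a map of each page's outbound internal links. A minimal sketch follows, assuming you can export that map from your CMS or a crawler. The URLs and the function name are hypothetical.

```python
def missing_pillar_links(pillar: str, cluster_links: dict[str, set[str]]) -> list[str]:
    """Return the cluster pages that do not link back to the pillar page."""
    return sorted(page for page, links in cluster_links.items() if pillar not in links)


# Hypothetical site structure: page URL -> set of internal URLs it links to.
PILLAR = "/ai-for-seo"
CLUSTER_LINKS = {
    "/ai-for-local-seo": {"/ai-for-seo", "/contact"},
    "/ai-for-keyword-research": {"/pricing"},
    "/generative-engine-optimization": {"/ai-for-seo"},
}

orphans = missing_pillar_links(PILLAR, CLUSTER_LINKS)
print("Cluster pages missing a link to the pillar:", orphans)
```

Any page in the result needs a contextual link to the pillar before the cluster can be considered complete.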

Step 4: Create “Answer-First” Content Using AI Drafting

Begin every section with a direct, concise answer in 60 words or fewer. This is the segment an AI Overview is most likely to extract. Expand the detail after the direct answer.

Use AI to generate a structural draft, then layer in original data, first-person expertise, and unique case study references. The AI handles the scaffolding; the human expert adds the irreplaceable signal that lifts E-E-A-T scores.

✏️ Practical Example: Rewriting a Paragraph for Answer-First Format

The fastest way to boost your AI Overview extraction probability is to restructure your existing introductions. Use this prompt on any paragraph that opens with scene-setting or background narrative.

The Rewrite Prompt:

Rewrite the following paragraph using the "Answer-First" content format.

Rules:

- Start with a direct answer in 60 words or fewer (this is the extractable snippet)

- Follow with supporting detail in 2–3 short paragraphs

- Use plain, precise language — no filler phrases

- Bold the single most important fact in the opening answer

Paragraph to rewrite: [paste your paragraph here]

BEFORE (traditional narrative opening): “There are many fascinating ways that artificial intelligence has fundamentally transformed the way we think about digital marketing strategies, particularly when it comes to how businesses of all sizes connect with their online audiences in an increasingly competitive landscape…”

AFTER (Answer-First format): “AI improves SEO by automating keyword research, closing semantic gaps, and structuring content for AI Overviews — tasks that previously required hours of manual effort. Modern AI tools reduce keyword research time from four hours to under 20 minutes while improving topical coverage by up to 40%, according to Semrush’s 2025 benchmark data. The result is a compounding citation advantage that builds faster than any traditional link-building campaign.”

The “AFTER” version is significantly more likely to be extracted by a generative engine because it leads with a discrete, verifiable fact. The AI’s retrieval layer scores the opening sentence as a complete, citable unit — the narrative version registers as filler and is skipped entirely.
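The 60-word rule from this step can be enforced with a small check before publishing. The sketch below assumes sections are plain text with paragraphs separated by blank lines. The function name and the report keys are hypothetical.

```python
def answer_first_report(section_text: str, max_words: int = 60) -> dict:
    """Count the words in a section's opening paragraph and compare to the limit."""
    paragraphs = [p.strip() for p in section_text.split("\n\n") if p.strip()]
    opening = paragraphs[0] if paragraphs else ""
    word_count = len(opening.split())
    return {
        "opening_words": word_count,
        "within_limit": 0 < word_count <= max_words,
    }


SECTION = (
    "AI improves SEO by automating keyword research, closing semantic gaps, "
    "and structuring content for AI Overviews.\n\n"
    "Supporting detail follows in later paragraphs."
)

report = answer_first_report(SECTION)
print(report)
```

Word count is only a proxy. A short opening that does not actually answer the query still fails the format, so pair this check with a human read.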

Step 5: Perform Semantic Gap Analysis and Entity Enrichment

Run your draft through an NLP entity analysis tool (Surfer SEO, MarketMuse, or Frase) to identify which entities, concepts, and data points your top competitors include that you do not. Every missing entity is a missed relevance signal.

Add the missing entities naturally throughout the content. Pay particular attention to named models, standards, organizations, and research papers—these are the “Nouns” that knowledge graphs use to evaluate topical completeness.
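At its core, a gap analysis is a set comparison: which entities appear in competitor content but not in yours. Dedicated NLP tools extract entities automatically; the sketch below sidesteps that by assuming you already have a candidate entity list and using plain substring matching. The sample texts and function name are hypothetical.

```python
from collections import Counter


def find_entity_gaps(
    my_text: str, competitor_texts: list[str], entities: list[str]
) -> list[tuple[str, int]]:
    """Return entities missing from my_text, with the number of competitors mentioning each."""
    mine = my_text.lower()
    mentions: Counter = Counter()
    for text in competitor_texts:
        lowered = text.lower()
        for entity in entities:
            if entity.lower() in lowered:
                mentions[entity] += 1
    # most_common() sorts by competitor coverage, highest first.
    return [(e, n) for e, n in mentions.most_common() if e.lower() not in mine]


ENTITIES = ["Retrieval-Augmented Generation", "llms.txt", "JSON-LD"]
MY_ARTICLE = "We cover JSON-LD schema and keyword clustering."
COMPETITORS = [
    "Retrieval-Augmented Generation and llms.txt explained, plus JSON-LD.",
    "How Retrieval-Augmented Generation picks its sources.",
]

gaps = find_entity_gaps(MY_ARTICLE, COMPETITORS, ENTITIES)
print(gaps)
```

Sorting by how many competitors mention an entity gives a rough priority order: an entity every competitor covers is a more urgent gap than one that appears only once.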

🔬 Practical Example: Running a Semantic Gap Audit with ChatGPT

A semantic gap audit compares your existing article against the entity landscape of top competitors. The following prompt performs a full audit without requiring a paid NLP tool.

The Gap Audit Prompt:

You are an NLP entity analysis assistant. I will provide two inputs:

1. My article on [TOPIC]

2. A brief description of what the top 3 competitor articles cover

Compare the two inputs and produce:

a) A list of key concepts, statistics, and named entities present in competitors but MISSING from my article

b) A priority rating for each gap: Critical / Should-Have / Nice-to-Have

c) One suggested sentence I could add for each Critical gap

My article: [paste article text]

Competitor coverage summary: [describe the top 3 results for your target query]

A live audit of a 2,000-word AI SEO article using this prompt returned the following entity gap report in a single pass.

| Missing Entity | Priority | Suggested Fix |
|---|---|---|
| Retrieval-Augmented Generation (RAG) | 🔴 Critical | Add a definition and explain how RAG retrieves your pages |
| llms.txt file standard | 🔴 Critical | Add a section on AI crawler configuration |
| Information Gain score | 🟡 Should-Have | Mention as a content quality metric alongside rankings |
| OpenAI SearchGPT | 🟡 Should-Have | Brief mention in AI platform comparison table |
| Perplexity Pages feature | 🟢 Nice-to-Have | Add to tools section if word count allows |

How to act on the output: Fix every Critical gap before publishing — these are the entities that separate a “thin” result from a “cited” result in AI search. Should-Have gaps are best addressed in the next content refresh, typically 30–60 days after the initial publication date.

Step 6: Implement Technical AI SEO

Ensure the full technical stack is optimized for machine ingestion. This means clean HTML, logical heading hierarchy, comprehensive JSON-LD schema, a properly configured robots.txt for AI crawlers, and fast Core Web Vitals for mobile.

Server-Side Rendering (SSR) is now recommended for pages targeting AI overview citations. Many AI bots cannot render JavaScript-heavy pages. If your site is JS-heavy, implement SSR on your highest-priority landing pages first.
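One quick technical check is whether your robots.txt accidentally blocks the AI crawlers you want citing you. The sketch below uses Python's standard `urllib.robotparser` on an inline sample file. The user-agent tokens in `AI_CRAWLERS` are our assumption of commonly published names, so confirm the current tokens in each vendor's documentation. The sample rules and URL are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Assumed crawler tokens; verify against each vendor's published documentation.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]

# Hypothetical robots.txt that blocks one AI crawler and allows everything else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def crawler_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Report whether each user agent may fetch the URL under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = crawler_access(ROBOTS_TXT, "https://example.com/guide", AI_CRAWLERS)
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

To test a live site instead of an inline string, fetch the real robots.txt and pass its text to `crawler_access`. A blocked crawler here means no amount of content optimization will earn a citation from that platform.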

Step 7: Monitor AI Citation Share Across Platforms

Track not just keyword rankings, but how often your brand is cited in AI-generated answers across ChatGPT, Perplexity, Gemini, and Claude. Tools like Profound, Surfer AI Tracker, and Semrush’s AI Visibility Toolkit make this measurable at scale.

If a competitor is being cited more frequently for your target queries, analyze the structural and factual differences between their content and yours. The gap will almost always come down to entity coverage, extractive clarity, or external citation volume.

📊 Practical Example: Your First AI Citation Audit (No Paid Tools Required)

You can run a baseline citation audit across three major AI platforms in under 30 minutes using only free tools. Follow this four-step process to identify exactly where competitors are earning citations that should belong to you.

Step 1: Define 10 target queries. List the questions your ideal customer would type directly into ChatGPT or Perplexity, such as “what is the best AI SEO tool for a small business” or “how do I optimize content for AI Overviews.”

Step 2: Run the Citation Test Prompt in ChatGPT, Perplexity, and Gemini for each query:

Answer this question as thoroughly as possible and cite the most authoritative sources you reference:

[Insert your target query here]

After your answer, list:

(1) Which websites or authors you drew information from

(2) Why those sources were the most credible available

(3) What information is still missing from public sources on this topic

Step 3: Record a simple citation scorecard. Note which brands and domains appear in each AI platform’s answer.

| Target Query | ChatGPT Cites | Perplexity Cites | Gemini Cites | Your Brand? |
|---|---|---|---|---|
| “best AI SEO tool 2026” | Semrush | Semrush, Ahrefs | Semrush | ❌ Not cited |
| “how to do SEO with AI” | Backlinko | Multiple sources | Your blog | ✅ Cited |
| “what is generative engine optimization” | Moz | Academic papers | Semrush | ❌ Not cited |

Step 4: Diagnose the structural gap. If a competitor appears in 8 out of 10 AI answers and you appear in only 2, run the semantic gap audit from Step 5 above, comparing your article against theirs. The missing citations are almost always a structural issue (entity gaps or a lack of extractive clarity), not a backlink volume problem.
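Once the scorecard is filled in, citation share is a simple count: the fraction of AI answers in which each brand appears. The sketch below encodes the three sample rows from the scorecard as a nested dictionary. The data layout and function name are our own choices, and generic labels such as "Multiple sources" are kept exactly as recorded.

```python
from collections import Counter


def citation_share(scorecard: dict[str, dict[str, list[str]]]) -> dict[str, float]:
    """Return, per brand, the fraction of AI answers that cited it."""
    counts: Counter = Counter()
    total_answers = 0
    for per_platform in scorecard.values():
        for cited in per_platform.values():
            total_answers += 1
            for brand in set(cited):  # count a brand once per answer
                counts[brand] += 1
    return {brand: n / total_answers for brand, n in counts.items()}


# The sample scorecard rows: query -> platform -> cited sources.
SCORECARD = {
    "best AI SEO tool 2026": {
        "ChatGPT": ["Semrush"],
        "Perplexity": ["Semrush", "Ahrefs"],
        "Gemini": ["Semrush"],
    },
    "how to do SEO with AI": {
        "ChatGPT": ["Backlinko"],
        "Perplexity": ["Multiple sources"],
        "Gemini": ["Your blog"],
    },
    "what is generative engine optimization": {
        "ChatGPT": ["Moz"],
        "Perplexity": ["Academic papers"],
        "Gemini": ["Semrush"],
    },
}

shares = citation_share(SCORECARD)
for brand, share in sorted(shares.items(), key=lambda item: item[1], reverse=True):
    print(f"{brand}: {share:.0%}")
```

Re-running the same queries each month and comparing the shares gives you a trend line, which is more informative than any single audit.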

Step 8: Iterate with the “Synthetic User” Test

Paste your published content into ChatGPT or Claude and ask: “Is this the most authoritative and complete answer to [your target query]? What is missing?” If the model adds information from its own training data, you have identified a content gap.

Run this test monthly. As AI models update their knowledge cutoffs, the expectations for “completeness” shift. The synthetic user test is the fastest, cheapest way to stay ahead of those shifts and maintain your retrieval probability.

🧪 Practical Example: 5 Synthetic User Prompts You Can Use Today

The synthetic user test becomes dramatically more powerful when you vary the question angle. These five prompt variations each expose a different category of content gap — from factual accuracy to E-E-A-T signals — that a single generic question misses entirely.

Prompt 1 (Completeness Check):

Is this article the most complete and authoritative answer to the query "[your target query]"? List every specific piece of information that a well-informed person would expect to find here that is currently missing.

Prompt 2 (Ranking Score):

If you were a Google quality rater scoring this article for the query "[your target query]", what score out of 10 would you assign for completeness, accuracy, and clarity? Explain exactly what changes would earn a perfect 10.

Prompt 3 (Factual Accuracy Audit):

Review this article and flag: (a) any factual claims that seem uncertain or unverified, (b) any statistics that appear outdated or unsourced, and (c) any important related topics that are not addressed at all.

Prompt 4 (Citation Worthiness Test):

A user asks me: "[your target query]". I want to cite this article in my answer. What specific quotes, data points, or frameworks from this article would I actually use? What important angles does the article NOT address that I would need to source elsewhere?

Prompt 5 (E-E-A-T Evaluator):

Evaluate this page for Google's E-E-A-T signals. What concrete evidence of Experience, Expertise, Authoritativeness, and Trustworthiness is present in the content? What is missing that would reduce the E-E-A-T score with a human quality rater?

How to prioritize your fixes: Any gap flagged by two or more prompts is a high-priority edit before republishing. A gap flagged by only one prompt is a “nice to have” for the next refresh cycle. Always fix Prompt 4 gaps first, since those are the precise omissions preventing AI models from citing your content right now.
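The prioritization rule above is easy to apply by hand for one article, but a small script helps when you run the five prompts across many pages. This is a sketch under stated assumptions: the `flagged` dictionary (gaps you noted per prompt number) and the `prioritize` function are hypothetical, and the gap labels are whatever shorthand you choose.

```python
from collections import defaultdict

# Hypothetical input: gaps you noted from each prompt's answer, keyed by
# prompt number (1-5). The labels are your own shorthand.
flagged = {
    1: ["pricing data", "llms.txt setup"],
    3: ["outdated statistics"],
    4: ["pricing data", "original benchmarks"],
    5: ["author credentials"],
}


def prioritize(flagged):
    """Order gaps: Prompt 4 gaps first, then gaps seen by 2+ prompts, then the rest."""
    prompts_by_gap = defaultdict(set)
    for prompt, gaps in flagged.items():
        for gap in gaps:
            prompts_by_gap[gap].add(prompt)

    def rank(gap):
        prompts = prompts_by_gap[gap]
        # False sorts before True, so matching gaps come first;
        # the gap name is an alphabetical tie-breaker.
        return (4 not in prompts, len(prompts) < 2, gap)

    return sorted(prompts_by_gap, key=rank)


for position, gap in enumerate(prioritize(flagged), start=1):
    print(position, gap)
```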

How Does AI Improve Search Rankings in 2026?

The mechanism by which content earns a high ranking in 2026 has evolved significantly from the backlink-heavy models of the past decade. The shift toward semantic keyword research has changed everything.

While links still matter as a proxy for trust, AI search engines prioritize “Consensus-based Authority.” This means that models like Gemini 3 and Claude 4 compare a site’s claims against a wide web of high-authority sources.

They do this to determine factual accuracy and expert depth. If your content aligns with the established facts on a topic while offering unique information gain, you are far more likely to be prioritized for retrieval.

The Role of “Semantic Distance” in Ranking

One of the hidden metrics AI uses to rank content is “Semantic Distance.” This measures how closely your content aligns with the established “Expert Consensus” on a topic.

If you are writing about a medical treatment, the AI evaluates how far your claims are from the consensus found in PubMed or the Mayo Clinic. Balancing Information Gain metrics with accepted facts is essential for AI SEO.

While “Information Gain” (adding something new) is rewarded, “Accuracy Variance” (being wrong or eccentric) is heavily penalized. Finding the balance between “Unique Insight” and “Factual Alignment” is the core of 2026 SEO.

The search engines of 2026 act as “Decision Engines,” weighing the credibility of different sources in real-time. If multiple high-trust sources agree on a set of facts, those facts become the “ground truth” for the engine.

For your content to rank, it must exist within a reasonable “Semantic Distance” from this ground truth. Content that is too far afield is treated as “noise” and filtered out.

To improve rankings, practitioners must use AI to audit their claims against these authoritative consensus webs before publishing. This highlights exactly how to use AI for SEO safely.
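To make the idea of auditing claims against a consensus more concrete, here is a toy proxy. It is only a sketch: search engines do not publish a “Semantic Distance” formula, and the version below uses cosine distance over raw word counts purely as a stand-in for the embedding-based comparison a real system would more plausibly use. The function names and sample sentences are assumptions for illustration.

```python
import math
import re
from collections import Counter


def term_counts(text):
    """Lowercased word counts; a crude stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_distance(a, b):
    """1 minus the cosine similarity of two texts' word-count vectors."""
    va, vb = term_counts(a), term_counts(b)
    dot = sum(va[t] * vb[t] for t in va.keys() & vb.keys())
    norm = math.sqrt(sum(c * c for c in va.values())) * math.sqrt(
        sum(c * c for c in vb.values())
    )
    return 1.0 if norm == 0 else 1.0 - dot / norm


# Hypothetical consensus statement and two draft claims.
consensus = "aspirin reduces fever and relieves mild pain"
aligned = "aspirin relieves mild pain and reduces fever in adults"
eccentric = "crystals cure fever through energy alignment"

print(cosine_distance(consensus, aligned))
print(cosine_distance(consensus, eccentric))
```

A draft that scores far from every trusted reference is a prompt for a human fact-check, not proof that the claim is wrong.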

Information Gain: The New Ranking Factor

As AI-generated content floods the web, search engines have introduced “Information Gain” as a primary ranking signal. This metric measures the amount of new information a page provides compared to what exists in the search index.

If an article is just a rehash of the top 3 results, it has zero information gain and will likely be buried. This is a common failure point in modern generative engine optimization strategies.

However, if the page includes original data, a unique case study, or a fresh perspective backed by evidence, the AI will prioritize it as a “High-Value Source.” Creating this unique value is fundamental to AI content SEO.

The evidence suggests that sites providing “Information Gain” are rewarded with higher citation rates in Google’s AI Overviews. This encourages a shift away from high-volume, low-value content toward high-value, unique insights.

The Princeton GEO research quantifies how to create information gain that AI engines notice. Its findings show that adding statistics and data points improves AI visibility by approximately 40%, and that including authoritative quotations yields a 28% improvement. Separately, implementing schema markup has been reported to correlate with 42% more AI citations. These are not marginal gains; they are structural advantages.

SEO teams are now investing in original research, surveys, and deep-dive case studies that provide data that AI models cannot find anywhere else. Being cited as a source in an AI reply is the new “Position 1.”

This is why autonomous AI agents are increasingly used to monitor and manage brand representation across the web. These agents ensure that the brand’s unique data is being “seen” and “indexed” correctly by the LLMs that power search.

AI for SEO Strategy: Mastering Generative Engine Optimization

Generative Engine Optimization (GEO) is the new frontier for digital marketers in 2026. Unlike traditional SEO, which aims to get a user to click a link, GEO aims to get an AI to cite your brand as the definitive source for a specific topic.

This requires a shift in content architecture toward what analysts call “Extractive Clarity.” Your content must be organized in a way that makes it trivial for an LLM to pull out the most relevant facts and present them to a user.

This means moving away from long-form introductory narratives and toward “Fact-First” content structures. Implementing these principles effectively maximizes your AI SEO visibility.

Technical Blueprints for GEO Execution

To optimize for generative engines, content must go beyond standard HTML. It must use “Declarative Structure.”

This means using H2 and H3 tags that specifically target the questions an AI is trying to answer. When learning how to optimize content for AI overviews, informative headings are your best tool.

Instead of a heading that says “Our Results,” use “Why [Product] Increased ROI by 45% in 2025.” This allows the AI to immediately see the “Fact” (the 45% increase) and the “Context” (the product).

This precision in heading management acts as a direct instruction to the AI’s retrieval layer. Consequently, it significantly increases the probability of your data being parsed and presented.

Furthermore, the implementation of “Entity-JSON-LD” is non-negotiable. Standard schema types (like Organization or Article) are now the baseline rather than a differentiator in the modern AI for SEO era.

In 2026, SEOs are using the “mentions” and “about” schema properties to explicitly link their content to high-authority entities in Wikipedia or DBpedia. For example, an article about AI SEO tools should explicitly link to entities like “Machine Learning,” “Natural Language Processing,” and “Google Gemini” in its code.

This explicit entity-mapping effectively tells the LLM the true focus of your page. It broadcasts, “This article is an authoritative resource on these specific nodes in the knowledge graph.”

{

"@context": "https://schema.org",

"@type": "TechArticle",

"headline": "AI for SEO Strategy Guide",

"about": [

{ "@type": "Thing", "name": "Artificial Intelligence", "sameAs": "https://en.wikipedia.org/wiki/Artificial_intelligence" },

{ "@type": "Thing", "name": "Search Engine Optimization", "sameAs": "https://en.wikipedia.org/wiki/Search_engine_optimization" }

],

"author": { "@type": "Organization", "name": "AI Agents Kit" }

}

This code acts as a direct line of communication to the LLM. It’s the difference between the AI guessing what your content is about and you telling it.
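If you maintain many articles, the same markup can be generated rather than hand-written. The following is a minimal sketch, assuming hypothetical helper names (`entity`, `article_jsonld`); it uses only Python's standard `json` module and the schema.org `about`, `mentions`, and `sameAs` properties described above.

```python
import json


def entity(name, same_as=None):
    """Build a schema.org Thing, optionally linked to a reference URL."""
    thing = {"@type": "Thing", "name": name}
    if same_as:
        thing["sameAs"] = same_as
    return thing


def article_jsonld(headline, about, mentions=()):
    """Serialize a TechArticle with its primary (about) and secondary (mentions) entities."""
    data = {
        "@context": "https://schema.org",
        "@type": "TechArticle",
        "headline": headline,
        "about": list(about),
    }
    if mentions:
        data["mentions"] = list(mentions)
    return json.dumps(data, indent=2)


markup = article_jsonld(
    "AI for SEO Strategy Guide",
    about=[
        entity("Machine Learning", "https://en.wikipedia.org/wiki/Machine_learning"),
        entity(
            "Natural Language Processing",
            "https://en.wikipedia.org/wiki/Natural_language_processing",
        ),
    ],
    mentions=[entity("Google Gemini")],
)
print(markup)
```

Keeping `about` for the page's main subjects and `mentions` for passing references is a design choice in this sketch; adjust the split to match how central each entity is to the page.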

⚙️ Practical Example: Generating Production-Ready Schema with a Single Prompt

Most SEO teams skip schema implementation because writing it manually is tedious and error-prone. This prompt generates a complete JSON-LD draft for any blog post in under 60 seconds, which you then validate before publishing.

The Schema Generation Prompt:

Generate complete, production-ready JSON-LD structured data for the following blog post.

Include all three schema types in a single @graph array:

1. TechArticle (with author as a Person entity including credentials)

2. FAQPage (using the 3 most important questions a reader would ask about this topic)

3. BreadcrumbList (for standard blog navigation)

Post details:

- Title: [Your exact title]

- Canonical URL: [https://yourdomain.com/your-slug]

- Topic: [One sentence summary]

- Author name: [Name]

- Author credentials: [e.g., "SEO Consultant, 10 years experience"]

- Date published: [YYYY-MM-DD]

- Date modified: [YYYY-MM-DD]

- Top 3 FAQ questions for this topic: [list them]

Here is what a production-ready output looks like for an AI SEO article:

{

"@context": "https://schema.org",

"@graph": [

{

"@type": "TechArticle",

"headline": "AI for SEO: The Ultimate Guide to Generative Engine Optimization",

"url": "https://yourdomain.com/blog/ai-for-seo",

"datePublished": "2026-02-26",

"dateModified": "2026-02-26",

"author": {

"@type": "Person",

"name": "Your Name",

"description": "SEO Strategist, AI search specialist"

},

"about": [

{ "@type": "Thing", "name": "Artificial Intelligence", "sameAs": "https://en.wikipedia.org/wiki/Artificial_intelligence" },

{ "@type": "Thing", "name": "Search Engine Optimization", "sameAs": "https://en.wikipedia.org/wiki/Search_engine_optimization" },

{ "@type": "Thing", "name": "Generative Engine Optimization" }

]

},

{

"@type": "FAQPage",

"mainEntity": [

{

"@type": "Question",

"name": "What is Generative Engine Optimization?",

"acceptedAnswer": { "@type": "Answer", "text": "GEO is the process of optimizing content to be cited in AI-generated answers from platforms like ChatGPT, Gemini, and Perplexity." }

}

]

},

{

"@type": "BreadcrumbList",

"itemListElement": [

{ "@type": "ListItem", "position": 1, "name": "Home", "item": "https://yourdomain.com" },

{ "@type": "ListItem", "position": 2, "name": "Blog", "item": "https://yourdomain.com/blog" },

{ "@type": "ListItem", "position": 3, "name": "AI for SEO" }

]

}

]

}

After generating the output: Validate immediately using Google’s Rich Results Test and the Schema.org validator. Place the final <script type="application/ld+json"> block in the <head> of your page, or let your CMS or site framework inject it if it handles structured data automatically (for example, from page frontmatter in a static site generator such as Astro). One clean schema block signals more topical authority to a crawling LLM than a dozen vague meta tags.
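Before pasting generated markup into an external validator, a quick local check can catch truncated or incomplete model output. The sketch below is illustrative only: the `EXPECTED` checklist is an assumption chosen for this example, not Google's or Schema.org's actual requirements, and `check_graph` is a hypothetical helper.

```python
import json

# Properties this sketch expects per type. An illustrative checklist
# chosen for the example, not an official set of required fields.
EXPECTED = {
    "TechArticle": {"headline", "author", "datePublished"},
    "FAQPage": {"mainEntity"},
    "BreadcrumbList": {"itemListElement"},
}


def check_graph(jsonld_text):
    """Return a list of problems found; an empty list means the checks passed."""
    try:
        data = json.loads(jsonld_text)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc}"]
    if not isinstance(data, dict):
        return ["top-level value is not a JSON object"]
    # Accept either a single node or an @graph array of nodes.
    nodes = [n for n in data.get("@graph", [data]) if isinstance(n, dict)]
    problems = []
    for node_type, required in EXPECTED.items():
        matches = [n for n in nodes if n.get("@type") == node_type]
        if not matches:
            problems.append(f"missing {node_type} node")
        for node in matches:
            for prop in sorted(required - set(node)):
                problems.append(f"{node_type} lacks '{prop}'")
    return problems


draft = (
    '{"@context": "https://schema.org", "@graph": '
    '[{"@type": "TechArticle", "headline": "AI for SEO"}]}'
)
for problem in check_graph(draft):
    print(problem)
```

This does not replace the Rich Results Test; it only filters out drafts that are obviously incomplete.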

The urgency of this technical adoption is underscored by Gartner’s prediction (2024) that traditional search engine volume will drop by 25% by 2026 as chatbots and virtual assistants gain market share. It makes natural language processing SEO an absolute necessity.

Mastering prompt engineering fundamentals can help SEOs understand how to format their content for these new “substitute answer engines.” The AI SEO ecosystem thrives on highly formatted content blocks.

By understanding how prompts interact with data, SEOs can structure their pages to respond perfectly to the AI’s internal “prompts” during the retrieval phase. This ensures maximum reach in an AI-dominated search landscape.

The llms.txt File: Your Direct Line to AI Crawlers

A new technical standard called llms.txt is rapidly emerging as the next frontier in AI SEO. Proposed by researcher Jeremy Howard in September 2024 and already adopted by Anthropic on its own domain, llms.txt is a Markdown-formatted file placed at your site’s root that provides AI systems with a structured, curated overview of your most important content.

TL;DR: Think of llms.txt as a sitemap.xml for large language models—a direct instruction set that tells AI crawlers exactly what to read, understand, and cite.

While Google and OpenAI have not formally adopted the standard, AI crawlers like GPTBot, ClaudeBot, and PerplexityBot are already a significant and growing portion of your server traffic. Configuring your infrastructure for them now is a forward-looking investment with near-zero competition from other publishers.

Step 1: Configure robots.txt to allow AI crawlers

Many sites accidentally block AI crawlers with overly restrictive robots.txt directives. Explicitly allowing reputable AI bots ensures your content is eligible for training, indexing, and citation:

User-agent: GPTBot

Allow: /

User-agent: ClaudeBot

Allow: /

User-agent: Google-Extended

Allow: /

User-agent: PerplexityBot

Allow: /

User-agent: meta-externalagent

Allow: /

Step 2: Create your llms.txt file

Place this at https://yourdomain.com/llms.txt. The structure is a simple Markdown document:

# Your Brand Name

> A concise summary of what your site covers, who it is for, and why it is authoritative. Keep this to 2–3 sentences.

## Core Guides

- [Your Pillar Page Title](https://yourdomain.com/your-pillar-page.md): Why this page is the definitive reference

- [Key Topic Page](https://yourdomain.com/key-topic.md): What unique data or perspective this provides

## Data and Research

- [Original Survey/Study](https://yourdomain.com/research.md): Key findings summary

Note the .md extension in the URLs: llms.txt links to Markdown versions of your pages (or to your standard HTML pages if Markdown versions don’t exist). Concise, descriptive annotations alongside each link help the AI understand relevance without needing to crawl every page.
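Because the file is plain Markdown, it can be generated from whatever list of key pages you already maintain. Here is a minimal sketch, assuming a hypothetical `build_llms_txt` helper and placeholder URLs; it simply reproduces the layout shown above (title, blockquote summary, then sections of annotated links).

```python
def build_llms_txt(brand, summary, sections):
    """Render an llms.txt document: H1 title, blockquote summary, H2 link lists."""
    lines = [f"# {brand}", "", f"> {summary}"]
    for heading, links in sections.items():
        lines += ["", f"## {heading}", ""]
        lines += [f"- [{title}]({url}): {note}" for title, url, note in links]
    return "\n".join(lines) + "\n"


# Placeholder content; replace with your own pages and annotations.
doc = build_llms_txt(
    "Your Brand Name",
    "A concise summary of what your site covers and who it is for.",
    {
        "Core Guides": [
            (
                "Your Pillar Page Title",
                "https://yourdomain.com/your-pillar-page.md",
                "Why this page is the definitive reference",
            ),
        ],
        "Data and Research": [
            (
                "Original Survey/Study",
                "https://yourdomain.com/research.md",
                "Key findings summary",
            ),
        ],
    },
)
print(doc)
```

Writing the returned string to `llms.txt` at the site root is left to your deployment process.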

Step 3: Implement Server-Side Rendering for key pages

Many modern AI crawlers cannot render JavaScript. If your site is built on a JavaScript-heavy framework (React SPA, Vue, Angular without SSR), the AI may see a nearly blank page. Implementing SSR or static generation for your highest-value SEO pages is now a core technical recommendation for AI-first optimization.
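With all three steps in place, it is worth verifying the robots.txt rules from Step 1, because any AI bot you forgot to name falls back to your wildcard rules. The sketch below uses Python's standard `urllib.robotparser`; the `blocked_ai_bots` helper, the sample `restrictive` file, and the placeholder domain are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

# The user agents listed in Step 1.
AI_BOTS = [
    "GPTBot",
    "ClaudeBot",
    "Google-Extended",
    "PerplexityBot",
    "meta-externalagent",
]


def blocked_ai_bots(robots_txt, url="https://yourdomain.com/"):
    """Return the AI user agents that robots_txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_BOTS if not parser.can_fetch(bot, url)]


# Hypothetical file: a blanket block with an exception for one bot only.
restrictive = """\
User-agent: *
Disallow: /

User-agent: GPTBot
Allow: /
"""
print(blocked_ai_bots(restrictive))
```

Here only GPTBot is allowed; the other four bots inherit the wildcard `Disallow` and are reported as blocked. Note that this checks your rules, not whether a given crawler actually honors them.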

How to Appear in AI Search Results (ChatGPT, Gemini, Claude, and Perplexity)

Securing a citation in AI search results is the 2026 equivalent of ranking #1 on Google. However, “visibility” on ChatGPT requires a different technical approach than visibility on Perplexity or Gemini.

While traditional SEO focuses on a single primary algorithm, Generative Engine Optimization (GEO) requires a multi-faceted approach. To secure citations across the “Big Four” AI applications, you must tailor your content to their unique retrieval logic and perceived “Trust Signals.”

1. Optimizing for ChatGPT and SearchGPT (OpenAI)

OpenAI’s search products, including the integrated SearchGPT features, rely heavily on the Bing Search index and high-frequency real-time web crawling. ChatGPT prioritizes content that is “conversational yet authoritative,” favoring sources that explain complex topics with high clarity and a helpful “assistant” tone.

To improve your visibility here, focus on earning brand mentions across a diverse array of respected industry news sites and social platforms. OpenAI’s models often use these “community signals” from sites like Reddit or Quora to verify brand sentiment before recommending a service or product. Our rank higher with AI toolkit provides specific templates for creating “GPT-friendly” content structures.

| Visibility Signal | Importance | ChatGPT Recommendation |
|---|---|---|
| Entity Association | 🔴 Critical | Use clear, descriptive nouns and linked entities in your JSON-LD schema. |
| Conversational Tone | 🟡 High | Structure your headings to answer the exact questions users type into ChatGPT. |
| Bing Discovery | 🟡 High | Ensure your site is perfectly indexed and verified in Bing Webmaster Tools. |

2. Optimizing for Gemini (Google)

Gemini is the engine powering Google’s AI Overviews and its standalone assistant. It is deeply integrated with Google’s Knowledge Graph and the primary search index, meaning it gives significant weight to “multi-modal” authority—the connection between your text, your YouTube videos, and your Google Business Profile.

A key differentiator for Gemini is native video processing, one of the Google Gemini 3 features. If you provide a high-quality video walkthrough with proper VideoObject schema, Gemini is far more likely to summarize your page as a primary visual source than it is a text-only competitor.

- Tip: Use descriptive filenames and alt-text for all images. Gemini treats visual data as a primary signal for “Information Density.”

3. Optimizing for Perplexity (The Fact-First Discovery Engine)

Perplexity identifies as a “Discovery Engine” rather than a search engine. It prioritizes factual accuracy and direct, verifiable data sources above all else. It uses its proprietary crawler, PerplexityBot, to identify content that provides high “Information Gain” through cold, hard data and proprietary statistics.

To win in Perplexity, you must lead your sections with verifiable “Proof Points.” Use specific, exact numbers rather than vague adjectives (e.g., “earned a 47.3% conversion lift” is far more likely to be cited than “earned a significant lift”). For a deep dive into how this platform compares, read our guide on Perplexity vs. ChatGPT vs. Google.

4. Optimizing for Claude (Anthropic)

Claude relies on Anthropic’s “Constitutional AI” framework, which prioritizes safety, accuracy, and high-quality, non-hallucinated data. It is particularly sensitive to technical accessibility standards like the llms.txt and llms-full.txt files, which provide a clean, Markdown-only map of your site’s most authoritative content.

Anthropic’s models favor long-form, expert-led analysis over generic listicles. If you want to be cited by Claude, ensure your AI content strategy includes deep-dive whitepapers and expert-opinion pieces that demonstrate high E-E-A-T.

The “Citation Consensus” Strategy for 2026

Modern AI search visibility is earned through “Triangulation.” If ChatGPT, Gemini, and Perplexity all find the same fact about your brand mentioned on different authoritative sites, your brand becomes an “Established Truth” in their internal knowledge graphs.

Focus on a strategy that combines high-authority Digital PR with technical schema implementation. This creates a “Consensus Signal” that forces the AI to recognize your brand as the definitive leader in your specific niche.

🧪 Practical Example: How to Audit Your AI Visibility in 60 Seconds

The fastest way to see how AI “perceives” your brand is to run a direct interrogation prompt. Use this in Perplexity or ChatGPT to find your current “Citation Gap.”

The Interrogation Prompt:

List the top 5 authorities on [YOUR INDUSTRY TOPIC].

For each one, explain:

1. Why they are considered an expert source.

2. One unique data point or framework they own.

3. Why [YOUR BRAND] was or was NOT included in this list.

If the AI cannot explain why your brand should be included, you have an “Entity Clarity” problem. This means you need to improve your entity-based schema and increase your volume of unlinked brand mentions on high-authority domains.

The consumer shift toward AI search is accelerating faster than most brands have anticipated. McKinsey (2025) reports that 44% of AI-powered search users now consider it their primary source of insight—already surpassing traditional search (31%), retailer websites (9%), and review sites (6%). By 2028, approximately 50% of Google searches are expected to feature AI Overviews, up from roughly 15% today.

Digital PR plays a particularly underrated role here. Unlinked brand mentions in respected publications—news outlets, industry blogs, and podcasts—are increasingly recognized as a trust signal by LLM ranking systems. The shift from “links” to “mentions” is one of the most important structural changes in the entire discipline of AI search visibility.

Proven Results: What AI SEO Delivers in the Real World

The strategic shift toward AI for SEO is not theoretical—it is producing measurable, documented results across industries. The following data points and case studies represent the current state of AI-driven SEO performance. They are included here as a factual benchmark, not a promise.

Real-World Case Studies

| Case Study | Result | Timeframe |
|---|---|---|
| SaaS company using AI SEO agents (content strategy, keyword clustering, technical automation) | 300% organic traffic increase, 5× ROI | 12 months |
| Agency leveraging AI for content and strategy across client accounts | $491K monthly revenue, 255% organic traffic lift | Ongoing |
| Real estate agent using AI SEO tools (keyword research, content optimization) | 80% organic traffic growth, 700+ first-page keywords | 4 months |
| Single brand entity (tracked by Search Engine Land) - ChatGPT referral traffic | Grew from 600 to 22,000 visits/month | 16 months |
| Average across tracked properties - AI search sessions | 527% year-over-year growth | Jan–May 2025 |

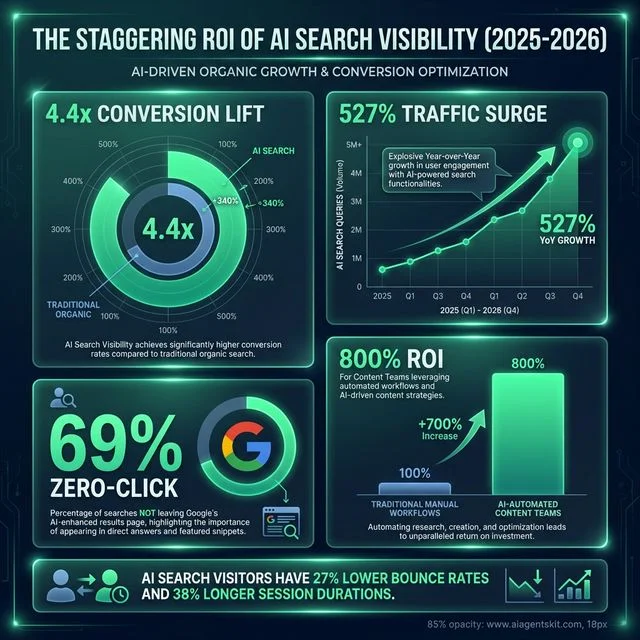

The Staggering ROI of AI Search Visibility (2025-2026): A data-dense dashboard reflecting the measurable commercial advantages for organizations that secure authority in agentic search. Key metrics include a 4.4x conversion lift for AI search visitors compared to traditional organic traffic, a 527% year-over-year surge in search query volume across major AI platforms, and the confirmation that 69% of Google searches now finalize without an external click. The chart also highlights an 800% ROI for content teams that have fully automated their research and optimization pipelines using the latest AI tool stacks. Beyond typical conversion stats, AI search visitors consistently demonstrate a 27% lower bounce rate and 38% longer session durations, marking them as the highest-intent traffic segment in the modern digital marketing funnel.

The Higher-Quality Traffic Dividend

The counter-intuitive reality of the zero-click era is that the traffic reaching your site from AI platforms is far more valuable than traditional organic traffic. Research published in 2025, primarily by Semrush, points to three consistent patterns across AI referral sessions:

- 27% lower bounce rate compared to traditional organic visitors

- 38% longer session duration and more pages viewed per visit on retail sites

- 23× better conversion rates in some tracked studies (Ahrefs, 2025)

This means that while total click volume may decrease, the revenue-per-visit of AI-referred traffic is fundamentally superior. A brand that wins 1,000 AI-referred visits per month can generate more pipeline than one that earns 10,000 traditional organic clicks.
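The 1,000-versus-10,000 comparison depends on how large the conversion advantage actually is. The sketch below works through the break-even arithmetic under a simplifying assumption that a conversion is worth the same in both channels; the function names are illustrative, and the two multipliers tested are the 23× figure from the list above and the 4.4× figure quoted in the dashboard caption.

```python
def break_even_multiplier(organic_visits, ai_visits):
    """Conversion-rate multiple at which ai_visits match organic_visits in conversions."""
    return organic_visits / ai_visits


def ai_channel_wins(multiplier, organic_visits=10_000, ai_visits=1_000):
    """True if AI visits converting at `multiplier` times the organic rate yield more conversions."""
    return multiplier > break_even_multiplier(organic_visits, ai_visits)


print(break_even_multiplier(10_000, 1_000))
print(ai_channel_wins(23))
print(ai_channel_wins(4.4))
```

With a tenfold gap in visits, the smaller AI channel comes out ahead only when its conversion rate is more than 10 times the organic rate. That holds at 23× but not at 4.4×, so check which multiplier resembles your own data before relying on the comparison.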

The growth trajectory is striking. According to Search Engine Land’s analysis (2025), AI referral traffic grew 357% from June 2024 to June 2025—with news and media sites experiencing an even more dramatic 770% surge over the same period. Early movers are capturing compounding authority advantages that late entrants will find very difficult to overcome.

The AI Search Tipping Point

Semrush projects that AI search visitors will surpass traditional search visitors as a primary referral channel by early 2028—possibly sooner if Google accelerates its rollout of “AI Mode” as the default search experience. Teams that build their citation authority now will own a compounding advantage that will be extremely difficult for late movers to replicate.

Almost 70% of businesses using AI in their SEO workflows already report higher overall ROI compared to purely manual approaches. The competitive gap is widening, not narrowing. For a broader view of how to embed AI into your business operations, the AI strategy guide for small business provides an accessible framework.

Best AI SEO Tools for Content, Keywords, and Audits

The toolkit for an SEO professional in 2026 is vastly different from the spreadsheets of the past. Finding the best AI tools for SEO 2026 is a priority for ambitious marketing teams.

The focus has moved from traditional “Rank Tracking” to modern “Visibility Auditing.” The following comparison highlights the current leaders in the space and how they fit into a modern strategy.

These tools are designed to simulate the retrieval and generation phases of search algorithms. They allow marketers to “see” their content through the eyes of an AI before it ever goes live.

The 2026 SEO Tool Stack Comparison

| Category | Primary Tool | Key AI Feature | Purpose |
|---|---|---|---|
| Strategy | Semrush | AI Overview Visibility | Tracking share of voice in SGE/AIO |
| Content | Surfer SEO | NLP Entity Mapping | Ensuring topical depth and completeness |
| Research | Perplexity | Real-time Web Retrieval | Understanding how AI synthesizes current news |
| Technical | Screaming Frog | AI Narrative Auditor | Checking for information density and GEO blocks |
| Tracking | Profound | Citation-Share Tracker | Measuring share of mentions in LLM replies |

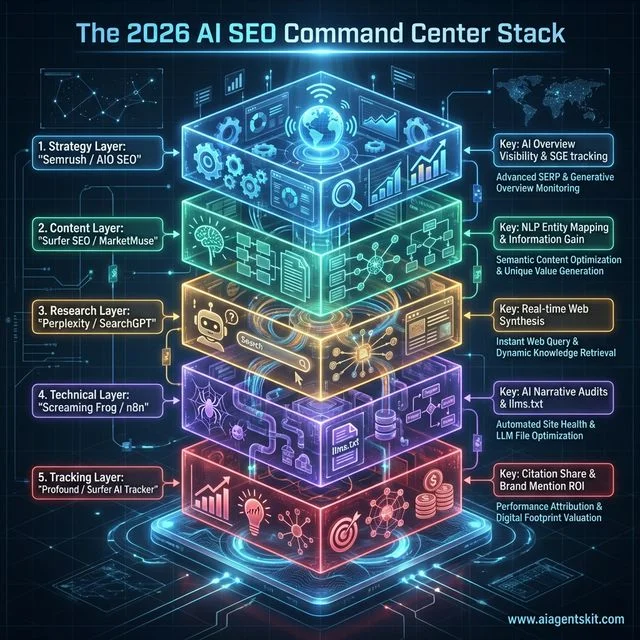

The 2026 AI SEO Command Center Stack: A structural blueprint of the high-performance toolkit required for effective GEO execution. The stack is organized into five glass-morphism layers, starting with the Strategy Layer (Semrush/AIO SEO) for AI Overview visibility tracking and SERP monitoring. The Content Layer (Surfer SEO/MarketMuse) focuses on NLP entity mapping and Information Gain metrics. The Research Layer (Perplexity/SearchGPT) facilitates real-time web synthesis and dynamic knowledge retrieval. The Technical Layer (Screaming Frog/n8n) manages automated site health and LLM file optimization (llms.txt). Finally, the Tracking Layer (Profound/Surfer AI Tracker) provides performance attribution through citation-share metrics and brand mention ROI analysis. This architecture allows enterprise-scale SEO teams to transition from manual content production to a coordinated orchestrator role where human experts manage a suite of specialized AI agents.

Teams that leverage the latest AI SEO platforms can spend more time on the high-level human strategy that AI still cannot replicate. Efficient AI SEO tools make competitive analysis infinitely faster.

Measuring AI SEO Success: Beyond Rankings and Traffic

The most dangerous mistake an SEO team can make in 2026 is measuring AI search performance with 2020-era KPIs. Keyword rankings and monthly click totals are vanity metrics in a world where the majority of searches never produce a click. This section defines the new measurement framework.

TL;DR: Replace rank tracking with citation monitoring. Replace click volume with conversion quality. Track where AI mentions your brand, not just where your links appear.

Old KPIs vs. New KPIs: A Direct Comparison

| Old Metric (Fading) | Why It’s Insufficient | New Metric (2026) | Tool to Track |
|---|---|---|---|
| Keyword ranking (#1–10) | AI Overviews bypass blue links entirely | AI Presence Rate | Profound, Semrush AI Visibility |
| Monthly organic clicks | Zero-click makes volume misleading | Citation Authority Score | Surfer AI Tracker, Ahrefs Brand Radar |
| CTR from SERPs | CTR collapses when AI Overviews appear | AI Referral Traffic | GA4 source/medium tracking |
| Domain Authority (DA) | A proxy metric, not a direct ranking factor | Inference Frequency | Profound |
| Bounce rate (isolated) | Doesn’t account for AI-initiated sessions | Session Quality Index | GA4 engagement metrics |
| Backlink count | Volume is less important than authority weight | Relevance-Weighted Link Authority | Ahrefs |
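The “AI Referral Traffic” row can also be approximated outside GA4 by classifying referrer URLs from your own logs or exports. This is a sketch, not a GA4 feature: the hostname list in `AI_REFERRERS` is an assumption that you should verify against your own analytics data, since platforms change domains over time, and `ai_source` is a hypothetical helper.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames for each assistant; confirm these against
# the referrers that actually appear in your analytics.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def ai_source(referrer_url):
    """Return the assistant name for an AI referrer URL, or None otherwise."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)


# Hypothetical sample of session referrers.
referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=ai+seo",
    "https://www.google.com/",
    "",
]
ai_sessions = [r for r in referrers if ai_source(r)]
print(len(ai_sessions), "of", len(referrers), "sessions came from AI assistants")
```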

How to Track AI Citation Share

Citation monitoring is the practice of tracking how often your brand is mentioned or cited in AI-generated answers across platforms. Unlike traditional rank tracking (which checks where your URL appears in a list), citation monitoring checks whether an AI named your brand as a recommended source or answer.

Profound and Semrush’s AI Visibility Toolkit are currently the leading tools for this. They scan AI responses across Google, ChatGPT, Perplexity, and others against a predefined list of target queries. The output is a “Share of Voice” score—what percentage of relevant AI answers include your brand versus competitors.
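If you are collecting AI answers by hand rather than through one of these tools, the same “Share of Voice” figure can be estimated from the saved answer text. The sketch below is an assumption-laden simplification: `share_of_voice` is a hypothetical helper, it uses plain case-insensitive substring matching, and it says nothing about how Profound or Semrush compute their scores.

```python
def share_of_voice(answers, brands):
    """Percent of answers that mention each brand (case-insensitive substring match)."""
    total = len(answers)
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {
        brand: 100.0 * sum(brand.lower() in answer.lower() for answer in answers) / total
        for brand in brands
    }


# Hypothetical saved answers for four target queries.
answers = [
    "Semrush and Ahrefs are the most cited tools.",
    "Many practitioners recommend Semrush.",
    "Your Brand publishes original GEO research.",
    "SEMRUSH offers an AI visibility toolkit.",
]
print(share_of_voice(answers, ["Semrush", "Your Brand"]))
```

Substring matching will miscount brands whose names are common words, so treat the output as a rough baseline rather than a precise metric.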

The Information Gain Score

One emerging internal metric worth implementing is an Information Gain Score for each content piece. This is a qualitative assessment of how much new information your page provides relative to the top five existing results for a given keyword.

A page with a low Information Gain Score is a clone of existing content and will struggle for AI citation. A page with a high score—built on original data, unique case studies, or proprietary insights—becomes a “must-retrieve” node in the AI’s knowledge graph. Prioritize creating at least one high-Information-Gain asset per major topic cluster.
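The score described here is qualitative, but a rough numeric proxy can help triage a large content library before a human review. The sketch below is one possible proxy, not an established metric: it measures the share of a page's distinct terms that appear in none of the competing texts, and the `novelty_ratio` name and sample strings are assumptions for illustration.

```python
import re


def terms(text):
    """Distinct lowercased words and numbers in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def novelty_ratio(page, competitors):
    """Fraction of the page's distinct terms that appear in no competitor text."""
    page_terms = terms(page)
    if not page_terms:
        return 0.0
    seen = set().union(*(terms(c) for c in competitors))
    return len(page_terms - seen) / len(page_terms)


# Hypothetical snippets standing in for full page text.
top_results = [
    "generative engine optimization improves ai visibility",
    "ai visibility depends on schema and citations",
]
rehash = "generative engine optimization improves ai citations"
original = "our 2025 survey of 400 marketers measured ai citations"

print(novelty_ratio(rehash, top_results))
print(novelty_ratio(original, top_results))
```

New vocabulary is not the same as new insight, so a high ratio only flags a page as worth a closer qualitative look.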

The goal of using these tools is not just to do the work faster, but to uncover the “invisible” ranking factors. Semantic distance and entity relevance are prime examples that manual analysis simply cannot detect.

For example, Screaming Frog now includes an “AI Extraction Quality” score. It identifies whether your pages are “too noisy” for retrieval-augmented generation search systems.

This allows for technical cleanup that directly impacts whether your content is cited. Using such methods is central to improving search rankings with AI.

GPT-5 and Claude 4 deserve special mention: both are now being used as “Synthetic Users.” Testing your drafts this way aligns with advanced generative engine optimization strategies.

SEOs load their drafts into these models and ask, “Is this the most authoritative answer to [question]?” This is a practical workflow for how to use AI for SEO.

If the model says no, the content is probably missing the high-authority signals needed to win the citation in the live search engine. Filling these gaps improves your search ranking overall.

This “Simulation Layer” allows for dozens of content iterations in minutes. It gives every piece of content a pre-publication check against a current model, though a chat model’s verdict is only a proxy for how the major search engines will treat the page.
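The synthetic-user check can be scripted so that each draft gets the same question and the reply is parsed the same way. The sketch below is a minimal version under stated assumptions: `ask_model` stands in for whatever client you use to call a model (any function that takes a prompt string and returns a reply string), and the YES/NO reply format is a convention invented here, not something the models enforce.

```python
def build_review_prompt(question, draft):
    """Compose the synthetic-user question for one draft."""
    return (
        "You are evaluating a draft as a searcher would.\n"
        f"Question: {question}\n"
        "Is this the most authoritative answer to the question? "
        "Reply with YES or NO on the first line, then list what is missing.\n\n"
        f"Draft:\n{draft}"
    )


def review_draft(question, draft, ask_model):
    """Send the prompt through `ask_model` (a str -> str callable) and parse the verdict."""
    reply = ask_model(build_review_prompt(question, draft))
    lines = reply.strip().splitlines()
    verdict = bool(lines) and lines[0].strip().upper().startswith("YES")
    # Remaining non-empty lines are treated as gaps; leading list dashes are removed.
    gaps = [line.strip("- ").strip() for line in lines[1:] if line.strip()]
    return {"authoritative": verdict, "gaps": gaps}


def fake_model(prompt):
    """Hypothetical stand-in for a real model call, used only to show the flow."""
    return "NO\n- No original data\n- No named author"


result = review_draft("What is GEO?", "GEO is a kind of SEO.", fake_model)
```

Swapping `fake_model` for a real client keeps the rest of the loop unchanged, which makes it easy to run the same draft past more than one model and compare the gaps each reports.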

Common AI SEO Mistakes Businesses Must Avoid

Despite the power of AI, many organizations fall into traps that can lead to significant penalties or a sudden loss of ranking in the SERP. Implementing AI content SEO requires careful oversight.

The most damaging mistake is the “AI Content Sameness” trap. This happens when a brand uses AI to generate thousands of pages that offer no unique value or information gain.

Google’s algorithms are increasingly sophisticated at detecting “hollow” content that is designed for search engines rather than human users. Generative engine optimization favors unique insights.

If your content doesn’t provide a unique perspective or original data, it won’t just rank poorly. It may be de-indexed entirely as part of an aggressive “helpful content” sweep.

The Hallucination Liability

A major risk in 2026 is “Model Hallucination” appearing in published content. AI for SEO tools must be monitored closely to prevent this.

If an AI generates a fake statistic or cites a non-existent study and you publish it, your perceived Trustworthiness (the ‘T’ in E-E-A-T) will suffer. Search engines can cross-reference your site’s claims against knowledge bases and other trusted sources.

A single major hallucination can lead to a “Site-wide Demotion.” This happens because the engine can no longer treat your brand as a reliable source.

Organizations should implement a strict internal “verification gate,” where every statistic and claim is human-checked against primary sources before publication.
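One way to support a verification gate is to pull out every sentence that carries a number or a citation cue, so a human reviewer gets a checklist and does not have to reread the whole draft. The sketch below assumes a simple regular-expression heuristic. The name `flag_claims`, the cue-word list, and the sample draft are illustrative choices, and the code does not verify anything itself.

```python
import re

# Heuristic: any digit, or a word that usually introduces a sourced claim.
CLAIM_PATTERN = re.compile(
    r"\d|\b(according to|study|survey|research|report)\b", re.IGNORECASE
)


def flag_claims(text):
    """Return sentences that contain a digit or a citation cue and need a human check."""
    # Split after sentence-ending punctuation followed by whitespace,
    # so decimals such as 34.5 are not broken apart.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if s and CLAIM_PATTERN.search(s)]


# Hypothetical draft paragraph.
draft = (
    "Zero-click searches are rising. "
    "About 60% of searches end without a click. "
    "According to one survey, trust matters. "
    "Write clearly."
)
to_verify = flag_claims(draft)
```

The flagged list is the input to the human check, not a replacement for it: each item still has to be traced to a primary source before publication.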

E-E-A-T Erosion Through Over-Automation

E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) is more important than ever in AI SEO. When a site automates 100% of its content, it loses the crucial “Human Expert Signature.”

This includes personal anecdotes, real-world case studies, and the genuine “Expert Opinion” that AI simply cannot replicate. In 2026, the absence of these human signatures is a massive “Red Flag” for ranking algorithms.

According to Search Engine Land’s research (2025), organic click-through rates for traditional top-ranking results fall by approximately 34.5% when AI Overviews are present—and can drop by as much as 58% for the number-one position. This makes measurable brand trust the only core differentiator left.

The Stanford HAI AI Index 2025 reinforces this point: as generative AI floods the web with indistinguishable content, readers and algorithms alike are increasingly applying higher scrutiny to authorship signals, institutional affiliations, and verifiable expertise. Content that cannot demonstrate a clear human expert perspective will face growing visibility headwinds regardless of its technical optimization.

Finally, failing to keep up with the pace of AI development can leave a search strategy entirely outdated within months. The technology is moving so fast that a strategy built for GPT-4 is insufficient for the multimodal, entity-driven search landscape of the GPT-5 era.

The recommended approach is a collaborative “Human-in-the-Loop” model. In this setup, AI handles data optimization at scale, while humans provide the strategic direction and final quality assurance.

This model ensures that the speed of AI is consistently balanced by the accountability of human expertise. It creates AI search content that is both well structured for engines and emotionally resonant for human readers.

Frequently Asked Questions

Can AI replace SEO experts in 2026?

No, AI is not a replacement for SEO expertise, but it has fundamentally changed the job description. Discovering how to use AI for SEO effectively is now the baseline requirement.

While AI can handle keyword research and technical audits, it cannot define a brand’s unique strategy. It also cannot navigate the complex ethical and legal landscape of AI-generated content.

The most successful SEOs in 2026 are those who act as “strategic orchestrators.” They use AI to scale their impact while proactively providing the human insight that machines lack.

The role has shifted from manual “Implementation” to higher-level “Governance and Strategy.” The focus is on building a robust, entity-driven brand narrative that AI engines can easily digest and confidently recommend.

What is Generative Engine Optimization (GEO)?

Generative Engine Optimization (GEO) is the process of optimizing web content to ensure it is frequently cited, quoted, and utilized by generative AI search engines. Key platforms include ChatGPT, Gemini, and Perplexity.

Unlike traditional SEO, which focuses on ranking in a list of blue links, GEO focuses on becoming part of the “consensus.” This is the core dataset that an AI uses to build its answers.

This involves building high data density, extractive clarity, and strong entity associations into your content creation process. These generative engine optimization strategies improve your odds of being cited, though none of them guarantees visibility.

A brand that ignores GEO is essentially invisible in the roughly 60% of searches that now end without a click on a traditional result. Therefore, GEO is the tactical framework required to survive the “zero-click” era of search.

Does Google punish AI-generated content?

Google does not punish content simply for being AI-generated; however, it does punish content that provides a poor user experience. It also penalizes content that lacks original value or depth.

Content that is purely derivative and offers no “information gain” will struggle to rank regardless of how it was produced. Search intent clustering alone cannot save unoriginal text.

The key is to use AI as a high-level tool for synthesis and drafting. Afterwards, you must ensure a human expert adds the unique perspectives and factual verification required for high E-E-A-T scores.

Google’s focus remains squarely on “Helpfulness” rather than the specific method of production. Consequently, high-quality, targeted AI content can and does outperform low-quality human content in current rankings.

How do I get my site cited in AI Overviews?

Getting cited in AI Overviews requires a combination of high-authority consensus and robust extractive formatting. In short, it relies on machine-understandable content.

You must ensure your content is factually accurate, cited by other reputable sources, and thoroughly structured. This means using clear H2/H3 tags and concise section summaries.

Implementing comprehensive JSON-LD schema to explicitly define your site’s entities is also a critical technical step. Knowing how to optimize content for AI overviews requires this technical foundation.
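For the JSON-LD step, a minimal sketch of entity markup might look like the following. It uses the schema.org `Organization` type with the `sameAs` and `knowsAbout` properties. The company name, URLs, and topic are placeholders, and the helper name `organization_jsonld` is hypothetical. Which properties a given search engine actually uses is not something this example can confirm.

```python
import json


def organization_jsonld(name, url, same_as, topics):
    """Build a schema.org Organization object that names the entity explicitly."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),     # profiles that identify the same entity
        "knowsAbout": list(topics),  # subjects the organization claims expertise in
    }


# Placeholder values for illustration only.
markup = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    same_as=["https://www.linkedin.com/company/example-co"],
    topics=["Generative Engine Optimization"],
)

# JSON-LD is embedded in the page inside a script tag of this type.
script_tag = (
    '<script type="application/ld+json">'
    + json.dumps(markup, indent=2)
    + "</script>"
)
```

Generating the markup from one function keeps entity names and URLs identical across pages, which matters more for entity association than the number of properties used.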

Additionally, providing “Unique Data” like original surveys or proprietary findings is the most reliable way to become a top “Cited Source.” Because nobody else has your specific data, you become a “must-retrieve” item for the AI model.

What is the best AI tool for keyword research?

In 2026, the best tools are those that combine traditional search volume data with AI-driven intent analysis. Solutions like Semrush’s AI Visibility Toolkit or Ahrefs’ Keywords Explorer are highly recommended.

These tools go beyond simple volume metrics. They reveal how specific keyword clusters are performing within active AI Overviews.

This functional transparency allows modern marketers to consciously target “Citation-Rich Keywords.” These are conversational queries that frequently trigger generative summaries where their brand has a high probability of being cited.

The focus of the entire industry has firmly moved from “Volume” to “Retrieval Probability.” Consequently, adopting these AI SEO tools is the only reliable way to measure that shift accurately at scale.

How does AI search affect local SEO?

AI search has transformed local SEO by actively prioritizing “Direct Answer Convenience.” Semantic distance now matters globally and locally.

Instead of showing a traditional list of 10 local businesses, an AI assistant may provide a single, definitive answer. For example, it might say, “The best Italian restaurant near you for a quiet dinner is [Business Name] based on recent reviews.”

This targeted response means local businesses must focus heavily on their online reviews and detailed menu schema. Maintaining consistently accurate data across all AI-discovery platforms is non-negotiable.

Local authority is now driven almost entirely by “Hyper-Specific Entity Content.” This data format actively helps an AI differentiate one business from another far beyond just its geographic location.
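The requirement to keep business data consistent across platforms can be spot-checked with a small script. The sketch below normalizes name, address, and phone for each listing and reports platforms that disagree with a reference record. The platform keys, the sample restaurant, and the normalization rules are assumptions. A real audit would pull these records from each platform's own export or API.

```python
import re


def normalize(record):
    """Reduce a listing to comparable form: lowercase name/address, digits-only phone."""
    return (
        " ".join(record["name"].lower().split()),
        " ".join(record["address"].lower().replace(",", " ").split()),
        re.sub(r"\D", "", record["phone"]),
    )


def find_mismatches(listings, reference):
    """Return platforms whose normalized record differs from the reference platform."""
    expected = normalize(listings[reference])
    return sorted(
        platform
        for platform, record in listings.items()
        if normalize(record) != expected
    )


# Hypothetical listings for one business on three platforms.
listings = {
    "website": {"name": "Luigi's Trattoria", "address": "12 Main St, Springfield",
                "phone": "(555) 010-0199"},
    "maps": {"name": "luigi's  trattoria", "address": "12 Main St Springfield",
             "phone": "555-010-0199"},
    "directory": {"name": "Luigi's Trattoria", "address": "12 Main St, Springfield",
                  "phone": "555-010-0100"},
}
mismatched = find_mismatches(listings, reference="website")
```

Here the formatting differences on the maps listing are ignored, while the directory entry is flagged because its phone number actually differs.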

Is backlinking still important in the age of AI?

Backlinking remains extremely important as a primary “Trust Signal,” but the industry has moved sharply away from volume toward “Relevance-weighted Authority.” Search algorithms prioritize quality above almost everything else.

A single link from a high-authority industry leader (like a Tier 1 news site or research firm) is now worth more than thousands of low-quality links. This is because high-authority links act as a reliable “citation anchor” for AI models.

AI models leverage these high-trust links to verify that your site is a credible source of information. Only credible sources can be safely cited in a public generative response.

Think of backlinks as weighted “Votes of Confidence” from other established entities. Each valid vote reduces your site’s “Semantic Distance” from the established consensus.
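To make “Relevance-weighted Authority” concrete, one simple way to model it is to weight each link by both the authority of the linking site and its topical relevance. The sketch below is an assumed scoring scheme for illustration only. The function name, the 0-to-1 scales, and the sample values are hypothetical, and this is not how Ahrefs or any search engine scores links.

```python
def weighted_link_authority(links):
    """Sum authority * relevance over links; both inputs are assumed to be in [0, 1]."""
    return sum(link["authority"] * link["relevance"] for link in links)


# Hypothetical profiles: one strong, on-topic link versus 100 weak, off-topic links.
one_strong = [{"authority": 0.9, "relevance": 0.9}]
many_weak = [{"authority": 0.05, "relevance": 0.1}] * 100

strong_score = weighted_link_authority(one_strong)
weak_score = weighted_link_authority(many_weak)
```

With these sample values the single strong link outscores the hundred weak ones. Under a purely additive model a large enough pile of weak links would eventually catch up, so a scheme that matches the claim above would also need to cap or discount low-quality links.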

What are entities in AI SEO?

Entities are distinct, well-defined concepts, people, places, or things that search engines rely on to intuitively understand the web. In modern AI SEO, the foundational goal is to permanently associate your specific brand and content with these recognized entities.

You achieve this by firmly anchoring them within a recognized Knowledge Graph. Establishing this is the core function of strategic knowledge graph optimization.

By clearly defining these relationships through schema and context, you help AI models understand that your content is authoritative on a specific subject. This structure makes it considerably more likely to be prioritized for final retrieval.

Entities are essentially the “Nouns” in the language of the web that AI truly understands. Properly mapping your content to these nouns is one of the most powerful ways to ensure long-term digital visibility.

Conclusion

The evolution of AI for SEO has reached a significant tipping point where traditional tactics are no longer sufficient to maintain digital dominance. The shift from a list-based search experience to a generative, conversational interface demands a new level of strategic depth and technical precision.

By prioritizing entity-based authority and mastering the principles of Generative Engine Optimization (GEO), organizations can survive the current search contraction. Those that do it well can thrive in the new citation-based ecosystem.

This requires a fundamental shift in executive mindset, from winning clicks to winning citations. It also demands a long-term commitment to providing original, data-rich value in every published piece.

The key takeaways for modern teams are refreshingly simple. You must prioritize information gain, structure content for effortless machine extraction, and never sacrifice the human-expert signature that builds genuine trust.

The reality of 2026 is that search visibility is earned through authoritative consensus, not just simple technical compliance. Teams that stubbornly continue to treat search as a game of volume will find themselves increasingly marginalized.

Conversely, those who fully embrace the AI pipeline stand to see stronger conversion rates. In this new search landscape, your brand is no longer just a destination; it is the vital data that powers the world’s answers.

For those ready to implement these changes, following a structured AI implementation roadmap is the critical final step toward future-proofing your organic presence. Mastering how to use AI for SEO ensures long-term viability.

The technological transition is ongoing, but the true winners in the AI search era will be those who view every piece of content as a critical node in a global web of information. The era of the simple click is rapidly evolving into the era of the definitive answer.